iRules Style Guide

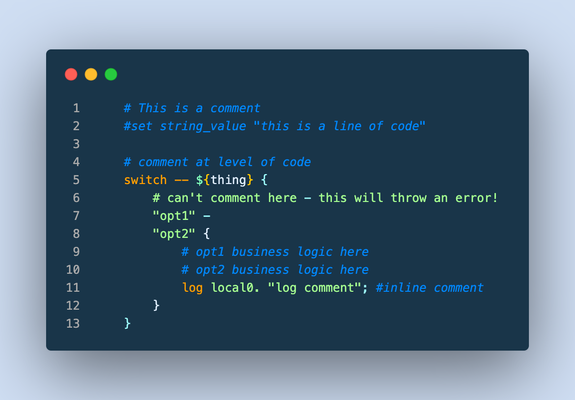

This article (formatted here in collaboration with, and from the notes of, F5er Jim_Deucker) presents an opinionated way to write iRules, which are an extension of the Tcl language. Tcl has its own style guide for reference, as do other languages like my personal favorite, Python. In the latter's style guide, Guido van Rossum quotes Ralph Waldo Emerson: "A foolish consistency is the hobgoblin of little minds..." Or, if you prefer, Morpheus from The Matrix: "What you must learn is that these rules are no different than the rules of a computer system. Some of them can be bent. Others can be broken." The point? This is a guide, and if there is a good reason to break a rule...by all means break it!

Editor Settings

Setting a standard is good for many reasons. It's easier to share code amongst colleagues, peers, or the larger community when code is consistent and professional looking. Settings for some tools are provided below, but if you're using other tools, here's the goal:

- indent 4 spaces (no tab characters)
- 100-column goal for line length (120 if you must), but avoid line continuations where possible
- file parameters:
    - ASCII
    - Unix linefeeds (\n)
    - trailing whitespace trimmed from the end of each line
    - file ends with a linefeed

Visual Studio Code

If you aren't using VSCode, why the heck not? This tool is amazing, and with the F5 Networks iRules extension coupled with The F5 Extension, you get the functionality of a powerful editor along with connectivity to, and control of, your F5 hosts. With code diagnostics and auto-formatting based on this very guide, the F5 Networks iRules extension will make your life easy. Seriously...stop reading and go set up VSCode now.
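If you want to check a file against the editor-setting goals above outside of an editor (say, in a pre-commit hook or CI job), a small script can do it. This is a minimal sketch, not an F5-provided tool; the names `Finding` and `check_irule_text` are hypothetical, and the 100/120 thresholds are simply the goals from the list above exposed as parameters.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Finding:
    line: int      # 1-based line number the finding applies to
    message: str


def check_irule_text(text: str, goal: int = 100, limit: int = 120) -> List[Finding]:
    """Check iRule source text against the editor-setting goals in this guide.

    Hypothetical helper: flags CRLF line endings, non-ASCII characters (such as
    smart quotes or non-breaking spaces), tab characters, trailing whitespace,
    lines over the column goal/limit, and a missing final linefeed.
    """
    findings: List[Finding] = []
    lines = text.split("\n")
    for number, line in enumerate(lines, start=1):
        if line.endswith("\r"):
            findings.append(Finding(number, "CRLF line ending (use Unix \\n)"))
            line = line[:-1]  # drop the CR so it is not also reported as whitespace
        if not line.isascii():
            findings.append(Finding(number, "non-ASCII character"))
        if "\t" in line:
            findings.append(Finding(number, "tab character (indent with 4 spaces)"))
        if line != line.rstrip():
            findings.append(Finding(number, "trailing whitespace"))
        if len(line) > limit:
            findings.append(Finding(number, f"line longer than {limit} columns"))
        elif len(line) > goal:
            findings.append(Finding(number, f"line longer than the {goal}-column goal"))
    if text and not text.endswith("\n"):
        findings.append(Finding(len(lines), "file does not end with a linefeed"))
    return findings
```

To check a file on disk, read it with `open(path, encoding="utf-8", newline="")` so that carriage returns are preserved and can be reported, then pass the contents to `check_irule_text`.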
EditorConfig

For those with different tastes in text editors, here are settings for any editor that supports EditorConfig:

```ini
# 4 space indentation
[*.{irule,irul}]
indent_style = space
indent_size = 4
end_of_line = lf
insert_final_newline = true
# EditorConfig has no "ascii" charset value; utf-8 is the closest valid one (ASCII is a subset of UTF-8)
charset = utf-8
trim_trailing_whitespace = true
```

Vim

I'm a vi guy for sys-admin work, but I prefer a full-fledged IDE for development efforts. If you prefer Vim for editing files, however, we've got you covered with these Vim settings:

```vim
" in ~/.vimrc
set tabstop=4
set shiftwidth=4
set expandtab
set fileencoding=ascii
set fileformat=unix
```

Sublime

There are a couple of tools for Sublime, but all of them are a bit dated and might require some work to bring them up to speed. Unless you're already a Sublime apologist, I'd choose one of the other options above.

- sublime-f5-irules (bitwisecook fork, billchurch origin) for editing
- Sublime Highlight for export to RTF/HTML

Guidance

Watch out for smart quotes and non-breaking spaces inserted by applications like Microsoft Word, as they can silently break code. The VSCode extension will highlight these occurrences and offer a fix automatically, so again, jump on that bandwagon!

A single iRule has a 64KB limit. If you're reaching that limit, it might be time to question your life choices, I mean, the wisdom of the solution.

Break out your iRules into functional blocks. Try to separate (where possible) security from app functionality from stats from protocol nuances from mgmt access, etc. For example, when the DevCentral team managed the DevCentral servers and infrastructure, we had 13 iRules to handle maintenance pages, masking application error codes and data, inserting scripts for analytics, managing vanity links, and other structural rewrites, to name a few. With this strategy, priorities for your events are definitely your friend.

Standardize on "{" placement at the end of a line and not the following line; this causes the fewest problems across all BIG-IP versions.
```tcl
# ### THIS ###
if { thing } {
    script
} else {
    other_script
}

# ### NOT THIS ###
if { thing }
{
    script
}
else
{
    other_script
}
```

The 4-character indent is carried into nested values as well, like in switch.

```tcl
# ### THIS ###
switch -- ${thing} {
    "opt1" {
        command
    }
    default {
        command
    }
}
```

Comments (shown as an image for this one to preserve line numbers):

- Always comment at the same indent level as the code (lines 1, 4, 9-10)
- Avoid end-of-line comments (line 11)
- Always hash-space a comment (lines 1, 4, 9-10)
- Leave out the space when commenting out code (line 2)
- switch statements cannot have comments inline with options (line 6)

Avoid multiple commands on a single line.

```tcl
# ### THIS ###
set host [getfield [HTTP::host] ":" 1]
set port [getfield [HTTP::host] ":" 2]

# ### NOT THIS ###
set host [getfield [HTTP::host] ":" 1]; set port [getfield [HTTP::host] ":" 2]
```

Avoid single-line if statements, even for debug logs.

```tcl
# ### THIS ###
if { ${debug} } {
    log local0. "a thing happened..."
}

# ### NOT THIS ###
if { ${debug} } { log local0. "a thing happened..."}
```

Even though Tcl allows a horrific number of ways to communicate truthiness, always express or store state as 0 or 1.

```tcl
# ### THIS ###
set f 0
set t 1
if { ${f} && ${t} } {
    ...
}

# ### NOT THIS ###
# Valid false values
set f_values "n no f fal fals false of off"
# Valid true values
set t_values "y ye yes t tr tru true on"
# Set a single valid, but unpreferred, state
set f [lindex ${f_values} [expr {int(rand()*[llength ${f_values}])}]]
set t [lindex ${t_values} [expr {int(rand()*[llength ${t_values}])}]]
if { ${f} && ${t} } {
    ...
}
```

Always use the Tcl standard || and && boolean operators over the F5-specific "and" and "or" operators in expressions, and use parentheses when you have multiple arguments to be explicitly clear on operations.
```tcl
# ### THIS ###
if { ${state_active} && ${level_gold} } {
    if { (${state} == "IL") || (${state} == "MO") } {
        pool gold_pool
    }
}

# ### NOT THIS ###
if { ${state_active} and ${level_gold} } {
    if { ${state} eq "IL" or ${state} eq "MO" } {
        pool gold_pool
    }
}
```

Always put a space between a closing curly bracket and an opening one.

```tcl
# ### THIS ###
if { ${foo} } {
    log local0.info "something"
}

# ### NOT THIS ###
if { ${foo} }{
    log local0.info "something"
}
```

Always wrap expressions in curly brackets to avoid double expansion. (Check out a deep dive on the byte code of the two approaches, shown in the picture below.)

```tcl
# ### THIS ###
set result [expr {3 * 4}]

# ### NOT THIS ###
set result [expr 3 * 4]
```

Always use space separation around variables in expressions such as if statements or expr calls. Always wrap your variables in curly brackets when referencing them as well.

```tcl
# ### THIS ###
if { ${host} } {
    ...
}

# ### NOT THIS ###
if { $host } {
    ...
}
```

Terminate options on commands like switch and table with "--" to avoid argument injection, even if you're 100% sure you don't need it. The VSCode iRules extension will throw diagnostics for this. See K15650046 for more details on the security exposure.

```tcl
# ### THIS ###
switch -- [whereis [IP::client_addr] country] {
    "US" {
        table delete -subtable states -- ${state}
    }
}

# ### NOT THIS ###
switch [whereis [IP::client_addr] country] {
    "US" {
        table delete -subtable states ${state}
    }
}
```

Always use a priority on an event, even if you're 100% sure you don't need one. The default is 500, so use that if you have no other starting point.

Always put a timeout and/or lifetime on table contents. Make sure you really need the table space before settling on that solution, and consider abusing the static:: namespace instead.

Avoid unexpected scope creep with static:: and table variables by assigning prefixes. Without a prefix, if multiple rules set or use the same variable, changing it becomes a race condition on load or rule update.
```tcl
when RULE_INIT priority 500 {
    # ### THIS ###
    set static::appname_confvar 1

    # ### NOT THIS ###
    set static::confvar 1
}
```

Avoid using static:: for things like debug configurations; it's a leading cause of unintentional log storms and performance hits. If you have to use them for a provable performance reason, follow the prefix naming rule.

```tcl
# ### THIS ###
when CLIENT_ACCEPTED priority 500 {
    set debug 1
}
when HTTP_REQUEST priority 500 {
    if { ${debug} } {
        log local0.debug "some debug message"
    }
}

# ### NOT THIS ###
when RULE_INIT priority 500 {
    set static::debug 1
}
when HTTP_REQUEST priority 500 {
    if { ${static::debug} } {
        log local0.debug "some debug message"
    }
}
```

Comments are fine and encouraged, but don't leave commented-out code in the final version.

Wrapping up that guidance with a final iRule putting it all into practice:

```tcl
when HTTP_REQUEST priority 500 {
    # block level comments with leading space
    #command commented out
    if { ${a} } {
        command
    }
    if { !${a} } {
        command
    } elseif { (${b} > 2) || (${c} < 3) } {
        command
    } else {
        command
    }
    switch -- ${b} {
        "thing1" -
        "thing2" {
            # thing1 and thing2 business reason
        }
        "thing3" {
            # something else
        }
        default {
            # default branch
        }
    }
    # make precedence explicit with parentheses
    set d [expr { (3 + ${c}) / 4 }]
    foreach { f } ${e} {
        # always braces around the lists
    }
    foreach { g h i } { j k l m n o p q r } {
        # so the lists are easy to add to
    }
    for { set i 0 } { ${i} < 10 } { incr i } {
        # clarity of each parameter is good
    }
}
```

What standards do you follow for your iRules coding styles? Drop a comment below!

DevCentral's Featured Member for November - Mohamed Salah

Our Featured Member series is a way for us to show appreciation and highlight active contributors in our community. Communities thrive on interaction, and our Featured Series gives you some insight into some of our most engaged folks. F5 Community Member Mohamed Salah is our DevCentral Featured Member for November! He's been helping many other members with some great tips, so let's catch up with Mohamed!

How to get an F5 BIG-IP VE Developer Lab License

(applies to BIG-IP TMOS Edition)

To help DevOps teams improve their development for the BIG-IP platform, F5 offers a low-cost developer lab license. This license can be purchased from your authorized F5 vendor. If you do not have an F5 vendor, you can purchase a lab license online:

- CDW BIG-IP Virtual Edition Lab License
- CDW Canada BIG-IP Virtual Edition Lab License

Once completed, the order is sent to F5 for fulfillment, and your license will be delivered shortly after via e-mail. F5 is investigating ways to improve this process.

To download the BIG-IP Virtual Edition, please log into downloads.f5.com (separate login from DevCentral) and navigate to your appropriate virtual edition, for example:

- For VMware Fusion, Workstation, or ESX/i: BIGIP-16.1.2-0.0.18.ALL-vmware.ova
- For Microsoft Hyper-V: BIGIP-16.1.2-0.0.18.ALL.vhd.zip
- For KVM RHEL/CentOS: BIGIP-16.1.2-0.0.18.ALL.qcow2.zip

Note: There are also 1 Slot versions of the above images for cases where a second boot partition is not needed for in-place upgrades. These images include _1SLOT- in the image name instead of ALL.

The guides below will help you get started with F5 BIG-IP Virtual Edition to develop for VMware Fusion, AWS, Azure, VMware, or Microsoft Hyper-V. These guides follow standard practices for installing in production environments; performance recommendations change based on the lower-use, non-critical needs of Dev/Lab environments. Similar to driving a tank, use your best judgment.

- Deploying F5 BIG-IP Virtual Edition on VMware Fusion
- Deploying F5 BIG-IP in Microsoft Azure for Developers
- Deploying F5 BIG-IP in AWS for Developers
- Deploying F5 BIG-IP in Windows Server Hyper-V for Developers
- Deploying F5 BIG-IP in VMware vCloud Director and ESX for Developers

Note: F5 Support maintains authoritative Azure, AWS, Hyper-V, and ESX/vCloud installation documentation.
VMware Fusion is not an official F5-supported hypervisor, so DevCentral publishes the Fusion guide with the help of our Field Systems Engineering teams.

AS3 Best Practice

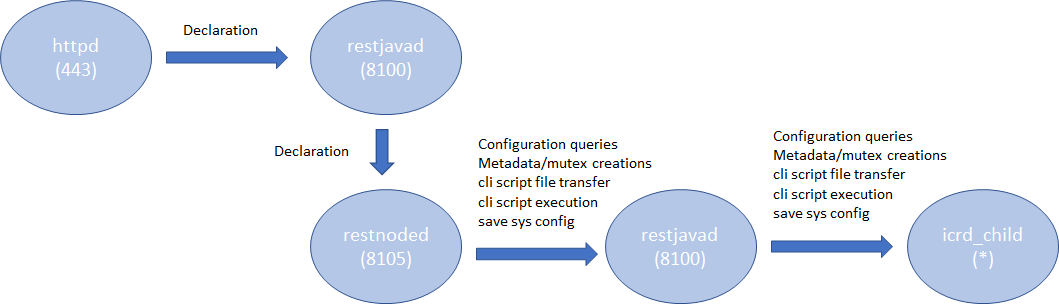

Introduction

AS3 is a declarative API that uses JSON key-value pairs to describe a BIG-IP configuration. From virtual IP to virtual server, to the members, pools, and nodes required, AS3 provides a simple, readable format in which to describe a configuration. Once you've got the configuration, all that's needed is to POST it to the BIG-IP, where the AS3 extension will happily accept it and execute the commands necessary to turn it into a fully functional, deployed BIG-IP configuration.

If you are new to AS3, start by reading the following references:

- Products - Automation and orchestration toolchain (f5.com; product information)
- Application Services 3 Extension Documentation (clouddocs; API documentation and guides)
- F5 Application Services 3 Extension (AS3) (GitHub; source repository)

This article describes some considerations for deploying AS3 configurations efficiently.

Architecture

In the TMOS space, the services that AS3 provides are processed by a daemon named 'restnoded'. It relies on the existing BIG-IP framework for deploying declarations. The framework consists of httpd, restjavad, and icrd_child, as depicted below (the numbers in parentheses are listening TCP port numbers).

These processes are also used by other services. For example, restjavad is a gateway for all iControl REST requests and is used by a number of services on BIG-IP and BIG-IQ. When an interaction between any of the processes fails, the AS3 operation fails. The failures stem from lack of resources, timeouts, data exceeding predefined thresholds, resource contention among the services, and more. To complete AS3 operations successfully, it is advised to follow the Best Practice outlined below.

Best Practice

Your single source of truth is your declaration

Refrain from overwriting AS3-deployed BIG-IP configurations by other means such as TMSH, the GUI, or iControl REST calls.
Once you start using the AS3 declarative model, the source of truth for your device's configuration is your declaration, not the BIG-IP configuration files. Although AS3 tries to weigh the locally stored BIG-IP configuration as much as it can, discrepancies between the declaration and the current configuration on BIG-IP may cause AS3 to perform less efficiently or error unexpectedly. When you wish to change a section of a tenant (e.g., a pool name change), modify the declaration and submit it.

Keep the number of applications in one tenant to a minimum

AS3 processes each tenant separately. Having too many applications (virtual servers) in a single tenant (partition) results in a lengthy poll when determining the current configuration. In extreme cases (thousands of virtuals), the action may time out. When you want to deploy a thousand or more applications on a single device, consider chunking the work for AS3 by spreading the applications across multiple tenants (say, 100 applications per tenant).

AS3 tenant access behaves the same way as BIG-IP partition access. A virtual in a non-Common partition cannot gain access to another partition's pool, and in the same way, an AS3 application does not have access to a pool or profile in another tenant. In order to share configuration across tenants, AS3 allows configuration of the "Shared" application within the "Common" tenant. AS3 avoids race conditions while configuring /Common/Shared by processing additions first and deletions last, as shown below. This dual process may cause some additional delay in declaration handling.

Overwrite rather than patching (POSTing is a more efficient practice than PATCHing)

AS3 is a stateless machine and is idempotent.
It polls BIG-IP for its full configuration, performs a current-vs-desired state comparison, and generates an optimal set of REST calls to fill the differences. When the initial state of BIG-IP is blank, the poll time is negligible. This is why initial configuration with AS3 is often quicker than subsequent changes, especially when the tenant contains a large number of applications.

AS3 provides the means to make partial modifications using PATCH (see AS3 API Methods Details), but do not expect PATCH changes to be performant. AS3 processes each PATCH by (1) performing a GET to obtain the last declaration, (2) patching that declaration, and (3) POSTing the entire declaration to itself. A PATCH of one pool member is therefore slower than a POST of your entire tenant configuration. If you decide to use PATCH, make sure that the tenant configuration is a manageable size.

Note: Using PATCH to make a surgical change is convenient, but using PATCH over POST breaks the declarative model. Your declaration should be your single source of truth. If you include PATCH, the source of truth becomes "POST this file, then apply one or more PATCH declarations."

Get the latest version

AS3 is evolving rapidly, with new features that customers have been wishing for along with fixes for known issues. Visit the AS3 section of the F5 Networks GitHub; the Issues section shows what features and fixes have been incorporated. For BIG-IQ, check K54909607: BIG-IQ Centralized Management compatibility with F5 Application Services 3 Extension and F5 Declarative Onboarding for compatibility with BIG-IQ versions before installation.

Use administrator

Use a user with the administrator role when you submit your declaration to a target BIG-IP device. You may find your role insufficient to manipulate BIG-IP objects that are included in your declaration. Even one unauthorized item will cause the entire operation to fail and roll back. See the following articles for more on BIG-IP users and roles.
- Manual Chapter : User Roles (12.x)
- Manual Chapter : User Roles (13.x)
- Manual Chapter : User Roles (14.x)
- Prerequisites and Requirements (clouddocs AS3 document)

Use Basic Authentication for a large declaration

You can choose either Basic Authentication (HTTP Authorization header) or Token-Based Authentication (F5 proprietary X-F5-Auth-Token) for accessing BIG-IP. While Basic Authentication can be used any time, a token obtained for Token-Based Authentication expires after 1,200 seconds (20 minutes). While AS3 does request a new token upon expiry, the operation takes time, which may slow AS3 down. Also, the number of tokens per user is limited to 100 (since 13.1), so if you happen to have other iControl REST clients (such as BIG-IQ or your custom iControl REST scripts) using Token-Based Authentication for the same user, AS3 may not be able to obtain the next token, and your request will fail. See the following articles for more on Token-Based Authentication:

- Demystifying iControl REST Part 6: Token-Based Authentication (DevCentral article)
- iControl REST Authentication Token Management (DevCentral article)
- Authentication and Authorization (clouddocs AS3 document)

Choose the best window for deployment

AS3 (the restnoded daemon) is a Control Plane process. It competes against other Control Plane processes such as monpd and iRules LX (node.js) for CPU/memory resources. AS3 uses the iControl REST framework for manipulating BIG-IP resources. This implies that its operation is impacted by any processes that use httpd (e.g., GUI), restjavad, icrd_child, and mcpd. If you have resource-hungry processes that run periodically (e.g., avrd), you may want to run your AS3 declaration during some other time window.
See the following K articles for a list of processes:

- K89999342: BIG-IP Daemons (12.x)
- K05645522: BIG-IP Daemons (v13.x)
- K67197865: BIG-IP Daemons (v14.x)
- K14020: BIG-IP ASM daemons (11.x - 15.x)
- K14462: Overview of BIG-IP AAM daemons (11.x - 15.x)

Workarounds

If you experience issues such as timeouts on restjavad, it is possible that your AS3 operation had resource issues. If you have reviewed the Best Practice above and are still unable to alleviate the problem, you may be able to fix it temporarily by applying the following tactics.

Increase the restjavad memory allocation

The memory size of restjavad can be increased with the following tmsh sys db commands:

```shell
tmsh modify sys db provision.extramb value <value>
tmsh modify sys db restjavad.useextramb value true
```

The provision.extramb db key changes the maximum Java heap memory to (192 + <value> * 8 / 10) MB. The default value is 0. After changing the memory size, you need to restart restjavad:

```shell
tmsh restart sys service restjavad
```

See the following article for more on the memory allocation: K26427018: Overview of Management provisioning

Increase the number of icrd_child processes

restjavad spawns a number of icrd_child processes depending on the load. The maximum number of icrd_child processes can be configured from /etc/icrd.conf. Please consult F5 Support for details.

Decrease the verbosity levels of restjavad and icrd_child

Writing log messages to the file system is not exactly free of charge. Writing an unnecessarily large amount of messages to files increases I/O wait, which results in slower processes. If you have changed the verbosity levels of restjavad and/or icrd_child, consider rolling back to the default levels. See the following article for more on icrd_child verbosity: K96840770: Configuring the log verbosity for iControl REST API related to icrd_child
For restjavad, see the following article for methods to change the verbosity level: K15436: Configuring the verbosity for restjavad logs on the BIG-IP system

How to Split DNS with Managed Namespace on F5 Distributed Cloud (XC) Part 1 – DNS over HTTPS

Introduction

DNS, everyone's least favorite infrastructure service. So simple, yet so hard. Simple because it's really just some text files served up; so hard because if you get it wrong, everything breaks. And it really doesn't require a ton of resources, so why use a lot? Containers, rulers of our age, everything must be a container! Not really, but we are in a major shift from waterfall to modern architecture, and it's handy to have something small that can be spun up in a lot of locations for redundancy and automated for our needs.

"Nature is a mutable cloud, which is always and never the same." - Ralph Waldo Emerson

We might not wax that philosophically around here, but our heads are in the cloud nonetheless! Join the F5 Distributed Cloud user group today and learn more with your peers and other F5 experts.

But also, if I don't want to spin up servers or hardware, I probably don't want to spin up a container infrastructure either. So, use F5 XC Managed K8s solutions... F5 XC is a platform that can not only be used to provide native security solutions in any cloud or datacenter but is also a compute platform like any other cloud service provider. Bring us all your containers to host and secure. For this use case we are going to use our Managed Namespace solution. It's very similar to our Managed K8s solution, but more of a sandbox with hardened security policies. Part 1 will focus on DNS over HTTPS and Part 2 will cover TCP/UDP; however, the initial deployment sets up all the ports and services needed for Part 2 now.

Managed Namespace - Sandbox Policies

Architecture

For this solution I went with Bind 9.19-dev, which seemed to have some issues with gRPC and HTTP/2 conversion. I was able to resolve them by slapping NGINX in front of the DNS over HTTPS listener to proxy gRPC to HTTP/2, while all other TCP/UDP traffic goes directly to Bind. Hopefully this is patched in future Bind releases. Otherwise, it's just a standard TCP/UDP DNS deployment.
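For readers new to DNS over HTTPS: under RFC 8484, a client sends an ordinary DNS wire-format message over HTTPS, either as the body of a POST or, for GET, base64url-encoded (padding removed) in a `dns` query parameter. The sketch below builds the GET form with only the Python standard library, which can help when reasoning about what NGINX and Bind receive. It is not part of this deployment; the endpoint `https://dns.example.com/dns-query` is a placeholder, and the `/dns-query` path is the one used in RFC 8484's examples, assumed here rather than taken from this setup.

```python
import base64
import struct


def build_dns_query(name: str, qtype: int = 1, query_id: int = 0) -> bytes:
    """Encode a one-question DNS query in RFC 1035 wire format.

    qtype 1 is an A record and the class is always IN (1). The header sets only
    the RD (recursion desired) flag; RFC 8484 recommends an ID of 0 so that
    identical queries are cache friendly.
    """
    header = struct.pack("!HHHHHH", query_id, 0x0100, 1, 0, 0, 0)  # QDCOUNT = 1
    qname = b"".join(
        bytes([len(label)]) + label.encode("ascii") for label in name.rstrip(".").split(".")
    )
    question = qname + b"\x00" + struct.pack("!HH", qtype, 1)  # root label, QTYPE, QCLASS IN
    return header + question


def doh_get_url(endpoint: str, name: str, qtype: int = 1) -> str:
    """Build an RFC 8484 GET URL: base64url-encoded query with '=' padding removed."""
    encoded = base64.urlsafe_b64encode(build_dns_query(name, qtype)).rstrip(b"=")
    return f"{endpoint}?dns={encoded.decode('ascii')}"
```

A real client would send this request with an `Accept: application/dns-message` header and parse the binary response, which this sketch leaves out. For quick checks against the load balancer, curl's `--doh-url` option performs the same kind of lookup.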
An important note about the architecture is that the workloads can be deployed on Customer Edge nodes or on F5-owned Regions. If there is no desire to manage a node on-premises, or to manage/host k8s on-prem or in a third-party Cloud Service Provider, running on our Regions works perfectly fine and gives a tremendous amount of redundancy.

Managed Namespace Deployment

From within the XC Console, we need to ensure that there is a Managed Namespace deployed, so click on the Distributed Apps tile. Under Applications, select Virtual K8s. From here, if there is not already a vk8s deployed in the namespace, deploy one now. We won't be covering deploying virtual k8s here, but it's not too complex: click Add New, give it a name, select some virtual sites, leave service isolation disabled, and choose a default workload flavor. Once the Managed Namespace (virtual k8s) is online, you can download the kubeconfig by clicking the ellipsis on the far right and selecting Kubeconfig. For a more detailed walkthrough of creating a Managed Namespace, see the F5 Tech Docs: https://docs.cloud.f5.com/docs/how-to/app-management/create-vk8s-obj

Click-Ops Deployment

Since it would take up a ton of space, I will not cover Click-Ops deployment of workloads. It may appear in a future article, but a detailed walkthrough can be found here today: https://docs.cloud.f5.com/docs/how-to/app-management/vk8s-workload

Kubectl Deployment

We WILL be covering deployment via kubectl with a manifest in this guide, so now we can actually start getting into it. As detailed in the architecture, we are going to proxy requests to Bind via NGINX, and to set NGINX up as a proxy to Bind we need to configure it. Posting the YAML in the article was a bit long, so all sources are posted on GitHub and the YAML images link to the specific sections, while a full manifest is located near the end of the article.
NGINX Config-Map

There are a couple of critical differences to pay attention to when deploying to Managed Namespaces versus another k8s provider. The main one that we care about now is annotations. In the context we will be using them, they determine where the configurations and workloads will be deployed; in other scenarios they also include things like workload flavors and other internal details.

- ves.io/sites: determines the sites we want the objects deployed to. This can be a Customer Site, a Virtual Site, or all F5 XC-owned Regions.

In our nginx.conf, all of the configs are standard, with a location added for a health check and some self-signed certs to force a secure channel. If you need a quick command to generate a cert & key without searching:

```shell
openssl req -x509 -nodes -subj '/CN=bind9.local' -newkey rsa:4096 -keyout /etc/ssl/private/dns.key -out /etc/ssl/certs/dns.pem -sha256 -days 3650
```

Server Block & Upstream

```nginx
upstream http2-doh {
    server 127.0.0.1:80;
}

server {
    listen 8080 default_server;
    listen 4443 ssl http2;
    server_name _;

    # TLS certificate chain and corresponding private key
    ssl_certificate /etc/ssl/certs/dns.pem;
    ssl_certificate_key /etc/ssl/private/dns.key;

    location / {
        grpc_pass grpc://http2-doh;
    }

    location /health-check {
        add_header Content-Type text/plain;
        return 200 'what is up buttercup?!';
    }
}
```

Source: config-map.yml

Deployment

The deployment models in XC are pretty great: deploy to a cloud site, deploy to on-prem datacenters, deploy to our compute, or any combination. The services can then be published to the internet, to a cloud site, or to an on-prem site, with the same security models for every facility. The deployment is pretty standard as well; the important pieces are:

- ves.io/sites: this is important for the same reasons mentioned previously, but here it determines where the workloads will reside, with the same options as before.
- Environment variables: this is where we need to tweak the settings a bit.
A full listing of the values can be seen here: https://github.com/Mikej81/docker-bind

Some of the values should be self-explanatory, but an important setting for a zone / A-record mapping is DNS_A. If there is a desire to bring in full zone files, it is possible to do that via a FILE value, or via a config map for Bind that stores the zone file in the proper named path and maps the volume (not covered here). We will also be mapping some example self-signed certificates, which are only required if encryption is desired all the way to the container / pod.

```yaml
apiVersion: apps/v1
kind: Deployment
metadata:
  name: bind-doh-dep
  labels:
    app: bind
  annotations:
    ves.io/sites: system/coleman-azure,system/coleman-cluster-100,system/colemantest
spec:
  replicas: 1
  selector:
    matchLabels:
      app: bind
  template:
    metadata:
      labels:
        app: bind
    spec:
      containers:
        - name: bind
          image: mcoleman81/bind-doh
          env:
            - name: DOCKER_LOGS
              value: "1"
            - name: ALLOW_QUERY
              value: "any"
            - name: ALLOW_RECURSION
              value: "any"
            - name: DNS_FORWARDER
              value: "8.8.8.8, 8.8.4.4"
            - name: DNS_A
              value: domain1.com=68.183.126.197,domain2.com=68.183.126.197
```

Source: deployment.yml

Services

We are almost done building out the manifest. We created the config map and the deployment; now we just need to expose some services. The targetPorts need to be above 1024 in the managed namespace, so if those are changed, just follow that guideline.
```yaml
apiVersion: v1
kind: Service
metadata:
  name: bind-services
  annotations:
    ves.io/sites: system/coleman-azure
spec:
  type: ClusterIP
  selector:
    app: bind
  ports:
    - name: dns-udp
      port: 53
      targetPort: 5553
      protocol: UDP
    - name: dns-tcp
      port: 53
      targetPort: 5353
      protocol: TCP
    - name: dns-http
      port: 80
      targetPort: 8888
      protocol: TCP
    - name: nginx-http-listener
      port: 8080
      targetPort: 8080
      protocol: TCP
    - name: nginx-https-listener
      port: 4443
      targetPort: 4443
      protocol: TCP
```

Source: service.yml

Based on everything we have done, we know that the service name will be [servicename].[namespace created previously]; in my case it will be bind-services.m-coleman. We will need that value in a few steps when creating our Origin Pool.

bind-manifest.yml

Putting it all together! The full manifest can be found here: bind-manifest.yml

Apply!

```shell
kubectl apply -f bind-manifest.yml
```

Application Delivery & Load Balancers

HTTPS Origin

Now we can create an origin pool. Over on the left menu, under Manage, Load Balancers, click Origin Pools. Let's give our origin pool a name and add some Origin Servers: under Origin Servers, click Add Item. In the Origin Server settings, we want to select K8s Service Name of Origin Server on given Sites as our type, and enter our service name, which will be the service name we remembered from earlier plus our namespace, so "servicename.namespace". For the Site, we select one of the sites we deployed the workload to, and under Select Network on the Site, we select vK8s Networks on the Site, then click Apply. Do this for each site we deployed to so we have several servers in our Origin Pool. We also need to tweak the TLS settings, since traffic will be encrypted over 4443 to the origin, but we don't want to validate the certs, since in my case they are self-signed certs with low security settings; update this as needed. Once everything is set right, click Save and Exit.
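Because the origin pool refers to the service as servicename.namespace, and the managed namespace only accepts targetPorts above 1024, a quick pre-flight check of the Service manifest can catch mistakes before running kubectl apply. This is a minimal sketch, assuming the manifest has already been loaded into a Python dict (for example with a YAML library, not shown here). The helper names `low_target_ports` and `origin_service_name` are hypothetical and not part of any F5 tooling.

```python
from typing import List

MIN_EXCLUSIVE_TARGET_PORT = 1024  # managed namespace rule: targetPorts must be above this


def low_target_ports(service: dict) -> List[str]:
    """Return the names of Service ports whose targetPort is not above 1024.

    In Kubernetes, targetPort defaults to port when omitted. Named (string)
    targetPorts cannot be checked numerically and are skipped.
    """
    offenders = []
    for port in service.get("spec", {}).get("ports", []):
        target = port.get("targetPort", port.get("port"))
        if isinstance(target, int) and target <= MIN_EXCLUSIVE_TARGET_PORT:
            offenders.append(port.get("name", str(target)))
    return offenders


def origin_service_name(service: dict, namespace: str) -> str:
    """Build the 'servicename.namespace' value entered for the origin server."""
    return f"{service['metadata']['name']}.{namespace}"
```

Run against the Service above, `low_target_ports` returns an empty list and `origin_service_name(service, "m-coleman")` yields bind-services.m-coleman.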
HTTPS Load Balancer

Now we need a load balancer. On the left menu bar, under Manage, select Load Balancers and click HTTP Load Balancers. Click Add HTTP Load Balancer and let's assign a name. This is another place where configurations will diverge: for ease of deployment I am going to use an HTTPS Load Balancer with Auto-Generated Certificates, but you can use HTTPS with Custom Certificates as well.

Note: For HTTPS with Auto-Certificate, advertisement is Internet only; Custom Certificate allows both Internet and internal advertising.

HSTS is optional, as are most of the options shown below aside from Load Balancer Type and HTTPS Port. Under Origin, we click Add Item and add the origin pool we created previously. Under Other Settings is where we can configure how & where the service is advertised. If we are going to advertise this service to an internal network only, we would select Custom here, then click Configure. For example, click Add Item under the Custom Advertise VIP Configuration menu, then select the type of Site to advertise to, the type of interface to advertise on, and the specific site location. Click Apply as needed, then Save and Exit.

Moment of Truth

There are several ways to test to make sure we have everything up and running. First, let's make sure our services are up.

```shell
kubectl get services -n m-coleman
NAME            TYPE        CLUSTER-IP        EXTERNAL-IP   PORT(S)                                   AGE
bind-services   ClusterIP   192.168.175.193   <none>        53/UDP,53/TCP,80/TCP,8080/TCP,4443/TCP    3h57m
```

Curl has built-in DNS over HTTPS support, so we can test via curl to see if our sites are resolving. First I'll test one of our custom zones / A records. We are in business! We can also test with Firefox or Chrome or any number of other tools:

- Dig
- Dog
- Kdig
- Etc.

In Part 2 we will cover publishing TCP and UDP.

Certified Kubernetes Administrator - Study Group

I recently completed my CKA and want to encourage others as I was encouraged. To that end, I'm going to facilitate a study group that will kick off the week of April 24th.
Requirements
You're welcome to join in on the fun for weeks 1 and 2, but to continue with the study group you must register for the exam and set a test date by week 3. Commitment is key! The exam is $395, but I have a code that should get you 50% off if you register in the first two weeks of our study group.
You'll sign up for a week of material, learn it, and share what you learned with the group, including a walkthrough of the lab exercises that challenged you most and what you learned from them.
You'll commit the time to study; it's a lot of material to learn.
You'll show up for and participate in meetings (with the understanding that life happens).
Material
The only required material for this study group is the Certified Kubernetes Administrator with Practice Tests course on Udemy. It is $35, but it's sometimes discounted; I think I got it for $19 when I registered. The course material includes a coupon that unlocks the CKA course labs for free on KodeKloud.com.
Schedule
As far as time is concerned, I know that will be tricky. I'm available most days, Tuesday through Friday, between 3pm and 6pm Central. We can nail down a timeslot once everyone who is interested has signed up. On a weekly basis, you can expect about 3-4 hours of course content, plus the labs, plus any additional studying you do on your own.
Week  Date        Concepts
1     April 24th  Introduction | Core Concepts
2     May 1st     Scheduling | Logging & Monitoring | Storage
3     May 8th     Application Lifecycle Management | Cluster Maintenance
4     May 15th    Security
5     May 22nd    Review | Killer.sh Lab Attempt #1
6     May 29th    Networking
7     June 5th    Designing & Installing a Cluster | Installing the kubeadm way | Troubleshooting
8     June 12th   Mock Exams | Killer.sh Lab Attempt #2
9     June 19th   Prep for / take your exam
If you have any questions about the exam, the material, or the study group, drop them below. I hope to see you the week of April 24th! If you want to join, send me a DM here on DevCentral or email me at j.rahm@f5.com, and I'll add you to the group. I'll likely limit the first group to the first 8-10 people to keep it small enough to encourage conversation.
F5 Hybrid Security Architectures: One WAF Engine, Total Flexibility (Intro)

Layered security: we have been told for years that the most effective security strategy is composed of multiple, loosely coupled or independent layers of security controls. A WAF fits snugly into the technical security controls area and has long been known as an essential piece of application security. What if we take this further and apply the layered approach directly to our WAF deployment? The F5 Hybrid Security Architectures series explores this approach using F5's best-in-class WAF products.
Bolt-on Auth with NGINX Plus and F5 Distributed Cloud

Inarguably, we are well into the age in which the user interface of a typical web application has shifted from server-generated markup to APIs as the preferred point of interaction. As developers, we are presented with a veritable cornucopia of tools, frameworks, and standards to aid us in developing these APIs and the services behind them. What about securing these APIs? Now more than ever, attackers are focusing their efforts on abusing APIs to exfiltrate data or compromise systems, at an increasingly alarming rate. In fact, a large portion of the items on the 2023 OWASP Top 10 API Security Risks list are caused by a lack of (or insufficient) authentication and authorization.
How can we protect existing APIs from unauthorized access? What if my APIs have already been developed without considering access control? What are my options now?
Enter the use of a proxy to provide security services. Solutions such as F5 NGINX Plus can easily be configured to provide authorization and auditing for your APIs, irrespective of where they are deployed. For instance, you can enable OpenID Connect (OIDC) on NGINX Plus to provide authentication and authorization for your applications (including APIs) without having to change a single line of code.
In this article, we will present an existing application with an API deployed in an F5 Distributed Cloud cluster. This application lacks authentication and authorization features. The app we will be using is the Sentence demo app, deployed into a Kubernetes cluster on Distributed Cloud. The Kubernetes cluster we will use in this walkthrough is a Distributed Cloud Virtual Kubernetes (vK8s) instance deployed to host application services in more than one Regional Edge site. Why? An immediate benefit is that, as a developer, I don’t have to be concerned with managing my own Kubernetes cluster.
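For readers new to OIDC, the first thing a relying party such as NGINX Plus does with an unauthenticated request is redirect the browser to the identity provider's authorization endpoint. The Python sketch below illustrates that step of the OpenID Connect authorization code flow: it generates fresh state and nonce values and encodes the standard request parameters. It is an illustration of the protocol, not NGINX Plus's implementation; the tenant ID, client ID, and redirect URI are hypothetical placeholders, and the endpoint follows the Microsoft Entra ID v2.0 authorize URL format.

```python
import secrets
from urllib.parse import parse_qs, urlencode, urlparse


def build_authorization_url(authorize_endpoint: str, client_id: str,
                            redirect_uri: str,
                            scope: str = "openid profile email") -> tuple[str, str, str]:
    """Return (url, state, nonce) for an OIDC authorization code request."""
    # state binds the callback to this login attempt (CSRF protection);
    # nonce is echoed back inside the ID token so replays can be detected.
    state = secrets.token_urlsafe(16)
    nonce = secrets.token_urlsafe(16)
    params = {
        "response_type": "code",  # authorization code flow
        "client_id": client_id,
        "redirect_uri": redirect_uri,
        "scope": scope,           # must include "openid" for OIDC
        "state": state,
        "nonce": nonce,
    }
    return f"{authorize_endpoint}?{urlencode(params)}", state, nonce


if __name__ == "__main__":
    # Hypothetical values: replace with your Entra ID tenant, app registration,
    # and the callback location your relying party listens on.
    tenant_id = "00000000-0000-0000-0000-000000000000"
    endpoint = f"https://login.microsoftonline.com/{tenant_id}/oauth2/v2.0/authorize"
    url, state, nonce = build_authorization_url(
        endpoint, "my-client-id", "https://app.example.com/oidc/callback")
    # Print each decoded query parameter of the redirect URL.
    for key, values in parse_qs(urlparse(url).query).items():
        print(key, values[0])
```

When the identity provider redirects back, the relying party checks that the returned state matches, exchanges the code at the token endpoint, and validates the ID token (signature, issuer, audience, expiry, and nonce) before letting the request through to the API.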
We will use automation to declaratively configure a virtual Kubernetes cluster and deploy our application to it in a matter of seconds! Once the Sentence demo app is up and running, we will deploy NGINX Plus into another vK8s cluster to provide authorization services.
What about authentication? We will walk through configuring Microsoft Entra ID (formerly Azure Active Directory) as the identity provider for our application, and then configure NGINX Plus to act as an OIDC Relying Party that provides security services for the deployed API.
Finally, we will make use of Distributed Cloud HTTP load balancers. We will provision one publicly available load balancer that securely routes traffic to the NGINX Plus authorization server. We will then provision an additional load balancer to provide application routing services to the Sentence app. This second load balancer differs from the first in that it is only “advertised” (and therefore only reachable) from services inside the namespace. As a result, users cannot bypass the NGINX authorization server in an attempt to consume the Sentence app directly.
The following is a diagram representing what will be deployed:
Let’s get to it!
Deployment Steps
The detailed steps to deploy this solution are located in a GitHub repository accompanying this article. Follow the steps here, and be sure to come back to this article for the wrap-up!
Conclusion
You did it! With the power and reach of Distributed Cloud combined with the security that NGINX Plus provides, we have been able to easily provide authorization for our example API-based application.
Where could we go from here? Recall that we deployed these applications to two specific geographical sites. You could very easily extend the reach of this solution to more regions (distributed globally) to provide reliability and low-latency experiences for the end users of this application.
Additionally, you can easily attach Distributed Cloud’s award-winning DDoS mitigation, WAF, and Bot mitigation to further protect your applications from attacks and fraudulent activity. Thanks for taking this journey with me, and I welcome your comments below.
Acknowledgments
This article wouldn’t have been the same without the efforts of Fouad_Chmainy, Matt_Dierick, and Alexis Da Costa. They are the original authors of the distributed design, the Sentence app, and the NGINX Plus OIDC image optimized for Distributed Cloud. Additionally, special thanks to Cody_Green and Kevin_Reynolds for inspiration and assistance with the Terraform portion of the solution. Thanks, guys!
API Security Strategy - Discover and map APIs, block unwanted connection and prevent data leakage

F5 Distributed Cloud helps organisations with their API security strategy. This article and demonstration show how F5 helps to discover and map APIs, block unwanted connections, and prevent data leakage.