management

5726 Topics

Dynamic import of data groups

Hello. We use data groups for various kinds of blacklists, such as undesirable user agents. This is very efficient, but every update requires intervention by a BIG-IP administrator. We would like to move the authoritative source for those lists to an external location, such as an internal git repository, so that trusted people without access to the administration interface can update them in an auditable manner, as we already do on our firewalls with the "dynamic list" feature.

There seems to be no such native functionality in BIG-IP: even the "external" lists actually rely on files hosted on the local filesystem, not on arbitrary URLs. We could probably use a cron task to implement a pull-based update mechanism, or use the API to push changes periodically, but I'm not sure how reliable such an ad hoc mechanism would be, or what the consequences of a failure might be.

Is there any alternative for this kind of configuration delegation?

Regards, Guillaume

147 Views, 0 likes, 4 Comments

What’s New in F5 BIG-IQ v8.4.2?

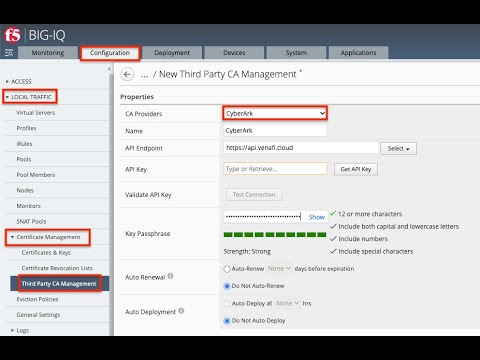

Introduction

F5 BIG-IQ Centralized Management, a key component of the F5 Application Delivery and Security Platform (ADSP), helps teams maintain order and streamline administration of BIG-IP app delivery and security services. In this article, I’ll highlight some of the key features, enhancements, and use cases introduced in the BIG-IQ v8.4.2 release and cover the value of these updates. Effective management of this complex application landscape requires a single point of control that combines visibility, simplified management, and automation tools.

New Features in BIG-IQ 8.4.2

Support for Red Hat OpenShift

BIG-IQ v8.4.2 provides full support and validation for standalone deployments in Red Hat OpenShift (please note that HA deployments are not yet supported). Red Hat OpenShift Virtualization is a popular, flexible, and lower-cost alternative to VMware virtual machines. This KVM-based virtualization platform ensures easy, simple migration of application workloads and deployments and works seamlessly with BIG-IP and BIG-IQ 8.4.2+. The qcow2 image available for download from F5.com is now supported on Red Hat OpenShift.

New Third-Party CA Management: CyberArk

BIG-IQ v8.4.2 has been updated to support CyberArk Certificate Manager for third-party CA management. CyberArk, now the parent company for the Venafi TLS Protect certificate management product, offers a cloud/SaaS version of Venafi (rather than deployable software). This new product form factor is fully supported in BIG-IQ, making it easier and more flexible to assign, manage, and renew device certificates as part of your BIG-IP management workflows.

AFM Policy Deployment Control

BIG-IQ v8.4.2 introduces per-device AFM Deployment Controls. Only devices with AFM discovered and enabled for Policy Deployment can have policies deployed. This allows you to disable Policy Deployment to specific devices or device groups/clusters without needing to remove AFM Services.
For devices imported with the AFM module selected, this enhancement introduces a toggle button labeled Disable Firewall Policy Deployment. If the AFM module is not discovered, the Properties tab will not display this toggle, as firewall deployment is inherently disabled for such devices. This enhancement helps teams deploy and manage network firewalls more granularly via the user interface or API. Additionally, per-device AFM management helps teams maintain consistency for device clusters by applying changes to all devices or single instances. This enhancement also adheres to any roles or user policies, helping ensure enforcement of RBAC: only admins can make changes to AFM policies while other roles are read-only. In short, this new feature enables teams to build consistent and resilient AFM policies and deployments, even during device re-import and re-discovery.

Support for F5 BIG-IP v21.1

BIG-IQ v8.4.2 has been updated for interoperability with BIG-IP up to v21.1, including full support for SSL Orchestrator v14. With this interoperability, teams can:

- Manage the latest versions of BIG-IP (17.5.X and 21.1), including both device/instance management and the services running on these instances
- Configure and deploy BIG-IP devices and services in a repeatable and consistent manner at enterprise scale
- Provision, license, configure, and deploy the latest BIG-IP VEs, HW instances (including VELOS and rSeries), and the app services running on them
- Effectively troubleshoot issues with infrastructure, policies, configurations, app services, or apps themselves

Updated TMOS Layer

In the v8.4.2 release, BIG-IQ's underlying TMOS version has been upgraded to v17.5.1.4, which will enhance control plane performance, improve security efficacy, and enable better resilience of the BIG-IQ solution.

Upgrading to v8.4.2

You can upgrade from BIG-IQ v8.X to BIG-IQ v8.4.2.
BIG-IQ Centralized Management Compatibility Matrix

Refer to Knowledge Article K34133507.

BIG-IQ Virtual Edition supported platforms

BIG-IQ Virtual Edition Supported Platforms provides a matrix describing the compatibility between the BIG-IQ VE versions and the supported hypervisors and platforms.

Conclusion

Effective management (orchestration, visibility, and compliance) relies on consistent app services and security policies across on-premises and cloud deployments. Easily control all your BIG-IP devices and services with a single, unified management platform, F5® BIG-IQ®. F5® BIG-IQ® Centralized Management reduces complexity and administrative burden by providing a single platform to create, configure, provision, deploy, upgrade, and manage F5® BIG-IP® security and application delivery services.

Related Content

- F5 BIG-IQ Centralized Management
- Boosting BIG-IP AFM Efficiency with BIG-IQ: Technical Use Cases and Integration Guide
- Five Key Benefits of Centralized Management
- What’s New in BIG-IQ v8.4.1?

72 Views, 1 like, 0 Comments

Migrate from i-series to r-series. Cluster LTM-GTM

We currently need to migrate six 2600i devices to six new 2600r models. There are three active-standby clusters at the LTM level. In addition, four of these devices form a cluster for GTM-DNS.

I would like to know whether you have any specific procedure for this type of migration. We would also like your recommendation on whether to migrate all four devices within the same maintenance window, or to migrate them in pairs, allowing two i-series and two r-series devices to coexist in the same DNS cluster.

Additional information:

- The source and target version will be the same: 17.5.1.3
- We will use Journeys for the configuration conversion.

Also, would you keep the management IP addresses of the i-series devices on the r-series chassis or tenants, or would you request new IP addresses for all of them? What steps would you follow during the migration window?

243 Views, 0 likes, 3 Comments

APM URL encoding Hardening?

Some companies still use on-prem SharePoint, and SharePoint is what it is. We have had multiple servers deployed for quite a while now, with ASM tuned for its quirks and so on. However, after upgrading from version 17.5.1.5 to 17.5.1.6 we noticed some rather strange behavior: among other things, the edit modal button stopped working on certain sites, and so did the upload button.

After some testing and stripping out functions, we noticed that everything started working again when we removed the APM policy. So the cogs started turning: what could be the issue with APM? I finally figured out that the links that did not work were not encoded, and the links that worked were. After some tweaking I built a simple HTTP request rewrite iRule that encodes the URI before it is sent to the server.

I do have some qualms about it, though. Are there any security risks that I might introduce by deploying this externally? Would you have solved it in any other way?

Basically it's this:

    when HTTP_REQUEST {
        # Re-encode characters that are illegal in URIs per RFC 3986 §2.2 / §3.4
        set orig_uri [HTTP::uri]
        set new_uri [string map {
            "\{" "%7B"
            "\}" "%7D"
            "|" "%7C"
            "\\" "%5C"
            "^" "%5E"
            "`" "%60"
            " " "%20"
        } $orig_uri]
        # Only rewrite the request when something actually changed
        if { $new_uri ne $orig_uri } {
            HTTP::uri $new_uri
        }
    }

Solved, 154 Views, 0 likes, 2 Comments

BIG-IQ CM Logs Not Receiving

Once the disk space has been released, clear the read-only block on the indices by running the following command as root:

    # Loop over every index name (third column of _cat/indices)
    for idx in $(curl -ks -u admin:admin https://localhost:9200/_cat/indices | awk '{print $3}'); do
      # Skip the security index
      if [ "$idx" != ".opendistro_security" ]; then
        # Target "<part before the first +>*", as the original cut -d+ -f1 did
        curl -k -u admin:admin -X PUT "https://localhost:9200/${idx%%+*}*/_settings?pretty" \
          -H 'Content-Type: application/json' \
          -d '{"index.blocks.read_only_allow_delete": false}'
      fi
    done

32 Views, 0 likes, 0 Comments

Need BIG-IP VE Lab License for Personal Study/Learning

Hi F5 Community, I am setting up a personal home lab to learn F5 BIG-IP for certification preparation. I have deployed BIG-IP VE but need a lab license to access the management GUI. Could anyone help me get a free lab/evaluation license for personal learning purposes? Thank you.

87 Views, 0 likes, 1 Comment

CPU load when Prometheus is scraping metrics from F5 BIG-IP LTM

We are experiencing an issue where Prometheus scraping metrics from F5 BIG-IP LTM causes high CPU and memory utilization on the F5 device. As an initial step, we adjusted the scraping interval to 1 minute, but the issue persists. Are there any recommended tuning options or best practices?

326 Views, 0 likes, 5 Comments

Migrate HW GTM 2600

Hi, I need to migrate two active/passive clusters from i-series to r-series. They run LTM (active-standby) and GTM-DNS. I was thinking about adding two new members to the cluster and temporarily expanding it to a 6-node cluster. Then, on the day of the intervention, we could bring them online and remove two of the i-series nodes. To do this I have to ask for new self IPs, new cables, etc. Does anyone know the best procedure for replacing these GTM/LTM nodes?

1.2K Views, 0 likes, 1 Comment

CLI Tool for BIG-IP - f5 cli

I'm releasing a CLI tool for inspecting and manipulating configuration. It’s a whole suite of tools in one, from `f5 grep` through to the advanced jq-style `f5 query`. This tool is based on my last 20 years of using and abusing BIG-IP, and the ideas behind all the tooling I built along the way.

https://github.com/bitwisecook/tcl-lsp/blob/main/INSTALL-cli.md
https://github.com/bitwisecook/tcl-lsp/tree/main/docs/references/f5_query
https://github.com/bitwisecook/tcl-lsp/tree/main/samples/for_f5_query

There’s lots of documentation, worked examples, KCS style docs covering it, contending help including shell completion support. It requires Python 3.10+ for now. Feel free to discuss here or raise issues on GitHub. This is part of my much larger work on an LSP, MCP, and AI tooling for all editors and harnesses to improve f5 tooling.

The `query` verb can do stuff like:

    $ f5 query --name ltm=ltm.conf --name gtm=gtm.conf --merge --raw '
        $gtm.gtm.wideip[] as $w
        | $w.pools[] as $gp
        | $gp.members[]
        | last(split(., ":")) as $vspath
        | $ltm.ltm.virtual[]
        | select(."full-path" == $vspath) as $vs
        | $vs.pool.members[]
        | tsv($w.name, $gp.name, $vs.name, $vs.pool, .address, port(.name))
      ' ltm.conf gtm.conf | sort -u
    api.example.com api_app_pool api_vs /Common/api_pool 10.0.2.20 8443
    api.example.com api_app_pool api_vs /Common/api_pool 10.0.2.21 8443
    www.example.com example_app_pool web_vs /Common/web_pool 10.0.1.10 80
    www.example.com example_app_pool web_vs /Common/web_pool 10.0.1.11 80

124 Views, 1 like, 0 Comments

ssh: Common Criteria mode initialized

I set up a new F5 and I am trying to SSH to an existing F5, but from the new F5 I get "ssh: Common Criteria mode initialized". I ran the command "tmsh list sys db security.commoncriteria" and it is set to false on both F5s. I checked the sshd properties, and both F5s have the following:

    description none
    fips-cipher-version 2
    inactivity-timeout 6000
    include "Ciphers aes256-ctr,aes256-gcm@openssh.com,aes128-gcm@openssh.com,aes128-ctr
    KexAlgorithms ecdh-sha2-nistp256,ecdh-sha2-nistp384,ecdh-sha2-nistp521
    MACs hmac-sha2-256-etm@openssh.com,hmac-sha2-256,hmac-sha2-512-etm@openssh.com,hmac-sha2-512"
    log-level info
    login enabled
    port 22

What am I missing?

181 Views, 0 likes, 2 Comments