
Automating TLS Certificates in Kubernetes with cert-manager and F5 Distributed Cloud DNS

Introduction

If you run workloads in Kubernetes or OpenShift, you've almost certainly dealt with TLS certificates. You need them everywhere: Ingress controllers, internal services, mutual TLS between microservices, and API gateways. Managing them by hand is error-prone and doesn't scale: certificates expire silently, rotation is forgotten, and the person who originally created the wildcard cert is now working somewhere else.

cert-manager solves this elegantly. It's a Kubernetes-native certificate controller that automates the issuance, renewal, and management of TLS certificates. It speaks ACME, the same protocol Let's Encrypt uses, but ACME is a standard, not a Let's Encrypt exclusive. You can point cert-manager at:

- Let's Encrypt (free, public, widely trusted)
- ZeroSSL, Buypass, Google Trust Services, and other public CAs supporting ACME
- Step CA (Smallstep) or HashiCorp Vault, for private PKI running inside your infrastructure
- Any commercial CA that has implemented an ACME endpoint

This means the same workflow, the same Kubernetes manifests, and the same tooling can back both your public-facing services and your internal, corporate-signed certificates. One operator to rule them all.

Why DNS-01 Challenge?

ACME offers multiple ways to prove you own a domain. The most common is the HTTP-01 challenge, where the ACME server checks a well-known URL on your domain. It works well, but has limitations:

- The endpoint must be publicly reachable on port 80
- It cannot be used to issue wildcard certificates (*.example.com)

The DNS-01 challenge takes a different approach: cert-manager (via a solver webhook) creates a TXT record at _acme-challenge.example.com in your DNS zone. The ACME server checks for this record. Once the domain is verified, the TXT record is cleaned up automatically.
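To make the DNS-01 mechanics concrete, here is a minimal Python sketch of what ends up in DNS, following the DNS-01 rules in RFC 8555 (section 8.4): the record name is `_acme-challenge.` prepended to the domain (a wildcard is validated at its base domain), and the record value is the unpadded base64url SHA-256 digest of the key authorization (`token + "." + account key thumbprint`). The token and thumbprint are placeholder strings; in a real flow cert-manager computes this value and passes it to the webhook, so you never have to.

```python
import base64
import hashlib


def b64url(data: bytes) -> str:
    """Base64url-encode without '=' padding, as ACME (RFC 8555) requires."""
    return base64.urlsafe_b64encode(data).rstrip(b"=").decode("ascii")


def challenge_record_name(dns_name: str) -> str:
    """TXT record name for a DNS-01 challenge.

    A wildcard such as '*.example.com' is validated at the base domain,
    so the apex and the wildcard share the same record name.
    """
    base = dns_name[2:] if dns_name.startswith("*.") else dns_name
    return "_acme-challenge." + base.rstrip(".")


def challenge_record_value(token: str, account_thumbprint: str) -> str:
    """TXT record value: base64url(SHA-256(key authorization))."""
    key_authorization = f"{token}.{account_thumbprint}"
    digest = hashlib.sha256(key_authorization.encode("ascii")).digest()
    return b64url(digest)


if __name__ == "__main__":
    # Placeholder values for illustration only; real ones come from the ACME order.
    token = "evaGxfADs6pSRb2LAv9IZf17Dt3juxGJ-PCt92wr-oA"
    thumbprint = "NzbLsXh8uDCcd-6MNwXF4W_7noWXFZAfHkxZsRGC9Xs"
    for name in ["example.com", "*.example.com"]:
        print(challenge_record_name(name), challenge_record_value(token, thumbprint))
```

Because example.com and *.example.com both map to _acme-challenge.example.com, a solver may need to hold two TXT values at the same name while both authorizations are pending, which is why the Order in Step 5 shows two authorizations for the same identifier.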
Benefits:

- Works behind firewalls: no inbound HTTP needed
- Supports wildcard certificates: a single *.example.com certificate covers every first-level subdomain (app.example.com, but not a.b.example.com)
- Fully automated: the webhook handles record creation and deletion

If your DNS is managed by F5 Distributed Cloud (F5 XC), you can now wire this entire flow together with the open-source cert-manager-webhook-f5xc solver.

Architecture Overview

Here's what happens when cert-manager issues a certificate using the F5 XC webhook:

Developer applies Certificate manifest
  ▼
cert-manager creates Order + Challenge
  ▼
cert-manager calls webhook (DNS-01 solver)
  ▼
Webhook calls F5 XC DNS API → Creates TXT record: _acme-challenge.example.com
  ▼
ACME server (Let's Encrypt / other) validates the TXT record
  ▼
Webhook cleans up the TXT record
  ▼
cert-manager stores the issued certificate in a Kubernetes Secret

The webhook runs as a standard Kubernetes Deployment inside your cluster and is registered with the Kubernetes API as an aggregated API server (via an APIService object), which is how cert-manager reaches it. It receives solver calls from cert-manager and translates them into F5 XC DNS API calls using credentials you provide as a Kubernetes Secret.

Prerequisites

Before we start, make sure you have the following in place:

- A Kubernetes cluster (1.21+)
- cert-manager installed (v1.14+), for example:
  kubectl apply -f https://github.com/cert-manager/cert-manager/releases/latest/download/cert-manager.yaml
- Helm 3.8+
- An F5 Distributed Cloud tenant with DNS management enabled
- Your domain's DNS zone managed in F5 XC
- F5 XC credentials for DNS API access, either an API Token or a Client Certificate (P12); see Step 1 below for details

Least privilege note: The service account or user whose credentials you use must have permission to manage DNS records. As of the time of writing, the built-in role f5xc-dns-management-admin is sufficient. Avoid using tenant-admin or other overly broad roles; the webhook only needs to create and delete TXT records in your DNS zone.
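The webhook's role in the architecture overview boils down to two calls from cert-manager: present a TXT value, then clean it up. The Python sketch below models that contract under stated assumptions: the request fields loosely mirror cert-manager's Go `ChallengeRequest` (ResolvedFQDN, ResolvedZone, Key), and `FakeDnsApi` is a hypothetical in-memory stand-in for the F5 XC DNS API, not the client that cert-manager-webhook-f5xc actually uses.

```python
from dataclasses import dataclass, field


@dataclass
class ChallengeRequest:
    # Loosely mirrors cert-manager's ChallengeRequest (ResolvedFQDN,
    # ResolvedZone, Key); FQDNs carry a trailing dot.
    resolved_fqdn: str
    resolved_zone: str
    key: str  # the TXT value to publish


@dataclass
class FakeDnsApi:
    """Hypothetical in-memory stand-in for the F5 XC DNS API."""
    # (zone, rrset group, relative record name) -> set of TXT values
    records: dict = field(default_factory=dict)


class TxtSolver:
    def __init__(self, api: FakeDnsApi, rrset_group: str = "cert-manager"):
        self.api = api
        self.rrset_group = rrset_group  # like 'groupName' inside the solver config

    @staticmethod
    def split_name(req: ChallengeRequest) -> tuple[str, str]:
        """Return (zone, record name relative to the zone)."""
        zone = req.resolved_zone.rstrip(".")
        fqdn = req.resolved_fqdn.rstrip(".")
        if fqdn != zone and not fqdn.endswith("." + zone):
            raise ValueError(f"{fqdn} is not inside zone {zone}")
        return zone, fqdn[: -len(zone)].rstrip(".")

    def present(self, req: ChallengeRequest) -> None:
        """Add this challenge's TXT value; idempotent, keeps other values."""
        zone, name = self.split_name(req)
        self.api.records.setdefault((zone, self.rrset_group, name), set()).add(req.key)

    def cleanup(self, req: ChallengeRequest) -> None:
        """Remove only this challenge's value; drop the record once empty."""
        zone, name = self.split_name(req)
        values = self.api.records.get((zone, self.rrset_group, name))
        if values is None:
            return
        values.discard(req.key)
        if not values:
            del self.api.records[(zone, self.rrset_group, name)]
```

The design point worth copying is that cleanup removes only its own value: the apex and wildcard challenges for example.com target the same record name, so deleting the whole record could break whichever challenge is still pending.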
Step 1: Prepare F5 XC Credentials

The webhook supports two authentication methods against the F5 XC DNS API: an API Token or a Client Certificate (P12). Both are stored as a Kubernetes Secret in the cert-manager namespace.

Obtaining credentials in F5 XC Console

Regardless of which method you choose, the service account must have sufficient permissions to manage DNS records. Follow the least-privilege principle:

1. In the F5 XC Console, navigate to Account Settings → Administration.
2. Create a dedicated service credential under IAM and assign it the f5xc-dns-management-admin role in the system namespace. As of the time of writing, this is the minimum required role; it grants access to DNS Zone Management without unnecessary privileges elsewhere in the tenant. Alternatively, use an existing account's privileges under Personal Management credentials.
3. Generate credentials of your preferred type (API Token or API Certificate).

Option A: API Token (simpler, recommended for most setups)

Take the API Token obtained from the F5 XC Console and store it with the following command:

kubectl create secret generic f5xc-api-token \
  --namespace cert-manager \
  --from-literal=token=<YOUR_F5XC_API_TOKEN>

Option B: Client Certificate (P12)

F5 XC also supports certificate-based authentication using a P12 (PKCS#12) client certificate, which may be preferred in some environments. Use the certificate and password generated in the previous step and store them as a Secret:

kubectl create secret generic f5xc-client-cert \
  --namespace cert-manager \
  --from-file=certificate.p12=<PATH_TO_YOUR.p12> \
  --from-literal=password=<P12_PASSWORD>

Refer to the webhook documentation for the exact certificateSecretRef fields to use in the solver config when choosing this method.

Verify the secret was created (use f5xc-client-cert instead if you chose Option B):

kubectl get secret f5xc-api-token -n cert-manager

Step 2: Install the Webhook via Helm

The chart is published as an OCI artifact on GitHub Container Registry.
Install it into the cert-manager namespace:

helm install cert-manager-webhook-f5xc \
  oci://ghcr.io/wenkow/charts/cert-manager-webhook-f5xc \
  --namespace cert-manager

Check the rollout:

kubectl rollout status \
  deployment cert-manager-webhook-f5xc \
  -n cert-manager
kubectl get pods -n cert-manager

Step 3: Configure a ClusterIssuer

A ClusterIssuer tells cert-manager which ACME server to use and how to solve challenges. Here we're pointing it at Let's Encrypt production and configuring the F5 XC DNS-01 solver.

Note on groupName: The field appears twice in the YAML below and serves different purposes. At the webhook level, it's a fixed identifier (acme.f5xc.io) that tells cert-manager which registered webhook to call. Inside config, it's the RRSet group name within your F5 XC DNS zone, a logical container for DNS records created by the webhook. You can choose any name; F5 XC will create the group if it doesn't exist.

clusterissuer.yaml:

apiVersion: cert-manager.io/v1
kind: ClusterIssuer
metadata:
  name: letsencrypt-f5xc-prod
spec:
  acme:
    email: admin@example.com
    server: https://acme-v02.api.letsencrypt.org/directory
    privateKeySecretRef:
      name: letsencrypt-f5xc-prod-account-key
    solvers:
      - dns01:
          webhook:
            # Fixed identifier: tells cert-manager which webhook to call
            groupName: acme.f5xc.io
            solverName: f5xc
            config:
              # Your F5 XC tenant name (subdomain part of your console URL)
              tenantName: my-tenant
              # RRSet group name in F5 XC DNS zone
              groupName: "cert-manager"
              # ttl: 120  # optional, default is 120 seconds
              apiTokenSecretRef:
                name: f5xc-api-token
                key: token
              # If using certificate-based auth instead of a token, replace
              # apiTokenSecretRef with certificateSecretRef (see webhook docs).

Apply it:

kubectl apply -f clusterissuer.yaml

Verify it's ready:

kubectl get clusterissuer letsencrypt-f5xc-prod

Step 4: Request a Certificate

Now the fun part. Create a Certificate resource.
Note that we're requesting both the apex domain and a wildcard, something that's only possible with DNS-01.

certificate.yaml:

apiVersion: cert-manager.io/v1
kind: Certificate
metadata:
  name: example-tls
  namespace: default
spec:
  secretName: example-tls
  issuerRef:
    name: letsencrypt-f5xc-prod
    kind: ClusterIssuer
  dnsNames:
    - example.com
    - "*.example.com"

Apply it:

kubectl apply -f certificate.yaml

Step 5: Watch the Certificate Lifecycle

This is where it gets satisfying to watch. cert-manager creates an Order, which spawns one or more Challenge objects. Each Challenge triggers the F5 XC webhook to create a DNS TXT record.

Watch Certificate status

kubectl get certificate example-tls -n default -w

Inspect the Order (replace the Order name with the one listed by the first command)

kubectl get orders -n default
kubectl describe order example-tls-1-3552197254 -n default

Status:
  Authorizations:
    Challenges:
      Token:  <token>
      Type:   dns-01
      URL:    https://acme-v02.api.letsencrypt.org/acme/chall/...
    Identifier:     example.com
    Initial State:  valid
    URL:            https://acme-v02.api.letsencrypt.org/acme/authz/...
    Wildcard:       true
    Challenges:
      Token:  <token>
      Type:   dns-01
      URL:    https://acme-v02.api.letsencrypt.org/acme/chall/...
    Identifier:     example.com
    Initial State:  valid
    URL:            https://acme-v02.api.letsencrypt.org/acme/authz/...
    Wildcard:       false
  Certificate:   REDACTED
  Finalize URL:  https://acme-v02.api.letsencrypt.org/acme/finalize/...
  State:         valid
  URL:           https://acme-v02.api.letsencrypt.org/acme/order/...
Events:
  Type    Reason    Age    From                 Message
  ----    ------    ----   ----                 -------
  Normal  Complete  8m37s  cert-manager-orders  Order completed successfully

Inspect the Challenges (they are removed once the Order completes, so look while it is still in progress)

kubectl get challenges -n default
kubectl describe challenge example-tls-<1234567890> -n default

Verify the Issued Certificate

kubectl get secret example-tls -n default -o jsonpath='{.data.tls\.crt}' \
  | base64 -d | openssl x509 -noout -text | grep -E "Subject:|DNS:|Not After"

            Not After : Aug 19 19:22:31 2026 GMT
        Subject: CN = example.com
                DNS:*.example.com, DNS:example.com

Both the apex domain and the wildcard are covered by a single certificate.

Using a Custom or Internal ACME Server

One of the most powerful aspects of this setup is that the ACME server is completely configurable. If you run an internal CA, for example HashiCorp Vault's PKI secrets engine with ACME enabled, or Step CA, just change the server field in your ClusterIssuer:

spec:
  acme:
    email: admin@internal.example.com
    server: https://vault.internal.example.com/v1/pki/acme/directory
    # or
    # server: https://step-ca.internal.example.com/acme/acme/directory

The webhook doesn't care which ACME server you use; it only handles the DNS side of the challenge. This makes the setup equally useful for:

- Internet-facing services using Let's Encrypt
- Internal services using a corporate CA
- Mixed environments where different ClusterIssuer objects point to different CAs, all sharing the same F5 XC DNS solver

Troubleshooting Tips

Challenge stays in pending for a long time
Check the webhook logs for API errors:

kubectl logs -n cert-manager -l app=cert-manager-webhook-f5xc --tail=50

READY: False on ClusterIssuer
This usually means cert-manager couldn't register an ACME account. Check that the email field is valid and the ACME server URL is reachable.

TXT record not appearing in F5 XC
Verify that the credentials you stored in the Secret have the right DNS permissions.
In F5 XC Console, check that the service account has the f5xc-dns-management-admin role (or equivalent). API token permission issues will typically surface as 403 Forbidden errors in the webhook logs.

Summary

The cert-manager-webhook-f5xc project closes the loop between F5 Distributed Cloud DNS and the Kubernetes-native certificate management ecosystem. With a few manifests and a Helm install, you get:

- Fully automated certificate issuance and renewal: no manual interventions, no expiry surprises
- Wildcard certificate support out of the box via DNS-01
- ACME provider flexibility: works with Let's Encrypt, commercial CAs, or your internal PKI
- Clean Kubernetes-native UX: certificates are just resources, and the entire lifecycle is observable with standard kubectl commands

The webhook is open source, available on GitHub and packaged on Artifact Hub. Contributions and feedback are welcome.

Related Resources

- cert-manager documentation
- cert-manager DNS01 webhook reference
- F5 Distributed Cloud DNS Management docs
- GitHub: Wenkow/cert-manager-webhook-f5xc
- Artifact Hub: cert-manager-webhook-f5xc

SSL Virtual Server to Azure blob storage account

We have a requirement to use F5 as the frontend for Azure storage accounts hosting blob file containers. The SFTP virtual servers work without issue; however, the HTTPS ones do not. I have tried both Standard and Performance (Layer 4) virtual servers, but I see connection errors when I try to connect through the F5. When we do this with App Services, we have to use custom domains and upload the certificate, but storage accounts don't have that option. Has anyone been able to get this working who can give me some pointers on what I might be doing wrong?

Thanks,

Deploying an NGINX App across Kubernetes Multi-clusters with F5 BIG-IP Container Ingress Services

This tutorial simulates orchestrating multiple clusters using a single Kubernetes control plane with separate kubeconfig contexts. The same F5 CIS configuration patterns apply to genuinely separate Kubernetes clusters across different networks, cloud regions, or data centers. The simulation approach allows configuration testing without requiring multiple physical or cloud infrastructure environments.

Customer Edge as a Fallback for F5 Distributed Cloud Regional Edge

Introduction

F5 Distributed Cloud Regional Edges (REs) form the backbone of F5's globally distributed application delivery network. These F5-managed Points of Presence handle load balancing, Web Application Firewall (WAF) enforcement, bot protection, and API security for thousands of organizations worldwide.

While F5 Regional Edges are engineered for extreme resilience, with built-in redundancy, geographic distribution, and continuous monitoring, no infrastructure is entirely immune to disruption. A defense-in-depth strategy demands that organizations consider even low-probability scenarios.

This article explores how F5 Distributed Cloud Customer Edge (CE) nodes can serve as a fallback data path in the unlikely event of a Regional Edge outage, leveraging a capability that many organizations already have deployed but may not have considered for this purpose.

Understanding the RE and CE Data Paths

Regional Edge: The Default Path

In a typical F5 Distributed Cloud deployment, application traffic follows this path:

1. The client resolves the application's FQDN via DNS
2. DNS returns an F5 Regional Edge Anycast IP address
3. Internet BGP routing directs the client to the nearest RE advertising that IP
4. The RE terminates the connection and applies load balancing and security policies
5. The RE forwards traffic to origin servers (directly or via CE nodes)

The key mechanism here is IP Anycast. F5 Regional Edges advertise the same IP address from multiple Points of Presence worldwide via BGP. When a client sends traffic to that IP, the internet's BGP routing typically prefers the shortest AS path, effectively directing the client to the topologically nearest RE (often, but not always, the geographically closest one).

This means the DNS itself does not perform geographic selection. Every client worldwide receives the same IP address in the DNS response. It is the underlying BGP routing on the internet that ensures each client reaches its nearest RE.
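A toy model can show why every client receives the same IP yet lands on different PoPs. The Python sketch below is a deliberately simplified version of BGP best-path selection (highest local preference, then shortest AS path; MED, origin, IGP cost and the other tie-breakers are ignored). The PoP names and AS numbers are invented for illustration and taken from the documentation and private-use ranges.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Route:
    pop: str               # PoP originating the anycast prefix (illustrative name)
    as_path: tuple         # AS numbers the announcement traversed
    local_pref: int = 100  # BGP default local preference


def best_route(routes):
    """Simplified BGP decision: highest local_pref, then shortest AS path.

    Remaining ties are broken by list order (real BGP has more tie-breakers).
    """
    return min(routes, key=lambda r: (-r.local_pref, len(r.as_path)))


# The same anycast prefix as seen from two different client networks.
seen_from_client_a = [
    Route("pop-frankfurt", (64500, 64510)),
    Route("pop-newyork", (64501, 64520, 64510)),
]
seen_from_client_b = [
    Route("pop-frankfurt", (64502, 64530, 64510)),
    Route("pop-newyork", (64503, 64510)),
]

print(best_route(seen_from_client_a).pop)  # pop-frankfurt
print(best_route(seen_from_client_b).pop)  # pop-newyork
```

If a PoP stops announcing the prefix, its Route simply disappears from the candidate list and the next-best PoP wins, which is the reconvergence behavior compared with GeoDNS below.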
This Anycast model is fundamentally different from GeoDNS-based approaches, where different IPs are returned depending on the client's location. Anycast routing typically delivers lower and more consistent latency than GeoDNS for several reasons:

- BGP routes on network topology, not geographic approximation. BGP is a path-vector protocol that selects routes based on AS path length, local preference, and policy attributes, not latency or congestion. In practice, however, routing to the topologically nearest PoP (fewest AS hops) generally correlates with reasonable latency, even if it is not optimized for it.
- GeoIP databases are approximations. GeoDNS relies on commercial GeoIP databases to map IP addresses to locations, and these databases can be inaccurate or outdated. BGP doesn't rely on IP-to-location mapping; it routes based on actual network reachability.
- BGP adapts in real time. If a link fails or a PoP goes offline, BGP reconverges and traffic shifts to the next-best path automatically, often within seconds. GeoDNS is static until the DNS TTL expires; during that window, clients may continue hitting a degraded or unreachable endpoint.

Regional Edges operate as a fully managed SaaS infrastructure. Organizations benefit from F5's global Anycast network without deploying or maintaining edge nodes themselves.

Customer Edge: The On-Premises Path

Customer Edge nodes, typically deployed in on-premises data centers or customer cloud environments, can provide the same load balancing and WAF capabilities as Regional Edges. This is a critical but often underappreciated architectural property of the F5 Distributed Cloud platform.
When a load balancer is configured in the F5 Distributed Cloud Console, it can be advertised on:

- Regional Edges only: the default for internet-facing applications
- Customer Edges only: common for internal applications
- Both Regional Edges and Customer Edges: the key to the fallback strategy described in this article

The Shared Configuration Principle

A single load balancer object in the F5 Distributed Cloud Console, with its full configuration including WAF policies, routes, rate limiting, and origin pools, can be advertised simultaneously on REs and CEs. There is no need to duplicate or maintain separate configurations.

Aspect | Regional Edge | Customer Edge
Load Balancing | ✓ Same configuration | ✓ Same configuration
WAF / App Firewall | ✓ Same policies | ✓ Same policies
Routes and Rewrites | ✓ Same rules | ✓ Same rules
Origin Pool Selection | ✓ Same pools | ✓ Same pools
Bot Defense | ✓ | ✓
API Protection | ✓ | ✓
Managed by | F5 | Customer

Key Insight: This configuration parity is the foundation of the fallback strategy. The same security posture is enforced regardless of whether traffic enters through an RE or a CE.

The Fallback Strategy: DNS-Based Traffic Switching

How It Works

The failover mechanism from Regional Edges to Customer Edges relies on a straightforward DNS change. Under normal operation, the application's DNS record points to the RE-assigned IP addresses. In a fallback scenario, the DNS record is updated to point to the public IP addresses of the Customer Edge nodes.
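When executing or validating the DNS switch, it helps to check which path a given resolver is actually handing out. The Python sketch below assumes you maintain the sets of RE Anycast and CE public addresses yourself; the addresses shown are placeholders from the documentation ranges, and `resolve` only reflects the view of the resolver on the machine running it.

```python
import socket


def resolve(hostname: str, port: int = 443) -> set:
    """Return the set of IP addresses the local resolver returns for hostname."""
    infos = socket.getaddrinfo(hostname, port, proto=socket.IPPROTO_TCP)
    return {info[4][0] for info in infos}


def classify(addresses: set, re_ips: set, ce_ips: set) -> str:
    """Label a DNS answer as 'RE', 'CE', 'mixed', or 'unknown'."""
    on_re = bool(addresses & re_ips)
    on_ce = bool(addresses & ce_ips)
    if on_re and on_ce:
        return "mixed"
    if on_re:
        return "RE"
    if on_ce:
        return "CE"
    return "unknown"


if __name__ == "__main__":
    # Placeholder addresses (documentation ranges); substitute your real ones.
    RE_IPS = {"192.0.2.10"}
    CE_IPS = {"203.0.113.10", "203.0.113.11"}
    # In practice: answer = resolve("app.example.com")
    answer = {"203.0.113.10"}
    print(classify(answer, RE_IPS, CE_IPS))  # CE
```

Running the check from several networks, or against several public resolvers, during a switch makes the gradual, TTL-governed migration visible rather than suggesting a single instantaneous cutover.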
Normal Operation:
app.example.com → RE Anycast IP (e.g., 5.x.x.x, same IP globally)
  → BGP routing directs client to nearest Regional Edge
  → RE applies LB + WAF policies
  → RE forwards to origin

Fallback Operation:
app.example.com → CE Public IP (e.g., 203.0.113.10, standard unicast)
  → Client connects directly to Customer Edge (no Anycast routing)
  → CE applies the SAME LB + WAF policies
  → CE forwards to origin

The application experience remains identical from the client's perspective in terms of security and functionality. The same policies are enforced, the same load balancing decisions are made, and the same origins are reached. The trade-off is that clients lose the Anycast proximity benefit: all traffic converges on the CE location(s) rather than being distributed to the nearest PoP.

Prerequisites

Before this strategy can be activated, several elements must be in place:

- The load balancer must be advertised on both REs and CEs. This is configured in the F5 Distributed Cloud Console under the load balancer's VIP advertisement settings. The CE advertisement must be active before an incident; configuring it during an outage adds delay and risk. The “Virtual Network” type with value “ves-io-shared/public” is the equivalent of the “Internet” VIP advertisement.
- CE nodes must have public IP reachability. The CE's outside interface (or a NAT/firewall in front of it) must be reachable from the internet on the required ports (typically 443 and/or 80). This may require firewall rules, NAT configurations, or public IP assignments that should be validated in advance.
- CE nodes must have sufficient capacity for degraded-mode operation. Under normal operation, REs handle the full internet-facing traffic load. CEs used as fallback must be sized to sustain this traffic temporarily, long enough to bridge an RE outage, not to replace RE infrastructure indefinitely. This includes compute resources (CPU, memory), network bandwidth at the CE site, and upstream internet connectivity.
- DNS records must be prepared. The target CE IP addresses should be documented and ideally pre-staged in DNS management tooling so that the switchover can be executed rapidly.

Important: All prerequisites must be validated before an incident occurs. A fallback strategy that hasn't been tested is not a fallback strategy.

DNS TTL: The Critical Factor

Why TTL Matters

The speed of a DNS-based failover is fundamentally governed by the Time-To-Live (TTL) value set on the application's DNS records.
TTL determines how long DNS resolvers and clients cache a DNS response before querying again.

TTL Value | Effective Switchover Time | Trade-off
3600 (1 hour) | Up to 1 hour | Minimal DNS query load, slow failover
300 (5 minutes) | Up to 5 minutes | Moderate query load, reasonable failover
60 (1 minute) | Up to 1 minute | Higher query load, fast failover
30 (30 seconds) | Up to 30 seconds | Significant query load, near-instant failover

Critical: TTL must be set before an incident occurs. Lowering TTL during an outage has no effect on records already cached by resolvers worldwide. The old, higher TTL continues to govern those cached entries until they expire naturally.

Recommended Approach

For organizations that consider CE fallbacks as part of their resilience strategy:

- Proactive TTL reduction: Set DNS TTL to 60–300 seconds on records that may need failover. This should be the steady-state TTL, not an emergency change.
- Pre-incident preparation: Ensure the DNS change procedure is documented, tested, and can be executed by on-call staff within minutes.
- Automated failover (advanced): Integrate health checks on RE endpoints with DNS automation (via API calls to your DNS provider) to trigger the switch automatically.

The TTL Reality Check

Even with low TTL values, some clients and intermediate resolvers do not strictly honor TTL:

- Stub resolvers on end-user devices may cache beyond the stated TTL
- Some enterprise DNS resolvers impose minimum TTL floors (commonly 30–60 seconds)
- Browser DNS caches may hold entries independently of the OS resolver
- Connection keep-alive means existing TCP/TLS sessions continue to the old IP even after DNS changes

Organizations should expect a gradual migration of traffic rather than an instantaneous cutover, even with aggressive TTL values. Plan for a transition window of several minutes during which traffic flows to both the old and new endpoints.
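The "Automated failover (advanced)" recommendation can be sketched as a small control loop. The Python below is a minimal illustration under stated assumptions: the health probe is a plain TCP connect to an RE address on port 443 (a production check would more likely be an HTTPS request to a health endpoint), and switching requires several consecutive failures so that one dropped probe does not flip DNS. The actual record change is delegated to a hypothetical `update_dns` callback, because it depends entirely on your DNS provider's API.

```python
import socket


def tcp_probe(host: str, port: int = 443, timeout: float = 3.0) -> bool:
    """Return True if a TCP connection to host:port succeeds within timeout."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        return False


class FailoverController:
    """Choose between RE and CE addresses with hysteresis.

    fail_threshold consecutive failed probes switch to CE; recover_threshold
    consecutive successes switch back to RE. update_dns is a hypothetical
    callback that publishes the chosen address list via your DNS provider.
    """

    def __init__(self, re_ips, ce_ips, update_dns, fail_threshold=3, recover_threshold=5):
        self.re_ips, self.ce_ips = list(re_ips), list(ce_ips)
        self.update_dns = update_dns
        self.fail_threshold = fail_threshold
        self.recover_threshold = recover_threshold
        self.mode = "RE"
        self.failures = 0
        self.successes = 0

    def observe(self, re_healthy: bool) -> str:
        """Feed one probe result and return the current mode ('RE' or 'CE')."""
        if re_healthy:
            self.successes += 1
            self.failures = 0
        else:
            self.failures += 1
            self.successes = 0
        if self.mode == "RE" and self.failures >= self.fail_threshold:
            self.mode = "CE"
            self.update_dns(self.ce_ips)
        elif self.mode == "CE" and self.successes >= self.recover_threshold:
            self.mode = "RE"
            self.update_dns(self.re_ips)
        return self.mode
```

A scheduler would call `controller.observe(tcp_probe(re_ip))` every few seconds. Whatever the controller decides, resolvers keep serving the previous answer for up to the TTL that was in force, so the probe interval and thresholds add to the TTL-governed switchover time rather than replace it. Teams that prefer a manual return to the RE path can set `recover_threshold` very high and fail back by hand.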
Operational Caveats and Considerations

Capacity Planning

Under normal operations, Regional Edges absorb the full internet-facing traffic load across F5's globally distributed infrastructure. Switching to Customer Edges concentrates this traffic onto a much smaller set of nodes, typically in one or two locations.

Factor | Regional Edges | Customer Edges (Fallback)
Geographic distribution | Global | Limited (customer sites)
Bandwidth | F5 backbone | Customer uplink
DDoS absorption | F5 scrubbing capacity | Customer/ISP capacity
Horizontal scale | Elastic | Fixed (pre-provisioned)

Recommendation: Conduct periodic load testing against CE nodes to validate that they can sustain the expected RE traffic volume for a limited duration. CE infrastructure does not need to match RE capacity long-term, but it must hold up long enough to bridge an outage window without service degradation.

DDoS Protection

F5 Regional Edges benefit from F5's network-level DDoS mitigation infrastructure. When traffic is redirected to Customer Edge nodes, this protection layer is bypassed, and the CE site's internet uplink becomes the direct target for any volumetric attack.

Mitigation options:

- Ensure the CE site has upstream DDoS protection from the ISP or a dedicated scrubbing service
- Consider maintaining DDoS-protected IP transit for CE public addresses
- If using BGP-routed DDoS protection, ensure CE public prefixes are covered

Failover Workflow

When an RE outage is confirmed and the decision to fail over is made, the procedure is:

Step 1: Update DNS Records

Change the application's DNS A record (or AAAA for IPv6) from the RE IP addresses to the CE public IP addresses. If multiple CEs are available, configure multiple A records, or use the BGP/ECMP architecture described in Part One of this series to distribute traffic across CEs behind a single public IP.

Step 2: Monitor the Transition

Observe traffic shifting to CEs as DNS caches expire. Expect a gradual ramp-up over a period aligned with your DNS TTL.
Monitor CE resource utilization, error rates, and application response times.

Step 3: Restore Normal Operation

Once RE services are restored, reverse the DNS change to point back to the RE IP addresses. Again, the transition back follows the same TTL-governed timeline. Validate that traffic has fully returned to the RE path before considering any CE-specific fallback infrastructure changes.

Conclusion

F5 Distributed Cloud Customer Edges provide a credible fallback path for the unlikely scenario of a Regional Edge outage. The platform's ability to advertise a single load balancer (with identical configuration, WAF policies, and origin pools) on both REs and CEs is the enabling feature that makes this strategy viable without configuration duplication or drift.

The key success factors are preparation and proactive configuration:

- DNS TTL must be set low before an incident, not during one
- CE capacity must be sufficient to handle the traffic load in a degraded mode; this is a temporary measure, not a long-term replacement for RE infrastructure
- The full failover procedure must be tested periodically to remain viable
- Operational caveats (latency, DDoS exposure, session interruption) must be understood and accepted as part of the failover trade-off

This approach does not replace the resilience built into F5's Regional Edge infrastructure. Rather, it provides an additional layer of organizational confidence: even in a worst-case scenario, the application delivery path can be restored using infrastructure the organization already controls.

How to use F5 Distributed Cloud to block (OFAC) Sanctioned Countries

Over the last several days, the climate has changed significantly in Eastern Europe, and I have been asked a lot about whether F5 Distributed Cloud can block countries sanctioned by the Office of Foreign Assets Control (OFAC), and how to do it. The answer is simple: yes, we can, and it's a pretty simple configuration. It's also important to point out that this same process can be used to configure any other required geofencing policies. Do we also have ways to block DDoS and provide WAAP services? Also yes, but that is outside the scope of this article.

In this article, I will focus on how to deploy and create:

- [Namespace] Service Policies
- Origin Pool(s) to send traffic to
- A distributed listener / load balancer with the security policy assigned
- Viewing Security Events

Level Set

Most of this article is based around a ClickOps deployment of this use case. That said, F5 Distributed Cloud is an API-first platform, so everything can be done with your tools of choice for interacting with declarative APIs. I will include JSON manifests of the configured items as well, to show what the declarations resemble and to support direct import into Distributed Cloud. For example, you could keep the policy configuration JSON in GitHub as a source of truth and set up webhooks that push it to the platform whenever a change is made, so configurations stay aligned.

Who do we block?

Before we can start blocking things, we need to know exactly what to block, so we need a list of OFAC-sanctioned countries. There are a couple of options here as well.
- CSV straight from the source: https://home.treasury.gov/policy-issues/office-of-foreign-assets-control-sanctions-programs-and-information
- Dig through available data sources on Data.gov: https://catalog.data.gov/dataset/consolidated-non-sdn-sanctions-list
- Third-party ACL sites like Country Block List: https://www.countryipblocks.net/ofac.php (I cannot personally vouch for the accuracy of this content.)

For the sake of brevity, I will focus on top-level country sanctions based on country code rather than IP subnet, but that's up to preference.

Configuration

Log in to your F5 Distributed Cloud Console. (If you do not have a tenant today, you should reach out to your F5 Account Team immediately and rectify the situation!)

Service Policies for everyone!

There are a couple of options for service policies in the platform:

- Shared Policies: Policies created in the Shared namespace can be shared globally within your tenant. Benefit: a globally set policy baseline for all applications in your tenant.
- Namespace Policies: Policies created in a namespace can only be shared within that namespace. Benefit: namespace service policies are applied by default to all listeners / load balancers in that namespace.

For this example, we will create the policy in a namespace. From the tiles, select Load Balancers, and then make sure that we are in the proper namespace. (As with everything on the platform, there are multiple ways to get places and multiple ways to configure things, so this covers only one example.)

Now expand Security, hover over Service Policies, click Service Policies, and in the new window click Add service policy. Populate the Name under metadata, leave attachment as Any Server, and set Select Policy Rules to Denied Sources.

We could use IP blocks, but for this example we will just use the Country List configuration parameter and enter country codes. This will pull from GeoIP validation.
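The policy JSON shown later in this article encodes countries as `COUNTRY_` followed by the ISO 3166-1 alpha-2 code, so a list pulled from one of the sources above can be turned into a `deny_list` fragment with a few lines of Python. This is a sketch, not a platform tool: it only builds the `country_list`, `asn_list` and `default_action_allow` fields seen in that JSON, it does not check codes against the platform's enum, and the input codes are illustrative.

```python
import json


def to_country_enum(codes):
    """Map ISO 3166-1 alpha-2 codes (e.g. 'ru') to 'COUNTRY_RU' style values."""
    result = []
    for raw in codes:
        code = raw.strip().upper()
        if len(code) != 2 or not (code.isascii() and code.isalpha()):
            raise ValueError(f"not an alpha-2 country code: {raw!r}")
        value = f"COUNTRY_{code}"
        if value not in result:  # keep first-seen order, drop duplicates
            result.append(value)
    return result


def build_deny_list(country_codes, as_numbers=()):
    """Build a deny_list fragment shaped like the example service policy JSON."""
    deny_list = {"country_list": to_country_enum(country_codes)}
    if as_numbers:
        deny_list["asn_list"] = {"as_numbers": sorted({int(n) for n in as_numbers})}
    # Mirrors the article's choice: allow everything not on the list.
    deny_list["default_action_allow"] = {}
    return deny_list


if __name__ == "__main__":
    print(json.dumps(build_deny_list(["cu", "IR", "kp", "sy", "IR"]), indent=2))
```

Keeping the country list in a file and generating the JSON this way fits the GitHub-as-source-of-truth idea from the Level Set section.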
UPDATE: I have been getting a lot of notes on this article about Ukraine versus Crimea, and how to specify Crimea since it's not separated out as a country code. Can we allow Ukraine and block Crimea? Absolutely! For Crimea, we need to pull from another database, so for this example I am going to pull from https://bgpview.io/search/Crimea and block by ASN. Within our service policy, click Add item under BGP ASN Set. On this screen, enter all the ASNs that we discovered from the previous link, then click Apply. (Make sure to now remove Ukraine from the block list.)

Then we need to determine the Default Action. This is where you can cause some pain for yourself, so pay special attention when selecting the Default Action (“Default Action for requests from sources that do not belong to this list”). If you are using Next Policy, ensure the next policy allows traffic, or set the Default Action to Allow; otherwise the application will not receive any traffic. In this example I will only be using one policy, so I will set the Default Action to Allow (as noted in the JSON). Save and Exit.

UPDATE 2: OK, you guys are killing me! Can we go to city level, or target regions outside of ASN? Yes! Here is how you do that. (I should plan better in the future for fewer edits!)

Just to keep things clean, let's add a new Service Policy. This time, under Select Policy Rules, select Custom Rule List from the drop-down. Then click Configure under Rules, then Add Item. Give the new rule a name, and click Configure under Rule Specification. Change the drop-down under Clients - Client Selection from Any Client to Group of Clients by Label Selector. Then under Selector Expression, click Add Label. In the available selections you will find geoip.ves.io/city, country, and region (as well as some really cool IP Intelligence options, but those are out of scope for this article). Let's do a region first to see how that works.
Select geoip.ves.io/region, then =, then start typing a region, for example Crimea. Once it is set, scroll down and click Apply. Now add another rule, but this time select city. We are already blocking Russia in our earlier policy, but just as an example, add = Moscow. Scroll down and click Apply.

That's it! Now we have policies that block based on GeoIP country, region, and city, as well as BGP ASN, and we can apply all of them to our application at the same time.

Now, let's say you just want to cheat and copy and paste some JSON in, or you want to build it into a pipeline. You can check out the API specifications for Service Policy here: https://docs.cloud.f5.com/docs/api/service-policy

The JSON would resemble the following for our first policy:

```json
{
  "metadata": {
    "name": "coleman-ofac-deny",
    "namespace": "m-coleman",
    "labels": {},
    "annotations": {},
    "description": "",
    "disable": false
  },
  "spec": {
    "algo": "FIRST_MATCH",
    "any_server": {},
    "rules": [
      {
        "kind": "service_policy_rule",
        "uid": "c12f8c5d-44c2-495c-83e8-30842e9e0a7f",
        "tenant": "f5-sa-rnxeudss",
        "namespace": "m-coleman",
        "name": "ves-io-service-policy-coleman-ofac-deny-asn-list"
      },
      {
        "kind": "service_policy_rule",
        "uid": "5d24d0d0-6c6b-4c7b-b5b3-4bec67c2ffed",
        "tenant": "f5-sa-rnxeudss",
        "namespace": "m-coleman",
        "name": "ves-io-service-policy-coleman-ofac-deny-country-list"
      },
      {
        "kind": "service_policy_rule",
        "uid": "f9df0b83-21de-4c13-aebf-4e27fc580ed0",
        "tenant": "f5-sa-rnxeudss",
        "namespace": "m-coleman",
        "name": "ves-io-service-policy-coleman-ofac-deny-default-action"
      }
    ],
    "deny_list": {
      "ip_prefix_set": [],
      "asn_list": {
        "as_numbers": [204791, 205515, 208090, 28761, 41269, 43222, 43564, 49617, 59744, 8654]
      },
      "asn_set": [],
      "country_list": [
        "COUNTRY_BY", "COUNTRY_BA", "COUNTRY_BI", "COUNTRY_CF", "COUNTRY_CU",
        "COUNTRY_IR", "COUNTRY_IQ", "COUNTRY_KP", "COUNTRY_XK", "COUNTRY_LY",
        "COUNTRY_MK", "COUNTRY_SO", "COUNTRY_SD", "COUNTRY_SY", "COUNTRY_ZW",
        "COUNTRY_CD", "COUNTRY_LB", "COUNTRY_NI", "COUNTRY_RU", "COUNTRY_SS",
        "COUNTRY_VE", "COUNTRY_YE"
      ],
      "tls_fingerprint_classes": [],
      "tls_fingerprint_values": [],
      "default_action_allow": {}
    },
    "simple_rules": []
  }
}
```

Our second policy's JSON puts us over the length limit for the article, but all JSON from these configs is in the attached file.

Origin Pools

Origin Pools are where we send traffic, so if you are familiar with BIG-IP it's just the Pool, and if you are familiar with NGINX it's our Upstream. All of the settings and nerd knobs that are part of origin pools are out of scope for this article, so we are just going to point our origin at a single public IP and move on.

To create an Origin Pool, expand the Manage block, hover over Load Balancers, click Origin Pools, and then Add Origin Pool. Give the Origin Pool a Name under Metadata. Then, under Origin Servers, click Add Item so we can add an upstream server. For this example I am going to use Public DNS Name of Origin Server, but you should use whichever option best fits your situation. Click Add Item once done, which returns us to the Origin Pool config. Make sure to select the correct port and any TLS settings for your environment. Click Save and Exit.

Load Balancer / Listener

Now that we have somewhere to send traffic, we need a way to receive traffic and assign security policies. We will stay within scope for this use case as well and not cover every detail of the load balancer configuration. To create a Load Balancer, expand the Manage block, hover over Load Balancers, click HTTP Load Balancers, and then Add HTTP load balancer. Set a Name under Metadata. Under Basic Configuration, Domains, add a domain for the application. If you have delegated a DNS zone to the platform, we can automate DNS (which makes certificate management super easy, but is not required). Under Default Origin Servers, click Add Item, select the previously created pool in the Origin Pool drop-down, then click Add Item.
For the VIP configuration, we will leave it as Advertise on Internet. You will notice under Security Configuration that Service Policies is already set to Apply Namespace Service Policies. Since I have a few policies in my namespace already, and I want to demonstrate the OFAC policies, I am going to change this to Apply Specified Service Policies. Under Apply Specified Service Policies, click Configure, select the previously created policy, then click Apply. From here, click Save and Exit.

Testing

Using a VPN, we can make sure our policy is up and running. Since we did not configure a custom response page of any sort, we should just get a 403 - Forbidden page, while users accessing from non-OFAC countries will still be able to access the application.

Reporting

We should be able to access the security events now as well. Expand Virtual Hosts, HTTP Load Balancers, and then hover over the load balancer we created earlier; a Security Monitoring hyperlink will show up. Click it. In the dashboard we can see an L7 security event, and if we select Security Events, we will see that our VPN-based request was flagged (l7_policy_sec_event, Rule to match CountryList). In case it's not totally legible: I VPN'd to Belarus and tried to access the page.

Hint: Being able to use the JSON to copy policies is really great. I have used this with several customers to show how quickly we can back up and copy policies between environments in a few seconds.

That's it: your web application is now blocking traffic from OFAC-sanctioned countries, and anyone else you want to keep out.

Bridging NetOps and DevOps with BIG-IP CIS, NGINX Plus, and IngressLink

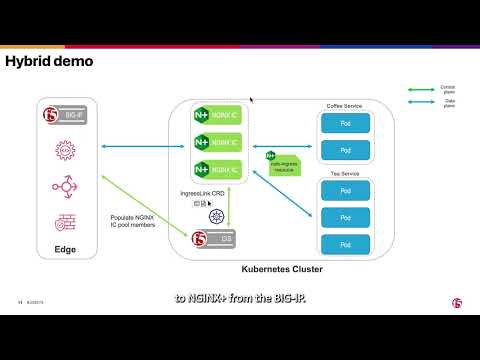

Introduction (the problem we are solving)

Most organizations running Kubernetes at scale arrive at the same impasse. NetOps owns BIG-IP as the edge load balancer, providing HA, global traffic management, WAF, and DDoS protection. DevOps owns the Kubernetes cluster and everything inside it, including the ingress controller that routes HTTP traffic to application pods. These two teams have fundamentally different tools, workflows, and blast radii.

Asking DevOps to configure BIG-IP directly creates a security and compliance problem: broad platform access for teams who shouldn't need it. Asking NetOps to track pod IPs and manually update BIG-IP pool members as pods scale is operationally untenable; pods come and go too fast for a human-driven workflow to keep up.

The usual compromises fall short:

- A single-tier NGINX-only ingress puts all L4/L7 responsibility on a component with no enterprise HA outside the cluster.
- A CIS-only approach, where CIS programs BIG-IP virtual servers pointing directly at pod IPs, gives NetOps control but removes NGINX enhancements from the equation entirely.

F5 IngressLink was designed specifically for this situation. It creates a clean two-tier architecture where BIG-IP and NGINX Plus each do what they're best at, and CIS keeps them synchronized automatically without either team crossing into the other's domain.

Architecture overview: two tiers, one control plane

Before any configuration, it's important to understand what IngressLink is and isn't. It is a Kubernetes Custom Resource Definition (CRD) that acts as the binding contract between CIS and NGINX Plus. It is not a proxy, not an additional data path component, and not a separate service. The data plane is simple: traffic flows from BIG-IP directly to NGINX Plus pods, then on to backend application pods. CIS is purely a control plane agent.
Tier 1: BIG-IP (outside the cluster, at the network edge)

BIG-IP handles everything at and below L4, from the internet to the cluster boundary:

- Enterprise-grade HA with active/standby or active/active pairs
- Global server load balancing and geographic traffic steering
- DDoS protection and rate-limiting at line rate
- TLS termination
- Health monitoring of NGINX Plus pods via the readiness port (8081)
- A stable, predictable VIP that NetOps controls and security teams can enumerate

Critically, BIG-IP has no knowledge of individual application pods. It treats NGINX Plus pods as its pool members, which change far less frequently than application pod IPs.

Tier 2: NGINX Plus (inside the cluster)

NGINX Plus runs as the in-cluster ingress controller and handles L7:

- Host- and path-based routing to backend services
- TLS termination (for split-TLS deployments)
- JWT validation, OIDC integration, and per-upstream rate-limiting
- Active health checks against backend pods, an NGINX Plus capability not available in NGINX OSS
- Dynamic upstream reconfiguration without reload when pods scale, via the NGINX Plus internal API
- NGINX App Protect WAF for in-cluster application-layer protection

NGINX Plus is configured entirely through Kubernetes-native resources: VirtualServer, VirtualServerRoute, and Policy CRDs, or standard Ingress objects with NGINX annotations. DevOps teams never touch BIG-IP.

CIS: the control plane bridge

CIS runs as a Deployment inside the cluster and watches the Kubernetes API server for IngressLink resources.
When it finds one, it:

- Discovers the NGINX Plus pods matched by the selector
- Constructs an AS3 declaration for a BIG-IP virtual server at virtualServerAddress, with a pool of NGINX Plus pod endpoints
- Posts that declaration to BIG-IP via the AS3 REST API
- Re-runs automatically whenever NGINX Plus pod membership changes (scaling, rolling updates), keeping BIG-IP in sync without human intervention

Traffic flow and request path

With everything in place, here is the complete end-to-end request path, step by step:

1. The client hits the BIG-IP VIP (10.10.10.100:443). BIG-IP selects an NGINX Plus pod and forwards the TCP stream, prepending a Proxy Protocol header containing the original client IP/port via the Proxy_Protocol_iRule.
2. NGINX Plus reads and strips the Proxy Protocol header, resolves L7 routing rules (VirtualServer or Ingress), applies WAF/JWT/OIDC policy, and forwards to the target backend Service.
3. Responses traverse the reverse path. The real client IP is preserved in NGINX access logs and X-Forwarded-For headers throughout.

TLS termination patterns

| Pattern | Where TLS terminates | When to use |
|---|---|---|
| Edge TLS at BIG-IP | BIG-IP VIP; plaintext to NGINX Plus | BIG-IP and cluster on the same trusted private network; centralized cert management on BIG-IP |
| Split TLS / passthrough | NGINX Plus; BIG-IP forwards the encrypted stream | BIG-IP–cluster segment is untrusted; per-app certs managed as Kubernetes Secrets |

How the IngressLink CRD wires it together

The IngressLink resource is small but precise. Three fields do most of the work:

```yaml
apiVersion: "cis.f5.com/v1"
kind: IngressLink
metadata:
  name: nginx-ingress
  namespace: nginx-ingress
spec:
  virtualServerAddress: "10.10.10.100"   # BIG-IP VIP, NetOps-assigned
  iRules:
    - /Common/Proxy_Protocol_iRule       # Pre-created on BIG-IP
  selector:
    matchLabels:
      app: ingresslink                   # Must match Service label below
```

virtualServerAddress: The IP CIS programs on BIG-IP as the virtual server VIP. NetOps assigns and owns this IP; DevOps never touches it.
iRules: References the Proxy Protocol iRule pre-created on BIG-IP. This is what enables BIG-IP to pass the original client IP to NGINX Plus. The iRule content is in the k8s-bigip-ctlr GitHub repo.

selector.matchLabels: Tells CIS which Service, and therefore which NGINX Plus pods, form the BIG-IP pool. This label must match the label on the NGINX Plus Service.

The NGINX Plus backing Service

```yaml
apiVersion: v1
kind: Service
metadata:
  name: nginx-ingress-ingresslink
  namespace: nginx-ingress
  labels:
    app: ingresslink          # Must match IngressLink selector
spec:
  type: ClusterIP
  selector:
    app: nginx-ingress        # Selects your NGINX Plus pods
  ports:
    - port: 80
      targetPort: 80
      name: http
    - port: 443
      targetPort: 443
      name: https
    - port: 8081
      targetPort: 8081
      name: readinessport     # BIG-IP uses this for health monitoring
```

NGINX Plus ConfigMap settings

Two settings are required in the NGINX Plus configuration (shown here as Helm values) to enable Proxy Protocol decoding and wire up IngressLink status reporting:

```yaml
controller:
  config:
    entries:
      proxy-protocol: "True"
      real-ip-header: "proxy_protocol"
      set-real-ip-from: "0.0.0.0/0"   # Scope to BIG-IP SNAT subnet in production
  reportIngressStatus:
    ingressLink: nginx-ingress        # Must match IngressLink resource name
```

CIS deployment flag

CIS must run in CRD mode. Without this flag, CIS ignores IngressLink resources entirely:

```
--custom-resource-mode=true
```

The operational model: who owns what

With IngressLink in place, team responsibilities become explicit and non-overlapping. NetOps gets what they need: a stable VIP, a BIG-IP virtual server they can inspect and audit, and health monitoring they can observe. DevOps gets full autonomy inside the cluster; they configure NGINX Plus routing without raising a change request against BIG-IP. CIS ensures these two views stay synchronized without human intervention.
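The synchronization CIS performs can be pictured as a reconcile step: find the Service whose labels match the IngressLink selector, take its ready endpoints on the serving port, and compare them with what BIG-IP currently has. The Python sketch below is a simplified, hypothetical model of that idea; the pod IPs are made up, and the class and function names are not CIS code. AS3 is declarative, so CIS posts the full desired pool rather than a diff; the add/remove lists here only show what changes on BIG-IP between two sync passes.

```python
from dataclasses import dataclass, field
from typing import Dict, Iterable, List, Set, Tuple

Endpoint = Tuple[str, int]  # (pod IP, port)


@dataclass
class Service:
    name: str
    labels: Dict[str, str]
    ready_endpoints: List[Endpoint] = field(default_factory=list)


def selector_matches(match_labels: Dict[str, str], labels: Dict[str, str]) -> bool:
    return all(labels.get(k) == v for k, v in match_labels.items())


def desired_members(match_labels: Dict[str, str], services: Iterable[Service], port: int) -> Set[Endpoint]:
    """Ready endpoints on `port` of every Service the IngressLink selector matches."""
    members: Set[Endpoint] = set()
    for svc in services:
        if selector_matches(match_labels, svc.labels):
            members.update(ep for ep in svc.ready_endpoints if ep[1] == port)
    return members


def reconcile(current: Set[Endpoint], desired: Set[Endpoint]) -> Tuple[List[Endpoint], List[Endpoint]]:
    """Return (members to add, members to remove) so BIG-IP matches the cluster."""
    return sorted(desired - current), sorted(current - desired)


svc = Service(
    "nginx-ingress-ingresslink",
    {"app": "ingresslink"},
    [("10.244.1.10", 443), ("10.244.2.11", 443), ("10.244.1.10", 8081)],
)
current = {("10.244.1.10", 443), ("10.244.3.12", 443)}  # 10.244.3.12 was scaled away
desired = desired_members({"app": "ingresslink"}, [svc], port=443)
to_add, to_remove = reconcile(current, desired)
print(to_add)     # [('10.244.2.11', 443)]
print(to_remove)  # [('10.244.3.12', 443)]
```

Keying membership off the Service label, rather than off individual pods, is what lets NGINX Plus pods scale or roll without anyone editing BIG-IP.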
When IngressLink is the right choice

Use IngressLink when:

- You have existing BIG-IP infrastructure providing edge services (HA pairs, GTM/DNS, an established WAF policy baseline) and need Kubernetes ingress to integrate with it rather than replace it.
- You have a real NetOps/DevOps team boundary and need both teams to retain their tooling and workflows.
- You need NGINX Plus capabilities inside the cluster (active health checks, dynamic upstream, App Protect WAF, JWT/OIDC) alongside BIG-IP capabilities at the edge.
- Your compliance posture requires that network-edge policy be managed separately from application-layer routing.

Prerequisites and compatibility

| Component | Minimum version |
|---|---|
| F5 BIG-IP Container Ingress Services (CIS) | v2.4+ |
| BIG-IP | v13.1+ |
| NGINX Plus Ingress Controller | v1.10+ |
| AS3 | 3.18+ |

Full configuration examples, including the CIS CRD schema install command, the Proxy Protocol iRule, and test application manifests, are in the F5Networks/k8s-bigip-ctlr GitHub repository under docs/config_examples/customResource/IngressLink/.

Related resources

- F5 IngressLink — CIS Documentation
- NGINX Ingress Controller — F5 IngressLink Integration Guide
- IngressLink config examples (GitHub)
- IngressLink on OpenShift (GitHub)
- CIS Overview — F5 CloudDocs
- F5 Container Ingress Services (CIS) deployment using Cilium CNI and static routes
- Quick Deployment: Deploy F5 CIS/F5 IngressLink in a Kubernetes cluster on AWS

F5 XC HTTP 404 route_not_found / rsp_code 404

I would like to add a few more points about the HTTP 404 error route_not_found / rsp_code 404 in an XC (RE + CE) deployment.

1. Even if XC has the correct host match value in the route, you might still observe a 404 response. In such cases, check the DNS configuration on the CEs. A possible reason is that the CEs are unable to resolve DNS for the host configured in the route.

2. Even if XC has the correct host match value, the path might not match. For example, if you have a single route as shown below and the request comes in as https://example.com/, you may see rsp_code 404, because the request does not match any route.

Example:
HTTP Method: ANY
Path Match: Prefix
Prefix: /hello
Headers: Host example.com
Origin pool: example_origin_pool

https://my.f5.com/manage/s/article/K000147490

Need BIG-IP VE Lab License for Personal Study/Learning

Hi F5 Community, I am setting up a personal home lab to learn F5 BIG-IP for certification preparation. I have deployed BIG-IP VE but need a lab license to access the management GUI. Could anyone help me get a free lab/evaluation license for personal learning purposes? Thank you.

BIG-IP Cloud-Native Network Functions 2.3: What's New in CNF and BNK

Introduction

F5 BIG-IP continues to advance BIG-IP Next for Kubernetes (BNK) and Cloud-Native Network Functions (CNFs) to meet the growing demands of service providers and modern application environments. F5 provides the full stack required to make cloud-native networking work in a service provider environment. CNFs alone are not enough; you need functions, control, infrastructure, and observability working together as one system.

What is new in BIG-IP Cloud-Native Network Functions 2.3 for BNK and CNF?

Release 2.3 adds MPLS provider edge support (early access), native UDP/TCP load balancing, DPU-accelerated data plane offload on NVIDIA BlueField, and subscriber-aware Policy Enforcement Manager (PEM) with Gx interface integration. It also introduces VRF-aware AFM policies, GSLB with Sync Groups for multi-region deployments, BBRv2 congestion control, and crash diagnostics that operate without host-level Kubernetes access. This release targets service providers and telecom operators who need cloud-native networking without sacrificing the protocol support and policy control of traditional infrastructure.

Cloud-Native Network Functions (CNFs): What Changed in 2.3?

In release 2.3, CNF capabilities focus on strengthening the underlying network functions required for service provider deployments.

How does BIG-IP CNF 2.3 handle crash diagnostics in restricted Kubernetes clusters?

Operating CNFs in production environments requires strong observability, even in restricted clusters. Release 2.3 introduces improvements to the crash agent that allow core files to be collected directly from pods without requiring host-level access. This enables deployments in more secure Kubernetes environments and simplifies troubleshooting when issues occur.

How does BIG-IP CNF 2.3 operate in multi-tenant and multi-VRF environments?

Multi-tenant environments demand precise control over traffic behavior.
In release 2.3, Advanced Firewall Manager (AFM) introduces VRF-aware ACL and NAT policies, allowing operators to apply firewall and translation rules within specific routing contexts. This enables better segmentation and supports overlapping address spaces while maintaining consistent policy enforcement. It aligns CNFs more closely with how service provider networks are designed and operated.

Can BIG-IP CNF 2.3 operate at the provider edge?

One of the most significant additions to this release is MPLS support within CNFs. This is currently an early-access feature and is expected to reach general availability in a future release. CNFs can now operate as provider edge nodes, supporting label-based forwarding and applying policies based on MPLS labels. This allows service providers to extend existing MPLS architectures into Kubernetes environments without requiring major redesigns. It also provides a path for replacing legacy systems with cloud-native alternatives while maintaining familiar networking constructs.

UDP and TCP Application Load Balancing

Release 2.3 expands BNK support for high-performance UDP and TCP application load balancing, extending traffic management capabilities beyond HTTP-based workloads. This enhancement enables support for a broader range of cloud-native applications, AI infrastructure traffic, and telco protocols that rely on Layer 4 traffic patterns. Traffic can be intelligently balanced across services both inside and outside the Kubernetes cluster, which is critical for hybrid deployments and incremental modernization efforts.

BNK deployed on NVIDIA DPUs has supported hardware offload capabilities since the Limited Availability (LA) release. With Release 2.3, advanced AI traffic optimization capabilities reach General Availability (GA), including Intelligent AI Load Balancing, LLM Routing integration, Semantic Caching, and Token Governance.
These capabilities help optimize AI inference traffic, improve GPU utilization, reduce latency, and enable more intelligent traffic steering across modern AI infrastructure environments. This enhancement is especially important for service providers and large enterprises operating mixed environments where traditional applications, AI workloads, and new cloud-native services must coexist. Organizations can modernize incrementally by introducing cloud-native components without requiring immediate architectural redesigns or disrupting existing applications.

Subscriber-Aware Policy Enforcement

Subscriber awareness remains a core requirement for service providers. Release 2.3 enhances Policy Enforcement Manager (PEM) with Gx interface integration, enabling real-time policy enforcement based on subscriber data. This allows traffic to be classified and controlled dynamically, supporting use cases such as QoS enforcement, traffic shaping, and content filtering. It also enables compliance with regulatory requirements and opens new opportunities for service differentiation.

Improved Observability and Aggregated Insights

As CNFs scale, visibility becomes more complex. Earlier approaches relied on per-pod metrics, which made it difficult to build a unified view of the system. Release 2.3 enhances PEM by introducing aggregation through TODA, allowing statistics and session data to be collected and presented as a single entity. Enhancements to MRFDB and PEM reporting further improve visibility into subscriber sessions and traffic behavior, giving operators a more complete and centralized view of network activity.

Building on this foundation, release 2.3 expands PEM capabilities with subscriber-aware policy enforcement. By integrating external policy systems and classification services, CNFs can now correlate traffic with subscriber identity and apply policies dynamically.
This provides deeper insight into how individual subscribers and applications behave on the network, enabling more precise control and improved operational awareness. Additional DNS visibility enhancements, such as adding dig support to netkvest, further strengthen troubleshooting capabilities. By enabling more detailed DNS query inspection and response analysis, operators can quickly diagnose resolution issues and better understand traffic patterns tied to application behavior.

Together, these enhancements move CNFs beyond basic monitoring. They provide a richer, more contextual understanding of traffic, subscribers, and services, which simplifies operations and enables faster troubleshooting in large-scale environments.

DNS and Traffic Behavior Enhancements

Release 2.3 includes improvements that address real-world network behavior, particularly in how DNS and transport protocols operate at scale. One example is the handling of DNS requests in certain scenarios: instead of silently dropping traffic, CNFs can now return NXDOMAIN responses, preventing upstream systems from interpreting the lack of response as a service failure. This improves reliability and ensures better interoperability with external DNS resolvers in distributed environments.

In addition, support for BBRv2 congestion control improves TCP performance in challenging conditions. It provides better fairness across flows and adapts more effectively to latency and packet loss, improving the overall user experience in mobile and distributed networks.

Extending to Multi-Region Traffic Management

Release 2.3 continues to expand DNS capabilities with early access support for Global Server Load Balancing (GSLB), enabling traffic distribution across multiple locations such as data centers and cloud environments. This represents an important step toward multi-region and hybrid architectures, where applications are no longer tied to a single cluster or deployment location.
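As a rough illustration of what distributing DNS answers across locations involves, here is a toy Python sketch that hands out a healthy site's VIP, preferring one in the client's region. It is a hypothetical model, not the BIG-IP CNF GSLB algorithm: the Site fields, region names, the same-region preference rule, and the documentation-range VIPs are all assumptions made for the example.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Site:
    name: str
    vip: str
    region: str
    healthy: bool


def pick_site(client_region: str, sites: List[Site]) -> Optional[Site]:
    """Prefer a healthy site in the client's region, else any healthy site."""
    healthy = [s for s in sites if s.healthy]
    if not healthy:
        return None  # no healthy location to hand out
    same_region = [s for s in healthy if s.region == client_region]
    return (same_region or healthy)[0]


sites = [
    Site("dc-east", "192.0.2.10", "us-east", healthy=True),
    Site("dc-west", "198.51.100.10", "us-west", healthy=True),
    Site("cloud-eu", "203.0.113.10", "eu", healthy=False),
]
print(pick_site("us-west", sites).vip)  # 198.51.100.10
print(pick_site("eu", sites).vip)       # 192.0.2.10 (EU site unhealthy, falls back)
```

Health is checked before locality so that a nearby but failed site is never returned; a real GSLB adds weights, persistence, and richer topology records on top of this basic shape.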
Building on this, the introduction of GSLB Sync Groups improves how configurations are managed across distributed deployments. Within a sync group, one instance is designated as the sync agent and is responsible for propagating configuration changes to the other members. This approach ensures consistency across environments while preventing conflicting updates and reducing the risk of synchronization issues.

Release 2.3 also begins to introduce more intelligent traffic steering with topology-based load balancing. This capability allows traffic to be directed based on user-defined parameters such as location or network proximity. As a result, operators can optimize application delivery by sending users to the most appropriate endpoint, improving latency and overall service quality.

Together, these enhancements move CNFs closer to providing a fully cloud-native, globally distributed traffic management solution that aligns with modern application deployment patterns.

What is new in BNK 2.3 for AI use cases?

BNK 2.3.0 hardens and productizes key AI traffic optimization capabilities previously delivered through Early Access or iRules-based implementations, bringing them into native code for production-scale AI deployments while further expanding support for next-generation accelerated AI infrastructure.

AI Traffic Optimization:

- Semantic AI Model Caching (GA): Reduces redundant GPU compute for similar or repeated prompts, improving token economics while lowering latency and increasing GPU efficiency for inference workloads.
- Intelligent AI Load Balancing (GA): Dynamically distributes AI inference traffic using real-time telemetry and infrastructure awareness to optimize GPU utilization, reduce queue bottlenecks, and improve response times.
- LLM Routing Integration (GA): Enables intelligent routing of requests across different LLMs and AI services based on policies such as cost, performance, model specialization, or operational requirements.
- Token Governance (GA): Provides visibility and control over token consumption with capabilities such as token monitoring, accounting, and rate limiting to help customers better manage AI infrastructure costs and tenant usage policies.

AI Hardware Support Expansion:

- ConnectX-8 SuperNIC Support on x86 systems (GA): Expands BNK's accelerated infrastructure support to next-generation NVIDIA networking platforms, enabling customers who are not yet ready to adopt DPUs to further optimize CPU efficiency and AI traffic processing performance.

Besides CX-8, BNK 2.3.0 also supports CX-7 and BlueField-3 running in SuperNIC mode.

BNK for Telco and Modern Applications

Modern environments rarely consist of purely cloud-native applications. Most organizations are running a mix of legacy protocols, telco workloads, and newer microservices. Release 2.3 addresses this reality directly.

One of the most important additions to this release is TCP and UDP load balancing. This extends BNK beyond HTTP-based traffic management and enables support for telco protocols and other non-HTTP workloads. It also allows traffic to be balanced both inside and outside the Kubernetes cluster, which is critical for hybrid architectures and phased migrations.

This capability reflects a broader shift in BNK. It is no longer just an ingress layer; it is evolving into a unified traffic management platform that can handle diverse protocols and application types without forcing architectural changes. For service providers, this means they can modernize incrementally: existing applications can continue to operate while new components are introduced in Kubernetes. For enterprise environments, it provides a consistent way to manage traffic across distributed services without introducing additional tools or complexity.

What's new in BNK 2.3 for modern apps & telcos?
Multi-Tenant Debugging (RBAC-secured Debug API)

We added a new Debug API that uses Kubernetes RBAC (Role-Based Access Control) to allow running debug commands according to defined permissions. The administrator can assign roles (Administrator, Operator, Tenant) to users. A user's role determines whether they may run debug commands, and the roles separate namespace tenants by limiting each user's access to their own namespace.

Grafana Sample Dashboard Template

We created a detailed instruction document that describes the setup of Prometheus and Grafana for multi-tenancy support. With it, customers can configure their own dashboards to display tenant statistics, separated so that each namespace tenant can view only its own statistics. Customers can build their own dashboards based on the samples.

Gateway API Conformance Improvements

BNK's development has so far focused more on adding functionality that extends the Gateway API than on conformance with existing use cases.

Gateway API Security Enhancement (Namespace-based FW)

When namespaces are used to isolate tenants in Kubernetes, it is valuable to be able to create namespace-based firewall rules. With this tenancy isolation, customers do not want separate tools for east-west (Kubernetes network policies) and north-south (firewall) traffic; BNK unifies their security controls.

Gateway API: Load Balancing External Resources

BNK's Gateway API routes are being extended specifically to cover load balancing to external endpoints. This can leverage either the Kubernetes concept of an "endpoint slice" or the F5 "F5pool" CRD. Because external endpoints aren't probed by Kubernetes, BIG-IP monitors are enabled for these Gateway routes.
TMOS Integration: DNS (aka GTM/GSLB)

Coordinate external DNS (GTM/GSLB) BIG-IP with BNK: publish and remove ingress VIPs as BIG-IP TMOS DNS Service WideIP pool members, with automatic WideIP pool member enable/disable based on Kubernetes service availability. This feature enables multi-tiered deployments with global service availability.

BNK Integration with OpenShift Platform

Previously, the only OpenShift-specific functionality in BNK was support for the OVN CNI (via the Multiple External Gateways (MEG) feature). With this new work, FLO will support being used via an OpenShift OperatorHub (initially a private instance, until business/partner items are worked out), and OpenShift Routes are translated to BNK Gateway objects.

Gateway API: IPAM Support (Infoblox)

Previously, we integrated the F5 IPAM controller with BNK for Gateway API. That IPAM controller can now leverage Infoblox, which is used by BNPP (and was a feature of the original IPAM controller, as leveraged by CIS).

Proxy Protocol Support

Our customer uses proxy protocol with BIG-IP to serve their applications, and to migrate to BNK they required similar support. Under the covers, this is enabled with an iRule, but that iRule is hidden from the customer.

Active-Standby Interface Bonding

Currently, having the node use LACP while applications like BNK use DPDK separately causes problems. This method allows the Linux host to control the active links, leveraging a "floating MAC address".

Limited Availability/EA: IBM Cloud ROKS Support

This initiative prioritizes support in IBM Cloud. The feature is LA in this release, and future releases will bring the use of IBM Cloud with ROKS to GA.

Early Access: BNK Support for AWS/EKS

Early access support for AWS/EKS should be used for F5-led demonstrations, not for direct-to-customer deployment. It is the first step toward public cloud support in AWS/EKS.
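The TMOS DNS integration described above boils down to a small state mapping: an ingress VIP is an enabled WideIP pool member while its Kubernetes service is available, disabled when it is not, and removed when the VIP goes away. The Python sketch below models that mapping under stated assumptions: "available" is approximated as "at least one ready endpoint", and the function name, state strings, and documentation-range VIPs are hypothetical, not the BNK or BIG-IP DNS API.

```python
from typing import Dict


def plan_updates(previous: Dict[str, str], ready_endpoints: Dict[str, int]) -> Dict[str, str]:
    """Compute WideIP pool member changes from Kubernetes readiness.

    previous:        VIP -> state last pushed ("enabled"/"disabled")
    ready_endpoints: VIP -> number of ready endpoints behind that ingress VIP
    """
    current = {vip: ("enabled" if n > 0 else "disabled") for vip, n in ready_endpoints.items()}
    # Publish new VIPs and flip members whose availability changed.
    plan = {vip: state for vip, state in current.items() if previous.get(vip) != state}
    # Remove members whose ingress VIP no longer exists in the cluster.
    plan.update({vip: "removed" for vip in previous if vip not in current})
    return plan


previous = {"192.0.2.20": "enabled", "192.0.2.21": "enabled", "192.0.2.22": "enabled"}
ready = {"192.0.2.20": 3, "192.0.2.21": 0, "192.0.2.23": 1}
plan = plan_updates(previous, ready)
print(plan)
# {'192.0.2.21': 'disabled', '192.0.2.23': 'enabled', '192.0.2.22': 'removed'}
```

Emitting only changed members keeps the DNS tier quiet when nothing moves; 192.0.2.20 stays enabled and generates no update.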
Frequently asked questions

These questions represent the most common queries architects and operators ask when evaluating BIG-IP Cloud-Native Network Functions 2.3.

Q: What is new in BIG-IP Cloud-Native Network Functions 2.3?
A: BIG-IP CNF 2.3 adds MPLS provider edge support (early access), native UDP/TCP load balancing, DPU-accelerated data plane offload on NVIDIA BlueField, subscriber-aware PEM with Gx integration, VRF-aware AFM policies, GSLB with Sync Groups, BBRv2 congestion control, and crash diagnostics that operate without host-level Kubernetes access.

Q: What CPU savings does BNK on NVIDIA BlueField DPU deliver?
A: Validated testing (Tolly Report #226104, February 2026) showed approximately 80% host CPU reduction, 40% more output tokens per second versus HAProxy on Llama 70B, and 61% faster time to first token (TTFT). These results reflect BNK offloading data plane processing from the host CPU to the BlueField DPU, freeing host compute for application workloads.

Q: Does BIG-IP CNF 2.3 support protocols beyond HTTP and HTTPS?
A: Yes. Release 2.3 adds native UDP and TCP load balancing to both CNFs and BNK, extending traffic management beyond HTTP. This supports telco protocols such as GTP-U, Diameter, and RADIUS, with the ability to balance traffic across services inside and outside the Kubernetes cluster.

The Blind Spot in Cloud WAF Architectures: Shared IPs and the Origin Bypass Problem

Cloud WAFs are a widely adopted security control, but they carry a structural trust assumption that most operators never examine: whitelisting a vendor's IP ranges grants access not just to your WAF instance, but to every tenant on that platform. This article examines how that assumption can be exploited, why IP-based ownership validation cannot solve it in the general case, and which mitigations actually close the gap, including Zero Trust-aligned architectures such as F5 Distributed Cloud Customer Edge.

When you deploy a Cloud WAF, whether it's F5, Imperva, Cloudflare, or any similar service, you're trusting it to stand between the internet and your origin server. You point your DNS at the WAF, tighten your firewall to accept traffic only from the WAF's published IP ranges, and consider yourself protected. The traffic gets inspected, filtered, and forwarded. Job done.

Except there's a subtle but serious flaw baked into this architecture that is widely overlooked. It stems from a property that is fundamental to how Cloud WAFs work: shared egress IPs.

How Cloud WAF Proxying Actually Works

When a Cloud WAF forwards traffic to your origin, it does so from a pool of IP addresses that the vendor owns and publishes. These ranges are shared across all customers of that vendor. In fact, every major Cloud WAF provider explicitly instructs you to whitelist its entire published IP range as part of the standard onboarding process. This is not a misconfiguration on your part; it is the vendor-recommended setup.

Your firewall rule, "allow traffic from this vendor's IP ranges", doesn't mean "allow traffic from my WAF instance." It means "allow traffic from anyone who is also a customer of that vendor." That distinction matters enormously. An attacker who is also a customer of the same WAF vendor can point their own WAF configuration at your origin IP.
When they do, their traffic arrives at your server from the very IP ranges you've whitelisted. That traffic is potentially malicious and certainly not inspected by your WAF policy. Your firewall lets it through. Your WAF policy, which applies only to traffic routed through your tenant configuration, never sees it. Your origin is now reachable by anyone with an account on the same WAF platform, which for some vendors costs as little as $20 a month.

Why This Matters More Than It Seems

This isn't a theoretical edge case. The attack surface is:
Broad: every Cloud WAF customer on a given platform could potentially reach any other customer's origin.
Silent: the origin server receives the traffic without any obvious indication that it bypassed the WAF policy.
Persistent: it doesn't require exploiting a vulnerability; it exploits an intentional architectural property.

The classic goal of origin hardening is to hide your real IP and allow only WAF traffic. That goal is partially undermined the moment you realize that "WAF traffic" and "your WAF traffic" are not the same thing.

The Missing Validation: Outbound Origin Ownership

What makes this problem interesting is the asymmetry in how Cloud WAFs handle trust. On the inbound side, WAF vendors have robust validation. Before a vendor will proxy traffic for your domain, you must prove you own it. This is typically done via a DNS TXT record or an HTTP challenge, the same mechanisms used in TLS certificate issuance. No proof, no proxying.

On the outbound side, the connection from the WAF to your origin, there is essentially no equivalent validation. Any tenant can point their configuration at any IP address, and the WAF will dutifully forward traffic to it. At the network layer, the origin has no way to distinguish "traffic from my WAF tenant" from "traffic from another tenant who decided to target my server."

An obvious question is: why not apply the same ownership verification to origin IPs that vendors already apply to domains?
The answer is that IP addresses do not map cleanly to ownership the way domain names do. In practice, IPs are frequently shared, for example by multiple services behind a load balancer or on shared hosting infrastructure. In cloud environments they also rotate constantly as instances scale up and down or elastic IPs are reassigned. Unlike a domain name, an IP address is not a stable, exclusive identifier of a single operator.

That said, the picture is not entirely bleak for enterprises. Organizations that own their IP address space do have a stable and exclusive relationship with those addresses. In these cases, ownership validation is technically feasible. A vendor could verify control via a well-known URL served at that IP, or via a PTR record in the reverse DNS zone. Both require actual control of the address space. This would mirror the HTTP-01 and DNS-01 challenge models already used in certificate issuance.

For the broader market, however, IPs are leased from cloud providers and rotate frequently, so this approach does not hold. The asymmetry therefore reflects a genuine structural limitation of IP-based identity in the general case, even if partial solutions exist for enterprises with dedicated address space.

Mitigations Available Today

Since origin IP ownership validation isn't yet a standard feature across Cloud WAF platforms, the burden falls on origin operators. Several practical mitigations exist, ranging from straightforward to architecturally robust.

Shared Secret Header Authentication

The most common approach is to have the WAF inject a secret header into every forwarded request (for example, X-Origin-Token), which your origin validates on every request. Traffic missing the header, or presenting the wrong value, is rejected. Most Cloud WAF vendors support this through custom header injection rules. The weakness is operational: the secret must be kept out of logs and rotated periodically, and the scheme is only as strong as your header validation implementation.
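The following Python sketch makes the difference concrete. It is a minimal origin-side check that assumes the WAF injects the X-Origin-Token header mentioned above. The vendor egress ranges (RFC 5737 documentation prefixes), the secret value, and the function names are hypothetical placeholders, not any vendor's real configuration. It shows that an IP allowlist alone accepts another tenant's traffic from the shared pool, while the secret header check rejects it.

```python
from __future__ import annotations

import hmac
import ipaddress

# HYPOTHETICAL vendor egress ranges (RFC 5737 documentation prefixes).
# Real vendors publish their own lists; substitute those in practice.
VENDOR_EGRESS_RANGES = [
    ipaddress.ip_network("192.0.2.0/24"),
    ipaddress.ip_network("198.51.100.0/24"),
]

# HYPOTHETICAL secret configured in *your* WAF tenant's header-injection rule.
# In production, load this from a secrets store, never from source code.
ORIGIN_TOKEN = "replace-with-a-long-random-value"
TOKEN_HEADER = "x-origin-token"


def source_ip_allowed(source_ip: str) -> bool:
    """Network-layer check: is the connection from the vendor's shared pool?"""
    addr = ipaddress.ip_address(source_ip)
    return any(addr in net for net in VENDOR_EGRESS_RANGES)


def origin_token_valid(headers: dict[str, str]) -> bool:
    """Application-layer check: did the request pass through *our* tenant?"""
    # HTTP header names are case-insensitive, so normalise before lookup.
    normalised = {name.lower(): value for name, value in headers.items()}
    presented = normalised.get(TOKEN_HEADER, "")
    # compare_digest avoids leaking the secret through timing differences.
    return hmac.compare_digest(presented.encode(), ORIGIN_TOKEN.encode())


def accept_request(source_ip: str, headers: dict[str, str]) -> bool:
    """Accept only if both the IP allowlist and the secret header check pass."""
    return source_ip_allowed(source_ip) and origin_token_valid(headers)


if __name__ == "__main__":
    # Our own tenant: vendor IP plus the header injected by our WAF rule.
    ours = ("192.0.2.10", {"Host": "app.example.com", "X-Origin-Token": ORIGIN_TOKEN})
    # Another tenant of the same vendor targeting our origin: same shared
    # egress range and a matching Host header, but no knowledge of our secret.
    theirs = ("192.0.2.99", {"Host": "app.example.com"})
    # Direct traffic from outside the vendor pool, even with the right token.
    direct = ("203.0.113.5", {"Host": "app.example.com", "X-Origin-Token": ORIGIN_TOKEN})

    for label, (ip, hdrs) in [("ours", ours), ("theirs", theirs), ("direct", direct)]:
        print(label, source_ip_allowed(ip), accept_request(ip, hdrs))
```

Running the script prints `ours True True`, `theirs True False`, and `direct False False`. The second tenant passes the IP allowlist but fails the header check, even though it presents the same Host header. In a real deployment this logic would typically live in web server or framework middleware rather than a standalone script.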
Mutual TLS Between WAF and Origin

Several vendors support presenting a client certificate when connecting to your origin. This allows your server to cryptographically verify that the connection came from your WAF vendor's infrastructure, not just from the right IP range. To close the shared-IP gap, though, the certificate must be specific to your tenant, for example one you upload yourself. A client certificate shared by all of the vendor's customers proves only that the connection came from the vendor, which is the same weakness as the shared IP range. mTLS is stronger than a shared secret because the private key is never sent with the request, so it cannot be accidentally leaked into a log file.

Private Tunneling (Eliminating the Public IP Entirely)

The most architecturally sound solution is to remove your origin from the public internet entirely. An outbound-only encrypted tunnel from your origin to the WAF's edge means your server never needs a publicly routable IP. There is no inbound firewall rule to configure and no IP range to whitelist. The shared-IP problem becomes irrelevant because there is no exposed surface to exploit. This approach is increasingly the recommended baseline for new deployments, not just a hardening option.

Host Header Validation at the Origin

Your origin should always reject requests whose Host header doesn't match your expected domain. However, this provides no real protection against the shared-IP bypass. An attacker can trivially configure their own WAF to set the Host header to your domain before forwarding traffic to your origin. Host header validation remains good hygiene, but it should not be counted as a mitigation for this specific threat.

A Note on F5 Distributed Cloud Customer Edge

F5 Distributed Cloud takes a different architectural approach that sidesteps this problem structurally. Rather than relying on shared cloud-based egress IPs, F5 XC allows you to deploy one or more Customer Edge (CE) nodes. These are dedicated infrastructure that runs within your environment or network perimeter. Traffic flows from the F5 global network through an encrypted tunnel directly to your CE node, rather than from a shared IP pool to a publicly exposed origin.
Because the CE node is yours, deployed in your environment and associated exclusively with your tenant, the concept of another tenant "reaching your origin from a shared IP" simply doesn't apply. The origin isn't exposed to a shared egress pool in the first place. This design is also a natural fit for Zero Trust architectures: the origin never implicitly trusts any network-level connection, and access is gated by tenant identity rather than IP address. It's an architectural answer to an architectural problem, rather than a mitigation layered on top of a fundamentally shared infrastructure model.

Conclusion

The Cloud WAF shared-IP bypass is a genuine blind spot that deserves more attention than it typically receives. The root cause is an asymmetry in how trust is established. Vendors carefully validate that you own your domain before proxying it, but apply no equivalent validation to origin IP ownership. As a result, any tenant on the same platform can route traffic to your origin.

The good news is that practical mitigations exist: mTLS, secret headers, and private tunneling cover most production scenarios. The better news is that some architectures, like F5 XC with Customer Edge, eliminate the exposure at the design level rather than patching around it. If your current posture is "I've whitelisted the WAF vendor's IP ranges," it's worth asking: which WAF customers, exactly, have you let in?

This article was written by the author and formatted with the assistance of AI.