Back to Basics: Health Monitors and Load Balancing

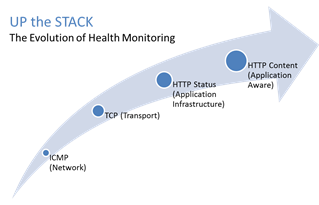

#webperf #ado Because every connection counts One of the truisms of architecting highly available systems is that you never, ever want to load balance a request to a system that is down. Therefore, some sort of health (status) monitoring is required. For applications, that means not just pinging the network interface or opening a TCP connection, it means querying the application and verifying that the response is valid. This, obviously, requires the application to respond. And respond often. Best practices suggest determining availability every 5 seconds or so. That means every X seconds the load balancing service is going to open up a connection to the application and make a request. Just like a user would do. That adds load to the application. It consumes network, transport, application and (possibly) database resources. Resources that cannot be used to service customers. While the impact on a single application may appear trivial, it's not. Remember, as load increases performance decreases. And no matter how trivial it may appear, health monitoring is adding load to what may be an already heavily loaded application. But Lori, you may be thinking, you expound on the importance of monitoring and visibility all the time! Are you saying we shouldn't be monitoring applications? Nope, not at all. Visibility is paramount, providing the actionable data necessary to enable highly dynamic, automated operations such as elasticity. Visibility through health-monitoring is a critical means of ensuring availability at both the local and global level. What we may need to do, however, is move from active to passive monitoring. PASSIVE MONITORING Passive monitoring, as the modifier suggests, is not an active process. The Load balancer does not open up connections nor query an application itself. Instead, it snoops on responses being returned to clients and from that infers the current status of the application. For example, if a request for content results in an HTTP error message, the load balancer can determine whether or not the application is available and capable of processing subsequent requests. If the load balancer is a BIG-IP, it can mark the service as "down" and invoke an active monitor to probe the application status as well as retrying the request to another available instance – insuring end-users do not see an error. Passive (inband) monitors are not binary. That is, they aren't simple "on" or "off" based on HTTP status codes. Such monitors can be configured to track the number of failures and evaluate failure rates against a configurable failure interval. When such thresholds are exceeded, the application can then be marked as "down". Passive monitors aren't restricted to availability status, either. They can also monitor for performance (response time). Failure to meet response time expectations results in a failure, and the application continues to be watched for subsequent failures. Passive monitors are, like most inline/inband technologies, transparent. They quietly monitor traffic and act upon that traffic without adding overhead to the process. Passive monitoring gives operations the visibility necessary to enable predictable performance and to meet or exceed user expectations with respect to uptime, without negatively impacting performance or capacity of the applications it is monitoring.2.8KViews1like2CommentsMonitoring Windows Services from BIG-IP

Community MVP hwidjaja dropped a bomb-sized nugget of wisdom in the forums last week that I would be remiss if I didn’t write up and share with the greater community at large. Zenoss has a WMI executable for linux called wmic, which allows you to query via wmi a windows box. So in the case for Terminal Services, you can use this tool to check if the TermService service is running, and mark the server up/down appropriately. Because and external executable with be utilized to do this, and external monitor will be necessary. Below, I’ll walk you through the steps. Note: Putting an non-F5 executable on an LTM may negate F5's ability to support the unit, and might require the non-native executables to be removed before assisting in troubleshooting efforts. Build & Transfer the wmic Executable The wmic executable isn’t native on LTM, so it is necessary to build it. I downloaded the centOS 6.4 liveCD to build a virtual machine for the wmic build. Note that you need to use the CentOS version appropriate for your version of BIG-IP. After the vm is finalized, I installed gcc and autoconf with yum and then I followed the steps here: wget http://openvas.org/download/wmi/wmi-1.3.14.tar.bz2 tar xfj wmi-1.3.14.tar.bz2 cd wmi-1.3.14 make Once the build was complete, I switched over to my desktop to pull down the executuble, then transferred it to the LTM: C:\Users\jrahm\Downloads>pscp jrahm@172.16.99.128:/home/jrahm/wmi-1.3.14/Samba/source/bin/wmic . C:\Users\jrahm\Downloads>pscp wmic root@10.10.20.5:/var/tmp/ Next, I moved to the LTM and remounted the /usr file system to read-write so I could dump the executable in /usr/local/bin/: mount –o remount,rw /dev/vg-db-had /usr cp /var/tmp/wmic /usr/local/bin/ Note: You’ll need to check your /etc/fstab for the appropriate location of the /usr file system, it varies. Testing wmic Now that I had the executable in place, I tested it from the command line (variables need for script in bold italics): wmic -U testdom/testaccount%testpasswd //192.168.22.31 "select State from Win32_Service where Name='TermService'” This resulted in the following output: CLASS: Win32_Service Name|State TermService|Running Creating the Script After a successful query via wmi to check the service and the string that shows it’s running (TermService|Running), I created a script (/usr/share/monitors/rdpcheck) for the external monitor to reference: #!/bin/bash # remove IPv6/IPv4 compatibility prefix (LTM passes addresses in IPv6 format) IP=`echo ${1} | sed 's/::ffff://'` PORT=${2} PIDFILE="/var/run/`basename ${0}`.${IP}_${PORT}.pid" # kill of the last instance of this monitor if hung and log current pid if [ -f $PIDFILE ] then kill -9 `cat $PIDFILE` > /dev/null 2>&1 fi echo "$$" > $PIDFILE rm -f $PIDFILE # send request & check for expected response wmic -U $3/$4%$5 //$IP "select State from Win32_Service where Name='TermService'" | grep -i "TermService|Running" 2>&1 > /dev/null # mark node UP if expected response was received if [ $? -eq 0 ] then echo "UP" fi exit $1 and $2 are IP and Port with external monitors, and arguments are $3 and beyond. So in this case, I needed to supply domain, username, & password. At the command line, this looked like this: [root@ephesus:Active] monitors # ./rdpcheck ::ffff:192.168.22.31 3389 testdom testaccount testpasswd UP [root@ephesus:Active] monitors # ./rdpcheck ::ffff:192.168.22.31 3389 testdom testaccount testpass NTSTATUS: NT_STATUS_ACCESS_DENIED - Access denied Deb had a tip a while back when checking external monitors to make sure to supply the ::ffff: ipv6 format with the IPs to make sure the script handles it properly. Make sure to make the script executable (chmod +x rdpcheck). Creating the Monitor Now that the script is working at the command line, it’s time to create the monitor. Via the command line with bigpipe first, followed by a screenshot of the GUI. b monitor rdp_mon '{ defaults from external interval 10 timeout 31 args "testdom testaccount testpasswd" run "/usr/share/monitors/rdpcheck" }' That’s it! Now, assign it to a pool and you’re set: 2010-12-28 16:58:00.053110: ID 53 :(_main_loop): rfd selected [ addr=::ffff:192.168.22.31:3389 srcaddr=98bd:8bbf:921e:6107:98c1:4009:4715: f08:0 fd=10 pend=0 ] 2010-12-28 16:58:00.053203: ID 53 :(_recv_external_node_ping): reading [ addr=::ffff:192.168.22.31:3389 ] 2010-12-28 16:58:00.053295: ID 53 :(_kill_external_pinger): killing [ addr=::ffff:192.168.22.31:3389 ] 2010-12-28 16:58:00.053893: ID 53 :(recv_external_node_ping): EAV success [ addr=::ffff:192.168.22.31:3389 ] Conclusion This tech tip was specifically to show support for the terminal service, but wmic could be used for any other windows service as well, or could be used to ensure a bundle of services are running before considering a server is up. Standard disclaimers apply, adding things to the file system can cause unexpected results, so test thoroughly. Also, make sure to back things up as the wmic executable won't survivehotfixes and upgrades.Thanks again to hwidjaja for the excellent resource.2.3KViews1like15CommentsPart 3: Monitoring the health of BIG-IP APM network access PPP connections with a periodic iCall handler

In this part, you monitor the health of PPP connections on the BIG-IP APM system by monitoring the frequency of a particular log message in the/var/log/apmfile. In this log file, when the system is under high CPU load, you may observe different messages indicating users' VPN connections are disconnecting. For example: You observe multiple of the following messages where the PPP tunnel is started and closed immediately: Mar 13 13:57:57 hostname.example notice tmm3[16095]: 01490505:5: /Common/accessPolicy_policy:Common:ee6e0ce7: PPP tunnel 0x5604775f7000 (ID: 2c4507ea) started. Mar 13 13:57:57 hostname.example notice tmm3[16095]: 01490505:5: /Common/accessPolicy_policy:Common:ee6e0ce7: PPP tunnel 0x560478482000 (ID: acf63e47) closed. You observe an unusually high rate of log messages indicating users' APM sessions terminated due to various reasons in a short span of time. Mar 13 14:01:10 hostname.com notice tmm3[16095]: 01490567:5: /Common/AccessPolicy_policy:Common:a7cf25d9: Session deleted due to user inactivity. You observe an unusually high rate of log messages indicating different user apm session IDs are attempting to reconnect to network access, VPN. Mar 13 12:18:13 hostname notice tmm1[16095]: 01490549:5: /Common/AP_policy:Common:2c321803: Assigned PPP Dynamic IPv4: 10.10.1.20 ID: 033d0635 Tunnel Type: VPN_TUNNELTYPE_TLS NA Resource: /Common/AP_policy_na_res Client IP: 172.2.2.2 – Reconnect In this article, you monitor theReconnectmessage in the last example because it indicates that the VPN connection terminated and the client system still wants to reconnect. When this happens for several users and repeatedly, it usually indicates that the issue is not related to the client side. The next question is, what is an unusually high rate of reconnect messages, taking into account the fact that some reconnects may be due to reasons on the client side and not due to high load on the system. You can create a periodic iCall handler to run a script once every minute. Each time the script runs, it uses grep to find the total number of Reconnect log messages that happened over the last 3 minutes for example. When the average number of entries per minute exceeds a configured threshold, the system can take appropriate action. The following describes the procedures: Creating an iCall script to monitor the rate of reconnect messages Creating a periodic iCall handler to run the iCall script once a minute Testing the implementation using logger 1. Creating an iCall script to monitor the rate of reconnect messages The example script uses the following values: Periodic handler interval 1 minute: This runs the script once a minute to grep the number of reconnect messages logged in the last 3.0 minutes. Period 3.0 minutes: The script greps for the Reconnect messages logged in the last three minutes. Critical threshold value 4: When the average number of Reconnect messages per minute exceeds four or a total of 12 in the last three minutes, the system logs a critical message in the/var/log/apmfile. Alert threshold value 7: When the average number of Reconnect messages per minute exceeds seven or a total of 21 in the last three minutes, the system logs an alert message and requires immediate action. Emergency threshold 10: When the BIG-IP system is logging 10 Reconnect messages or more every minute for the last three minutes or a total of 30 messages, the system logs an emergency message, indicating the system is unusable. You should reconfigure these values at the top of the script according to the behavior and set up of your environment. By performing the count over an interval of three minutes or longer, it reduces the possibility of high number of reconnects due to one-off spikes. Procedure Perform the following procedure to create the script to monitor CPU statistics and log an alert message in the/var/log/ltmfile when traffic exceeds a CPU threshold value. To create an iCall script, perform the following procedure: Log in to tmsh. Enter the following command to create the script in the vi editor: create sys icall script vpn_reconnect_script 3. Enter the following script into the definition stanza in the editor: This script counts the average number of entries/min the Reconnect message is logged in /var/log/apm over a period of 3 minutes. definition { #log file to grep errormsg set apm_log "/var/log/apm" #This is the string to grep for. set errormsg "Reconnect" #num of entries = grep $errormsg $apm_log | grep $hourmin | wc -l #When (num of entries < crit_threshold), no action #When (crit_threshold <= num of entries < alert_threshold), log crtitical message. #When (alert_threshold <= num of entries < emerg_threshold), log alert message. #When (emerg_threhold <= num of entries log emerg message set crit_threshold 4 set alert_threshold 7 set emerg_threshold 10 #Number of minutes to take average of. E.g. Every 1.0, 2.0, 3.0, 4.0... minutes set period 3.0 #Set this to 1 to log to /var/tmp/scriptd.out. Set to 0 to disable. set DEBUG 0 set total 0 puts "\n[clock format [clock seconds] -format "%b %e %H:%M:%S"] Running script..." for {set i 1} {$i <= $period} {incr i} { set hourmin [clock format [clock scan "-$i minute"] -format "%b %e %H:%M:"] set errorcode [catch {exec grep $errormsg $apm_log | grep $hourmin | wc -l} num_entries] if{$errorcode} { set num_entries 0 } if {$DEBUG} {puts "DEBUG: $hourmin \"$errormsg\" logged $num_entries times."} set total [expr {$total + $num_entries}] } set average [expr $total / $period] set average [format "%.1f" $average] if{$average < $crit_threshold} { if {$DEBUG} {puts "DEBUG: $hourmin \"$errormsg\" logged $average times on average. Below all threshold. No action."} exit } if{$average < $alert_threshold} { if {$DEBUG} {puts "DEBUG: $hourmin \"$errormsg\" logged $average times on average. Reached critical threshold $crit_threshold. Log Critical msg."} exec logger -p local1.crit "01490266: \"$errormsg\" logged $average times on average in last $period mins. >= critical threshold $crit_threshold." exit } if{$average < $emerg_threshold} { if {$DEBUG} {puts "DEBUG: $hourmin \"$errormsg\" logged $average times on average. Reached alert threshold $alert_threshold. Log Alert msg."} exec logger -p local1.alert "01490266: \"$errormsg\" logged $average times on average in last $period mins. >= alert threshold $alert_threshold." exit } if {$DEBUG} {puts "DEBUG: $hourmin \"$errormsg\" logged $average times on average in last $period mins. Log Emerg msg"} exec logger -p local1.emerg "01490266: \"$errormsg\" logged $average times on average in last $period mins. >= emerg threshold $emerg_threshold." exit } 4. Configure the variables in the script as needed and exit the editor by entering the following command: :wq! y 5. Run the following command to list the contents of the script: list sys icall script vpn_reconnect_script 2. Creating a periodic iCall handler to run the script In this example, you create the iCall handler to run the script once a minute. You can increase this interval to once every two minutes or longer. However, you should consider this value together with theperiodvalue of the script in the previous procedure to ensure that you're notified on any potential issues early. Procedure Perform the following procedure to create the periodic handler that runs the script once a minute. To create an iCall periodic handler, perform the following procedure: Log in to tmsh. Enter the following command to create a periodic handler: create sys icall handler periodic vpn_reconnect_handler interval 60 script vpn_reconnect_script 3. Run the following command to list the handler: list sys icall handler periodic vpn_reconnect_handler 4. You can start and stop the handler by using the following command syntax: <start|stop> sys icall handler periodic vpn_reconnect_handler 3. Testing the implementation using logger You can use theloggercommand to log test messages to the/var/log/apmfile to test your implementation. To do so, run the following command the required number of times to exceed the threshold you set: Note: The following message must contain the keyword that you are searching for in the script. In this case, the keyword is Reconnect. logger -p local1.notice "01490549:5 Assigned PPP Dynamic IPv4: 10.10.1.20 ID: 033d0635 Tunnel Type: VPN_TUNNELTYPE_TLS NA Resource: /Common/AP_policy_na_res Client IP: 172.2.2.2 - Reconnect" Follow the/var/tmp/scriptd.outand/var/log/apm file entries to verify your implementation is working correctly. Conclusion This article lists three use cases for using iCall to monitor the health of your BIG-IP APM system. The examples mainly log the appropriate messages in the log files. You can extend the examples to monitor more parameters and also perform different kinds of actions such as calling another script (Bash, Perl, and so on) to send an email notification or perform remedial action.1.3KViews1like5CommentsUsing iCall to monitor BIG-IP APM network access VPN

Introduction During peak periods, when a large number of users are connected to network access VPN, it is important to monitor your BIG-IP APM system's resource (CPU, memory, and license) usage and performance to ensure that the system is not overloaded and there is no impact on user experience. If you are a BIG-IP administrator, iCall is a tool perfectly suited to do this for you. iCall is a Tcl-based scripting framework that gives you programmability in the control plane, allowing you to script and run Tcl and TMOS Shell (tmsh) commands on your BIG-IP system based on events. For a quick introduction to iCall, refer to iCall - All New Event-Based Automation System. Overview This article is made up of three parts that describe how to use and configure iCall in the following use cases to monitor some important BIG-IP APM system statistics: Part 1: Monitoring access sessions and CCU license usage of the system using a triggered iCall handler Part 2: Monitoring the CPU usage of the system using a periodic iCall handler. Part 3: Monitoring the health of BIG-IP APM network access VPN PPP connections with a periodic iCall handler. In all three cases, the design consists of identifying a specific parameter to monitor. When the value of the parameter exceeds a configured threshold, an iCall script can perform a set of actions such as the following: Log a message to the /var/log/apm file at the appropriate severity: emerg: System is unusable alert: Action must be taken immediately crit: Critical conditions You may then have another monitoring system to pick up these messages and respond to them. Perform a remedial action to ease the load on the BIG-IP system. Run a script (Bash, Perl, Python, or Tcl) to send an email notification to the BIG-IP administrator. Run the tcpdump or qkview commands when you are troubleshooting an issue. When managing or troubleshooting iCall scripts and handler, you should take into consideration the following: You use the Tcl language in the editor in tmsh to edit the contents of scripts and handlers. For example: create sys icall script <name of script> edit sys icall script <name of script> The puts command outputs entries to the /var/tmp/scriptd.out file. For example: puts "\n[clock format [clock seconds] -format "%b %d %H:%M:%S"] Running script..." You can view the statistics for a particular handler using the following command syntax: show sys icall handler <periodic | perpetual | triggered> <name of handler> Series 1: Monitoring access sessions and CCU license usage with a triggered iCall handler You can view the number of currently active sessions and current connectivity sessions usage on your BIG-IP APM system by entering the tmsh show apm license command. You may observe an output similar to the following: -------------------------------------------- Global Access License Details: -------------------------------------------- total access sessions: 10.0M current active sessions: 0 current established sessions: 0 access sessions threshold percent: 75 total connectivity sessions: 2.5K current connectivity sessions: 0 connectivity sessions threshold percent: 75 In the first part of the series, you use iCall to monitor the number of current access sessions and CCU license usage by performing the following procedures: Modifying database DB variables to log a notification when thresholds are exceed. Configuring user_alert.conf to generate an iCall event when the system logs the notification. Creating a script to respond when the license usage reaches its threshold. Creating an iCall triggered handler to handle the event and run an iCall script Testing the implementation using logger 1. Modifying database variables to log a notification when thresholds are exceeded. The tmsh show apm license command displays the access sessions threshold percent and access sessions threshold percent values that you can configure with database variables. The default values are 75. For more information, refer to K62345825: Configuring the BIG-IP APM system to log a notification when APM sessions exceed a configured threshold. When the threshold values are exceeded, you will observe logs similar to the following in /var/log/apm: notice tmm1[<pid>]: 01490564:5: (null):Common:00000000: Global access license usage is 1900 (76%) of 2500 total. Exceeded 75% threshold of total license. notice tmm2[<pid>]: 01490565:5: 00000000: Global concurrent connectivity license usage is 393 (78%) of 500 total. Exceeded 75% threshold of total license. Procedure: Run the following commands to set the threshold to 95% for example: tmsh modify /sys db log.alertapmaccessthreshold value 95 tmsh modify /sys db log.alertapmconnectivitythreshold value 95 Whether to set the alert threshold at 90% or 95%, depends on your specific environment, specifically how fast the usage increases over a period of time. 2. Configuring user_alert.conf to generate an iCall event when the system logs the notification You can configure the /config/user_alert.conf file to run a command or script based on a syslog message. In this step, edit the user_alert.conf file with your favorite editor, so that the file contains the following stanza. alert <name> "<string in syslog to match to trigger event>" { <command to run> } For more information on configuring the /config/user_alert.conf file, refer to K14397: Running a command or custom script based on a syslog message. In particular, it is important to read the bullet points in the Description section of the article first; for example, the system may not process the user_alert.conf file after system upgrades. In addition, BIG-IP APM messages are not processed by the alertd SNMP process by default. So you will also have to perform the steps described in K51341580: Configuring the BIG-IP system to send BIG-IP APM syslog messages to the alertd process as well. Procedure: Perform the following procedure: Edit the /config/user_alert.conf file to match each error code and generate an iCall event named apm_threshold_event. Per K14397 Note: You can create two separate alerts based on both error codes or alternatively use the text description part of the log message common to both log entries to capture both in a single alert. For example "Exceeded 75% threshold of total license" # cat /config/user_alert.conf alert apm_session_threshold "01490564:" { exec command="tmsh generate sys icall event apm_threshold_event" } alert apm_ccu_threshold "01490565:" { exec command="tmsh generate sys icall event apm_threshold_event" } 2. Run the following tmsh command: edit sys syslog all-properties 3. Replace the include none line with the following: Per K51341580 include " filter f_alertd_apm { match (\": 0149[0-9a-fA-F]{4}:\"); }; log { source(s_syslog_pipe); filter(f_alertd_apm); destination(d_alertd); }; " 3. Creating a script to respond when the license usage reaches its threshold. When the apm session or CCU license usage exceeds your configured threshold, you can use a script to perform a list of tasks. For example, if you had followed the earlier steps to configure the threshold values to be 95%, you can write a script to perform the following actions: Log a syslog alert message to the /var/log/apm file. If you have another monitoring system, it can pick this up and respond as well. Optional: Run a tmsh command to modify the Access profile settings. For example, when the threshold exceeds 95%, you may want to limit users to one apm session each, decrease the apm access profile timeout or both. Changes made only affect new users. Users with existing apm sessions are not impacted. If you are making changes to the system in the script, it is advisable to run an additional tmsh command to stop the handler. When you have responded to the alert, you can manually start the handler again. Note: When automating changes to the system, it is advisable to err on the side of safety by making minimal changes each time and only when required. In this case, after the system reaches the license limit, users cannot login and you may need to take immediate action. Procedure: Perform the following procedure to create the iCall script: 1. Log in to tmsh. 2. Run the following command: create sys icall script threshold_alert_script 3. Enter the following in the editor: Note: The tmsh commands to modify the access policy settings have been deliberately commented out. Uncomment them when required. sys icall script threshold_alert_script { app-service none definition { exec logger -p local1.alert "01490266: apm license usage exceeded 95% of threshold set." #tmsh::modify apm profile access exampleNA max-concurrent-sessions 1 #tmsh::modify apm profile access exampleNA generation-action increment #tmsh::stop sys icall handler triggered threshold_alert_handler } description none events none } 4. Creating an iCall triggered handler to handle the event and run an iCall script In this step, you create a triggered iCall handler to handle the event triggered by the tmsh generate sys icall event command from the earlier step to run the script. Procedure: Perform the following: 1. Log in to tmsh. 2. Enter the following command to create the triggered handler. create sys icall handler triggered threshold_alert_handler script threshold_alert_script subscriptions add { apm_threshold_event { event-name apm_threshold_event } } Note: The event-name field must match the name of the event in the generate sys icall command in /config/user_alert.conf you configured in step 2. 3. Enter the following command to verify the configuration of the handler you created. (tmos)# list sys icall handler triggered threshold_alert_handler sys icall handler triggered threshold_alert_handler { script threshold_alert_script subscriptions { apm_threshold_event { event-name apm_threshold_event } } } 5. Testing the implementation using logger You can use theloggercommand to log test messages to the/var/log/apmfile to test your implementation. To do so, run the following command: Note: The message below must contain the keyword that you are searching for in the script. In this example, the keyword is01490564or01490565. logger -p local1.notice "01490564:5: (null):Common:00000000: Global access license usage is 1900 (76%) of 2500 total. Exceeded 75% threshold of total license." logger -p local1.notice "01490565:5: 00000000: Global concurrent connectivity license usage is 393 (78%) of 500 total. Exceeded 75% threshold of total license." Follow the /var/log/apm file to verify your implementation is working correctly.1.7KViews1like0CommentsPart 2: Monitoring the CPU usage of the BIG-IP system using a periodic iCall handler

In this part series, you monitor the CPU usage of the BIG-IP system with a periodic iCall handler. The specific CPU statistics you want to monitor can be retrieved from either Unix or tmsh commands. For example, if you want to monitor the CPU usage of the tmm process, you can monitor the values from the output of the tmsh show sys proc-info tmm.0 command. An iCall script can iterate and retrieve a list of values from the output of a tmsh command. To display the fields available from a tmsh command that you can iterate from an iCall Tcl script, run the tmsh command with thefield-fmtoption. For example: tmsh show sys proc-info tmm.0 field-fmt You can then use a periodic iCall handler which runs an iCall script periodically every interval to check the value of the output of the tmsh command. When the value exceeds a configured threshold, you can have the script perform an action; for example, an alert message can be logged to the/var/log/ltmfile. The following describes the procedures: Creating an iCall script to monitor the required CPU usage values Creating a periodic iCall handler to run the iCall script once a minute 1. Creating an iCall script to monitor the required CPU usage values There are different Unix and tmsh commands available to display CPU usage. To monitor CPU usage, this example uses the following: tmsh show sys performance system detail | grep CPU: This displays the systemCPU Utilization (%). The script monitors CPU usage from theAveragecolumn for each CPU. tmsh show sys proc-info apmd: Monitors the CPU usage System Utilization (%) Last5-minsvalue of the apmd process. tmsh show sys proc-info tmm.0: Monitors the CPU usage System Utilization (%) Last5-minsvalue of the tmm process. This is the sum of the CPU usage of all threads of thetmm.0process divided by the number of CPUs over five minutes. You can display the number of TMM processes and threads started, by running different commands. For example: pstree -a -A -l -p | grep tmm | grep -v grep grep Start /var/log/tmm.start You can also create your own script to monitor the CPU output from other commands, such astmsh show sys cpuortmsh show sys tmm-info. However, a discussion on CPU usage on the BIG-IP system is beyond the scope of this article. For more information, refer toK14358: Overview of Clustered Multiprocessing (11.3.0 and later)andK16739: Understanding 'top' output on the BIG-IP system. You need to set some of the variables in the script, specifically the threshold values:cpu_perf_threshold, tmm.0_threshold, apmd_thresholdrespectively. In this example, all the CPU threshold values are set at 80%. Note that depending on the set up in your specific environment, you have to adjust the threshold accordingly. The threshold values also depend on the action you plan to run in the script. For example, in this case, the script logs an alert message in the/var/log/ltmfile. If you plan to log an emerg message, the threshold values should be higher, for example, 95%. Procedure Perform the following procedure to create the script to monitor CPU statistics and log an alert message in the/var/log/ltmfile when traffic exceeds a CPU threshold value. To create an iCall script, perform the following procedure: Log in to tmsh. Enter the following command to create the script in the vi editor: create sys icall script cpu_script 3. Enter the following script into the definition stanza of the editor. The 3 threshold values are currently set at 80%. You can change it according to the requirements in your environment. definition { set DEBUG 0 set VERBOSE 0 #CPU threshold in % from output of tmsh show sys performance system detail set cpu_perf_threshold 80 #The name of the process from output of tmsh show sys proc-info to check. The name must match exactly. #If you would like to add another process, append the process name to the 'process' variable and add another line for threshold. #E.g. To add tmm.4, "set process apmd tmm.0 tmm.4" and add another line "set tmm.4_threshold 75" set process "apmd tmm.0" #CPU threshold in % for output of tmsh show sys proc-info set tmm.0_threshold 80 set apmd_threshold 80 puts "\n[clock format [clock seconds] -format "%b %d %H:%M:%S"] Running CPU monitoring script..." #Getting average CPU output of tmsh show sys performance set errorcode [catch {exec tmsh show sys performance system detail | grep CPU | grep -v Average | awk {{ print $1, $(NF-4), $(NF-3), $(NF-1) }}} result] if {[lindex $result 0] == "Blade"} { set blade 1 } else { set blade 0 } set result [split $result "\n"] foreach i $result { set cpu_num "[lindex $i 1] [lindex $i 2]" if {$blade} {set cpu_num "Blade $cpu_num"} set cpu_rate [lindex $i 3] if {$DEBUG} {puts "tmsh show sys performance->${cpu_num}: ${cpu_rate}%."} if {$cpu_rate > $cpu_perf_threshold} { if {$DEBUG} {puts "tmsh show sys performance->${cpu_num}: ${cpu_rate}%. Exceeded threshold ${cpu_perf_threshold}%."} exec logger -p local0.alert "\"tmsh show sys performance\"->${cpu_num}: ${cpu_rate}%. Exceeded threshold ${cpu_perf_threshold}%." } } #Getting output of tmsh show sys proc-info foreach obj [tmsh::get_status sys proc-info $process] { if {$VERBOSE} {puts $obj} set proc_name [tmsh::get_field_value $obj proc-name] set cpu [tmsh::get_field_value $obj system-usage-5mins] set pid [tmsh::get_field_value $obj pid] set proc_threshold ${proc_name}_threshold set proc_threshold [set [set proc_threshold]] if {$DEBUG} {puts "tmsh show sys proc-info-> Average CPU Utilization of $proc_name pid $pid is ${cpu}%"} if { $cpu > ${proc_threshold} } { if {$DEBUG} {puts "$proc_name process pid $pid at $cpu% cpu. Exceeded ${proc_threshold}% threshold."} exec logger -p local0.alert "\"tmsh show sys proc-info\" $proc_name process pid $pid at $cpu% cpu. Exceeded ${proc_threshold}% threshold." } } } 4. Configure the variables in the script as needed and exit the editor by entering the following command: :wq! y 5. Run the following command to list the contents of the script: list sys icall script cpu_script 2. Creating a periodic iCall handler to run the iCall script once a minute Procedure Perform the following procedure to create the periodic handler that runs the script once a minute. To create an iCall periodic handler, perform the following procedure: Log in to tmsh Enter the following command to create a periodic handler: create sys icall handler periodic cpu_handler interval 60 script cpu_script 3. Run the following command to list the handler: list sys icall handler periodic cpu_handler 4. You can start and stop the handler by using the following command syntax: <start|stop> sys icall handler periodic cpu_handler Follow the /var/tmp/scriptd.out and /var/log/ltm file entries to verify your implementation is working correctly.2.5KViews1like0CommentsTwo-Factor Authentication With Google Authenticator And LDAP

Introduction Earlier this year Google released their time-based one-time password (TOTP) solution named Google Authenticator. A TOTP is a single-use code with a finite lifetime that can be calculated by two parties (client and server) using a shared secret and a synchronized clock (see RFC 4226 for additional information). In the case of Google Authenticator, the TOTP are generated using a software (soft) token on a mobile device. Google currently offers applications for the Apple iPhone, Android-based devices, and Blackberry handsets. A user authenticating with a Google Authenticator-enabled service will require the possession of this software token. In order for the token to be effective, it must not be able to be duplicated and the shared secret should be closely guarded. Google Authenticator’s soft token solution offer a number of advantages over other commercially available solutions. It is free to use (all applications are free to download), the TOTP algorithm is open source, well-known, and well-tested, and finally it does not require a dedicated server for processing tokens. While certain potential weakness in SHA-1 have been identified, none of them can be exploited within the 30-second timeframe of the TOTP’s usability. For all intents and purposes, SHA-1 is reasonably secure, well-tested, and purpose-appropriate for this application. The algorithm however is only as secure as the users and administrators are at protecting the shared secret used in token processing. Calculating The Google Authenticator TOTP The Google Authenticator TOTP is calculated by generating an HMAC-SHA1 token, which uses a 10-byte base32-encoded shared secret as a key and Unix time (epoch) divided into a 30 second interval as inputs. The resulting 80-byte token is converted to a 40-character hexadecimal string, the least significant (last) hex digit is then used to calculate a 0-15 offset. The offset is then used to read the next 8 hex digits from the offset. The resulting 8 hex digits are then AND’d with 0x7FFFFFFF (2,147,483,647), then the modulo of the resultant integer and 1,000,000 is calculated, which produces the correct code for that 30 seconds period. Base32 encoding and decoding were covered in my previous Tech Tip titled Base32 Encoding And Decoding With iRules . The Tech Tip details the process for decoding a user’s base32-encoded key to binary as well as converting a binary key to base32. The HMAC-SHA256 token calculation iRule was originally submitted by Nat to the Codeshare on DevCentral. The iRule was slightly modified to support the SHA-1 algorithm, but is otherwise taken directly from the pseudocode outlined in RFC 2104. These two pieces of code contribute the bulk of the processing of the Google Authenticator code. The rest is done with simple bitwise and arithmetic functions. Google Authenticator Two-Factor Authentication Process Installing Google Authenticator Two-Factor Authentication The installation of Google Authenticator two-factor authentication on your BIG-IP is divided into six sections: creating an LDAP authentication configuration, configuring an LDAP (Active Directory) authentication profile, testing your authentication profile, adding the Google Authenticator iRule and “user_to_google_auth” mapping data group, attaching iRule to the authentication profile, and finally generating soft tokens for your users. The process is broken out into steps as trying to complete all the sections in tandem can be difficult to troubleshoot. Creating An LDAP (Active Directory) Authentication Configuration The LDAP profile we will configure will be extremely basic: no SSL, no Active Directory, etc. A detailed walkthrough for more advanced deployments can be found in our best practices guide: Configuring LDAP remote authentication for Active Directory . 1. Login to your BIG-IP using administrator credentials 2. Navigate to Local Traffic > Profiles > Authentication > Configurations 3. Click “Create” in the upper right-hand corner 4. Select “LDAP” from the “Type” drop-down menu 5. Now fill in the fields with your environment-specific values: Name: ldap.f5test.local Type: LDAP Remote LDAP Tree: dc=f5test, dc=local Host(s): <IP address(es) of LDAP server(s)> Service Port: 389 (default) LDAP Version: 3 (default) Bind DN: cn=ldap_bind_acct, dc=f5test, dc=local (if your LDAP server allows anonymous binds you may not need this option) Bind Password: <admin password> Confirm Bind Password: <admin password> 6. Click “Finished” to save the configuration Configuring An LDAP (Active Directory) Authentication Profile 1. Navigate to Local Traffic > Profiles > Authentication > Profiles 2. Click “Create” in the upper right-hand corner 3. Select “LDAP” from the “Type” drop-down menu 4. Fill in fields with appropriate values: Name: ldap.f5test.local Type: LDAP Configuation: ldap.f5test.local (select previously named configuration from drop-down) Rule: (leave this unchecked and not enabled for now, but this is where we will enable the Google Authenticator iRule shortly) 5. Click “Finished” Test Your Authentication Profile 1. Create a basic HTTP virtual server with your LDAP authentication profile enabled on the virtual 2. Access your virtual from a web browser and you should be prompted with an HTTP Basic Authentication credential form 3. Test with known-working credentials, if everything works you’re good to go, if not you’ll need to troubleshoot the authentication issue Adding the Google Authenticator iRule 1. Go to the DevCentral Codeshare and download the Google Authenticator iRule 2. Navigate to Local Traffic > iRules > iRule List 3. Click “Create” in the upper right-hand corner 4. Name your iRule “google_authenticator_plus_ldap_two_factor” and paste the iRule into “Definition” section 5. Click “Finished” when you’re done Attaching The Google Authenticator iRule To Your Authentication Profile 1. Go back to the “Authentication Profile” section by browsing to Local Traffic > Profiles > Authentication > Profiles 2. Select your LDAP profile from the list 3. Now attach select the “google_authenticator_plus_ldap_two_factor” iRule from the “Rule” drop-down 4. Click “Finished” Generating Software Tokens For Users In addition to the Google Authenticator iRule we also wrote a Google Authenticator Soft Token Generator iRule that will generate soft tokens for your users. The iRule can be added directly to an HTTP virtual server without a a pool and accessed directly to create tokens. There are a few available fields in the generator: account, pre-defined secret, and a QR code option. The “account” field defines how to label the soft token within the user’s mobile device and can be useful if the user has multiple soft token on the same device (I have 3 and need to label them to keep them straight). A 10-byte string can be used as a pre-defined secret for conversion to a base32-encoded key. We will advise you against using a pre-defined key because a key known to the user is something they know (as opposed to something they have) and could be potentially regenerate out-of-band thereby nullifying the benefits of two-factor authentication. Lastly, there is an option to generate a QR code by sending an HTTPS request to Google and returning the QR code as an image. While this is convenient, this could be seen as insecure since it may wind up in Google’s logs somewhere. You’ll have to decide if that is a risk you’re willing to take for the convenience it provides. Once the token has been generated, it will need to be added to a data group on the BIG-IP: 1. Navigate to Local Traffic > iRules > Data Group Lists 2. Select “Create” from the upper right-hand corner if the data group does not yet exist. If it exists, just select it from the list. 3. Name the data group “user_to_google_auth” (data group name can be changed in the RULE_INIT section of the Google Authenticator iRule) 4. The type of data group will be “string” 5. Type the “username” into the “string” field and paste the “Google Authenticator key” into the “value” field 6. Click “Add” and you the username/key pair should appear in the list as such: user := ONSWG4TFOQYTEMZU 7. Click “Finished” when all your username/key pairs have been added. Your user can scan the QR code or type it into their device manually. After they scan the QR code, the account name should appear along with the TOTP for the account. The image below is how the soft token appears in the Google Authenticator iPhone application: Once again, do not let the user leave with a copy of the plain text key. Knowing their key value will negate the value of having the token in the first place. Once the key has been added to the BIG-IP, the user’s device, and they’ve tested their access, destroy any reference to the key outside the BIG-IPs data group.If you’re worried about having the keys in plain text on the BIG-IP, they can be encrypted with AES or stored off-box in LDAP and only queried via secure connection. This is beyond the scope of this article, but doable with iRules. Testing and Troubleshooting There are a lot of moving pieces in this iRule so troubleshooting can be a bit daunting at first glance, but because all of the pieces can be separated into their constituents the problem is usually identified quickly. There are five pieces that make up this solution: the LDAP service, the BIG-IP LDAP profile, the Google Authenticator iRule, the “user_to_google_auth” mapping data group, and finally the soft token. Try to separate them from each other to expedite the troubleshooting process. Here are a few helpful hints in troubleshooting potential issues: 1. Are all the clocks synchronized? The BIG-IP and LDAP server can be tested from the command line by running ‘ntpdate –q pool.ntp.org’. If the clocks are more than a few milliseconds off, they’ll need to be adjusted. An NTP server should be configured for all devices. Likewise the user’s mobile device must be configured to use network time or else the calculated value will always be wrong. Remember that timezones do not matter when using Unix time. 2. Is basic LDAP working without the iRule attached? Before ever touching any of the Google Authenticator related iRules, data groups, devices, etc. your LDAP configuration should be in working order. If you’re having problems finding the issue, enable “debug logging” at the bottom of the LDAP authentication configuration page on your BIG-IP and tail the logs on your LDAP server. Revisit the best practices guide if you are still unsure about any configuration options. 3. Turn on (or increase) logging for Google Authenticator iRule. In the RULE_INIT section of the Google Authenticator iRule, there is a debug logging option. Set it to ‘2’ and all actions from the iRule will be logged to /var/log/ltm. If you see one particular area that is consistently hanging, investigate it further. Conclusion With every passing day system security becomes a greater concern. Today’s attacks are far more sophisticated and costly than those of days past. With all the stories of stolen laptops and other devices in the field, it is a little easier to sleep as a systems administrator knowing that a tech-aware thief has one more hurdle to surpass in an effort to compromise your infrastructure. The implementation costs of deploying two-factor authentication with Google Authenticator in an existing F5 infrastructure are very low assuming your employees have company-issued mobile devices. The cost can be deduced to the man hours required to install this iRule and generate tokens for your users. The cost is almost certainly less than that of a single incident of a compromise account. Until next time, batten down the hatches and get that two-factor project underway that’s been on the backburner for two years. Code and References Google Authenticator iRule – Documentation and code for the iRule used in this Tech Tip Google Authenticator Soft Token Generator iRule – iRule for generating soft tokens for users RFC 4226 - HOTP: An HMAC-Based One-Time Password Algorithm RFC 2104 - HMAC: Keyed-Hashing for Message Authentication RFC 4648 - The Base16, Base32, and Base64 Data Encodings SOL11072 - Configuring LDAP remote authentication for Active Directory6.9KViews1like12CommentsUnbind your LDAP servers with iRules

LDAP is one of the most widely used authentication protocols around today. There are plenty of others, but LDAP is undeniably one of the big ones. It comes as no surprise then that we often hear different questions about using F5 technology with LDAP servers on the back-end. Whether people are looking for more performance, increased reliability and availability through load-balancing, or just more flexibility, there are many things that we can do to help. A great example is improving performance. This example is driven from a client's requirements to reduce the overhead on their LDAP systems. They wanted to do so in a particular way, however. They were receiving a high-volume of very short-lived connections that all needed to query the back-end LDAP systems for information. Each one of these connections would open a new connection and as it turns out the overhead of setting up and tearing down the TCP connections was creating a fair amount of churn on their server, due to the high volume and short duration of the requests. Seeing this, they turned to their BIG-IP, looking for a solution. As luck would have it, iRules was able to step in to help them accomplish just what they were looking for. Thanks to one of the many bright engineers here at F5, Nat Thirasuttakorn, they were able to leave server-side connections to the LDAP systems open for long periods of time and just manage the handshakes on the client side, thereby greatly reducing the overhead on the LDAP servers. Below is the iRule that Nat was kind enough to share with me so I could pass it along to the DevCentral community. What it does is listen to the LDAP traffic, watching for an unbind to occur. Once the iRule sees an unbind from the client, which would normally be sent to the LDAP server terminating the connection, it simply uses the LB::detach command to detach the back-end connection at the BIG-IP, and tosses the unbind command itself so the LDAP server never sees it. This leaves the server's connection to the BIG-IP open and available for the next request that comes in. It's important to understand that the LB::detach command isn't terminating any connection, even though the name might sound like it. All it's doing is detaching the current session from the connection it's established to the server in question, allowing future sessions to make use of the connection. This will, in essence, makes it look to the LDAP server that there's a single (or several), long-lived connection being held open with many requests flowing through. What's really happening is the BIG-IP brokering requests from the client and using already established back-end connections to keep overhead down to a minimum. This is the beauty of OneConnect (which is required for this solution to work) and iRules on the BIG-IP's TMOS architecture. I don't have any performance numbers to share, but I'm willing to bet the BIG-IP is a fair amount more efficient at managing those large numbers of short-lived connections than an LDAP server is going to be. That means not only are you gaining the overhead back on your auth server, but you’re not really losing much on the BIG-IP. That makes it a win-win. Again, many thanks to Nat for sharing the below code. when CLIENT_ACCEPTED { TCP::collect } when CLIENT_DATA { binary scan [TCP::payload] xc ber_len if { $ber_len < 0 } { set ber_index [expr 2 + 128 + $ber_len] } else { set ber_index 2 } # message id binary scan [TCP::payload] @${ber_index}xcI ber_len ber_len_ext if { $ber_len < 0 } { set ext_len [expr 128 + $ber_len] set ber_len [expr ($ber_len_ext & 0xffffffff) >>(4-$ext_len)*8)] } else { set ext_len 0 } incr ber_index [expr 2 + $ext_len + $ber_len] # ldap message binary scan [TCP::payload] @${ber_index}c ber_type if { [expr $ber_type & 0x1f] == 2 } { log local0. "unbind => detach" TCP::payload replace 0 [TCP::payload length] "" LB::detach } TCP::release TCP::collect } Get the Flash Player to see this player. 20081009-ldap_no_unbind.mp31KViews1like11CommentsSelective Client Cert Authentication

SSL encryption on the web is not a new concept to the general population of the internet. Those of us that frequent many websites per week (day, hour, minute, etc.) are quite used to making use of SSL encryption for security purposes. It's an accepted standard, and we're all fairly used to dealing with it in varied capacities. Whether it's that nifty yellow URL bar in Firefox, or the security warning saying that portions of the site you're going to are unencrypted, we've likely seen it before, and are comfortable with it in day to day operation. What if, however, I wanted to get more out of my certificates? One of the more common, behind the scenes things that gets done with certificates is authentication. Via client-cert authentication users can have a "passwordless" user experience, automatic authentication into multiple apps with different access levels, and a smooth browsing experience with the applications in question. Combine these nice to have features with improved security, as it's much harder to spoof a client-cert than it is a password, and it's not surprising we're seeing a fair amount of companies putting this type of authentication into place. That's all well and good, but what if you don't want your entire site to be authenticated this way? What if you only want users trying to access certain portions of the site to be required to present a valid client-cert? What's more, what if you need to pass along some of the information from the certificate to the back end application? Extracting things like the issuer, subject and version can be necessary in some of these situations. That's a fair amount of application layer overhead to put on your application servers - inspecting every client request, determining the intended location, negotiating client-cert authentication if necessary, passing that info on, etc. etc. Wouldn't it be nice if you could not only offload all of this overhead, but the management overhead of the setup as well? As is often the case, with iRules, you can. With the below example iRule not only can you selectively require a certificate from the inbound users depending on, in this case the requested URI, but you can also extract valuable cert information from the client and insert it into HTTP headers to be passed back to the application servers for whatever processing needs they might have. This allows you to fine-tune the user experience of your application or site for those users who need access via client-cert authentication, but not affect those that don't. You can even custom define the actions for the iRule to take in the case that a user requests a URI that requires authentication, but doesn't have the appropriate cert. There is a little configuration that needs to be done, like setting up a Client SSL profile to decrypt the SSL traffic coming in, but that should be simple enough. The iRule itself is pretty straight-forward. It uses the matchclass command to compare the URI to a list of known URIs that require authentication (class not shown in the example). If it finds a match, it uses the SSL commands to check for and require a certificate. Once this is found it uses the X509 commands to poll cert information and include it in some custom HTTP headers that the back end servers can look for. when CLIENTSSL_CLIENTCERT { HTTP::release if { [SSL::cert count] < 1 } { reject } } when HTTP_REQUEST { if { [matchclass [HTTP::uri] starts_with $::requires_client_cert] } { if { [SSL::cert count] <= 0 } { HTTP::collect SSL::authenticate always SSL::authenticate depth 9 SSL::cert mode require SSL::renegotiate } } } when HTTP_REQUEST_SEND { clientside { if { [SSL::cert count] > 0 } { HTTP::header insert "X-SSL-Session-ID"[SSL::sessionid] HTTP::header insert "X-SSL-Client-Cert-Status"[X509::verify_cert_error_string [SSL::verify_result]] HTTP::header insert "X-SSL-Client-Cert-Subject"[X509::subject [SSL::cert 0]] HTTP::header insert "X-SSL-Client-Cert-Issuer"[X509::issuer [SSL::cert 0]] } } } As you can see there is a fair amount of room for further customization, as was partly mentioned above. Things like dealing with custom error pages or routing for requests that should require authentication but don't provide a cert, allowing different levels of access based on the cert information collected, etc. All in all this iRule represents a relatively simple solution to a more complex problem and does so in a manner that's easy to implement and maintain. That's the power of iRules, in a nutshell. Get the Flash Player to see this player.2.6KViews1like6Comments