Templating Enhanced Kubernetes Load Balancing with a Helm Operator

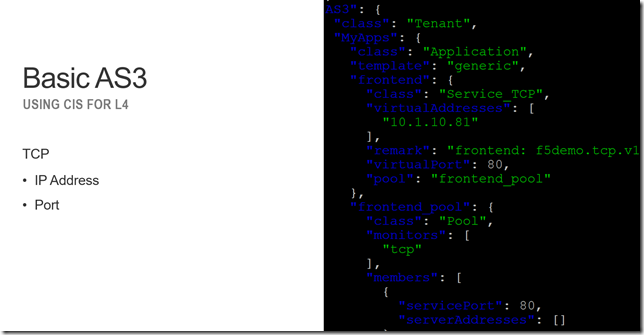

Basic L4 load balancing only requires a few inputs, IP and port. How do you provide enhanced load balancing without overwhelming an operator with hundreds of inputs? A Helm operator, which is a Kubernetes automation tool, lets us unlock the full potential of an F5 BIG-IP and deliver the right level of service. In this article we’ll look at what a Helm operator is. We'll also look at how we can use it to create a service catalog of BIG-IP L4-L7 services that can be deployed natively from Kubernetes.

Helm

Helm is a tool used to automate Kubernetes applications and infrastructure. You might use it to deploy a simple application with a Deployment and a Service resource. You might also use it to deploy a service mesh like Istio, which contains custom resources, cluster roles, mutating webhooks, Pilot, ingress gateways, egress gateways, Prometheus, etc. It’s kinda like Ansible, but for Kubernetes.

Helm Operator

It is helpful to be able to automate via Helm, but how do you know that the state of your cluster stays consistent? Did somebody go in later and modify your deployment from the original template, creating a snowflake? Helm operators are part of the Operator Framework. Think of them as nannies (or parents) for your Kubernetes services. They ensure that your services get started properly, clean up when they have an accident, and put the resources to bed at the end of the day.

Declarative L4-L7 K8S LB w/ AS3

F5 Container Ingress Services (CIS) is the product formerly known as Container Connector. It lets an end user deploy a control plane process that monitors the Kubernetes API and deploys load balancer (LB) services when needed. This removes the need for the traditional change request queue. Version 1.9 of CIS introduces the ability to use the Application Services 3 Extension (AS3) to deploy both basic and enhanced L4-L7 services. A basic service might be:

- L4 TCP
- L7 HTTP/HTTPS

This is similar to what was possible with previous versions of CIS.
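The claim that a basic L4 service needs only an IP and a port can be made concrete. Below is a minimal Python sketch that expands those two inputs into an AS3 declaration for a basic TCP service and wraps it in a ConfigMap manifest for CIS. It is an illustration under stated assumptions, not the article's own template. The tenant, application and pool names (`f5demo`, `frontend`, `frontend_pool`) and the `schemaVersion` value are assumptions. So are the ConfigMap labels `f5type: virtual-server` and `as3: "true"`. Check all of them against the AS3 and CIS documentation for your versions.

```python
import json


def basic_tcp_declaration(virtual_address: str, virtual_port: int,
                          tenant: str = "f5demo", app: str = "frontend") -> dict:
    """Expand two inputs (IP and port) into a basic AS3 L4 TCP declaration.

    Tenant/app/pool names and schemaVersion are illustrative assumptions.
    """
    pool_name = f"{app}_pool"
    return {
        "class": "AS3",
        "action": "deploy",
        "persist": True,
        "declaration": {
            "class": "ADC",
            "schemaVersion": "3.10.0",  # assumed; use a version your AS3 supports
            "id": f"{tenant}-{app}",
            tenant: {
                "class": "Tenant",
                app: {
                    "class": "Application",
                    "template": "tcp",  # the "tcp" template expects a serviceMain
                    "serviceMain": {
                        "class": "Service_TCP",
                        "virtualAddresses": [virtual_address],
                        "virtualPort": virtual_port,
                        "pool": pool_name,
                    },
                    pool_name: {
                        "class": "Pool",
                        "monitors": ["tcp"],
                        "members": [{
                            # assumption: backends listen on the same port
                            "servicePort": virtual_port,
                            # left empty for CIS service discovery to populate (assumed)
                            "serverAddresses": [],
                        }],
                    },
                },
            },
        },
    }


def as3_configmap(name: str, namespace: str, declaration: dict) -> dict:
    """Wrap an AS3 declaration in a ConfigMap (labels assumed, see lead-in)."""
    return {
        "apiVersion": "v1",
        "kind": "ConfigMap",
        "metadata": {
            "name": name,
            "namespace": namespace,
            "labels": {"f5type": "virtual-server", "as3": "true"},
        },
        "data": {"template": json.dumps(declaration, indent=2)},
    }


if __name__ == "__main__":
    cm = as3_configmap("f5demo-as3-configmap", "ingress-bigip",
                       basic_tcp_declaration("10.1.10.81", 80))
    print(json.dumps(cm, indent=2))
```

The printed JSON can be saved to a file and passed to `kubectl apply -f`, since kubectl accepts JSON manifests as well as YAML. The only inputs the caller supplies are the virtual address and port. Everything else is fixed by the template, which is the point of the approach.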
AS3 introduces the ability to enhance these services with capabilities like:

- Visibility of the client IP with Proxy Protocol
- End-to-end SSL encryption (including mutual TLS with the use of C3D)
- L4 and L7 DDoS protection using IP threat feeds and Advanced WAF

The basic service can be represented by a Kubernetes ConfigMap resource that contains a JSON AS3 declaration of the desired configuration. Something like:

An enhanced service looks something more like:

Ideally we can create a template for both basic and enhanced services. This simplifies deployment and ensures that local policy and best practices are both being adhered to.

Helm Chart

We can create a Helm chart (template) that represents both basic and enhanced services. The basic template for TCP looks like:

The inputs/values for these templates get boiled down to a few input parameters. This makes it easier to make changes without having to modify JSON in a text editor!

Helm Operators

We could use Helm to generate static AS3 ConfigMaps. Optionally, we can use a Helm operator to create a new resource (or service catalog) of values that dynamically generates AS3 ConfigMaps. Following the Helm operator user guide, we can import a Helm chart to build a new operator (container) that monitors the Kubernetes API. In the following example I created the “f5demo” operator. Once I install the f5demo custom resource, I can query for it like any native Kubernetes resource, such as a ConfigMap.

```
node1$ kubectl get f5demo -n ingress-bigip
NAME             AGE
example-f5demo   18m
```

The contents of the resource are the Helm values that were used previously.

```
node1$ kubectl get f5demo -n ingress-bigip -o yaml
apiVersion: v1
items:
- apiVersion: charts.helm.k8s.io/v1alpha1
  kind: F5Demo
  ...
  spec:
    applications:
    - frontend:
        name: frontend
        template: f5demo.tcp.v1
        virtualAddress: 10.1.10.81
        virtualPort: 80
    ...
```
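Conceptually, the operator turns the values shown above into AS3 JSON. It looks up the `template` value (`f5demo.tcp.v1`) and fills that template with `name`, `virtualAddress` and `virtualPort`. It then keeps re-checking that the live ConfigMap still matches. The Python sketch below illustrates only that idea. The real Helm operator renders the chart's Go templates and reconciles through the Kubernetes API. The names `TEMPLATES`, `tcp_v1`, `render_applications` and `needs_update` are hypothetical. So are the AS3 structure and the `schemaVersion`, which are assumed rather than taken from the f5demo chart.

```python
import json
from typing import Callable, Dict


def tcp_v1(name: str, virtual_address: str, virtual_port: int) -> dict:
    """Hypothetical 'f5demo.tcp.v1' template: one AS3 Application for L4 TCP."""
    return {
        "class": "Application",
        "template": "tcp",
        "serviceMain": {
            "class": "Service_TCP",
            "virtualAddresses": [virtual_address],
            "virtualPort": virtual_port,
            "pool": f"{name}_pool",
        },
        f"{name}_pool": {
            "class": "Pool",
            "monitors": ["tcp"],
            "members": [{"servicePort": virtual_port, "serverAddresses": []}],
        },
    }


# Template name as written in the custom resource -> rendering function.
TEMPLATES: Dict[str, Callable[..., dict]] = {"f5demo.tcp.v1": tcp_v1}


def render_applications(spec: dict, tenant: str = "f5demo") -> dict:
    """Turn spec.applications from the custom resource into one AS3 declaration."""
    tenant_body: dict = {"class": "Tenant"}
    for entry in spec["applications"]:
        # Each entry is a single-key mapping, e.g. {"frontend": {...values...}}.
        for values in entry.values():
            render = TEMPLATES[values["template"]]  # KeyError if template unknown
            tenant_body[values["name"]] = render(
                values["name"], values["virtualAddress"], values["virtualPort"])
    return {
        "class": "AS3",
        "action": "deploy",
        "persist": True,
        "declaration": {"class": "ADC", "schemaVersion": "3.10.0",  # assumed
                        "id": tenant, tenant: tenant_body},
    }


def needs_update(desired: dict, live_template: str) -> bool:
    """True when the live ConfigMap JSON has drifted from the rendered values."""
    return json.loads(live_template) != desired


if __name__ == "__main__":
    spec = {"applications": [{"frontend": {
        "name": "frontend", "template": "f5demo.tcp.v1",
        "virtualAddress": "10.1.10.81", "virtualPort": 80}}]}
    desired = render_applications(spec)
    print(json.dumps(desired, indent=2))
```

Keeping templates in a lookup table keyed by a versioned name such as `f5demo.tcp.v1` means a new service type or a new revision becomes a new catalog entry. Existing resources do not need to be edited. A drift check like `needs_update` is where a hand-edited "snowflake" ConfigMap would be detected and overwritten.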
The Helm operator builds a new ConfigMap based on the input values.

```
node1$ kubectl get cm -n ingress-bigip
NAME                                                     DATA   AGE
example-f5demo-bzr48kbg2peco4p5g4wc0jy32-as3-configmap   1      20m
```

Building Blocks

To recap, we’ve looked at using Helm to build templates of BIG-IP L4-L7 services using AS3. To ensure day-to-day consistency in a cluster, we use an operator to keep track of the state of a service and make updates as appropriate. These patterns could be deployed in other ways. For example, my colleague uses Jenkins and Python to templatize, or maybe you’d rather just use Ansible with AS3. My recommendation is to:

1. Figure out what L4-L7 services you need.
2. Build an AS3 declaration (JSON) of what you want.
3. Use a tool like Helm, Ansible, BIG-IQ, Perl, etc. to deliver your infrastructure (as code).

Protect Your Kubernetes Cluster Against The Apache Log4j2 Vulnerability Using BIG-IP

Whenever a high-profile vulnerability like Apache Log4j2 is announced, it is often a race to patch and remediate. Luckily, those of us with BIG-IPs running AWAF (Advanced Web Application Firewall) in our environment can take care of some mitigation by updating and applying signatures. Sometimes duties are consolidated, or SecOps and NetOps work together on the same cluster of BIG-IPs. In that case, an AWAF policy can simply be applied to a virtual server.

However, as we move into a world of modern application architectures, the Kubernetes administrators are very often a different set of individuals within DevOps. The DevOps team will work with NetOps to incorporate BIG-IP as the Ingress to the Kubernetes environment through Container Ingress Services. This allows for declarative configuration, in which existing objects can be referenced and incorporated into the Ingress configuration. In Container Ingress Services version 2.7, the Policy CRD (Custom Resource Definition) feature makes an AWAF policy one of these incorporated objects.

Here is some example code for defining the Policy CRD and specifying the WAF policy:

```yaml
apiVersion: cis.f5.com/v1
kind: Policy
metadata:
  labels:
    f5cr: "true"
  name: policy-mysite
  namespace: default
spec:
  l7Policies:
    waf: /Common/WAF_Policy
  profiles:
    http: /Common/Custom_HTTP
    logProfiles:
      - /Common/Log all requests
```

And here is an example of associating this Policy CRD with the VirtualServer CRD:

```yaml
apiVersion: "cis.f5.com/v1"
kind: VirtualServer
metadata:
  name: vs-myapp
  labels:
    f5cr: "true"
spec:
  # TLS is provided by the TLSProfile referenced in tlsProfileName;
  # see the CIS TLS examples to understand more.
  virtualServerAddress: "10.192.75.117"
  virtualServerHTTPSPort: 443
  httpTraffic: redirect
  tlsProfileName: reencrypt-tls
  # Attaches the Policy (and therefore the WAF policy) defined above
  policyName: policy-mysite
  host: myapp.f5demo.com
  pools:
    - path: /
      service: f5-demo
      servicePort: 443
```

Mark Dittmer, Sr. Product Management Engineer here at F5, recently teamed up with Brandon Frelich, Security Solutions Architect, to create a how-to video on this. Mark's associated GitHub repo: https://github.com/mdditt2000/kubernetes-1-19/blob/master/cis%202.7/log4j/README.md

This now allows SecOps teams to focus on creating and providing AWAF policies. Meanwhile, DevOps teams can focus on their own domain and incorporate the AWAF policy quickly. As we see microservices sprawl, we need every speed advantage we can get!
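In the Policy and VirtualServer examples above, the only link between the two resources is a string. The VirtualServer's `policyName` must match the Policy's `metadata.name`. The Python sketch below builds both manifests from one set of inputs so the names cannot drift apart. It emits them as a JSON `List`, which `kubectl apply -f` accepts. The field names and values are copied from the examples. The helper names `waf_policy` and `virtual_server` are hypothetical. Placing the VirtualServer in the Policy's namespace is an assumption, since the example VirtualServer sets no namespace. Check the CIS documentation for how your version resolves `policyName`.

```python
import json


def waf_policy(name: str, namespace: str, waf: str,
               http_profile: str, log_profiles: list) -> dict:
    """Policy CRD that attaches a BIG-IP WAF policy (fields as in the example)."""
    return {
        "apiVersion": "cis.f5.com/v1",
        "kind": "Policy",
        "metadata": {"name": name, "namespace": namespace,
                     "labels": {"f5cr": "true"}},
        "spec": {
            "l7Policies": {"waf": waf},
            "profiles": {"http": http_profile, "logProfiles": log_profiles},
        },
    }


def virtual_server(name: str, policy: dict, address: str, host: str,
                   service: str, service_port: int, tls_profile: str) -> dict:
    """VirtualServer CRD that references the given Policy by name."""
    return {
        "apiVersion": "cis.f5.com/v1",
        "kind": "VirtualServer",
        "metadata": {
            "name": name,
            # assumption: keep the VirtualServer in the Policy's namespace
            "namespace": policy["metadata"]["namespace"],
            "labels": {"f5cr": "true"},
        },
        "spec": {
            "virtualServerAddress": address,
            "virtualServerHTTPSPort": 443,
            "httpTraffic": "redirect",
            "tlsProfileName": tls_profile,
            "policyName": policy["metadata"]["name"],  # the link to the WAF policy
            "host": host,
            "pools": [{"path": "/", "service": service,
                       "servicePort": service_port}],
        },
    }


if __name__ == "__main__":
    policy = waf_policy("policy-mysite", "default", "/Common/WAF_Policy",
                        "/Common/Custom_HTTP", ["/Common/Log all requests"])
    vs = virtual_server("vs-myapp", policy, "10.192.75.117", "myapp.f5demo.com",
                        "f5-demo", 443, "reencrypt-tls")
    # A v1 List lets kubectl apply both objects from a single document.
    print(json.dumps({"apiVersion": "v1", "kind": "List",
                      "items": [policy, vs]}, indent=2))
```

Passing the Policy object itself into `virtual_server` reflects the division of labour described above. SecOps owns what goes into the Policy, and DevOps only consumes its name.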