Unify Visibility with F5 ACI ServiceCenter in Cisco ACI and F5 BIG-IP Deployments

What is F5 ACI ServiceCenter?

F5 ACI ServiceCenter is an application that runs natively on the Cisco Application Policy Infrastructure Controller (APIC) and gives administrators a unified way to manage both L2-L3 and L4-L7 infrastructure in F5 BIG-IP and Cisco ACI deployments. Once day-0 activities are complete and BIG-IP is deployed within the ACI fabric, F5 ACI ServiceCenter can handle day-1 and day-2 operations. It is well suited for both greenfield and brownfield deployments.

F5 ACI ServiceCenter is a successful and popular integration between F5 BIG-IP and Cisco Application Centric Infrastructure (ACI). The integration is loosely coupled and can be installed and uninstalled at any time without disruption to the APIC or the BIG-IP. F5 ACI ServiceCenter supports a REST API and can be easily integrated into your automation workflow: F5 ACI ServiceCenter Supported REST APIs.

Where can we download F5 ACI ServiceCenter?

F5 ACI ServiceCenter is completely free of charge and is available to download from Cisco DC App Center. It is fully supported by F5. If you run into any issues, or would like to see a new feature or enhancement in a future F5 ACI ServiceCenter release, you can open a support ticket here.

Why should we use F5 ACI ServiceCenter?

F5 ACI ServiceCenter has three main independent use cases. You can use all of them or pick only the ones that fit your requirements:

Visibility

F5 ACI ServiceCenter provides enhanced visibility into your F5 BIG-IP and Cisco ACI deployment by correlating BIG-IP and APIC information. For example, you can easily find the correlated APIC Endpoint information for a BIG-IP VIP, and you can determine the APIC Virtual Routing and Forwarding (VRF) to BIG-IP Route Domain (RD) mapping from F5 ACI ServiceCenter as well.
You can efficiently gather the correlated information from both the APIC and the BIG-IP in F5 ACI ServiceCenter without hopping between the two. You can also gather health status, logs, statistics, and more in F5 ACI ServiceCenter.

L2-L3 Network Configuration

After BIG-IP is inserted into the ACI fabric using an APIC service graph, F5 ACI ServiceCenter can extract the service graph VLANs from the APIC and deploy them on the BIG-IP. This capability gives you a single source of truth for network configuration between BIG-IP and APIC.

L4-L7 Application Services

F5 ACI ServiceCenter leverages F5 Automation Toolchain for application services:

Advanced mode, which uses AS3 (Application Services 3 Extension)
Basic mode, which uses FAST (F5 Application Services Templates)

F5 ACI ServiceCenter can also dynamically add or remove pool members from a pool on the BIG-IP based on the endpoints discovered by the APIC, which helps to reduce configuration overhead.

Other Features

F5 ACI ServiceCenter can manage multiple BIG-IPs, physical as well as virtual. If Link Layer Discovery Protocol (LLDP) is enabled on the interfaces between Cisco ACI and F5 BIG-IP, F5 ACI ServiceCenter can discover the BIG-IP and add it to the device list. F5 ACI ServiceCenter can also categorize the BIG-IP, for example as a standalone device or as part of a high availability (HA) cluster. Starting from version 2.11, F5 ACI ServiceCenter also supports multi-tenant design. These are just some of the features; to find out more, check out the F5 ACI ServiceCenter User and Deployment Guide.
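The dynamic pool member behaviour described under L4-L7 Application Services can be pictured as a set reconciliation between the endpoints the APIC has discovered and the members currently in the BIG-IP pool. The sketch below is only an illustration of that idea: the function name and the addresses are hypothetical, and it does not call any F5 ACI ServiceCenter, APIC, or BIG-IP API.

```python
def reconcile_pool(apic_endpoints, pool_members):
    """Return (to_add, to_remove) so the pool matches the discovered endpoints.

    Both arguments are iterables of IP address strings. This is a toy model,
    not the actual F5 ACI ServiceCenter implementation.
    """
    discovered = set(apic_endpoints)
    configured = set(pool_members)
    to_add = sorted(discovered - configured)      # learned by APIC, missing on BIG-IP
    to_remove = sorted(configured - discovered)   # on BIG-IP, no longer seen by APIC
    return to_add, to_remove


# Hypothetical addresses, for illustration only.
endpoints = ["10.0.0.11", "10.0.0.12", "10.0.0.13"]
members = ["10.0.0.11", "10.0.0.14"]

to_add, to_remove = reconcile_pool(endpoints, members)
print("add:", to_add)
print("remove:", to_remove)
```

Computing the difference in both directions is what removes the manual step: a new endpoint becomes a pool member, and a stale member is withdrawn, without anyone editing the pool by hand.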
F5 ACI ServiceCenter Resources

Webinar:
Unify Your Deployment for Visibility with Cisco and the F5 ACI ServiceCenter

Learn:
F5 DevCentral YouTube Videos: F5 ACI ServiceCenter Playlist
Cisco Learning Video: Configuring F5 BIG-IP from APIC using F5 ACI ServiceCenter
Cisco ACI and F5 BIG-IP Design Guide White Paper

Hands-on:
F5 ACI ServiceCenter Interactive Demo
Cisco dCloud Lab - Cisco ACI with F5 ServiceCenter Lab v3

Get Started:
Download F5 ACI ServiceCenter
F5 ACI ServiceCenter User and Deployment Guide

Deploying F5 Distributed Cloud (XC) Services in Cisco ACI - Layer Three Attached Deployment

Introduction

F5 Distributed Cloud (XC) Services are SaaS-based security, networking, and application management services that can be deployed across multi-cloud, on-premises, and edge locations. This article shows how to deploy an F5 Distributed Cloud Customer Edge (CE) site in Cisco Application Centric Infrastructure (ACI) so that you can securely connect your application in a Hybrid Multi-Cloud environment.

XC Layer Three Attached CE in Cisco ACI

An F5 Distributed Cloud Customer Edge (CE) site can be deployed as Layer Three Attached in a Cisco ACI environment using Cisco ACI L3Out. As a reminder, Layer Three Attached is one of the deployment models for getting traffic to and from an F5 Distributed Cloud CE site, where the CE can be a single node or a three-node cluster. Static routing and BGP are both supported in the Layer Three Attached deployment model. When a Layer Three Attached CE site is deployed in a Cisco ACI environment using Cisco ACI L3Out, routes can be exchanged between them via static routing or BGP. In this article, we focus on BGP peering between the Layer Three Attached CE site and the Cisco ACI Fabric.

XC BGP Configuration

BGP configuration on XC is simple and takes only a couple of steps:

1) Go to "Multi-Cloud Network Connect" -> "Networking" -> "BGPs".
*Note: The XC homepage is role based, and to be able to configure BGP, "Advanced User" is required.
2) Use "Add BGP" to fill out the site-specific info, such as which CE site runs BGP and its BGP AS number, and "Add Peers" to include its BGP peers’ info.
*Note: XC supports direct connection for BGP peering IP reachability only.

XC Layer Three Attached CE in ACI Example

In this section, we use an example to show how to successfully bring up BGP peering between an F5 XC Layer Three Attached CE site and a Cisco ACI Fabric so that you can securely connect your application in a Hybrid Multi-Cloud environment.
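Before walking through the console, it can help to see the peering plan as data. The sketch below enumerates the eBGP sessions for a three-node CE cluster peering with two ACI border leaf switches, using the node and leaf addresses from the example that follows. The AS numbers and the /24 prefix length are placeholders chosen for illustration (the article does not state them), and the class and function names are hypothetical rather than part of any XC or ACI API.

```python
import ipaddress
from dataclasses import dataclass
from itertools import product


@dataclass(frozen=True)
class BgpPeer:
    ce_node: str    # CE node name
    local_ip: str   # CE node address facing the ACI L3Out SVI
    peer_ip: str    # ACI border leaf address
    peer_asn: int   # remote AS number (placeholder)


# Addresses taken from the example in this article.
CE_NODES = {
    "master-0": "172.18.128.6",
    "master-1": "172.18.128.10",
    "master-2": "172.18.128.14",
}
BORDER_LEAVES = ["172.18.128.11", "172.18.128.12"]

# Assumptions, not stated in the article: private AS numbers and a /24 subnet.
CE_ASN = 64512
ACI_ASN = 64513
PREFIX_LEN = 24


def build_peers(ce_nodes, border_leaves, peer_asn):
    """One session per (CE node, border leaf) pair."""
    return [
        BgpPeer(name, ip, leaf, peer_asn)
        for (name, ip), leaf in product(ce_nodes.items(), border_leaves)
    ]


def directly_connected(local_ip, peer_ip, prefix_len):
    """True when the peer sits in the same subnet as the local address."""
    network = ipaddress.ip_interface(f"{local_ip}/{prefix_len}").network
    return ipaddress.ip_address(peer_ip) in network


peers = build_peers(CE_NODES, BORDER_LEAVES, ACI_ASN)
assert CE_ASN != ACI_ASN  # eBGP: local and remote AS numbers differ
for peer in peers:
    ok = directly_connected(peer.local_ip, peer.peer_ip, PREFIX_LEN)
    print(peer.ce_node, "->", peer.peer_ip, "directly connected:", ok)
```

Three nodes times two border leaves gives six sessions, and the subnet check mirrors the note that XC only supports directly connected BGP peers.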
Topology

In our example, the CE is a three-node cluster (Master-0, Master-1 and Master-2) that has a VIP, 10.10.122.122/32, with workloads 10.131.111.66 and 10.131.111.77 in the cloud (AWS):

The CE connects to the ACI Fabric via a virtual port channel (vPC) that spans two ACI border leaf switches. The CE and the ACI Fabric are eBGP peers via an ACI L3Out SVI for route exchange. The CE is eBGP peered to both ACI border leaf switches, so that if one of them goes down (expectedly or unexpectedly), the CE can continue to exchange routes with the border leaf switch that remains up and VIP reachability is not affected.

XC BGP Configuration

First, let us look at the XC BGP configuration ("Multi-Cloud Network Connect" -> "Networking" -> "BGPs"):

We "Add BGP" of "jy-site2-cluster" with site-specific BGP info along with a total of six eBGP peers (each CE node has two eBGP peers, one to each ACI border leaf switch):

We "Add Item" to specify each of the six eBGP peers’ info:

Example reference - ACI BGP configuration:

XC BGP Peering Status

There are a couple of ways to check the BGP peering status on the F5 Distributed Cloud Console:

Option 1

Go to "Multi-Cloud Network Connect" -> "Networking" -> "BGPs" -> "Show Status" from the selected CE site to bring up the "Status Objects" page. The "Status Objects" page provides a summary of the BGP status from each of the CE nodes. In our example, all three CE nodes from "jy-site2-cluster" are cleared with "0 Failed Conditions" (Green):

We can simply click on a CE node UID to look further into the BGP status of the selected CE node with all of its BGP peers.
Here, we clicked on the UID of CE node Master-2 (172.18.128.14) and we can see it has two eBGP peers, 172.18.128.11 (ACI border leaf switch 1) and 172.18.128.12 (ACI border leaf switch 2), and both of them are Up:

Here is the BGP status from the other two CE nodes, Master-0 (172.18.128.6) and Master-1 (172.18.128.10):

For reference, here is an example of a CE node with "Failed Conditions" (Red) because one of its BGP peers is down:

Option 2

Go to "Multi-Cloud Network Connect" -> "Overview" -> "Sites" -> "Tools" -> "Show BGP peers" to bring up the BGP peer status info from all CE nodes of the selected site. Here, we can see the same BGP status of CE node Master-2 (172.18.128.14), which has two eBGP peers, 172.18.128.11 (ACI border leaf switch 1) and 172.18.128.12 (ACI border leaf switch 2), and both of them are Up:

Here is the output of the other two CE nodes, Master-0 (172.18.128.6) and Master-1 (172.18.128.10):

Example reference - ACI BGP peering status:

XC BGP Routes Status

To check the BGP routes, both received and advertised, go to "Multi-Cloud Network Connect" -> "Overview" -> "Sites" -> "Tools" -> "Show BGP routes" from the selected CE site:

In our example, we see that all three CE nodes (Master-0, Master-1 and Master-2) advertised (exported) 10.10.122.122/32 to both of their BGP peers, 172.18.128.11 (ACI border leaf switch 1) and 172.18.128.12 (ACI border leaf switch 2), and received (imported) 172.18.188.0/24 from them:

Now, if we check the ACI Fabric, we should see that both 172.18.128.11 (ACI border leaf switch 1) and 172.18.128.12 (ACI border leaf switch 2) advertised 172.18.188.0/24 to all three CE nodes and received 10.10.122.122/32 from all three of them (note "|" for multipath in the output):

XC Routes Status

To view the routing table of a CE node (or all CE nodes at once), we can simply select "Show routes":

Based on the BGP routing table in our example (shown earlier), we should see each CE node has two Equal Cost Multi-Path (ECMP) routes
installed in the routing table for 172.18.188.0/24, one with 172.18.128.11 (ACI border leaf switch 1) and one with 172.18.128.12 (ACI border leaf switch 2) as the next-hop, and we do (note "ECMP" for multipath in the output):

Now, if we check the ACI Fabric, each ACI border leaf switch should have three ECMP routes installed in the routing table for 10.10.122.122, one to each CE node (172.18.128.6, 172.18.128.10 and 172.18.128.14) as the next-hop, and we do:

Validation

We can now securely connect our application in a Hybrid Multi-Cloud environment:

*Note: After F5 XC is deployed, we also use F5 XC DNS as our primary nameserver:

To check the requests on the F5 Distributed Cloud Console, go to "Multi-Cloud Network Connect" -> "Sites" -> "Requests" from the selected CE site:

Summary

An F5 Distributed Cloud Customer Edge (CE) site can be deployed with the Layer Three Attached deployment model in a Cisco ACI environment. Both static routing and BGP are supported in the Layer Three Attached deployment model and can be easily configured on the F5 Distributed Cloud Console with just a few clicks. With an F5 Distributed Cloud Customer Edge (CE) site deployment, you can securely connect your application in a Hybrid Multi-Cloud environment quickly and efficiently.

Next

Check out this video for some examples of Layer Three Attached CE use cases in Cisco ACI:

Related Resources

*On-Demand Webinar* Deploying F5 Distributed Cloud Services in Cisco ACI
Deploying F5 Distributed Cloud (XC) Services in Cisco ACI - Layer Two Attached Deployment
Customer Edge Site - Deployment & Routing Options
Cisco ACI L3Out White Paper

Under the hood of F5 BIG-IP LTM and Cisco ACI integration – Role of the device package [End of Life]

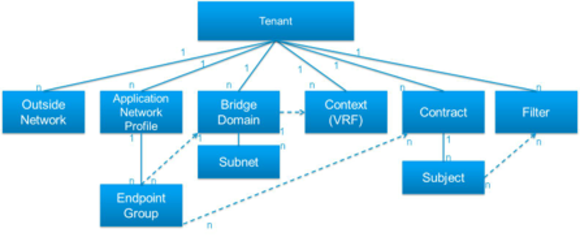

The F5 and Cisco APIC integration based on the device package and iWorkflow is End Of Life. The latest integration is based on the Cisco AppCenter named ‘F5 ACI ServiceCenter’. Visit https://f5.com/cisco for updated information on the integration.

Since the FCS of the F5 device package for Cisco APIC last month, we have seen a lot of interest and excitement from customers and the field alike in understanding how the combined open ecosystem value between Cisco ACI and F5 BIG-IP is enabled. One of the critical components from F5 for this solution is the F5 device package, which abstracts the L4-L7 service device in a way that allows the Cisco APIC to automate and provision a network service that attaches to the ACI fabric. A previous article, Accelerate and automate your application deployments with Cisco ACI and F5, described how traditional network service insertion makes L4-L7 service device configuration time-consuming, error-prone and very difficult to track, and how F5 and Cisco ACI address those challenges through service automation.

The concept of Service graph

In addition to network service device configuration, deployments need to send traffic through a sequence of L4-L7 service instances depending on the policies configured. In other words, there is also a need to represent this sequence, or chain, of L4-L7 service functions for easier service provisioning. Cisco APIC lets the user define a service graph with a chain of service functions such as Web Application Firewall (WAF), load balancer or network firewall, including the order in which the service functions are applied. The graph defines these functions based on a user-defined policy for a particular application. One or more service appliances might be needed to render the services required by the service graph.
Device Package

Cisco APIC offers a centralized touch point for configuration management and automation of L4-L7 services, and the F5 device package makes that possible by letting APIC interface with the service appliances (physical or virtual) using southbound APIs. For example, to allow Cisco APIC to configure L4-L7 services on BIG-IP, the F5 device package needs to contain the XML schema of the F5 device model, which defines parameters such as software version, SSL termination, Layer 4 SLB, network connectivity details, etc. It also includes a Python script that maps APIC events to function calls for F5 BIG-IP LTM.

Nuts and bolts of a Device Package

The F5 device package, which is engineered to define, configure and monitor BIG-IP, allows customers to add, modify, remove, and monitor any F5 BIG-IP LTM services using Cisco APIC. A device package is a zip file containing two important files:

Device Specification
Device Script

Device Specification

The Device specification is an XML file that provides a hierarchical description of the device, including the configuration of each function, and is mapped to a set of managed objects on the APIC. The Device specification defines the following:

Model: Model of the device - (BIG-IP LTM)
Vendor: Vendor of the device - (F5)
Version: Software version of the device - (1.0.1)
Functions provided by a device, such as L4-L7 load balancing, Microsoft SharePoint, and SSL termination
Device configuration parameters
Interfaces and network connectivity information for each function
Configuration parameters for each function

Device Script

The Device script, written in Python, manages communication between the APIC and the F5 device. It defines the mapping between Cisco APIC events and the function calls representing F5 device interactions, and converts generic API calls into F5 device-specific calls.
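To make the two files more concrete, here is a minimal sketch of the idea: a specification that describes the device, and a script that maps incoming events to device-specific functions. The XML layout, the event names, and the handler functions are all simplified and hypothetical; they are not the actual Cisco device package schema or the F5 device script API, and the handlers return strings where a real script would make iControl calls.

```python
import xml.etree.ElementTree as ET

# Simplified, hypothetical device specification. The real schema is richer.
SPEC_XML = """
<device model="BIG-IP LTM" vendor="F5" version="1.0.1">
  <function name="L4-L7 load balancing"/>
  <function name="SSL termination"/>
</device>
"""


def load_spec(xml_text):
    """Parse the toy specification into a plain dictionary."""
    root = ET.fromstring(xml_text.strip())
    return {
        "model": root.get("model"),
        "vendor": root.get("vendor"),
        "version": root.get("version"),
        "functions": [f.get("name") for f in root.findall("function")],
    }


# Hypothetical handlers standing in for device-specific calls.
def device_modify(config):
    return f"device updated with {len(config)} parameter(s)"


def service_modify(config):
    return f"service updated with {len(config)} parameter(s)"


# The mapping between controller events and device-specific functions.
HANDLERS = {
    "device_modify": device_modify,
    "service_modify": service_modify,
}


def handle_event(event, config):
    """Dispatch a controller event to the matching device function."""
    handler = HANDLERS.get(event)
    if handler is None:
        raise ValueError(f"unsupported event: {event}")
    return handler(config)


spec = load_spec(SPEC_XML)
print(spec["vendor"], spec["model"], spec["version"], spec["functions"])
print(handle_event("service_modify", {"pool": "web_pool", "port": 443}))
```

The dispatch table is the piece that corresponds to "mapping events to function calls": the controller only needs to know event names, while everything device-specific stays inside the handlers.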
When a tenant admin uploads a device package to APIC, the APIC creates a hierarchy of managed objects representing the device and validates the device script.

Device Package integration workflow with Cisco APIC

To manage a BIG-IP LTM service node through APIC, the tenant administrator must explicitly register the BIG-IP LTM. Device registration occurs when the admin adds a new device to the network; the registration process informs the APIC of the device type, management, interfaces, and credentials so that the APIC can add the device to the fabric. Fig. 1 shows the high-level workflow.

Figure 1 – Device Package integration Workflow

The tenant admin uploads the F5 device package to Cisco APIC using northbound APIs or the APIC user interface.
The package upload operation installs the F5 device package in the Cisco APIC repository or managed object data model.
The tenant admin must also define the out-of-band management connectivity of BIG-IP LTM along with credentials.
If the network needs traffic steering through F5 BIG-IP, the tenant administrator configures the service graph under the Layer 4-7 profile for the tenant and adds service functions predefined in the F5 device package using device modification and service modification Python function calls.
The device package sends iControl calls (southbound integration) to configure the required service graph parameters on F5 BIG-IP LTM using the management connectivity established prior to uploading the device package.

F5 BIG-IP LTM Device Package Version 1.0.1

Below is a list of key functionalities and attributes of the F5 BIG-IP LTM device package version 1.0.1:

Supports any BIG-IP LTM physical and virtual form factor running version 11.4.1 and above.
Does not require any new module installation on the F5 BIG-IP LTM
BIG-IQ integration with BIG-IP can co-exist with APIC – BIG-IP integration
iRules (both F5 verified and custom defined) that reside in the common partition can be referenced by Cisco APIC
BIG-IP is licensed and OOB management configured prior to APIC integration
Supports Active / Standby High Availability model
Supports multi-tenancy where every APIC tenant is represented by a separate BIG-IP partition prefixed with “apic_paritition_number”
L3/VRF separation through route domains in each BIG-IP partition
Virtual server configuration (including but not limited to pools, Self IPs, interfaces, VLANs, VIPs, algorithms, etc.) is done through Cisco APIC using the service graph
BIG-IP LTM-VE is integrated through Virtual infrastructure where vNIC placement is automated through Cisco APIC

The F5 Device Package for Cisco Application Policy Infrastructure Controller™ (APIC) is now available. To download at no cost, please go to https://downloads.f5.com/esd/productlines.jsp

Further Resources:
F5 and Cisco ACI Solution Blog on DevCentral - https://devcentral.f5.com/s/articles/accelerate-and-automate-your-application-deployments-with-cisco-aci-and-f5
Cisco Alliance page - https://f5.com/partners/product-technology-alliances/cisco
Cisco page on DevCentral - https://devcentral.f5.com/s/cisco
Cisco Blog on Device Package - http://blogs.cisco.com/datacenter/f5-device-package-for-cisco-apic-goes-fcs/
Technical Solution White paper - http://www.cisco.com/c/dam/en/us/solutions/collateral/data-center-virtualization/application-centric-infrastructure/white-paper-c11-732413.pdf
Device Package integration demo - https://www.youtube.com/watch?v=5Nw2vtid7Zs

Opflex: Cisco Flexing its SDN Open Credentials [End of Life]

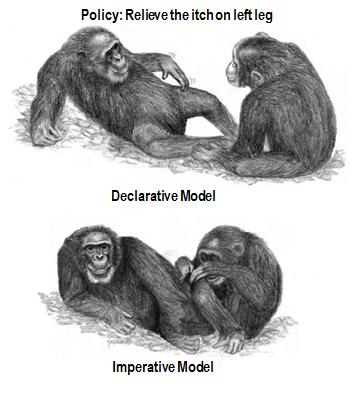

The F5 and Cisco APIC integration based on the device package and iWorkflow is End Of Life. The latest integration is based on the Cisco AppCenter named ‘F5 ACI ServiceCenter’. Visit https://f5.com/cisco for updated information on the integration.

There has been a lot of buzz these past few months about OpFlex. As an avid weight lifter, it sounded to me like Cisco was branching out into fitness products with its Internet of Things marketing drive. But nope... OpFlex is actually a new policy protocol for its Application Centric Infrastructure (ACI) architecture. Just as Cisco engineered its own SDN implementation with ACI and the Application Policy Infrastructure Controller (APIC), it engineered OpFlex as its open southbound protocol to relay, in XML or JSON, the application policy from a network policy controller like the APIC to any End Points such as switches, hypervisors and Application Delivery Controllers (ADC). It is important to note that Cisco is pushing OpFlex as a standard in the IETF in partnership with Microsoft, IBM, Red Hat, F5, Canonical and Citrix. Cisco is also contributing to the OpenDaylight project by delivering a completely open-sourced OpFlex architecture for the APIC and other SDN controllers with the OpenDaylight (ODL) Group Policy plugin. Simply put, this ODL group policy just states the application policy intent instead of communicating every policy implementation detail and command.

Quoting the IETF Draft:

"The OpFlex architecture provides a distributed control system based on a declarative policy information model. The policies are defined at a logically centralized policy repository (PR) and enforced within a set of distributed policy elements (PE). The PR communicates with the subordinate PEs using the OpFlex Control protocol. This protocol allows for bidirectional communication of policy, events, statistics, and faults. This document defines the OpFlex Control Protocol."

This was a smart move from Cisco to silence competitors labelling its ACI SDN architecture as another closed and proprietary architecture. The APIC actually exposes its northbound interfaces with open APIs for orchestrators such as OpenStack and for applications. The APIC also supports plugins for other open formats and languages such as JSON and Python. However, the APIC pushes its application policy southbound to the ACI fabric End Points via a device package. Cisco affirms the openness of the device package, as it is composed of files written in open languages such as XML and Python which can be programmed by anyone. As discussed in my previous ACI blogs, the APIC pushes the Application Network Profile and its policy to the F5 Synthesis Fabric and its BIG-IP devices via an F5 device package. This worked well for F5, as it allowed it to preserve its Synthesis fabric programmable model with iApps/iRules and the richness of its L4-L7 features.

SDN controllers have been using OpenFlow and OVSDB as open southbound protocols to push the controller policy onto the network devices. OpenFlow is standardized by the Open Networking Foundation (ONF) and OVSDB is documented in IETF RFC 7047; both are supported by the OpenDaylight project as southbound interfaces. However, OpenFlow has been targeting L2 and L3 flow programmability, which has limited its use for L4-L7 network service insertion with SDN controllers. OpenFlow and OVSDB are used by Cisco ACI's nemesis, VMware NSX: OpenFlow for flow programmability and OVSDB for device configuration.

One of the big differences between OpFlex and OpenFlow lies in their respective SDN models. OpFlex uses a declarative SDN model while OpenFlow uses an imperative SDN model. As the name suggests, an imperative OpenFlow SDN controller takes a dictatorial approach: a centralized controller (or clustered controllers) delivers detailed and complex instructions downstream to the network devices' control plane to show them how to deploy the application policy onto their dataplane.
With OpFlex, the APIC or network policy controller takes a more collaborative approach by distributing the intelligence to the network devices. The APIC declares its intent via its defined application policy and relays the instructions downstream to the network devices, trusting them to deploy the application policy requirements onto their dataplane based on their control plane intelligence. With the OpFlex declarative model, the network devices cooperate with the APIC and retain control of their control plane, while with OpenFlow and the imperative SDN model, the network devices are mere extensions of the SDN controller.

According to Cisco, there are merits to OpFlex and its declarative model:

1- The APIC declarative model would be more resilient and less dependent on APIC availability, as an APIC or APIC cluster failure will not impact the ACI switch fabric operation. Traffic will still be forwarded and F5 L4-L7 services applied until the APIC comes back online and a new application policy is pushed. If an OpenFlow SDN controller or controller cluster were to fail, the network would no longer be able to forward traffic and apply services based on the application policy requirement.

2- A declarative model would be a more scalable and distributed architecture, as it allows the network devices to determine how to implement the application policy and to extend the list of their supported features without requiring additional resources from the controller.

On a light note, it is fair to assume that the rise of the planet of network devices against their SDN controller overlords is not likely to happen any time soon, and that the controller instructions will be implemented in both instances in order to meet the application policy requirements. Ultimately, this is not an OpFlex vs OpenFlow battle, and the choice between the OpenFlow and OpFlex protocols will be in the hands of customers and their application policies.
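The difference between the two models is mostly about where the translation from policy to device commands happens. The toy sketch below illustrates that point only: the policy fields, class names, and command strings are hypothetical, and nothing here reflects the real OpFlex or OpenFlow wire protocols.

```python
# Hypothetical application intent; field names are illustrative only.
POLICY = {"app": "web", "port": 443, "lb_method": "round-robin"}


def render_commands(policy):
    """Translate intent into concrete, ordered device steps."""
    pool = f"{policy['app']}_pool"
    return [
        f"create pool {pool} lb-method {policy['lb_method']}",
        f"create virtual {policy['app']}_vs port {policy['port']} pool {pool}",
    ]


class ImperativeDevice:
    """Executes exactly the steps it is sent and keeps no policy of its own."""

    def __init__(self):
        self.executed = []

    def run(self, commands):
        self.executed.extend(commands)


class DeclarativeDevice:
    """Stores the intent it receives and decides locally how to realize it."""

    def __init__(self):
        self.policy = None

    def receive(self, policy):
        self.policy = dict(policy)

    def render(self):
        # The translation runs on the device, so it can be repeated from the
        # last received policy without asking the controller again.
        return render_commands(self.policy) if self.policy else []


# Imperative: the controller performs the translation and ships the steps.
imperative = ImperativeDevice()
imperative.run(render_commands(POLICY))

# Declarative: the controller ships only the intent.
declarative = DeclarativeDevice()
declarative.receive(POLICY)

print(imperative.executed)
print(declarative.render())
```

Both devices end up with the same configuration. What changes is who owns the translation, which is why the declarative device can keep working from its stored policy when the controller is unreachable.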
We all just need to keep in mind that the goal of SDN is to keep a centralized and open control plane to deploy applications. F5 welcomes OpFlex and is working to implement it as an agent for its BIG-IP physical and virtual devices. With OpFlex, the F5 BIG-IP physical or virtual devices will be responsible for implementing the L4-L7 network services defined by the APIC Application Network Profile onto the ACI switch fabric. The OpFlex protocol will enable F5 to extend its Synthesis stateful L4-L7 fabric architecture to the Cisco ACI stateless network fabric.

To quote Soni Jiandani, a founding member of Cisco ACI:

"The declarative model assumes the controller is not the centralized brain of the entire system. It assumes the centralized policy manager will help you in the definition of policy, then push out the intelligence to the edges of the network and within the infrastructure so you can continue to innovate at the endpoint. Let’s take an example. If I am an F5 or a Citrix or a Palo Alto Networks or a hypervisor company, I want to continue to add value. I don’t want a centralized controller to limit innovation at the endpoint. So a declarative model basically says that, using a centralized policy controller, you can define the policy centrally and push it out and the endpoint will have the intelligence to abide by that policy. They don’t become dumb devices that stop functioning the normal way because the intelligence solely resides in the controller."

F5 iWorkflow and Cisco ACI : True application centric approach in application deployment (End Of Life)

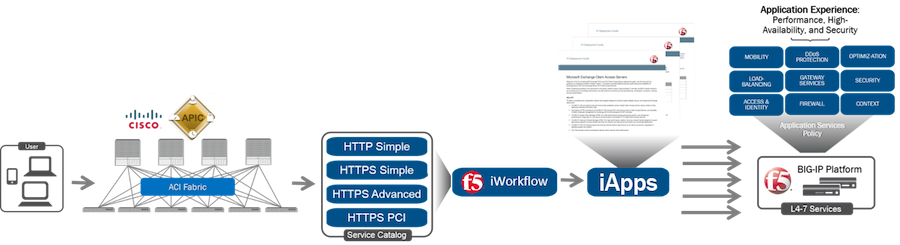

The F5 and Cisco APIC integration based on the device package and iWorkflow is End Of Life. The latest integration is based on the Cisco AppCenter named ‘F5 ACI ServiceCenter’. Visit https://f5.com/cisco for updated information on the integration.

On June 15th, 2016, F5 released iWorkflow version 2.0, a virtual appliance platform designed to deploy applications with greater agility and consistency. The F5 iWorkflow Cisco APIC cloud connector provides a conduit that allows APIC to deploy F5 iApps on BIG-IP. By leveraging iWorkflow, administrators can customize an application template and expose it to Cisco APIC through the iWorkflow dynamic device package. F5 iWorkflow also supports the Cisco APIC Chassis and Device Manager features. Administrators can now build Cisco ACI L4-L7 devices using a pair of F5 BIG-IP vCMP HA guests with an iWorkflow HA cluster.

The following 2-part video demo shows:
(1) How to deploy an iApps virtual server in BIG-IP through APIC and iWorkflow
(2) How to build Cisco ACI L4-L7 devices using F5 vCMP guests HA and an iWorkflow HA cluster

The F5 iWorkflow, BIG-IP and Cisco APIC software compatibility matrix can be found at: https://support.f5.com/kb/en-us/solutions/public/k/11/sol11198324.html
Check out the iWorkflow DevCentral page for more iWorkflow info: https://devcentral.f5.com/s/wiki/iworkflow.homepage.ashx
You can download iWorkflow from https://downloads.f5.com

Cisco Partners with F5 to Accelerate SDN Adoption [End of Life]

The F5 and Cisco APIC integration based on the device package and iWorkflow is End Of Life. The latest integration is based on the Cisco AppCenter named ‘F5 ACI ServiceCenter’. Visit https://f5.com/cisco for updated information on the integration. SDN, like every new technology, has begun maturing from its singular focus on standardizing networks to embrace a broader vision focused on addressing real challenges in modern data centers. SDN today aims to provide an automated, policy-driven data center capable of adapting to the rapid shifts in technology driven by cloud, mobility and massive growth in applications. Customers require a comprehensive approach to deploying applications that includes automating and orchestrating both network and application services. Realizing this vision requires programmability and choices. For over ten years F5 has been delivering both to its customers with open APIs: iRules for the data plane, iControl for the control plane and iApps to capture and apply service definition policies. Last year we introduced Synthesis, which added orchestration via BIG-IQ and extended the reach of application services into the cloud. These industry leading innovations further enabled customers with choices for application deployment and orchestration through a robust partner ecosystem. In November 2013 that ecosystem was expanded to include Cisco and its Application Centric Infrastructure (ACI). ACI addresses network challenges stemming from years of siloed network topology such as the inability to rapidly provision services and respond in real-time to conditions impacting application performance. While strides had been made to address those challenges, Cisco took a major step forward with ACI. Its focus on programmability as a way to automate and orchestrate the entire data center stack aligned well with F5’s vision and capabilities, making support for ACI a natural extension of our architecture. 
Partnering with F5 brings a sophisticated set of programmable L4-7 services to Cisco ACI and offers customers another choice in moving confidently toward adopting an SDN architecture that addresses the entire data center stack. Customers can choose to orchestrate F5’s catalog of L4-7 services directly through our control plane API, iControl, via our orchestration product, BIG-IQ, or through ecosystem partner orchestration systems like Cisco APIC. Once adopted, customers will be able to use Cisco ACI to orchestrate differentiated L4-7 services from F5 to address a variety of challenges and directly support business requirements related to application performance, security and reliability. Customers will be able to orchestrate L4-7 services with Cisco ACI that steer application requests based on device, user identity, location and application. Customers who want to attach application security policies to existing applications will be able to use Cisco ACI to orchestrate insertion of the appropriate F5 services to reduce risk.

This week, Cisco announced an architecture and supporting control protocol to further expand customer choices in orchestrating the data center. The OpFlex architecture is a policy-based model that centralizes control and locally enforces policies using an open southbound API, the OpFlex Control Protocol. This complements F5’s existing architectural vision, and though OpFlex is still in the definition phase, F5 is committed to supporting it. F5 is delighted that this Cisco partnership offers customers expanded choices to move forward with SDN initiatives to automate and orchestrate the entire data center stack.

F5 Release Device Package 1.2.0 for Cisco ACI [End of Life]

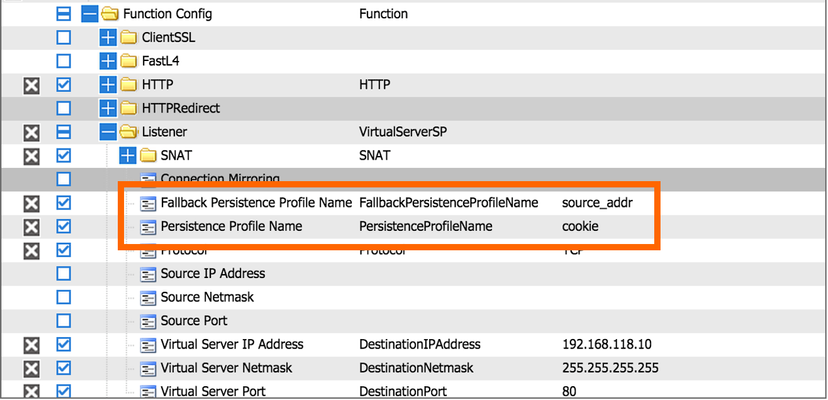

The F5 and Cisco APIC integration based on the device package and iWorkflow is End Of Life. The latest integration is based on the Cisco AppCenter named ‘F5 ACI ServiceCenter’. Visit https://f5.com/cisco for updated information on the integration.

On July 1st, 2015, F5 released Device Package version 1.2.0 for Cisco ACI. What is new in this device package?

PERSISTENCE PROFILE

We added persistence profile (default and fallback) support in this device package. Through APIC, you can reference persistence profiles (default or custom built) that reside in the common partition. F5 sees the referencing approach as giving customers greater flexibility in managing profiles than configuring profiles within APIC, because you can modify the profile parameters out-of-band.

FULL ADDRESS TRANSLATION SUPPORT

We also added full address translation capability to device package 1.2.0. You can now choose between the SNAT Pool / Automap / None options:

We also made feature enhancements that allow customers to tune additional parameters:

Connection Mirroring (Enable / Disable)
Source Port (Change / Preserve / Preserve-Strict)
Port Lockdown (All / None / Default)

F5 continues to strive for improvements in our Cisco ACI integrations to meet customer demand in ACI service insertion environments. You can download the latest device package from downloads.f5.com.

RSA 2015 - F5 BIG-IP, Cisco ASA and Cisco ACI Service Insertion Presentation [End of Life]

The F5 and Cisco APIC integration based on the device package and iWorkflow is End Of Life. The latest integration is based on the Cisco AppCenter named ‘F5 ACI ServiceCenter’. Visit https://f5.com/cisco for updated information on the integration.

Come to RSA 2015 in San Francisco and check out the F5 booth this week. We have a presentation and demo that go over how F5 has teamed up with Cisco ACI and ASA to provide more secure and available applications using service integration in the ACI fabric. This solution automates the deployment of a firewall (ASA) and load balancer (BIG-IP LTM) using an ACI 2-node service graph. Additional security is also available with BIG-IP through the use of partitions, route domains, and iRules. Come, check it out, and stay for a quick chat. The presentation schedule is posted at the booth.

Presentation Session: Secure And Available Application Services Through F5 BIG-IP, Cisco ASA and Cisco ACI

Harnessing the Full Power of F5 BIG-IP in Cisco ACI using BIG-IQ [End of Life]

The F5 and Cisco APIC integration based on the device package and iWorkflow is End Of Life. The latest integration is based on the Cisco AppCenter named ‘F5 ACI ServiceCenter’. Visit https://f5.com/cisco for updated information on the integration.

Extending F5’s integration with Cisco ACI, this demo shows how F5 iApps are utilized to expose application services into Cisco APIC via F5’s BIG-IQ management platform. A true application centric approach, leveraging iApps to configure almost anything (iRules, Profiles, etc.) on the BIG-IP platform based on application requirements.

Meet F5 Networks @ CiscoLive Berlin 2016

CiscoLive Berlin 2016 has started! Come and meet F5 Networks, Feb 15 – 19, stand P2. Visit our stand with the F5 tag display and you can win prizes and walk away with goodies. While you are at our stand, check out many demos we have prepared for you on F5 + Cisco solutions, such as ACI, programmability, SP and Security. Don’t forget to come to our Breakout session: A Technical Deep Dive on F5 BIG-IQ, BIG-IP and Cisco ACI for Applications Deployment Tuesday, 16 February: 11:15am – 12:45pm We look forward to meeting you at CiscoLive Berlin 2016!