cisco aci

Deploying F5 Distributed Cloud (XC) Services in Cisco ACI - Layer Three Attached Deployment

Introduction

F5 Distributed Cloud (XC) Services are SaaS-based security, networking, and application management services that can be deployed across multi-cloud, on-premises, and edge locations. This article shows how to deploy an F5 Distributed Cloud Customer Edge (CE) site in Cisco Application Centric Infrastructure (ACI) so that you can securely connect your applications in a Hybrid Multi-Cloud environment.

XC Layer Three Attached CE in Cisco ACI

An F5 Distributed Cloud Customer Edge (CE) site can be deployed with the Layer Three Attached model in a Cisco ACI environment using a Cisco ACI L3Out. As a reminder, Layer Three Attached is one of the deployment models for getting traffic to and from an F5 Distributed Cloud CE site, where the CE can be a single node or a three-node cluster. Both static routing and BGP are supported in the Layer Three Attached deployment model, so when a Layer Three Attached CE site is deployed in Cisco ACI using an L3Out, routes can be exchanged between the CE and the fabric with either method. In this article, we focus on BGP peering between a Layer Three Attached CE site and the Cisco ACI fabric.

XC BGP Configuration

BGP configuration on XC is simple and takes only a couple of steps:

1) Go to "Multi-Cloud Network Connect" -> "Networking" -> "BGPs".
*Note: The XC home page is role based; configuring BGP requires "Advanced User" access.

2) Click "Add BGP" to fill in the site-specific information, such as the CE site that runs BGP and its BGP AS number, then click "Add Peers" to enter the BGP peers' information.
*Note: XC supports only directly connected BGP peers; each peer IP address must be reachable over a directly connected network.

XC Layer Three Attached CE in ACI Example

In this section, we use an example to show how to bring up BGP peering between an F5 XC Layer Three Attached CE site and a Cisco ACI fabric so that you can securely connect your applications in a Hybrid Multi-Cloud environment.
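Because XC supports only directly connected BGP peers, one quick sanity check before clicking "Add Peers" is whether each planned peer address actually sits on the subnet of the CE interface that will carry the session. The sketch below uses Python's standard `ipaddress` module. The addresses come from the example topology, but the /24 prefix length is an assumption (the article does not state the L3Out SVI mask), and 10.20.0.1 is a hypothetical peer added only to show the failing case.

```python
import ipaddress


def directly_connected(ce_interface: str, peer_ip: str) -> bool:
    """Return True if peer_ip sits on the CE interface's own subnet.

    ce_interface uses address/prefix notation, e.g. "172.18.128.14/24".
    The CE's own address is not a valid peer, so it returns False.
    """
    iface = ipaddress.ip_interface(ce_interface)
    peer = ipaddress.ip_address(peer_ip)
    return peer in iface.network and peer != iface.ip


# Hypothetical pre-check: the /24 prefix length is an assumption, and
# 10.20.0.1 stands in for a peer that is not on the connected subnet.
CE_INTERFACE = "172.18.128.14/24"
PLANNED_PEERS = ["172.18.128.11", "172.18.128.12", "10.20.0.1"]

for peer_ip in PLANNED_PEERS:
    ok = directly_connected(CE_INTERFACE, peer_ip)
    print(f"{peer_ip}: {'directly connected' if ok else 'NOT directly connected'}")
```

This only checks addressing; it does not replace the BGP status checks on the Console.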
Topology

In our example, the CE is a three-node cluster (Master-0, Master-1 and Master-2) that has a VIP, 10.10.122.122/32, with workloads 10.131.111.66 and 10.131.111.77 in the cloud (AWS):

The CE connects to the ACI fabric via a virtual port channel (vPC) that spans two ACI border leaf switches. The CE and the ACI fabric are eBGP peers over an ACI L3Out SVI for route exchange. The CE is eBGP peered with both ACI border leaf switches, so if one of them goes down (planned or unplanned), the CE can continue to exchange routes with the border leaf switch that remains up, and VIP reachability is not affected.

XC BGP Configuration

First, let us look at the XC BGP configuration ("Multi-Cloud Network Connect" -> "Networking" -> "BGPs"):

We "Add BGP" for "jy-site2-cluster" with site-specific BGP information along with a total of six eBGP peers (each CE node has two eBGP peers, one to each ACI border leaf switch):

We "Add Item" to specify each of the six eBGP peers' information:

Example reference - ACI BGP configuration:

XC BGP Peering Status

There are a couple of ways to check the BGP peering status on the F5 Distributed Cloud Console:

Option 1

Go to "Multi-Cloud Network Connect" -> "Networking" -> "BGPs" -> "Show Status" for the selected CE site to bring up the "Status Objects" page. The "Status Objects" page provides a summary of the BGP status from each of the CE nodes. In our example, all three CE nodes from "jy-site2-cluster" are clear with "0 Failed Conditions" (Green):

We can click on a CE node UID to look further into the BGP status of the selected CE node with all of its BGP peers.
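What each per-node view should show follows directly from the peer mesh in the topology: three CE nodes times two border leaf switches gives six eBGP sessions, two per CE node. The sketch below models that mesh as plain Python data. It also shows that losing one leaf still leaves every CE node with one peer, and it derives the ECMP fan-out the routing tables should report: two next-hops per CE node for 172.18.188.0/24 and three per leaf for the VIP. The ECMP part assumes every peer advertises the prefix with equal attributes and multipath is enabled, which is what the example output suggests. The "leaf-1"/"leaf-2" labels are illustrative names, not fabric node names.

```python
from itertools import product

# Addresses from the example topology; "leaf-1"/"leaf-2" are illustrative labels.
CE_NODES = {
    "Master-0": "172.18.128.6",
    "Master-1": "172.18.128.10",
    "Master-2": "172.18.128.14",
}
BORDER_LEAVES = {"leaf-1": "172.18.128.11", "leaf-2": "172.18.128.12"}


def ebgp_sessions(nodes, leaves):
    """Full mesh: one (node, leaf) eBGP session per CE node and border leaf."""
    return list(product(nodes, leaves))


def peers_per_node(sessions, leaves):
    """Map each CE node to its leaf peer IPs (also its ECMP next-hops for 172.18.188.0/24)."""
    view = {}
    for node, leaf in sessions:
        view.setdefault(node, []).append(leaves[leaf])
    return view


def peers_after_failure(sessions, leaves, failed_leaf):
    """Peer IPs left on each CE node when one border leaf goes down."""
    remaining = [(n, l) for n, l in sessions if l != failed_leaf]
    return peers_per_node(remaining, leaves)


def vip_next_hops_per_leaf(sessions, nodes):
    """Next-hops each leaf should install for the VIP, assuming equal-attribute
    advertisements from every CE node and multipath enabled (ECMP)."""
    view = {}
    for node, leaf in sessions:
        view.setdefault(leaf, []).append(nodes[node])
    return view


sessions = ebgp_sessions(CE_NODES, BORDER_LEAVES)
print(len(sessions))                                         # 6 peers in total
print(peers_per_node(sessions, BORDER_LEAVES)["Master-2"])   # two peers per node
print(peers_after_failure(sessions, BORDER_LEAVES, "leaf-1"))
print(vip_next_hops_per_leaf(sessions, CE_NODES)["leaf-1"])  # three VIP next-hops
```

Modelling the mesh explicitly makes it easy to compare against what the Console reports, node by node.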
Here, we clicked on the UID of CE node Master-2 (172.18.128.14), and we can see it has two eBGP peers, 172.18.128.11 (ACI border leaf switch 1) and 172.18.128.12 (ACI border leaf switch 2), and both of them are Up:

Here is the BGP status from the other two CE nodes, Master-0 (172.18.128.6) and Master-1 (172.18.128.10):

For reference, here is an example of a CE node with "Failed Conditions" (Red) because one of its BGP peers is down:

Option 2

Go to "Multi-Cloud Network Connect" -> "Overview" -> "Sites" -> "Tools" -> "Show BGP peers" to bring up the BGP peer status from all CE nodes of the selected site. Here, we can see the same BGP status for CE node Master-2 (172.18.128.14), which has two eBGP peers, 172.18.128.11 (ACI border leaf switch 1) and 172.18.128.12 (ACI border leaf switch 2), and both of them are Up:

Here is the output for the other two CE nodes, Master-0 (172.18.128.6) and Master-1 (172.18.128.10):

Example reference - ACI BGP peering status:

XC BGP Routes Status

To check the BGP routes, both received and advertised, go to "Multi-Cloud Network Connect" -> "Overview" -> "Sites" -> "Tools" -> "Show BGP routes" for the selected CE site:

In our example, all three CE nodes (Master-0, Master-1 and Master-2) advertised (exported) 10.10.122.122/32 to both of their BGP peers, 172.18.128.11 (ACI border leaf switch 1) and 172.18.128.12 (ACI border leaf switch 2), and received (imported) 172.18.188.0/24 from them:

Now, if we check the ACI fabric, we should see that both 172.18.128.11 (ACI border leaf switch 1) and 172.18.128.12 (ACI border leaf switch 2) advertised 172.18.188.0/24 to all three CE nodes and received 10.10.122.122/32 from all three of them (note "|" for multipath in the output):

XC Routes Status

To view the routing table of a CE node (or all CE nodes at once), select "Show routes":

Based on the BGP routing table in our example (shown earlier), each CE node should have two Equal Cost Multi-Path (ECMP) routes installed in the routing table for 172.18.188.0/24, one with 172.18.128.11 (ACI border leaf switch 1) and one with 172.18.128.12 (ACI border leaf switch 2) as the next-hop, and it does (note "ECMP" for multipath in the output):

Now, if we check the ACI fabric, each ACI border leaf switch should have three ECMP routes installed in the routing table for 10.10.122.122/32, one to each CE node (172.18.128.6, 172.18.128.10 and 172.18.128.14) as the next-hop, and it does:

Validation

We can now securely connect our application in a Hybrid Multi-Cloud environment:

*Note: After F5 XC is deployed, we also use F5 XC DNS as our primary nameserver:

To check the requests on the F5 Distributed Cloud Console, go to "Multi-Cloud Network Connect" -> "Sites" -> "Requests" for the selected CE site:

Summary

An F5 Distributed Cloud Customer Edge (CE) site can be deployed with the Layer Three Attached deployment model in a Cisco ACI environment. Both static routing and BGP are supported in this model and can be configured on the F5 Distributed Cloud Console with just a few clicks. With an F5 Distributed Cloud Customer Edge (CE) site deployment, you can securely connect your applications in a Hybrid Multi-Cloud environment quickly and efficiently.

Next

Check out this video for some examples of Layer Three Attached CE use cases in Cisco ACI:

Related Resources

*On-Demand Webinar* Deploying F5 Distributed Cloud Services in Cisco ACI
F5 Distributed Cloud (XC) Global Applications Load Balancing in Cisco ACI
Deploying F5 Distributed Cloud (XC) Services in Cisco ACI - Layer Two Attached Deployment
Customer Edge Site - Deployment & Routing Options
Cisco ACI L3Out White Paper

Unify Visibility with F5 ACI ServiceCenter in Cisco ACI and F5 BIG-IP Deployments

What is F5 ACI ServiceCenter?

F5 ACI ServiceCenter is an application that runs natively on the Cisco Application Policy Infrastructure Controller (APIC) and gives administrators a unified way to manage both L2-L3 and L4-L7 infrastructure in F5 BIG-IP and Cisco ACI deployments. Once day-0 activities are performed and BIG-IP is deployed within the ACI fabric, F5 ACI ServiceCenter can be used to handle day-1 and day-2 operations. F5 ACI ServiceCenter is well suited for both greenfield and brownfield deployments.

F5 ACI ServiceCenter is a successful and popular integration between F5 BIG-IP and Cisco Application Centric Infrastructure (ACI). The integration is loosely coupled and can be installed or uninstalled at any time without disruption to the APIC or the BIG-IP. F5 ACI ServiceCenter supports a REST API and can be easily integrated into your automation workflow: F5 ACI ServiceCenter Supported REST APIs.

Where can we download F5 ACI ServiceCenter?

F5 ACI ServiceCenter is completely free of charge and is available to download from the Cisco DC App Center. F5 ACI ServiceCenter is fully supported by F5. If you run into any issues or would like to see a new feature or enhancement in a future F5 ACI ServiceCenter release, you can open a support ticket here.

Why should we use F5 ACI ServiceCenter?

F5 ACI ServiceCenter has three main, independent use cases, and you can use all of them or pick whichever ones fit your requirements:

Visibility

F5 ACI ServiceCenter provides enhanced visibility into your F5 BIG-IP and Cisco ACI deployment by correlating BIG-IP and APIC information. For example, you can easily find the correlated APIC endpoint information for a BIG-IP VIP, and you can also determine the APIC Virtual Routing and Forwarding (VRF) to BIG-IP Route Domain (RD) mapping.
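To illustrate why the VRF-to-route-domain mapping matters for correlation, here is a hypothetical sketch, not how F5 ACI ServiceCenter is implemented. It matches a BIG-IP VIP to an APIC endpoint by IP address, using the VIP's route domain (BIG-IP's `ip%rd` address notation) to pick the right VRF when the same IP exists in more than one tenant. The `ApicEndpoint` type, tenant, VRF and EPG names, and the mapping table are all invented for the example.

```python
from dataclasses import dataclass
from typing import Optional, Tuple


@dataclass(frozen=True)
class ApicEndpoint:
    """Hypothetical, simplified view of an endpoint learned by the APIC."""
    ip: str
    tenant: str
    vrf: str
    epg: str


def split_route_domain(address: str) -> Tuple[str, int]:
    """Split BIG-IP 'ip%rd' notation; an address without '%' is in route domain 0."""
    ip, _, rd = address.partition("%")
    return ip, int(rd) if rd else 0


def correlate_vip(vip_address, endpoints, vrf_to_rd) -> Optional[ApicEndpoint]:
    """Return the endpoint matching the VIP's IP inside the VRF mapped to its RD."""
    ip, rd = split_route_domain(vip_address)
    for ep in endpoints:
        if ep.ip == ip and vrf_to_rd.get((ep.tenant, ep.vrf)) == rd:
            return ep
    return None


# Hypothetical data: the same IP exists in two tenants, told apart by route domain.
ENDPOINTS = [
    ApicEndpoint("10.1.1.100", "prod", "vrf-web", "epg-vip"),
    ApicEndpoint("10.1.1.100", "dev", "vrf-dev", "epg-vip"),
]
VRF_TO_RD = {("prod", "vrf-web"): 2, ("dev", "vrf-dev"): 3}

match = correlate_vip("10.1.1.100%2", ENDPOINTS, VRF_TO_RD)
print(match.tenant, match.vrf, match.epg)
```

Keying the mapping by (tenant, VRF) reflects that ACI VRFs are scoped to a tenant, so a bare VRF name may not be unique.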
You can efficiently gather correlated information from both the APIC and the BIG-IP in F5 ACI ServiceCenter without hopping between the BIG-IP and the APIC. You can also view health status, logs, statistics, and more in F5 ACI ServiceCenter.

L2-L3 Network Configuration

After BIG-IP is inserted into the ACI fabric using an APIC service graph, F5 ACI ServiceCenter can extract the service graph VLANs from the APIC and deploy those VLANs on the BIG-IP. This gives you a single source of truth for network configuration between the BIG-IP and the APIC.

L4-L7 Application Services

F5 ACI ServiceCenter leverages the F5 Automation Toolchain for application services:
Advanced mode, which uses AS3 (Application Services 3 Extension)
Basic mode, which uses FAST (F5 Application Services Templates)

F5 ACI ServiceCenter can also dynamically add or remove pool members from a pool on the BIG-IP based on the endpoints discovered by the APIC, which reduces configuration overhead.

Other Features

F5 ACI ServiceCenter can manage multiple BIG-IPs, both physical and virtual. If Link Layer Discovery Protocol (LLDP) is enabled on the interfaces between Cisco ACI and F5 BIG-IP, F5 ACI ServiceCenter can discover the BIG-IP and add it to the device list. F5 ACI ServiceCenter can also categorize each BIG-IP accordingly, for example, as standalone or as part of a high availability (HA) cluster. Starting with version 2.11, F5 ACI ServiceCenter also supports multi-tenant designs. These are just some of the features; to find out more, check out the F5 ACI ServiceCenter User and Deployment Guide.
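The dynamic pool-member feature described under L4-L7 Application Services boils down to a reconciliation step: compare what the pool holds with what the APIC currently reports, then add the missing members and remove the stale ones. The sketch below shows only that diff in plain Python. It is an assumption-laden illustration, not F5 ACI ServiceCenter's actual logic, and it does not talk to a BIG-IP. The function names, the `ip:port` member format, and the sample addresses are hypothetical.

```python
def desired_members(endpoint_ips, port):
    """Pool members ("ip:port") the pool should contain for the discovered endpoints."""
    return {f"{ip}:{port}" for ip in endpoint_ips}


def reconcile_pool(current_members, endpoint_ips, port):
    """Return (to_add, to_remove) so the pool mirrors the APIC-discovered endpoints.

    Members that are already correct appear in neither list and are left alone.
    """
    wanted = desired_members(endpoint_ips, port)
    current = set(current_members)
    return sorted(wanted - current), sorted(current - wanted)


# Hypothetical state: one endpoint left the EPG, another one joined.
current_pool = ["10.1.2.10:80", "10.1.2.11:80"]
discovered = ["10.1.2.11", "10.1.2.12"]

to_add, to_remove = reconcile_pool(current_pool, discovered, 80)
print("add:", to_add)        # add: ['10.1.2.12:80']
print("remove:", to_remove)  # remove: ['10.1.2.10:80']
```

Computing a diff, rather than rewriting the whole member list, avoids touching members that are already correct.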
F5 ACI ServiceCenter Resources

Webinar: Unify Your Deployment for Visibility with Cisco and the F5 ACI ServiceCenter
Learn: F5 DevCentral YouTube Videos: F5 ACI ServiceCenter Playlist; Cisco Learning Video: Configuring F5 BIG-IP from APIC using F5 ACI ServiceCenter; Cisco ACI and F5 BIG-IP Design Guide White Paper
Hands-on: F5 ACI ServiceCenter Interactive Demo; Cisco dCloud Lab - Cisco ACI with F5 ServiceCenter Lab v3
Get Started: Download F5 ACI ServiceCenter; F5 ACI ServiceCenter User and Deployment Guide

F5 iWorkflow and Cisco ACI: True application centric approach in application deployment (End Of Life)

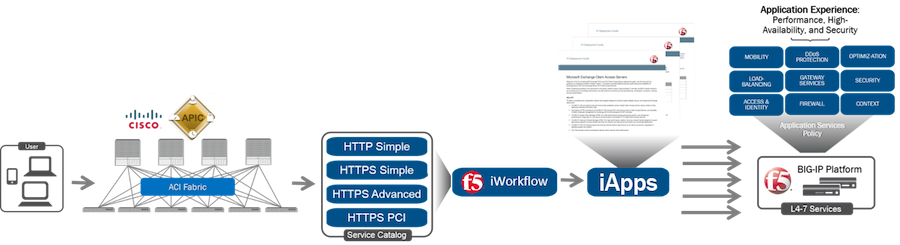

The F5 and Cisco APIC integration based on the device package and iWorkflow is End Of Life. The latest integration is based on the Cisco AppCenter app named ‘F5 ACI ServiceCenter’. Visit https://f5.com/cisco for updated information on the integration.

On June 15th, 2016, F5 released iWorkflow version 2.0, a virtual appliance platform designed to deploy applications with greater agility and consistency. The F5 iWorkflow Cisco APIC cloud connector provides a conduit that allows APIC to deploy F5 iApps on BIG-IP. By leveraging iWorkflow, administrators can customize application templates and expose them to Cisco APIC through the iWorkflow dynamic device package. F5 iWorkflow also supports the Cisco APIC Chassis and Device Manager features, so administrators can now build Cisco ACI L4-L7 devices using a pair of F5 BIG-IP vCMP guests in HA with an iWorkflow HA cluster.

The following two-part video demo shows:
(1) How to deploy an iApps virtual server on BIG-IP through APIC and iWorkflow
(2) How to build Cisco ACI L4-L7 devices using an F5 vCMP guest HA pair and an iWorkflow HA cluster

The F5 iWorkflow, BIG-IP and Cisco APIC software compatibility matrix can be found at: https://support.f5.com/kb/en-us/solutions/public/k/11/sol11198324.html
Check out the iWorkflow DevCentral page for more iWorkflow information: https://devcentral.f5.com/s/wiki/iworkflow.homepage.ashx
You can download iWorkflow from https://downloads.f5.com

Harnessing the Full Power of F5 BIG-IP in Cisco ACI using BIG-IQ [End of Life]

The F5 and Cisco APIC integration based on the device package and iWorkflow is End Of Life. The latest integration is based on the Cisco AppCenter app named ‘F5 ACI ServiceCenter’. Visit https://f5.com/cisco for updated information on the integration.

Extending F5’s integration with Cisco ACI, this demo shows how F5 iApps are used to expose application services into Cisco APIC via F5’s BIG-IQ management platform. It is a true application-centric approach, leveraging iApps to configure almost anything (iRules, profiles, etc.) on the BIG-IP platform based on application requirements.