LTM

L2 Deployment of BIG-IP with Gigamon

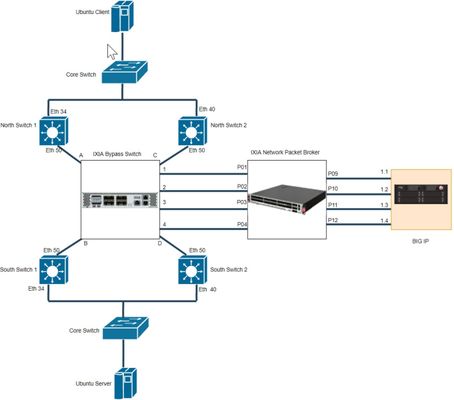

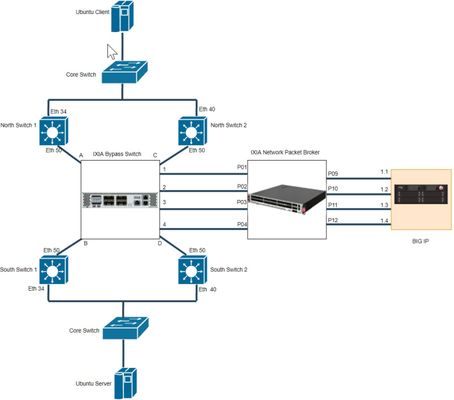

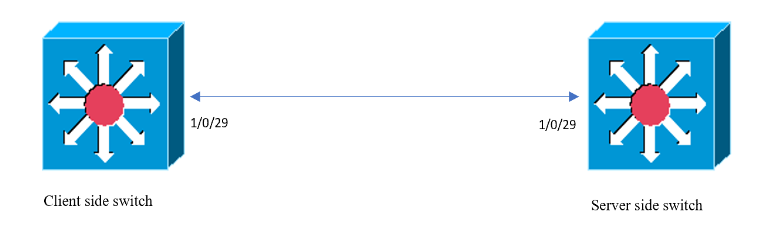

Introduction

This article is part of a series on deploying BIG-IPs with bypass switches and network packet brokers. These devices allow for the transparent integration of network security tools with little to no network redesign and configuration change. For more information about bypass switch devices, refer to https://en.wikipedia.org/wiki/Bypass_switch; for network packet brokers, refer to https://www.ixiacom.com/company/blog/network-packet-brokers-abcs-network-visibility and https://www.gigamon.com/campaigns/next-generation-network-packet-broker.html.

The article series introduces network designs to forward traffic to the inline tools at layer 2 (L2). F5’s BIG-IP hardware appliances can be inserted in L2 networks. This can be achieved either with Virtual Wire (vWire) or by bridging two virtual LANs using a VLAN group.

This document covers the design and implementation of the Gigamon Bypass Switch / Network Packet Broker in conjunction with the BIG-IP i5800 appliance and Virtual Wire (vWire). For an architecture overview of the bypass switch and network packet broker, refer to https://devcentral.f5.com/s/articles/L2-Deployment-of-vCMP-guest-with-Ixia-network-packet-broker?tab=series&page=1. Gigamon provides an internal bypass switch within the network packet broker device, whereas Ixia uses an external bypass switch.

Network Topology

The diagrams below represent the actual lab network and show the deployment of BIG-IP with Gigamon.
Figure 1 - Topology before deployment of Gigamon and BIG-IP

Figure 2 - Topology after deployment of Gigamon and BIG-IP

Figure 3 - Connection between Gigamon and BIG-IP

Hardware Specification

The hardware used in this article:

- BIG-IP i5800
- GigaVUE-HC1
- Arista DCS-7010T-48 (all four switches)

Note: All interfaces/ports run at 1G speed.

Software Specification

The software used in this article:

- BIG-IP 16.1.0
- GigaVUE-OS 5.7.01
- Arista 4.21.3F (North Switches)
- Arista 4.19.2F (South Switches)

Gigamon Configuration

In this lab, the Gigamon is configured with two types of ports: Inline Network and Inline Tool.

Steps Summary

- Step 1 : Configure Port Type
- Step 2 : Configure Inline Network Bypass Pair
- Step 3 : Configure Inline Network Group (if applicable)
- Step 4 : Configure Inline Tool Pair
- Step 5 : Configure Inline Tool Group (if applicable)
- Step 6 : Configure Inline Traffic Flow Maps

Step 1 : Configure Port Type

The first step is to configure the ports. Figure 2 shows all the ports that are connected between the switches and the Gigamon. Ports that are connected to a switch should be configured as Inline Network ports. As per Figure 2, the Inline Network ports are: 1/1/x1, 1/1/x2, 1/1/x3, 1/1/x4, 1/1/x5, 1/1/x6, 1/1/x7, 1/1/x8

Figure 3 shows all the ports that are connected between the BIG-IP and the Gigamon. Ports that are connected to the BIG-IP should be configured as Inline Tool ports.
As per Figure 3, the Inline Tool ports are: 1/1/x9, 1/1/x10, 1/1/x11, 1/1/x12, 1/1/g1, 1/1/g2, 1/1/g3, 1/1/g4

To configure the port type, do the following:

- Log into the GigaVUE-HC1 GUI
- Select Ports -> go to the specific port and set Port Type to Inline Network or Inline Tool

Figure 4 - GUI configuration of Port Types

Equivalent commands for configuring an Inline Network port and its other port settings:

    port 1/1/x1 type inline-net
    port 1/1/x1 alias N-SW1-36
    port 1/1/x1 params admin enable autoneg enable

Equivalent commands for configuring an Inline Tool port and its other port settings:

    port 1/1/x9 type inline-tool
    port 1/1/x9 alias BIGIP-1.1
    port 1/1/x9 params admin enable autoneg enable

Step 2 : Configure Inline Network Bypass Pair

Figure 1 shows direct connections between the switches. An inline network bypass pair ensures the same connections through the Gigamon. An inline network is an arrangement of two ports of the inline-network type. The arrangement facilitates access to a bidirectional link between two networks (two far-end network devices) that need to be linked through an inline tool.

As per Figure 2, the Inline Network bypass pairs are:

- Inline Network bypass pair 1 : 1/1/x1 -> 1/1/x2
- Inline Network bypass pair 2 : 1/1/x3 -> 1/1/x4
- Inline Network bypass pair 3 : 1/1/x5 -> 1/1/x6
- Inline Network bypass pair 4 : 1/1/x7 -> 1/1/x8

To configure the inline network bypass pair, do the following:

- Log into the GigaVUE-HC1 GUI
- Select Inline Bypass -> Inline Networks

Figure 5 - Example GUI configuration of Inline Network Bypass Pair

Equivalent commands for configuring an Inline Network Bypass Pair:

    inline-network alias Bypass1
    pair net-a 1/1/x1 and net-b 1/1/x2
    physical-bypass disable
    traffic-path to-inline-tool

Step 3 : Configure Inline Network Group

An inline network group is an arrangement of multiple inline networks that share the same inline tool.
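The four bypass pairs from Step 2 and the group that collects them follow one fixed command pattern, so the CLI lines can be generated instead of typed by hand. The sketch below is only an illustration: the command keywords are copied from the equivalent commands in this article, while the function names, the one-sub-command-per-line layout, and the assumption that aliases Bypass1 to Bypass4 map to the pairs in the order listed are hypothetical and not confirmed by the source.

```python
# Inline network bypass pairs from Step 2, as (net-a port, net-b port).
BYPASS_PAIRS = [
    ("1/1/x1", "1/1/x2"),
    ("1/1/x3", "1/1/x4"),
    ("1/1/x5", "1/1/x6"),
    ("1/1/x7", "1/1/x8"),
]


def inline_network_commands(alias, net_a, net_b):
    """Return the CLI lines for one inline network bypass pair.

    The keywords mirror the Bypass1 example in the article; placing each
    sub-command on its own line is an assumption about the CLI layout.
    """
    return [
        f"inline-network alias {alias}",
        f"pair net-a {net_a} and net-b {net_b}",
        "physical-bypass disable",
        "traffic-path to-inline-tool",
    ]


def build_config(pairs, group_alias="Bypassgroup"):
    """Render every bypass pair, then the group that lists all of them."""
    lines = []
    aliases = []
    for index, (net_a, net_b) in enumerate(pairs, start=1):
        alias = f"Bypass{index}"  # assumed naming: Bypass1 .. BypassN
        aliases.append(alias)
        lines.extend(inline_network_commands(alias, net_a, net_b))
    lines.append(f"inline-network-group alias {group_alias}")
    lines.append("network-list " + ",".join(aliases))
    return lines


if __name__ == "__main__":
    print("\n".join(build_config(BYPASS_PAIRS)))
```

Review the generated lines against the GUI configuration before pasting them into a device; the sketch does not validate port names or existing aliases.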
To configure the inline network bypass group, do the following:

- Log into the GigaVUE-HC1 GUI
- Select Inline Bypass -> Inline Network Groups

Figure 6 - Example GUI configuration of Inline Network Bypass Group

Equivalent commands for configuring an Inline Network Bypass Group:

    inline-network-group alias Bypassgroup
    network-list Bypass1,Bypass2,Bypass3,Bypass4

Step 4 : Configure Inline Tool Pair

Figure 3 shows the connections between the BIG-IP and the Gigamon, which are made in pairs. An inline tool consists of inline tool ports, always in pairs, running at the same speed, on the same medium.

As per Figure 3, the Inline Tool pairs are:

- Inline Tool pair 1 : 1/1/x9 -> 1/1/x10
- Inline Tool pair 2 : 1/1/x11 -> 1/1/x12
- Inline Tool pair 3 : 1/1/g1 -> 1/1/g2
- Inline Tool pair 4 : 1/1/g3 -> 1/1/g4

To configure the inline tool pair, do the following:

- Log into the GigaVUE-HC1 GUI
- Select Inline Bypass -> Inline Tools

Figure 7 - Example GUI configuration of Inline Tool Pair

Equivalent commands for configuring an Inline Tool pair:

    inline-tool alias BIGIP1
    pair tool-a 1/1/x9 and tool-b 1/1/x10
    enable
    shared true

Step 5 : Configure Inline Tool Group (if applicable)

An inline tool group is an arrangement of multiple inline tools across which traffic is distributed based on hardware-calculated hash values. For example, if one tool goes down, traffic is redistributed to the other tools in the group using hashing.

To configure the inline tool group, do the following:

- Log into the GigaVUE-HC1 GUI
- Select Inline Bypass -> Inline Tool Groups

Figure 8 - Example GUI configuration of Inline Tool Group

Equivalent commands for configuring an Inline Tool Group:

    inline-tool-group alias BIGIPgroup
    tool-list BIGIP1,BIGIP2,BIGIP3,BIGIP4
    enable

Step 6 : Configure Inline Traffic Flow Maps

Flow mapping takes traffic from a network TAP or a SPAN/mirror port and sends it through a set of user-defined map rules to the tools and applications that secure, monitor and analyze IT infrastructure.
The steps below are the high-level process for configuring traffic to flow from the inline network links in Figure 2 to the inline tool group, which lets you test the deployment of the BIG-IP appliances within the group.

To configure the flow map, do the following:

- Log into the GigaVUE-HC1 GUI
- Select Maps -> New

Figure 9 - Example GUI configuration of Flow Maps

Note: The above configuration allows all traffic from the Inline Network Group to flow through the Inline Tool Group.

Equivalent commands for configuring a PASS ALL Flow Map:

    map-passall alias Map1
    to BIGIPgroup
    from Bypassgroup

Flow maps can also be made specific to certain traffic. For example, LACP traffic can bypass the BIG-IP while all other traffic passes through it. The following commands achieve this:

    map alias inMap
    type inline byRule
    roles replace admin to owner_roles
    comment " "
    rule add pass ethertype 8809
    to bypass
    from Bypassgroup
    exit
    map-scollector alias SCollector
    roles replace admin to owner_roles
    from Bypassgroup
    collector BIGIPgroup
    exit

Note: For more details on Gigamon, refer to https://docs.gigamon.com/pdfs/Content/Shared/5700-doclist.html

BIG-IP Configuration

In this series on BIG-IP and Gigamon deployment, the BIG-IP is configured in L2 mode with Virtual Wire (vWire).

Step Summary

- Step 1 : Configure interfaces to support vWire
- Step 2 : Configure trunk in LACP mode or passthrough mode
- Step 3 : Configure Virtual Wire

Note: The steps mentioned above are specific to the topology in Figure 2.
For more details on Virtual Wire (vWire), refer to https://devcentral.f5.com/s/articles/BIG-IP-vWire-Configuration?tab=series&page=1 and https://devcentral.f5.com/s/articles/vWire-Deployment-Configuration-and-Troubleshooting?tab=series&page=1

Step 1 : Configure interfaces to support vWire

To configure interfaces to support vWire, do the following:

- Log into the BIG-IP GUI
- Select Network -> Interfaces -> Interface List
- Select the specific interface and, in the vWire configuration, select Virtual Wire as the Forwarding Mode

Figure 10 - Example GUI configuration of interface to support vWire

Step 2 : Configure trunk in LACP mode or passthrough mode

To configure a trunk, do the following:

- Log into the BIG-IP GUI
- Select Network -> Trunks
- Click Create to configure a new trunk. Enable LACP for LACP mode and disable LACP for LACP passthrough mode

Figure 11 - Example GUI configuration of Trunk in LACP Mode

Figure 12 - Example GUI configuration of Trunk in LACP Passthrough Mode

As per Figure 2, when the trunk is configured in LACP mode, LACP is established between the BIG-IP and the switches. When it is configured in LACP passthrough mode, LACP is established between the North and South switches.

As per Figures 2 and 3, four trunks are configured as follows:

- Left_Trunk 1 : Interfaces 1.1 and 2.3
- Left_Trunk 2 : Interfaces 1.3 and 2.1
- Right_Trunk 1 : Interfaces 1.2 and 2.4
- Right_Trunk 2 : Interfaces 1.4 and 2.2

The Left_Trunks ensure connectivity between the BIG-IP and the North switches. The Right_Trunks ensure connectivity between the BIG-IP and the South switches.

Note: If LACP passthrough is configured, trunks can be configured for individual interfaces, because the LACP frames are not terminated at the BIG-IP.

Step 3 : Configure Virtual Wire

To configure a Virtual Wire, do the following:

- Log into the BIG-IP GUI
- Select Network -> Virtual Wire
- Click Create to configure the Virtual Wire

Figure 13 - Example GUI configuration of Virtual Wire

The above Virtual Wire configuration works for both tagged and untagged traffic.
The topology in Figures 2 and 3 requires both Virtual Wires to be configured. This configuration works for both LACP mode and LACP passthrough mode. If each interface is configured with its own trunk in a passthrough deployment, then four separate Virtual Wires are configured.

Note: All the scenarios and configurations mentioned here will be covered in upcoming articles in this series.

Conclusion

This deployment ensures transparent integration of network security tools with little to no network redesign and configuration change. The merits of this network deployment are:

- It increases the reliability of the production link
- Inline devices can be upgraded or replaced without loss of the link
- Traffic can be shared between multiple tools
- Specific traffic can be forwarded to customized tools
- Trusted traffic can be bypassed uninspected

BIG-IP L2 Virtual Wire LACP Passthrough Deployment with IXIA Bypass Switch and Network Packet Broker (Single Service Chain - Active / Active)

Introduction

This article is part of a series on deploying BIG-IPs with bypass switches and network packet brokers. These devices allow for the transparent integration of network security tools with little to no network redesign and configuration change. For more information about bypass switch devices, refer to https://en.wikipedia.org/wiki/Bypass_switch; for network packet brokers, refer to https://www.ixiacom.com/company/blog/network-packet-brokers-abcs-network-visibility and https://www.gigamon.com/campaigns/next-generation-network-packet-broker.html.

The article series introduces network designs to forward traffic to the inline tools at layer 2 (L2). F5’s BIG-IP hardware appliances can be inserted in L2 networks. This can be achieved either with Virtual Wire (vWire) or by bridging two virtual LANs using a VLAN group.

This document covers the design and implementation of the IXIA Bypass Switch / Network Packet Broker in conjunction with the BIG-IP i5800 appliance and Virtual Wire (vWire). For an architecture overview of the bypass switch and network packet broker, refer to https://devcentral.f5.com/s/articles/L2-Deployment-of-vCMP-guest-with-Ixia-network-packet-broker?tab=series&page=1.

This article is a continuation of https://devcentral.f5.com/s/articles/BIG-IP-L2-Deployment-with-Bypasss-Network-Packet-Broker-and-LACP?tab=series&page=1, using the latest versions of the BIG-IP and IXIA devices. It also covers various combinations of configurations on the BIG-IP and IXIA devices.

Network Topology

The diagram below represents the actual lab network and shows the deployment of BIG-IP with the IXIA Bypass Switch and Network Packet Broker.
Figure 1 - Deployment of BIG-IP with IXIA Bypass Switch and Network Packet Broker

Please refer to the Lab Overview section in https://devcentral.f5.com/s/articles/BIG-IP-L2-Deployment-with-Bypasss-Network-Packet-Broker-and-LACP?tab=series&page=1 for more insight into the lab topology and connections.

Hardware Specification

The hardware used in this article:

- IXIA iBypass DUO (Bypass Switch)
- IXIA Vision E40 (Network Packet Broker)
- BIG-IP
- Arista DCS-7010T-48 (all four switches)

Software Specification

The software used in this article:

- BIG-IP 16.1.0
- IXIA iBypass DUO 1.4.1
- IXIA Vision E40 5.9.1.8
- Arista 4.21.3F (North Switches)
- Arista 4.19.2F (South Switches)

Switch Configuration

LAG, or link aggregation, is a way of bonding multiple physical links into a combined logical link. MLAG, or multi-chassis link aggregation, extends this capability by allowing a downstream switch or host to connect to two switches configured as an MLAG domain. This provides redundancy by giving the downstream switch or host two uplink paths, as well as full bandwidth utilization, since the MLAG domain appears to be a single switch to Spanning Tree (STP).

The Lab Overview section in https://devcentral.f5.com/s/articles/BIG-IP-L2-Deployment-with-Bypasss-Network-Packet-Broker-and-LACP?tab=series&page=1 shows MLAG configured on both switches. This article focuses on LACP deployment for tagged packets.
For more details on MLAG configuration, refer to https://eos.arista.com/mlag-basic-configuration/#Verify_MLAG_operation

Step Summary

- Step 1 : Configuration of MLAG peering between both the North Switches
- Step 2 : Verify MLAG Peering in North Switches
- Step 3 : Configuration of MLAG Port-Channels in North Switches
- Step 4 : Configuration of MLAG peering between both the South Switches
- Step 5 : Verify MLAG Peering in South Switches
- Step 6 : Configuration of MLAG Port-Channels in South Switches
- Step 7 : Verify Port-Channel Status

Step 1 : Configuration of MLAG peering between both the North Switches

The MLAG configuration of North Switch 1 and North Switch 2 is as follows.

North Switch 1:

Configure Port-Channel

    interface Port-Channel10
       switchport mode trunk
       switchport trunk group m1peer

Configure VLAN

    interface Vlan4094
       ip address 172.16.0.1/30

Configure MLAG

    mlag configuration
       domain-id mlag1
       heartbeat-interval 2500
       local-interface Vlan4094
       peer-address 172.16.0.2
       peer-link Port-Channel10
       reload-delay 150

North Switch 2:

Configure Port-Channel

    interface Port-Channel10
       switchport mode trunk
       switchport trunk group m1peer

Configure VLAN

    interface Vlan4094
       ip address 172.16.0.2/30

Configure MLAG

    mlag configuration
       domain-id mlag1
       heartbeat-interval 2500
       local-interface Vlan4094
       peer-address 172.16.0.1
       peer-link Port-Channel10
       reload-delay 150

Step 2 : Verify MLAG Peering in North Switches

North Switch 1:

    North-1#show mlag
    MLAG Configuration:
    domain-id              : mlag1
    local-interface        : Vlan4094
    peer-address           : 172.16.0.2
    peer-link              : Port-Channel10
    peer-config            : consistent

    MLAG Status:
    state                  : Active
    negotiation status     : Connected
    peer-link status       : Up
    local-int status       : Up
    system-id              : 2a:99:3a:23:94:c7
    dual-primary detection : Disabled

    MLAG Ports:
    Disabled               : 0
    Configured             : 0
    Inactive               : 6
    Active-partial         : 0
    Active-full            : 2

North Switch 2:

    North-2#show mlag
    MLAG Configuration:
    domain-id              : mlag1
    local-interface        : Vlan4094
    peer-address           : 172.16.0.1
    peer-link              : Port-Channel10
    peer-config            : consistent

    MLAG Status:
    state                  : Active
    negotiation status     : Connected
    peer-link status       : Up
    local-int status       : Up
    system-id              : 2a:99:3a:23:94:c7
    dual-primary detection : Disabled

    MLAG Ports:
    Disabled               : 0
    Configured             : 0
    Inactive               : 6
    Active-partial         : 0
    Active-full            : 2

Step 3 : Configuration of MLAG Port-Channels in North Switches

North Switch 1:

    interface Port-Channel513
       switchport trunk allowed vlan 513
       switchport mode trunk
       mlag 513
    interface Ethernet50
       channel-group 513 mode active

North Switch 2:

    interface Port-Channel513
       switchport trunk allowed vlan 513
       switchport mode trunk
       mlag 513
    interface Ethernet50
       channel-group 513 mode active

Step 4 : Configuration of MLAG peering between both the South Switches

The MLAG configuration of South Switch 1 and South Switch 2 is as follows.

South Switch 1:

Configure Port-Channel

    interface Port-Channel10
       switchport mode trunk
       switchport trunk group m1peer

Configure VLAN

    interface Vlan4094
       ip address 172.16.1.1/30

Configure MLAG

    mlag configuration
       domain-id mlag1
       heartbeat-interval 2500
       local-interface Vlan4094
       peer-address 172.16.1.2
       peer-link Port-Channel10
       reload-delay 150

South Switch 2:

Configure Port-Channel

    interface Port-Channel10
       switchport mode trunk
       switchport trunk group m1peer

Configure VLAN

    interface Vlan4094
       ip address 172.16.1.2/30

Configure MLAG

    mlag configuration
       domain-id mlag1
       heartbeat-interval 2500
       local-interface Vlan4094
       peer-address 172.16.1.1
       peer-link Port-Channel10
       reload-delay 150

Step 5 : Verify MLAG Peering in South Switches

South Switch 1:

    South-1#show mlag
    MLAG Configuration:
    domain-id          : mlag1
    local-interface    : Vlan4094
    peer-address       : 172.16.1.2
    peer-link          : Port-Channel10
    peer-config        : consistent

    MLAG Status:
    state              : Active
    negotiation status : Connected
    peer-link status   : Up
    local-int status   : Up
    system-id          : 2a:99:3a:48:78:d7

    MLAG Ports:
    Disabled           : 0
    Configured         : 0
    Inactive           : 6
    Active-partial     : 0
    Active-full        : 2

South Switch 2:

    South-2#show mlag
    MLAG Configuration:
    domain-id          : mlag1
    local-interface    : Vlan4094
    peer-address       : 172.16.1.1
    peer-link          : Port-Channel10
    peer-config        : consistent

    MLAG Status:
    state              : Active
    negotiation status : Connected
    peer-link status   : Up
    local-int status   : Up
    system-id          : 2a:99:3a:48:78:d7

    MLAG Ports:
    Disabled           : 0
    Configured         : 0
    Inactive           : 6
    Active-partial     : 0
    Active-full        : 2

Step 6 : Configuration of MLAG Port-Channels in South Switches

South Switch 1:

    interface Port-Channel513
       switchport trunk allowed vlan 513
       switchport mode trunk
       mlag 513
    interface Ethernet50
       channel-group 513 mode active

South Switch 2:

    interface Port-Channel513
       switchport trunk allowed vlan 513
       switchport mode trunk
       mlag 513
    interface Ethernet50
       channel-group 513 mode active

The LACP modes are as follows:

- On
- Active
- Passive

An LACP connection is established only for the following configurations:

- Active in both the North and South switches
- Active in the North or South switch and Passive in the other switch
- On in both the North and South switches

Note: In this case, all the interfaces of both the North and South switches are configured with LACP mode Active.
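The three mode combinations listed above can be captured in a small check. This is a minimal sketch of the rule exactly as the article states it, not of any switch's internal logic; the function name and the lowercase mode strings are hypothetical.

```python
VALID_MODES = {"on", "active", "passive"}


def lacp_link_forms(mode_a, mode_b):
    """Return True if the two configured modes allow the bundle to form.

    Encodes the rule from the article: active/active, active/passive and
    on/on succeed; every other combination does not.
    """
    a, b = mode_a.lower(), mode_b.lower()
    if a not in VALID_MODES or b not in VALID_MODES:
        raise ValueError(f"unknown LACP mode: {mode_a!r} / {mode_b!r}")
    if a == "on" or b == "on":
        # Static "on" only pairs with another static "on".
        return a == b
    # At least one side must actively send LACP frames.
    return "active" in (a, b)
```

For the lab described here, both sides are Active, so `lacp_link_forms("active", "active")` evaluates to `True`; a Passive/Passive pairing would not, because neither side initiates negotiation.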
Step 7 : Verify Port-Channel Status

North Switch 1:

    North-1#show mlag interfaces detail
                                             local/remote
    mlag   state         local   remote   oper    config    last change            changes
    ----   -----------   -----   ------   -----   -------   --------------------   -------
    513    active-full   Po513   Po513    up/up   ena/ena   4 days, 0:34:28 ago    198

North Switch 2:

    North-2#show mlag interfaces detail
                                             local/remote
    mlag   state         local   remote   oper    config    last change            changes
    ----   -----------   -----   ------   -----   -------   --------------------   -------
    513    active-full   Po513   Po513    up/up   ena/ena   4 days, 0:35:58 ago    198

South Switch 1:

    South-1#show mlag interfaces detail
                                             local/remote
    mlag   state         local   remote   oper    config    last change            changes
    ----   -----------   -----   ------   -----   -------   --------------------   -------
    513    active-full   Po513   Po513    up/up   ena/ena   4 days, 0:36:04 ago    190

South Switch 2:

    South-2#show mlag interfaces detail
                                             local/remote
    mlag   state         local   remote   oper    config    last change            changes
    ----   -----------   -----   ------   -----   -------   --------------------   -------
    513    active-full   Po513   Po513    up/up   ena/ena   4 days, 0:36:02 ago    192

Ixia iBypass Duo Configuration

For detailed insight, refer to the IXIA iBypass Duo Configuration section in https://devcentral.f5.com/s/articles/L2-Deployment-of-vCMP-guest-with-Ixia-network-packet-broker?page=1

Figure 2 - Configuration of iBypass Duo (Bypass Switch)

Heartbeat Configuration

Heartbeats are configured on both bypass switches to monitor the tools in their primary and secondary paths. If a tool failure is detected, the bypass switch forwards traffic to the secondary path. The heartbeat can be configured using multiple protocols; here Bypass Switch 1 uses DNS and Bypass Switch 2 uses IPX for the heartbeat.
Figure 3 - Heartbeat Configuration of Bypass Switch 1 ( DNS Heartbeat )

In this infrastructure, the VLAN ID is 513, which is represented in hex as 0201.

Figure 4 - VLAN Representation in Heartbeat

Figure 5 - Heartbeat Configuration of Bypass Switch 1 ( B Side )

Figure 6 - Heartbeat Configuration of Bypass Switch 2 ( IPX Heartbeat )

Figure 7 - Heartbeat Configuration of Bypass Switch 2 ( B Side )

IXIA Vision E40 Configuration

Create the following resources with the information provided:

- Bypass Port Pairs
- Inline Tool Pair
- Service Chains

Figure 8 - Configuration of Vision E40 ( NPB )

This article focuses on deploying the Network Packet Broker with a single service chain, whereas the previous article was based on two service chains.

Figure 9 - Configuration of Tool Resources

In the single Tool Resource, two Inline Tool Pairs are configured, which allows both Bypass Port Pairs to be configured with a single Service Chain.

Figure 10 - Configuration of VLAN Translation

From the switch configuration, the source VLAN is 513, and it is translated to 2001 and 2002 for Bypass 1 and Bypass 2 respectively. For more insight into VLAN translation, refer to https://devcentral.f5.com/s/articles/L2-Deployment-of-vCMP-guest-with-Ixia-network-packet-broker?page=1

For tagged packets, VLAN translation should be enabled. LACP frames are untagged, so they should be bypassed and forwarded to the other port-channel. In this case LACP traffic does not reach the BIG-IP; instead it is forwarded directly from the NPB to the other pair of switches.

LACP bypass Configuration

The network packet broker is configured to forward (or bypass) the LACP frames directly from the north to the south switch and vice versa. LACP frames bear the ethertype 8809 (in hex). This filter is configured during the Bypass Port Pair configuration.

Note: There are other methods to configure this filter, using service chains and filters, but this is the simplest for this deployment.
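To make the two hex values in this section concrete, the sketch below shows how a frame's ethertype field identifies LACP (8809 hex) and how VLAN ID 513 becomes 0201 in hex. It is an illustration only and does not reflect how the IXIA devices implement the filter; the function names and the "bypass"/"tool" labels are hypothetical.

```python
import struct

LACP_ETHERTYPE = 0x8809  # ethertype carried by LACP frames, per the article


def classify_frame(frame: bytes) -> str:
    """Return 'bypass' for an untagged LACP frame, otherwise 'tool'.

    Bytes 12-13 of an Ethernet frame hold the ethertype (or the 802.1Q
    tag identifier 0x8100 when the frame is VLAN tagged).
    """
    if len(frame) < 14:
        raise ValueError("frame is shorter than an Ethernet header")
    (ethertype,) = struct.unpack("!H", frame[12:14])
    return "bypass" if ethertype == LACP_ETHERTYPE else "tool"


def vlan_id_hex(vlan_id: int) -> str:
    """Format a VLAN ID as the four-digit hex string used in the heartbeat."""
    if not 0 <= vlan_id <= 4095:
        raise ValueError("VLAN ID must be between 0 and 4095")
    return f"{vlan_id:04x}"
```

Here `vlan_id_hex(513)` gives `"0201"`, matching the value shown in the heartbeat configuration, and a frame whose bytes 12 and 13 are `88 09` is classified as `"bypass"`.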
Figure 11 - Configuration to redirect LACP

BIG-IP Configuration

Step Summary

- Step 1 : Configure interfaces to support vWire
- Step 2 : Configure trunk in passthrough mode
- Step 3 : Configure Virtual Wire

Note: The steps mentioned above are specific to the topology in Figure 2. For more details on Virtual Wire (vWire), refer to https://devcentral.f5.com/s/articles/BIG-IP-vWire-Configuration?tab=series&page=1 and https://devcentral.f5.com/s/articles/vWire-Deployment-Configuration-and-Troubleshooting?tab=series&page=1

Step 1 : Configure interfaces to support vWire

To configure interfaces to support vWire, do the following:

- Log into the BIG-IP GUI
- Select Network -> Interfaces -> Interface List
- Select the specific interface and, in the vWire configuration, select Virtual Wire as the Forwarding Mode

Figure 12 - Example GUI configuration of interface to support vWire

Step 2 : Configure trunk in passthrough mode

To configure a trunk, do the following:

- Log into the BIG-IP GUI
- Select Network -> Trunks
- Click Create to configure a new trunk. Disable LACP for LACP passthrough mode

Figure 13 - Configuration of North Trunk in Passthrough Mode

Figure 14 - Configuration of South Trunk in Passthrough Mode

Step 3 : Configure Virtual Wire

To configure a Virtual Wire, do the following:

- Log into the BIG-IP GUI
- Select Network -> Virtual Wire
- Click Create to configure the Virtual Wire

Figure 15 - Configuration of Virtual Wire

Because VLAN 513 is translated into 2001 and 2002, the vWire is configured with explicit tagged VLANs. It is also recommended to have an untagged VLAN in the vWire to allow any untagged traffic.

For LACP passthrough mode, enable the multicast bridging sys db variable as shown below:

    modify sys db l2.virtualwire.multicast.bridging value enable

Note: Make sure the sys db variable is still enabled after a reboot or upgrade. For LACP mode, the multicast bridging sys db variable should be disabled.

Scenarios

Because LACP passthrough mode is configured on the BIG-IP, LACP frames pass through the BIG-IP, and LACP is established between the North and South switches.
ICMP traffic is used to represent network traffic from the north switches to the south switches.

Scenario 1: Traffic flow through BIG-IP with North and South Switches configured in LACP active mode

The configurations above show that all four switches are configured with LACP active mode.

Figure 16 - MLAG after deployment of BIG-IP and IXIA with Switches configured in LACP ACTIVE mode

Figure 16 shows that port-channel 513 is active on both the North and South switches.

Figure 17 - ICMP traffic flow from client to server through BIG-IP

Figure 17 shows that the server is reachable over ICMP from the client through the BIG-IP. This verifies test case 1: LACP is established between the switches and traffic passes through the BIG-IP successfully.

Scenario 2: Active BIG-IP link goes down with link state propagation enabled in BIG-IP

Figure 15 shows Propagate Virtual Wire Link Status enabled on the BIG-IP. Figure 17 shows that interface 1.1 of the BIG-IP is the active incoming interface and interface 1.4 is the active outgoing interface. Disabling BIG-IP interface 1.1 brings the active link down, as shown below.

Figure 18 - BIG-IP interface 1.1 disabled

Figure 19 - Trunk state after BIG-IP interface 1.1 disabled

Figure 19 shows that the trunks are up even though interface 1.1 is down. As per the configuration, North_Trunk has two interfaces, 1.1 and 1.3, and one of them is still up, so the North_Trunk status is active.

Figure 20 - MLAG status with interface 1.1 down and Link State Propagation enabled

Figure 20 shows that port-channel 513 is active on both the North and South switches. This shows that the switches are not aware of the link failure and that it is handled by the IXIA configuration.

Figure 21 - IXIA Bypass Switch after 1.1 interface of BIG-IP goes down

As shown in Figure 8, a single Service Chain is configured, and it goes down only if both Inline Tool Port Pairs are down in the NPB. Bypass is therefore enabled only if the Service Chain goes down in the NPB.
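The rule in the previous paragraph can be written down as a tiny state model. This is a sketch of the behaviour as described in this article, not of IXIA's implementation; the class and function names are hypothetical, and the pair names follow the labels used in the figures.

```python
from dataclasses import dataclass


@dataclass
class InlineToolPair:
    """One Inline Tool Port Pair on the NPB and whether its links are up."""
    name: str
    up: bool


def service_chain_up(pairs):
    """The single Service Chain stays up while at least one pair is up."""
    return any(pair.up for pair in pairs)


def bypass_enabled(pairs):
    """Bypass is enabled only once the Service Chain itself is down."""
    return not service_chain_up(pairs)


# Scenario 2: interface 1.1 is disabled, so only the first pair is down.
scenario_2 = [InlineToolPair("BIG IP1", up=False), InlineToolPair("BIG IP2", up=True)]
```

With `scenario_2`, `service_chain_up` returns `True` and `bypass_enabled` returns `False`, which is why traffic keeps flowing through the remaining BIG-IP path.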
Figure 21 shows that bypass is still not enabled in the IXIA Bypass Switch.

Figure 22 - Service Chain and Inline Tool Port Pair status in IXIA Vision E40 ( NPB )

Figure 22 shows that the Service Chain is still up because BIG IP2 (Inline Tool Port Pair) is up, whereas BIG IP1 is down. Figure 1 shows that P09 of the NPB is connected to interface 1.1 of the BIG-IP, which is down.

Figure 23 - ICMP traffic flow from client to server through BIG-IP

Figure 23 shows that traffic still flows through the BIG-IP even though interface 1.1 of the BIG-IP is down. The active incoming interface is now 1.3 and the active outgoing interface is 1.4. Low-bandwidth traffic is still allowed through the BIG-IP because bypass is not enabled, and IXIA handles the rate limiting.

Scenario 3: When North_Trunk goes down with link state propagation enabled in BIG-IP

Figure 24 - BIG-IP interface 1.1 and 1.3 disabled

Figure 25 - Trunk state after BIG-IP interface 1.1 and 1.3 disabled

Figure 15 shows that Propagate Virtual Wire Link State is enabled, and thus both trunks are down.

Figure 26 - IXIA Bypass Switch after 1.1 and 1.3 interfaces of BIG-IP goes down

Figure 27 - ICMP traffic flow from client to server bypassing BIG-IP

Conclusion

This article covers the BIG-IP L2 Virtual Wire passthrough deployment with IXIA, with IXIA configured using a single Service Chain. The observations from this deployment are as follows:

- VLAN translation in the IXIA NPB converts the real VLAN ID (513) to the translated VLAN IDs (2001 and 2002).
- The BIG-IP receives packets with the translated VLAN IDs (2001 and 2002).
- VLAN translation needs all packets to be tagged; untagged packets are dropped.
- LACP frames are untagged, so a bypass is configured in the NPB for LACP.
- Tool Sharing needs to be enabled to allow untagged packets, which adds an extra tag. This type of configuration and testing will be covered in upcoming articles.
- With a single Service Chain, if any one of the Inline Tool Port Pairs goes down, low-bandwidth traffic is still allowed to pass through the BIG-IP (tool).
- If any Inline Tool link goes down, IXIA decides whether to bypass or rate limit. The switches remain unaware of the change.
- With a single Service Chain, if the Tool Resource is configured with both Inline Tool Port Pairs in Active - Active state, traffic is load balanced and both paths are active at the same time.
- Multiple Service Chains in the IXIA NPB can be used instead of a single Service Chain to remove the rate limiting. This type of configuration and testing will be covered in upcoming articles.
- If the BIG-IP goes down, IXIA enables bypass and ensures there is no packet drop.

Configure the F5 BIG-IP as an Explicit Forward Web Proxy Using LTM

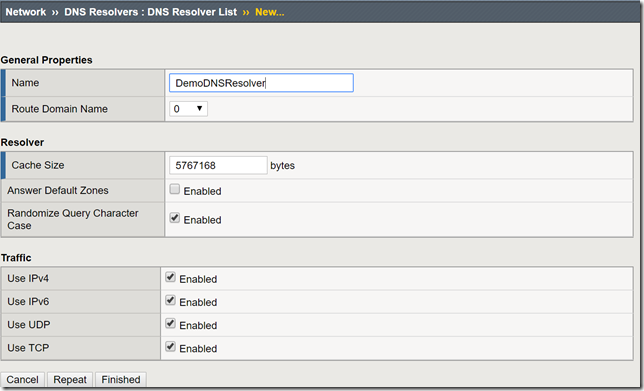

In a previous article, I provided a guide on using F5's Access Policy Manager (APM) and Secure Web Gateway (SWG) to provide forward web proxy services. That guide was for organizations looking to provide secure internet access for their internal users, with URL filtering and protection against both inbound and outbound malware; this guide uses only F5's Local Traffic Manager to allow internal clients external internet access.

This week I was working with F5's very talented professional services team and we were presented with a requirement to allow workstation agents internet access to known secure sites to provide logs and analytics. Of course, this capability can be used to meet a number of other use cases; this was simply a real-world one I wanted to share. So with that, let's get to it!

Creating a DNS Resolver

- Navigate to Network > DNS Resolvers > click Create
- Name: DemoDNSResolver
- Leave all other settings at their defaults and click Finished
- Click the newly created DNS resolver object
- Click Forward Zones
- Click Add
- In this use case, we will be forwarding all requests to this DNS resolver.
- Name: .
- Address: 8.8.8.8 (Note: Please use the correct DNS server for your use case.)
- Service Port: 53
- Click Add and Finished

Creating a Network Tunnel

- Navigate to Network > Tunnels > Tunnel List > click Create
- Name: DemoTunnel
- Profile: tcp-forward
- Leave all other settings at their defaults and click Finished

Create an HTTP Profile

- Navigate to Local Traffic > Profiles > Services > HTTP > click Create
- Name: DemoExplicitHTTP
- Proxy Mode: Explicit
- Parent Profile: http-explicit
- Scroll until you reach the Explicit Proxy settings.
- DNS Resolver: DemoDNSResolver
- Tunnel Name: DemoTunnel
- Leave all other settings at their defaults and click Finished

Create an Explicit Proxy Virtual Server

- Navigate to Local Traffic > Virtual Servers > click Create
- Name: explicit_proxy_vs
- Type: Standard
- Destination Address/Mask: 10.1.20.254 (Note: This must be an IP address the internal clients can reach.)
- Service Port: 8080
- Protocol: TCP (Note: This use case was for TCP traffic directed at known hosts on the internet. If you require other protocols or all protocols, select the correct option for your use case from the drop-down menu.)
- Protocol Profile (Client): f5-tcp-progressive
- Protocol Profile (Server): f5-tcp-wan
- HTTP Profile: DemoExplicitHTTP
- VLAN and Tunnel Traffic: Enabled on: Internal
- Source Address Translation: Auto Map
- Leave all other settings at their defaults and click Finished.

Create a Fast L4 Profile

- Navigate to Local Traffic > Profiles > Protocol > Fast L4 > click Create
- Name: demo_fastl4
- Parent Profile: fastL4
- Enable Loose Initiation and Loose Close as shown in the screenshot below.
- Click Finished

Create a Wild Card Virtual Server

In order to catch and forward all traffic to the BIG-IP's default gateway, we will create a virtual server to accept traffic from the explicit proxy virtual server created in the previous steps.

- Navigate to Local Traffic > Virtual Servers > Virtual Server List > click Create
- Name: wildcard_VS
- Type: Forwarding (IP)
- Source Address: 0.0.0.0/0
- Destination Address: 0.0.0.0/0
- Protocol: *All Protocols
- Service Port: 0 *All Ports
- Protocol Profile: demo_fastl4
- VLAN and Tunnel Traffic: Enabled on...DemoTunnel
- Source Address Translation: Auto Map
- Leave all other settings at their defaults and click Finished.

Testing and Validation

- Navigate to a workstation on your internal network.
- Launch Internet Explorer or the browser of your preference.
- Modify the proxy settings to point to the explicit_proxy_vs created in the previous steps.
- Attempt to access several sites and validate that you are able to reach them.
- Whether successful or unsuccessful, navigate to Local Traffic > Virtual Servers > Virtual Server List > click the Statistics tab.
- Validate that traffic is hitting both of the virtual servers created above.
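The browser check above can also be scripted from a workstation. The following is a minimal sketch using only the Python standard library; it assumes the proxy address 10.1.20.254 and port 8080 from the virtual server settings in this guide, and the helper names and any target URLs you pass in are hypothetical.

```python
import urllib.request


def proxy_url(host: str, port: int) -> str:
    """Build the proxy URL for the explicit proxy virtual server."""
    return f"http://{host}:{port}"


def build_proxy_opener(host: str, port: int) -> urllib.request.OpenerDirector:
    """Return an opener that sends HTTP and HTTPS requests via the proxy."""
    url = proxy_url(host, port)
    handler = urllib.request.ProxyHandler({"http": url, "https": url})
    return urllib.request.build_opener(handler)


def check_site(opener, url: str, timeout: float = 5.0) -> bool:
    """Return True if the site answers through the proxy without an error."""
    try:
        with opener.open(url, timeout=timeout) as response:
            return 200 <= response.status < 400
    except OSError:
        # URLError, HTTPError and socket timeouts all derive from OSError.
        return False
```

For example, build the opener with `build_proxy_opener("10.1.20.254", 8080)` and call `check_site(opener, "http://example.com/")` for each site the agents need; then compare the results with the virtual server statistics.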
If traffic is not hitting both virtual servers, configure them, for troubleshooting purposes only, to accept traffic on All VLANs and Tunnels, and use tools such as curl and tcpdump to trace the traffic. You have now successfully configured your F5 BIG-IP to act as an explicit forward web proxy using LTM only. As stated above, this use case is not meant to fulfill all forward proxy use cases. If URL filtering and malware protection are required, APM and SWG integration should be considered. Until next time!

How to setup DSR in Kubernetes with BIG-IP

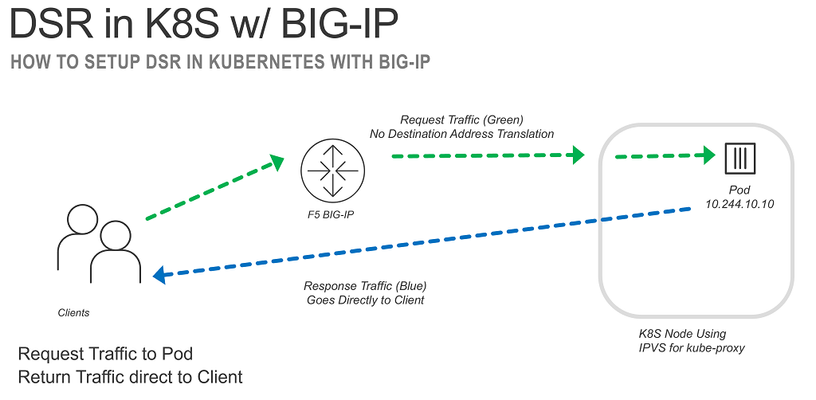

Using Direct Server Return (DSR) in Kubernetes can have benefits when you have workloads that require low latency, high throughput, and/or you want to preserve the source IP address of the connection. The following will guide you through configuring Kubernetes and BIG-IP to use DSR for traffic to a Kubernetes Pod.

Why DSR?
I'm not a huge fan of DSR. It's a weird way of having a client send traffic to a Load Balancer (LB), the LB forwards to a backend server WITHOUT rewriting the destination address, and the backend server responds directly back to the client. It looks WEIRD! But there are some benefits: the backend server sees the original client IP address without the need for the LB to be in the return path of traffic, and the LB only has to handle one side of the connection. This is also the downside, because it's not straightforward to do any type of intelligent LB if you only see half the conversation. It also involves doing weird things on your backend servers: configuring loopback devices so that the server will answer for the traffic when it is received, but not create an IP conflict on the network.

DSR in Kubernetes
The following uses IP Virtual Server (IPVS) to set up DSR in Kubernetes. IPVS has been supported in Kubernetes since 1.11. When IPVS is used, it replaces iptables for the kube-proxy (internal LB). When you provision a LoadBalancer or NodePort service (a method to expose traffic outside the cluster) you can add "externalTrafficPolicy: Local" to enable DSR. This is mentioned in the Kubernetes documentation for GCP and Azure environments.

DSR in BIG-IP
On the BIG-IP, DSR is referred to as "nPath". K11116 discusses the steps involved in getting it set up. The steps create a profile that will disable destination address translation and allow the BIG-IP to not maintain the state of TCP connections (since it will only see half the conversation).
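To make the packet flow described under "Why DSR?" concrete, here is a small Python toy model. It is only an illustrative sketch: the `Packet` class, the function names and the MAC addresses are hypothetical, and it assumes the client, LB and server share one layer 2 segment.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class Packet:
    src_ip: str
    dst_ip: str
    src_mac: str
    dst_mac: str


def lb_forward(pkt: Packet, lb_mac: str, server_mac: str) -> Packet:
    """DSR forwarding: only the layer 2 addresses change, the IPs are untouched."""
    return replace(pkt, src_mac=lb_mac, dst_mac=server_mac)


def server_reply(request: Packet, server_mac: str, client_mac: str) -> Packet:
    """The server answers from the VIP straight to the client, bypassing the LB."""
    return Packet(
        src_ip=request.dst_ip,  # the VIP, which the server holds on a loopback device
        dst_ip=request.src_ip,  # the original client address, never rewritten
        src_mac=server_mac,
        dst_mac=client_mac,
    )


# Illustrative addresses only (the MAC addresses are made up).
CLIENT_MAC, LB_MAC, SERVER_MAC = "02:00:00:00:00:01", "02:00:00:00:00:02", "02:00:00:00:00:03"
request = Packet("10.1.10.100", "10.1.10.10", CLIENT_MAC, LB_MAC)
forwarded = lb_forward(request, LB_MAC, SERVER_MAC)
reply = server_reply(forwarded, SERVER_MAC, CLIENT_MAC)
```

In this model the server still sees 10.1.10.100 as the source, and the reply leaves from the server's MAC address rather than the LB's, which is why the LB only ever sees half of the conversation.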
Putting the Pieces Together
To enable DSR from Kubernetes, the first step is to create a LoadBalancer service where you define the external LB IP address.

    apiVersion: v1
    kind: Service
    metadata:
      name: my-frontend
    spec:
      ports:
      - port: 80
        protocol: TCP
        targetPort: 80
      type: LoadBalancer
      loadBalancerIP: 10.1.10.10
      externalTrafficPolicy: Local
      selector:
        run: my-frontend

After you create the service, you need to update the Service to add the following status (example in YAML format; this needs to be done via the API rather than kubectl):

    status:
      loadBalancer:
        ingress:
        - ip: 10.1.10.10

Once this is done, run "ipvsadm -ln" to verify that you now have an IPVS rule to rewrite the destination address to the Pod IP address.

    IP Virtual Server version 1.2.1 (size=4096)
    Prot LocalAddress:Port Scheduler Flags
      -> RemoteAddress:Port Forward Weight ActiveConn InActConn
    ..
    TCP 10.1.10.10:80 rr
      -> 10.233.90.25:80 Masq 1 0 0
      -> 10.233.90.28:80 Masq 1 0 0
    …

You can verify that DSR is working by connecting to the external IP address and observing that the MAC address that the traffic is sent to is different from the MAC address that the reply is sent from.

    $ sudo tcpdump -i eth1 -nnn -e host 10.1.10.10
    …
    01:30:02.579765 06:ba:49:38:53:f0 > 06:1f:8a:6c:8e:d2, ethertype IPv4 (0x0800), length 143: 10.1.10.100.37664 > 10.1.10.10.80: Flags [P.], seq 1:78, ack 1, win 229, options [nop,nop,TS val 3625903493 ecr 3191715024], length 77: HTTP: GET /txt HTTP/1.1
    01:30:02.582457 06:d2:0a:b1:14:20 > 06:ba:49:38:53:f0, ethertype IPv4 (0x0800), length 66: 10.1.10.10.80 > 10.1.10.100.37664: Flags [.], ack 78, win 227, options [nop,nop,TS val 3191715027 ecr 3625903493], length 0
    01:30:02.584176 06:d2:0a:b1:14:20 > 06:ba:49:38:53:f0, ethertype IPv4 (0x0800), length 692: 10.1.10.10.80 > 10.1.10.100.37664: Flags [P.], seq 1:627, ack 78, win 227, options [nop,nop,TS val 3191715028 ecr 3625903493], length 626: HTTP: HTTP/1.1 200 OK
    ...
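The MAC address comparison described above can be automated. The following Python sketch is an illustration, not part of the original article: it parses `tcpdump -e` text output and reports whether the MAC addresses that requests to the VIP are sent to differ from the MAC addresses that replies from the VIP are sent from. The regular expression and the function name are assumptions that fit the sample capture and may need adjusting for other tcpdump versions or options.

```python
import re

# Matches the link-level header and IPv4 endpoints printed by `tcpdump -e`.
LINE_RE = re.compile(
    r"(?P<src_mac>(?:[0-9a-f]{2}:){5}[0-9a-f]{2}) > "
    r"(?P<dst_mac>(?:[0-9a-f]{2}:){5}[0-9a-f]{2}), .*?length \d+: "
    r"(?P<src_ip>\d+\.\d+\.\d+\.\d+)\.\d+ > (?P<dst_ip>\d+\.\d+\.\d+\.\d+)\.\d+:"
)

# Two lines shortened from the capture shown in the article.
SAMPLE = [
    "01:30:02.579765 06:ba:49:38:53:f0 > 06:1f:8a:6c:8e:d2, ethertype IPv4 (0x0800), "
    "length 143: 10.1.10.100.37664 > 10.1.10.10.80: Flags [P.]",
    "01:30:02.582457 06:d2:0a:b1:14:20 > 06:ba:49:38:53:f0, ethertype IPv4 (0x0800), "
    "length 66: 10.1.10.10.80 > 10.1.10.100.37664: Flags [.]",
]


def dsr_observed(lines, vip: str) -> bool:
    """Return True when requests to the VIP and replies from it use different MACs."""
    to_vip_macs = set()    # MACs that client requests to the VIP are sent to
    from_vip_macs = set()  # MACs that replies from the VIP are sent from
    for line in lines:
        match = LINE_RE.search(line)
        if match is None:
            continue  # not a packet line this sketch understands
        if match["dst_ip"] == vip:
            to_vip_macs.add(match["dst_mac"])
        elif match["src_ip"] == vip:
            from_vip_macs.add(match["src_mac"])
    # Both directions must be present, and they must not share a MAC address.
    return bool(to_vip_macs) and bool(from_vip_macs) and to_vip_macs.isdisjoint(from_vip_macs)
```

For the sample capture, `dsr_observed(SAMPLE, "10.1.10.10")` is true because requests are sent to 06:1f:8a:6c:8e:d2 while replies come from 06:d2:0a:b1:14:20.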
Automate it
Using Container Ingress Services, we can automate this setup with the following AS3 declaration (provided for illustrative purposes only; check the formatting before reusing it).

    kind: ConfigMap
    apiVersion: v1
    metadata:
      name: f5demo-as3-configmap
      namespace: default
      labels:
        f5type: virtual-server
        as3: "true"
    data:
      template: |
        {
          "class": "AS3",
          "action": "deploy",
          "declaration": {
            "class": "ADC",
            "schemaVersion": "3.10.0",
            "id": "DSR Demo",
            "AS3": {
              "class": "Tenant",
              "MyApps": {
                "class": "Application",
                "template": "shared",
                "frontend_pool": {
                  "members": [ { "servicePort": 80, "serverAddresses": [] } ],
                  "monitors": [ "http" ],
                  "class": "Pool"
                },
                "l2dsr_http": {
                  "layer4": "tcp",
                  "pool": "frontend_pool",
                  "persistenceMethods": [],
                  "sourcePortAction": "preserve-strict",
                  "translateServerAddress": false,
                  "translateServerPort": false,
                  "class": "Service_L4",
                  "profileL4": { "use": "fastl4_dsr" },
                  "virtualAddresses": [ "10.1.10.10" ],
                  "virtualPort": 80,
                  "snat": "none"
                },
                "dsrhash": {
                  "hashAlgorithm": "carp",
                  "class": "Persist",
                  "timeout": "indefinite",
                  "persistenceMethod": "source-address"
                },
                "fastl4_dsr": {
                  "looseClose": true,
                  "looseInitialization": true,
                  "resetOnTimeout": false,
                  "class": "L4_Profile"
                }
              }
            }
          }
        }

You can then have the BIG-IP automatically pick up the location of the pods by adding the following labels to the service.

    apiVersion: v1
    kind: Service
    metadata:
      name: my-frontend
      labels:
        run: my-frontend
        cis.f5.com/as3-tenant: AS3
        cis.f5.com/as3-app: MyApps
        cis.f5.com/as3-pool: frontend_pool
    ...

Not so weird?
DSR is a weird way to load balance traffic, but it can have some benefits. For a more exhaustive list of the reasons not to do DSR, we can reach back to 2008 for the following gem from Lori MacVittie. What is old is new again!

HTTP Brute Force Mitigation Playbook: BIG-IP LTM Mitigation Options for HTTP Brute Force Attacks - Chapter 3

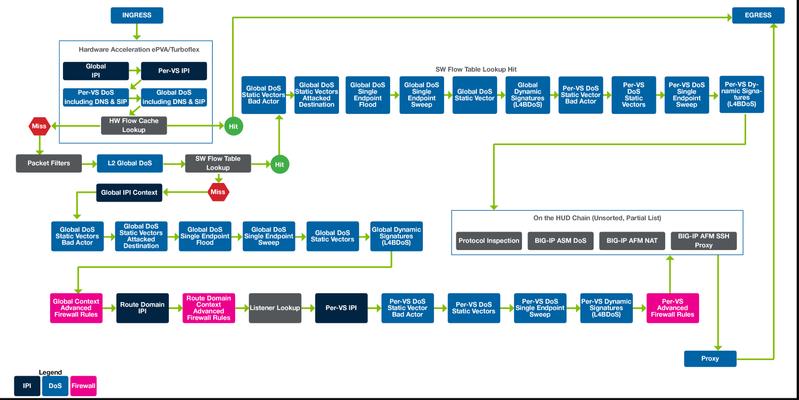

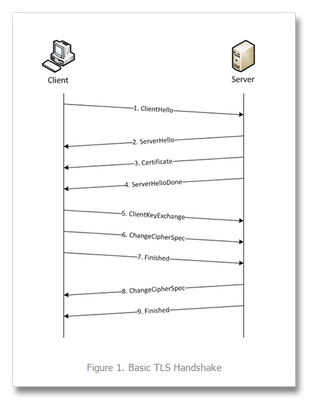

HTTP Brute Force Attacks can be mitigated using BIG-IP LTM features. Mitigation can be a straightforward rejection of traffic based on a specific source IP, network, geolocation or HTTP request property, or it can monitor the number of requests from a certain source or with a unique characteristic and rate limit by dropping/rejecting requests that exceed a defined threshold.

Prerequisites
Managing the BIG-IP configuration requires Administrator access. Ensure access to the Configuration Utility (Web GUI) and SSH is available. These management interfaces will be helpful in configuring, verifying and troubleshooting on the BIG-IP. Having access to the serial console output of the BIG-IP is also helpful. Local Traffic Manager (LTM) and Application Visibility and Reporting (AVR) licenses are required to use the related features.

Prevent traffic from a Source IP or Network
As demonstrated in the data gathering chapter for iRules, LTM Policy and F5 AVR, a specific IP address or network may be sending suspicious and malicious traffic. One of the common ways to limit access to an HTTP Virtual Server is to define either a whitelist or a blacklist of IP addresses. HTTP Brute Force Attacks on a Virtual Server can be mitigated by blocking a suspicious IP address or network. This can be done through iRules, LTM Policy or a network packet filter. Note that a blocked source IP may be a proxy server that proxies requests from internal clients, so blocking it may unintentionally block legitimate clients. Monitor the traffic that is getting blocked and make the necessary adjustments to the related configuration. The diagram below shows the packet processing path on a BIG-IP. Notice that it also references the Advanced Firewall Manager (AFM) packet path.
https://techdocs.f5.com/content/dam/f5/kb/global/solutions/K31591013_images.html/2018-0613%20AFM%20Packet%20Flow.jpg

Mitigation: LTM Packet Filter
On the left side of the BIG-IP packet processing path diagram is the Ingress section. If the packet information is not in the hardware acceleration ePVA (Packet Velocity ASIC) of the BIG-IP, the packet is checked against the packet filter. Thus, after determining that an IP address or a network is suspicious and/or malicious, based on data gathered from LTM Policy/iRules, AVR or external monitoring tools, a packet filter can be created to block these suspected malicious traffic sources.
Packet filtering can be enabled in the Configuration Utility at Network ›› Packet Filters : General
Packet filter rules can be configured at Network ›› Packet Filters : Rules
Sample Packet Filter Configuration:
Packet filter configuration to block a specific IP address with the reject action and have logging enabled
Packet filter configuration to block a network with the reject action
An existing packet filter rule
Packet filter generated logs can be reviewed at System ›› Logs : Packet Filter
This log shows an IP address was rejected by a packet filter rule

Mitigation: LTM Policy
An LTM Policy can be configured to block a specified IP address or the IP addresses listed in an iRule Data Group.
Sample LTM Policy:
LTM policy tmsh output:
Tip: The TMOS shell (tmsh) command 'tmsh load sys config from-terminal merge' can be used to quickly load the configuration.
Sample:
root@(sec8)(cfg-sync Standalone)(Active)(/Common)(tmos)# load sys config from-terminal merge
Enter configuration. Press CTRL-D to submit or CTRL-C to cancel.
LTM policy that blocks a specified IP address:

    ltm policy block_source_ip {
        last-modified 2019-02-20:22:55:25
        requires { http tcp }
        rules {
            block_source_ip {
                actions {
                    0 { shutdown connection }
                    1 { log write facility local0 message "tcl:IP [IP::client_addr] is blocked by LTM Policy" priority info }
                }
                conditions {
                    0 { tcp address matches values { 172.16.7.31 } }
                }
            }
        }
        status published
        strategy first-match
    }

LTM policy that blocks the IP addresses defined in an iRule Data Group:

    root@(sec8)(cfg-sync Standalone)(Active)(/Common)(tmos)# list ltm policy block_source_ip
    ltm policy block_source_ip {
        last-modified 2019-12-01:14:40:57
        requires { http tcp }
        rules {
            block_source_ip {
                actions {
                    0 { shutdown connection }
                    1 { log write facility local0 message "tcl:IP [IP::client_addr] is blocked by LTM Policy" priority info }
                }
                conditions {
                    0 { tcp address matches datagroup malicious_ip_dg }
                }
            }
        }
        status published
        strategy first-match
    }

malicious_ip_dg is an iRule Data Group where the IP address is defined.
Apply the LTM Policy to the Virtual Server that needs to be protected.

Mitigation: iRule to block an IP address
An iRule can block an IP address at different stages of BIG-IP packet processing. The sample iRule blocks the matched IP address during the FLOW_INIT event.
FLOW_INIT definition: This event is triggered (once for TCP and unique UDP/IP flows) after packet filters, but before any AFM and TMM work occurs.
https://clouddocs.f5.com/api/irules/FLOW_INIT.html
Diagram snippet from 2.1.9. iRules HTTPS Events. The FLOW_INIT event happens after packet filter events. If an IP address is identified as malicious, blocking it before further processing saves CPU resources, because iRule processing is resource intensive. Additionally, if the IP address can be blocked with an LTM packet filter or an LTM policy, use that instead of the iRule approach.
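The address matching that the policy condition and the data group lookup perform can be pictured with a short Python sketch. This only illustrates the matching logic, not how the BIG-IP implements it; the function name and the second list entry are hypothetical, and only 172.16.7.31 comes from the sample policy.

```python
import ipaddress


def is_blocked(client_ip: str, blocked_entries) -> bool:
    """Return True if client_ip equals a listed host or falls inside a listed network."""
    addr = ipaddress.ip_address(client_ip)
    return any(
        addr in ipaddress.ip_network(entry, strict=False)
        for entry in blocked_entries
    )


# 172.16.7.31 is the address from the sample policy; 192.0.2.0/24 is a
# made-up documentation network that shows how a whole network is matched.
BLOCKED = ["172.16.7.31", "192.0.2.0/24"]
```

A single host is treated as a /32 network, so `is_blocked("172.16.7.31", BLOCKED)` and `is_blocked("192.0.2.55", BLOCKED)` both match, while `is_blocked("172.16.7.32", BLOCKED)` does not. This is also why blocking a whole network needs care: every client behind it is affected.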
https://f5-agility-labs-irules.readthedocs.io/en/latest/class1/module1/iRuleEventsFlowHTTPS.html

Sample iRule:

    when FLOW_INIT {
        set ipaddr [IP::client_addr]
        if { [class match $ipaddr equals malicious_ip_dg] } {
            # logging can be removed/commented out if not required
            log local0. "Attacker IP [IP::client_addr] blocked"
            drop
        }
    }

malicious_ip_dg is an iRule Data Group where the IP address is defined.
The sample iRule is from K43383890: Blocking IP addresses using the IP geolocation database and iRules. There are more sample iRules in the referenced F5 Knowledge Article.
https://support.f5.com/csp/article/K43383890
Apply the iRule to the Virtual Server that needs to be protected.

Mitigation: Rate Limit based on IP address using iRules
A common scenario is a spike in connections during a suspected brute force attack on a Virtual Server with an HTTP application, where the operator is looking for options to rate limit connections to it. An iRule can rate limit connections based on IP address. It also offers levels of control and additional logic should it be needed. Here is a sample iRule to rate limit IP addresses.

    when RULE_INIT {
        # Default rate to limit requests
        set static::maxRate 15
        # Default rate at which to start logging warnings
        set static::warnRate 12
        # During this many seconds
        set static::timeout 1
    }
    when CLIENT_ACCEPTED {
        # Increment and get the current request count bucket
        set epoch [clock seconds]
        set currentCount [table incr -mustexist "Count_[IP::client_addr]_${epoch}"]
        if { $currentCount eq "" } then {
            # Initialize a new request count bucket
            table set "Count_[IP::client_addr]_${epoch}" 1 indef $static::timeout
            set currentCount 1
        }
        # Actually check for being over limit
        if { $currentCount >= $static::maxRate } then {
            log local0. "ERROR: IP:[IP::client_addr] exceeded ${static::maxRate} requests per second. Rejecting request. Current requests: ${currentCount}."
            event disable all
            drop
        } elseif { $currentCount > $static::warnRate } then {
            log local0. "WARNING: IP:[IP::client_addr] exceeded ${static::warnRate} requests per second. Will reject at ${static::maxRate}. Current requests: ${currentCount}."
        }
        log local0. "IP:[IP::client_addr]: currentCount: ${currentCount}"
    }

Attach the iRule to the Virtual Server that needs to be protected.

HTTP information from sample requests
As described in the previous chapter, "Bad Actor Behavior and Gathering Statistics using BIG-IP LTM Policies and iRules and BIG-IP AVR", some HTTP information is available via AVR statistics, and some can be gathered through an LTM policy or iRules that generate logs when HTTP requests are received on an F5 Virtual Server that has the iRule, the LTM Policy or the HTTP Analytics profile applied. These logs are typically written to /var/log/ltm, as configured by the iRule "log local0." statements, or by default for an LTM policy. In the course of troubleshooting and investigation, a customer or incident analyst may decide which HTTP-related information they consider malicious or undesirable. The following sample iRule and LTM Policy mitigations use HTTP-related elements. The HTTP information typically used from the sample requests is the HTTP User-Agent header or an HTTP parameter. Other HTTP information, such as other HTTP headers, can be used as well.

Mitigation: Prevent a specific HTTP header value
During HTTP Brute Force attacks, the value of the HTTP User-Agent header is often what an incident analyst reviews and uses to block traffic, because the automated bots that launch the attack tend to send a particular User-Agent value.
Sample Rule and LTM Policy to block a specific User-Agent

    root@(asm6)(cfg-sync Standalone)(Active)(/Common)(tmos)# list ltm policy Malicious_User_Agent
    ltm policy Malicious_User_Agent {
        last-modified 2019-12-04:17:30:38
        requires { http }
        rules {
            block_UA {
                actions {
                    0 { shutdown connection }
                    1 { log write facility local0 message "tcl:the user agent [HTTP::header User-Agent] from [IP::client_addr] is blocked" priority info }
                }
                conditions {
                    0 { http-header name User-Agent values { "Mozilla/5.0 (A-malicious-UA)" } }
                }
            }
        }
        status published
        strategy first-match
    }

Logs generated by the LTM Policy in /var/log/ltm:

    Jan 16 13:11:06 sec8 info tmm3[11305]: [/Common/Malicious_User_Agent/block_UA]: the user agent Mozilla/5.0 (A-malicious-UA) from 172.16.10.31 is blocked
    Jan 16 13:11:06 sec8 info tmm5[11305]: [/Common/Malicious_User_Agent/block_UA]: the user agent Mozilla/5.0 (A-malicious-UA) from 172.16.10.31 is blocked
    Jan 16 13:11:06 sec8 info tmm7[11305]: [/Common/Malicious_User_Agent/block_UA]: the user agent Mozilla/5.0 (A-malicious-UA) from 172.16.10.31 is blocked

Mitigation: Rate Limit a HTTP Header with a unique value
During an HTTP Brute Force Attack, the attack traffic may carry an HTTP header with a certain value. If that header value is used repeatedly and the requests appear to be automated, an iRule can monitor the value of the HTTP header and rate limit it. Example:

    when HTTP_REQUEST {
        if { [HTTP::header exists ApplicationSpecificHTTPHeader] } {
            set DEBUG 0
            set REQ_TIMEOUT 60
            set MAX_REQ 3
            ##
            set ASHH_ID [HTTP::header ApplicationSpecificHTTPHeader]
            set requestCnt [table lookup -notouch -subtable myTable $ASHH_ID]
            if { $requestCnt >= $MAX_REQ } {
                set remtime [table timeout -subtable myTable -remaining $ASHH_ID]
                if { $DEBUG > 0 } { log local0. "Dropped! wait for another $remtime seconds" }
                # this could also be changed to "drop" instead of "reject" to be more stealthy
                reject
            } elseif { $requestCnt == "" } {
                table set -subtable myTable [HTTP::header ApplicationSpecificHTTPHeader] 1 $REQ_TIMEOUT
                if { $DEBUG > 0 } { log local0. "Hit 1: Passed!" }
            } elseif { $requestCnt < $MAX_REQ } {
                table incr -notouch -subtable myTable [HTTP::header ApplicationSpecificHTTPHeader]
                if { $DEBUG > 0 } { log local0. "Hit [expr {$requestCnt + 1}]: Passed!" }
            }
        }
    }

In this example iRule, the variable MAX_REQ has a value of 3, which limits requests carrying a given value of the HTTP header ApplicationSpecificHTTPHeader to 3 requests within the REQ_TIMEOUT window of 60 seconds.
iRule logs generated in /var/log/ltm (the log statements only run when DEBUG is set above 0):

    Jan 16 13:04:39 sec8 info tmm3[11305]: Rule /Common/rate-limit-specific-http-header <HTTP_REQUEST>: Hit 1: Passed!
    Jan 16 13:04:39 sec8 info tmm5[11305]: Rule /Common/rate-limit-specific-http-header <HTTP_REQUEST>: Hit 2: Passed!
    Jan 16 13:04:39 sec8 info tmm7[11305]: Rule /Common/rate-limit-specific-http-header <HTTP_REQUEST>: Hit 3: Passed!
    Jan 16 13:04:39 sec8 info tmm6[11305]: Rule /Common/rate-limit-specific-http-header <HTTP_REQUEST>: Dropped! wait for another 60 seconds
    Jan 16 13:04:39 sec8 info tmm[11305]: Rule /Common/rate-limit-specific-http-header <HTTP_REQUEST>: Dropped! wait for another 60 seconds
    Jan 16 13:04:39 sec8 info tmm2[11305]: Rule /Common/rate-limit-specific-http-header <HTTP_REQUEST>: Dropped! wait for another 60 seconds

Sample curl command to test the iRule. Notice the value of the ApplicationSpecificHTTPHeader HTTP header.

    for i in {1..50}; do curl http://172.16.8.86 -H "ApplicationSpecificHTTPHeader: couldbemaliciousvalue"; done

Mitigation: Rate Limit a username parameter from HTTP payload
A common HTTP Brute Force attack scenario involves credentials being tried repeatedly.
In this sample iRule, the username parameter from an HTTP POST request payload is observed for an HTTP login URL. If the username is used multiple times and exceeds the defined maximum number of requests in a defined time frame, the connection is dropped.

    when RULE_INIT {
        # The max requests served within the timing interval per the static::timeout variable
        set static::maxReqs 4
        # Timer interval in seconds within which only static::maxReqs requests are allowed.
        # (i.e: 10 req per 2 sec == 5 req per sec)
        # If this timer expires, it means that the limit was not reached for this interval and
        # the request counting starts over. Making this timeout large increases memory usage.
        # Making it too small negatively affects performance.
        set static::timeout 2
    }
    when HTTP_REQUEST {
        if { ( [string tolower [HTTP::uri]] equals "/wackopicko/users/login.php" ) and ( [HTTP::method] equals "POST" ) } {
            HTTP::collect [HTTP::header Content-Length]
        }
    }
    when HTTP_REQUEST_DATA {
        set username "unknown"
        foreach x [split [string tolower [HTTP::payload]] "&"] {
            # Match the username parameter, as seen in the sample logs below
            if { [string tolower $x] starts_with "username=" } {
                log local0. "login parameters are $x"
                set username [lindex [split $x "="] 1]
                set getcount [table lookup -notouch $username]
                if { $getcount equals "" } {
                    # Record of this session does not exist, starting new record
                    # Request is allowed.
                    table set $username "1" $static::timeout $static::timeout
                } elseif { $getcount < $static::maxReqs } {
                    # Record of this session exists but request is allowed.
                    log local0. "Request Count for $username is $getcount"
                    table incr -notouch $username
                } elseif { $getcount >= $static::maxReqs } {
                    drop
                    log local0. "User $username exceeded login limit current count:$getcount from [IP::client_addr]:[TCP::client_port]"
                } else {
                    #log local0. "User $username attempted login from [IP::client_addr]:[TCP::client_port]"
                }
            }
        }
    }

Logs generated in /var/log/ltm:

    Jan 16 12:34:05 sec8 info tmm7[11305]: Rule /Common/post_request_username <HTTP_REQUEST_DATA>: login parameters are username=!@%23$%25
    Jan 16 12:34:05 sec8 info tmm7[11305]: Rule /Common/post_request_username <HTTP_REQUEST_DATA>: User !@%23$%25 exceeded login limit current count:5 from 172.16.10.31:57128
    Jan 16 12:34:05 sec8 info tmm1[11305]: Rule /Common/post_request_username <HTTP_REQUEST_DATA>: login parameters are username=!@%23$%25
    Jan 16 12:34:05 sec8 info tmm1[11305]: Rule /Common/post_request_username <HTTP_REQUEST_DATA>: User !@%23$%25 exceeded login limit current count:5 from 172.16.10.31:57130

Additional reference: lindex - Retrieve an element from a list
https://www.tcl.tk/man/tcl8.4/TclCmd/lindex.htm

Prevent traffic source based on Behavior

Mitigation: TLS Fingerprint
The referenced DevCentral article, https://devcentral.f5.com/s/articles/tls-fingerprinting-a-method-for-identifying-a-tls-client-without-decrypting-24598, demonstrated that clients using certain TLS fingerprints can be identified. In an HTTP brute force attack, the attacking clients may share a TLS fingerprint that can be observed and later rate limited or dropped. TLS fingerprints can be gathered and used to manually or dynamically prevent malicious and suspicious clients coming from certain source IPs from accessing the iRule-protected Virtual Server. The sample TLS Fingerprint Rate Limiting and TLS Fingerprint proc iRules (see HTTP Brute Force Mitigation: Appendix for the sample iRules and other related configuration) work together to identify, observe and block TLS fingerprints that are considered malicious based on the amount of traffic they send. The TLS Fingerprinting Proc iRule extracts the TLS fingerprint from the Client Hello packet of the incoming client traffic, which is unique for certain client devices.
The TLS Fingerprint Rate Limiting iRule checks a TLS fingerprint if it is an expected TLS fingerprint or is considered malicious or is suspicious. The classification of an expected or malicious TLS fingerprint is done thru LTM rule Data Group. Example: Malicious TLS Fingerprint Data Group ltm data-group internal malicious_fingerprintdb { records { 0301+0303+0076+C030C02CC028C024C014C00A00A3009F006B006A0039003800880087C032C02EC02AC026C00FC005009D003D00350084C02FC02BC027C023C013C00900A2009E0067004000330032009A009900450044C031C02DC029C025C00EC004009C003C002F00960041C012C00800160013C00DC003000A00FF+1+00+000B000A000D000F3374+00190018001600170014001500120013000F00100011+060106020603050105020503040104020403030103020303020102020203+000102 { data curl-bot } } type string } In this example Malicious TLS Fingerprint Data Group, the defined fingerprint may be included manually as decided by a customer/analyst as the TLS fingerprint may have been observed to be sending abnormal amount of traffic during a HTTP brute force event. Expected / Good TLS Fingerprint Data Group ltm data-group external fingerprint_db { external-file-name fingerprint_db type string } System ›› File Management : Data Group File List ›› fingerprint_db Properties Namefingerprint_dbPartition / PathCommonData Group Name fingerprint_db TypeStringKey / Value Pair Separator:= sample TLS signature: signatures:#"0301+0303+0076+C030C02CC028C024C014C00A00A3009F006B006A0039003800880087C032C02EC02AC026C00FC005009D003D00350084C02FC02BC027C023C013C00900A2009E0067004000330032009A009900450044C031C02DC029C025C00EC004009C003C002F00960041C012C00800160013C00DC003000A00FF+1+00+000B000A000D000F3374+00190018001600170014001500120013000F00100011+060106020603050105020503040104020403030103020303020102020203+000102" := "User-Agent: curl-bot", Taking the scenario where a TLS fingerprint is defined in the malicious fingerprint data group, it will be actioned as defined in the TLS fingerprint Rate Limiting iRule. 
If a TLS fingerprint is neither malicious or expected, the TLS fingerprint Rate Limiting iRule will consider it suspicious and be rate limited should certain number of request is exceeded from this particular TLS fingerprint and IP address combination. Here are example logs generated by the TLS Fingerprint Rate Limiting iRule. Monitor the number of request sent from the suspicious TLS fingerprint and IP address combination. from the generated log, review the "currentCount" Dec 16 16:36:58 sec8 info tmm1[11545]: Rule /Common/fingerprintTLS-irule <CLIENT_DATA>: fingerprint:172.16.7.31_0301+0303+0076+C030C02CC028C024C014C00A00A3009F006B006A0039003800880087C032C02EC02AC026C00FC005009D003D00350084C02FC02BC027C023C013C00900A2009E0067004000330032009A009900450044C031C02DC029C025C00EC004009C003C002F00960041C012C00800160013C00DC003000A00FF+1+00+000B000A000D000F3374+00190018001600170014001500120013000F00100011+060106020603050105020503040104020403030103020303020102020203+000102: currentCount: 14 Dec 16 16:36:58 sec8 info tmm7[11545]: Rule /Common/fingerprintTLS-irule <CLIENT_DATA>: fingerprint:172.16.7.31_0301+0303+0076+C030C02CC028C024C014C00A00A3009F006B006A0039003800880087C032C02EC02AC026C00FC005009D003D00350084C02FC02BC027C023C013C00900A2009E0067004000330032009A009900450044C031C02DC029C025C00EC004009C003C002F00960041C012C00800160013C00DC003000A00FF+1+00+000B000A000D000F3374+00190018001600170014001500120013000F00100011+060106020603050105020503040104020403030103020303020102020203+000102: currentCount: 15 The HTTP User-Agent header value is included in the log to have a record of the TLS fingerprint and the HTTP User-Agent sending the suspicious traffic. This can later be used to define the suspicious TLS fingerprint and the HTTP User-Agent as a malicious fingerprint. 
Dec 16 16:36:58 sec8 info tmm1[11545]: Rule /Common/fingerprintTLS-irule <HTTP_REQUEST>: WARNING: suspicious_fingerprint: 172.16.7.31_0301+0303+0076+C030C02CC028C024C014C00A00A3009F006B006A0039003800880087C032C02EC02AC026C00FC005009D003D00350084C02FC02BC027C023C013C00900A2009E0067004000330032009A009900450044C031C02DC029C025C00EC004009C003C002F00960041C012C00800160013C00DC003000A00FF+1+00+000B000A000D000F3374+00190018001600170014001500120013000F00100011+060106020603050105020503040104020403030103020303020102020203+000102: User-Agent:curl/7.47.1 exceeded 12 requests per second. Will reject at 15. Current requests: 14. Dec 16 16:36:58 sec8 info tmm7[11545]: Rule /Common/fingerprintTLS-irule <HTTP_REQUEST>: WARNING: suspicious_fingerprint: 172.16.7.31_0301+0303+0076+C030C02CC028C024C014C00A00A3009F006B006A0039003800880087C032C02EC02AC026C00FC005009D003D00350084C02FC02BC027C023C013C00900A2009E0067004000330032009A009900450044C031C02DC029C025C00EC004009C003C002F00960041C012C00800160013C00DC003000A00FF+1+00+000B000A000D000F3374+00190018001600170014001500120013000F00100011+060106020603050105020503040104020403030103020303020102020203+000102: User-Agent:curl/7.47.1 exceeded 12 requests per second. Will reject at 15. Current requests: 15. The specific TLS fingerprint and IP combination is monitored and as it exceeds the defined request per second threshold in the TLS Fingerprint Rate Limiting iRule, further attempt to initiate a TLS handshake with the protected Virtual Server will fail. The iRule action in this instance is "drop". This will cause the connection to stall on the client side as the BIG-IP will not be sending any further traffic back to the suspicious client. 
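The decision flow described above (classify the fingerprint, then count requests per fingerprint and IP combination per second) can be sketched in Python. This is a reading of the prose and the sample logs, not the Appendix iRule: the class name and the returned labels are hypothetical, and dropping a malicious fingerprint immediately is an assumption, since the text only says it is "actioned as defined" in the iRule.

```python
import time
from collections import defaultdict

WARN_RATE = 12  # sample logs: warnings are logged above 12 requests per second
MAX_RATE = 15   # sample logs: requests beyond 15 per second are dropped


class FingerprintLimiter:
    """Classify a TLS fingerprint, then rate limit unknown ones per client IP."""

    def __init__(self, malicious, expected, clock=time.time):
        self.malicious = set(malicious)  # stands in for the malicious data group
        self.expected = set(expected)    # stands in for the expected data group
        self.clock = clock               # callable returning the time in seconds
        self.counts = defaultdict(int)   # (ip, fingerprint, second) -> request count

    def decide(self, client_ip: str, fingerprint: str) -> str:
        if fingerprint in self.malicious:
            return "drop"   # assumption: malicious fingerprints are dropped outright
        if fingerprint in self.expected:
            return "allow"
        # Neither malicious nor expected: treat as suspicious and count it
        # in a one-second bucket keyed by IP and fingerprint.
        bucket = (client_ip, fingerprint, int(self.clock()))
        self.counts[bucket] += 1
        count = self.counts[bucket]
        if count > MAX_RATE:
            return "drop"
        if count > WARN_RATE:
            return "warn"   # still allowed, but logged as suspicious
        return "allow"
```

With these thresholds, requests 13 to 15 within one second produce a warning and request 16 is dropped, which lines up with the "currentCount" values in the sample logs. A real implementation would also expire old buckets, which the iRule `table` command does through its timeout; this sketch keeps them indefinitely.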
Dec 16 16:36:58 sec8 info tmm1[11545]: Rule /Common/fingerprintTLS-irule <CLIENT_DATA>: ERROR: fingerprint:172.16.7.31_0301+0303+0076+C030C02CC028C024C014C00A00A3009F006B006A0039003800880087C032C02EC02AC026C00FC005009D003D00350084C02FC02BC027C023C013C00900A2009E0067004000330032009A009900450044C031C02DC029C025C00EC004009C003C002F00960041C012C00800160013C00DC003000A00FF+1+00+000B000A000D000F3374+00190018001600170014001500120013000F00100011+060106020603050105020503040104020403030103020303020102020203+000102 exceeded 15 requests per second. Rejecting request. Current requests: 16. Dec 16 16:36:58 sec8 info tmm1[11545]: Rule /Common/fingerprintTLS-irule <CLIENT_DATA>: fingerprint:172.16.7.31_0301+0303+0076+C030C02CC028C024C014C00A00A3009F006B006A0039003800880087C032C02EC02AC026C00FC005009D003D00350084C02FC02BC027C023C013C00900A2009E0067004000330032009A009900450044C031C02DC029C025C00EC004009C003C002F00960041C012C00800160013C00DC003000A00FF+1+00+000B000A000D000F3374+00190018001600170014001500120013000F00100011+060106020603050105020503040104020403030103020303020102020203+000102: currentCount: 16 Dec 16 16:40:04 sec8 warning tmm7[11545]: 01260013:4: SSL Handshake failed for TCP 172.16.7.31:24814 -> 172.16.8.84:443 Dec 16 16:40:04 sec8 info tmm7[11545]: Rule /Common/fingerprintTLS-irule <CLIENT_DATA>: ERROR: fingerprint:172.16.7.31_0301+0303+0076+C030C02CC028C024C014C00A00A3009F006B006A0039003800880087C032C02EC02AC026C00FC005009D003D00350084C02FC02BC027C023C013C00900A2009E0067004000330032009A009900450044C031C02DC029C025C00EC004009C003C002F00960041C012C00800160013C00DC003000A00FF+1+00+000B000A000D000F3374+00190018001600170014001500120013000F00100011+060106020603050105020503040104020403030103020303020102020203+000102 exceeded 15 requests per second. Rejecting request. Current requests: 17. 
Dec 16 16:40:04 sec8 info tmm7[11545]: Rule /Common/fingerprintTLS-irule <CLIENT_DATA>: fingerprint:172.16.7.31_0301+0303+0076+C030C02CC028C024C014C00A00A3009F006B006A0039003800880087C032C02EC02AC026C00FC005009D003D00350084C02FC02BC027C023C013C00900A2009E0067004000330032009A009900450044C031C02DC029C025C00EC004009C003C002F00960041C012C00800160013C00DC003000A00FF+1+00+000B000A000D000F3374+00190018001600170014001500120013000F00100011+060106020603050105020503040104020403030103020303020102020203+000102: currentCount: 17 Dec 16 16:40:04 sec8 warning tmm7[11545]: 01260013:4: SSL Handshake failed for TCP 172.16.7.31:35509 -> 172.16.8.84:443 If a TLS fingerprint is observed to be sending abnormal amount of traffic during a HTTP brute force event, this TLS fingerprint may be included manually as decided by a customer/analyst in the Malicious TLS Fingerprint Data Group. In our example, this is the malicious_fingerprintdb Data group. from the reference observed TLS fingerprint, an entry in the data group can be added. String: 0301+0303+0076+C030C02CC028C024C014C00A00A3009F006B006A0039003800880087C032C02EC02AC026C00FC005009D003D00350084C02FC02BC027C023C013C00900A2009E0067004000330032009A009900450044C031C02DC029C025C00EC004009C003C002F00960041C012C00800160013C00DC003000A00FF+1+00+000B000A000D000F3374+00190018001600170014001500120013000F00100011+060106020603050105020503040104020403030103020303020102020203+000102 Value: malicious-client Sample Data group in edit mode to add an entry: Mitigation: Prevent based on Geolocation It is possible during a HTTP Brute Force Attack that the source of the attack traffic is from a certain Geolocation. Attack traffic can be easily dropped from unexpected Geolocation thru an irule. The FLOW_INIT event is triggered when a packet initially hits a Virtual Server. be it UDP or TCP traffic. 
During an attack, the source IP and geolocation information can be observed using the sample iRule, and the referenced Data Group can then be manually updated with the country code that the attack traffic is coming from. Example:

Unexpected Geolocation (Blacklist)
iRule:

    when FLOW_INIT {
        set ipaddr [IP::client_addr]
        set clientip [whereis $ipaddr country]
        # logging can be removed/commented out if not required
        log local0. "Source IP $ipaddr from $clientip"
        if { [class match $clientip equals unexpected_geolocations] } {
            # logging can be removed/commented out if not required
            log local0. "Attacker IP detected $ipaddr from $clientip: Drop!"
            drop
        }
    }

Data Group:

    root@(sec8)(cfg-sync Standalone)(Active)(/Common)(tmos)# list ltm data-group internal unexpected_geolocations
    ltm data-group internal unexpected_geolocations {
        records {
            KZ { data Kazakhstan }
        }
        type string
    }

Generated log in /var/log/ltm:

    Dec 16 21:21:03 sec8 info tmm7[11545]: Rule /Common/block_unexpected_geolocation <FLOW_INIT>: Source IP 5.188.153.248 from KZ
    Dec 16 21:21:03 sec8 info tmm7[11545]: Rule /Common/block_unexpected_geolocation <FLOW_INIT>: Attacker IP detected 5.188.153.248 from KZ: Drop!
    Dec 16 21:21:04 sec8 info tmm7[11545]: Rule /Common/block_unexpected_geolocation <FLOW_INIT>: Source IP 5.188.153.248 from KZ
    Dec 16 21:21:04 sec8 info tmm7[11545]: Rule /Common/block_unexpected_geolocation <FLOW_INIT>: Attacker IP detected 5.188.153.248 from KZ: Drop!
    Dec 16 21:21:06 sec8 info tmm7[11545]: Rule /Common/block_unexpected_geolocation <FLOW_INIT>: Source IP 5.188.153.248 from KZ
    Dec 16 21:21:06 sec8 info tmm7[11545]: Rule /Common/block_unexpected_geolocation <FLOW_INIT>: Attacker IP detected 5.188.153.248 from KZ: Drop!
    Dec 16 21:21:11 sec8 info tmm7[11545]: Rule /Common/block_unexpected_geolocation <FLOW_INIT>: Source IP 5.188.153.248 from KZ
    Dec 16 21:21:11 sec8 info tmm7[11545]: Rule /Common/block_unexpected_geolocation <FLOW_INIT>: Attacker IP detected 5.188.153.248 from KZ: Drop!
Dec 16 21:21:15 sec8 info tmm7[11545]: Rule /Common/block_unexpected_geolocation <FLOW_INIT>: Source IP 5.188.153.248 from KZ
Dec 16 21:21:15 sec8 info tmm7[11545]: Rule /Common/block_unexpected_geolocation <FLOW_INIT>: Attacker IP detected 5.188.153.248 from KZ: Drop!

Similarly, it is sometimes easier to whitelist, that is, to allow only specific geolocations to access the protected Virtual Server. Here is a sample iRule and its data group as a possible option.

Expected Geolocation (Whitelist)

iRule:

when FLOW_INIT {
    set ipaddr [IP::client_addr]
    set clientip [whereis $ipaddr country]
    #logging can be removed/commented out if not required
    log local0. "Source IP $ipaddr from $clientip"
    if { not [class match $clientip equals expected_geolocations] } {
        log local0. "Attacker IP detected $ipaddr from $clientip: Drop!"
        #logging can be removed/commented out if not required
        drop
    }
}

Data Group:

root@(sec8)(cfg-sync Standalone)(Active)(/Common)(tmos)# list ltm data-group internal expected_geolocations
ltm data-group internal expected_geolocations {
    records {
        US {
            data US
        }
    }
    type string
}

Sample curl command that sources traffic from the specified interface IP address:

[root@asm6:Active:Standalone] config # ip add | grep 5.188.153.248
inet 5.188.153.248/32 brd 5.188.153.248 scope global fop-lan
[root@asm6:Active:Standalone] config # curl --interface 5.188.153.248 -k https://172.16.8.84
curl: (7) Failed to connect to 172.16.8.84 port 443: Connection refused

Mitigation: Prevent based on IP Reputation

IP Reputation can be used along with many features in the BIG-IP. It is enabled through an add-on license. When licensed, the BIG-IP downloads an IP reputation database and checks IP traffic against it, usually during connection establishment, to match the IP's category. If a condition to block a category is set, then depending on the BIG-IP feature being used, the connection can be dropped, reset, or answered with a custom HTTP response page.
IPs with a bad reputation may send the attack traffic during an HTTP Brute Force attack, and blocking these categorised bad IPs helps reduce the traffic that a website needs to process.

Example: Using LTM Policy

With an LTM Policy, the client IP reputation can be checked and the connection reset (TCP RST) if the IP matches a defined category.

[root@sec8:Active:Standalone] config # tmsh list ltm policy IP_reputation_bad
ltm policy IP_reputation_bad {
    draft-copy Drafts/IP_reputation_bad
    last-modified 2019-12-17:15:08:52
    rules {
        IP_reputation_bad_reset {
            actions {
                0 {
                    shutdown
                    client-accepted
                    connection
                }
            }
            conditions {
                0 {
                    iprep
                    client-accepted
                    values { BotNets "Windows Exploits" "Web Attacks" Proxy }
                }
            }
        }
    }
    status published
    strategy first-match
}

Verifying via tcpdump that the connection was reset after the three-way handshake:

tcpdump -nni 0.0:nnn host 72.52.179.174

15:15:12.905996 IP 72.52.179.174.8500 > 172.16.8.84.443: Flags [S], seq 4061893880, win 29200, options [mss 1460,sackOK,TS val 313915334 ecr 0,nop,wscale 7], length 0 in slot1/tmm0 lis= flowtype=0 flowid=0 peerid=0 conflags=0 inslot=63 inport=23 haunit=0 priority=0 peerremote=00000000:00000000:00000000:00000000 peerlocal=00000000:00000000:00000000:00000000 remoteport=0 localport=0 proto=0 vlan=0
15:15:12.906077 IP 172.16.8.84.443 > 72.52.179.174.8500: Flags [S.], seq 2839531704, ack 4061893881, win 14600, options [mss 1460,nop,wscale 0,sackOK,TS val 321071554 ecr 313915334], length 0 out slot1/tmm0 lis=/Common/vs-172.16.8.84 flowtype=64 flowid=56000151BD00 peerid=0 conflags=100200004000024 inslot=63 inport=23 haunit=1 priority=3 peerremote=00000000:00000000:00000000:00000000 peerlocal=00000000:00000000:00000000:00000000 remoteport=0 localport=0 proto=0 vlan=0
15:15:12.907573 IP 72.52.179.174.8500 > 172.16.8.84.443: Flags [.], ack 1, win 229, options [nop,nop,TS val 313915335 ecr 321071554], length 0 in slot1/tmm0 lis=/Common/vs-172.16.8.84 flowtype=64 flowid=56000151BD00 peerid=0 conflags=100200004000024
inslot=63 inport=23 haunit=0 priority=0 peerremote=00000000:00000000:00000000:00000000 peerlocal=00000000:00000000:00000000:00000000 remoteport=0 localport=0 proto=0 vlan=0
15:15:12.907674 IP 172.16.8.84.443 > 72.52.179.174.8500: Flags [R.], seq 1, ack 1, win 0, length 0 out slot1/tmm0 lis=/Common/vs-172.16.8.84 flowtype=64 flowid=56000151BD00 peerid=0 conflags=100200004808024 inslot=63 inport=23 haunit=1 priority=3 rst_cause="[0x273e3e7:998] reset by policy" peerremote=00000000:00000000:00000000:00000000 peerlocal=00000000:00000000:00000000:00000000 remoteport=0 localport=0 proto=0 vlan=0

Using an iRule

With an iRule, IP reputation can be checked and, if the client IP matches a defined category, the traffic can be dropped. See the reference article https://clouddocs.f5.com/api/irules/IP-reputation.html

In this example iRule, a source IP address that matches any IP reputation category is dropped.

#Drop the packet at initial packet received if the client has a bad reputation
when FLOW_INIT {
    # Check if the IP reputation list for the client IP is not 0
    if { [llength [IP::reputation [IP::client_addr]]] != 0 } {
        #remove/comment log if not needed
        log local0. "[IP::client_addr]: category: \"[IP::reputation [IP::client_addr]]\""
        # Drop the connection
        drop
    }
}

Generated log for blocked IP with bad reputation:

Dec 17 16:22:49 sec8 info tmm6[11427]: Rule /Common/ip_reputation_block <FLOW_INIT>: 72.52.179.174: category: "Proxy {Mobile Threats}"

Final Thoughts on LTM based iRule and LTM Policy Mitigations

Using iRules and LTM Policies to mitigate HTTP Brute Force attacks works well when only the LTM module is provisioned on the BIG-IP and the situation requires quick mitigation. iRules are community supported and are not officially supported by F5 Support. The sample iRules here were tested in a lab environment and work for lab scenarios that are closely modeled on actual observed attacks.
iRules are best configured and implemented by F5 Professional Services, which works closely with the customer to scope the functionality of the iRule to the customer's requirements.

Some of the mitigations can be done through an LTM Policy. LTM Policy is a native feature of BIG-IP and, unlike iRules, does not need "on the fly compilation". It will therefore be faster and is the preferred configuration over iRules. LTM Policy configuration is straightforward, while iRules can be complicated but are also flexible, which is their advantage over LTM Policies. Rate limiting requests during an HTTP Brute Force attack may be a way to preserve some of the legitimate requests, and iRules allow flexible approaches to this.

There are more advanced mitigations for HTTP Brute Force attacks in the Application Security Manager (ASM) module, and these are preferred over iRules. For example, in BIG-IP version 14, TLS fingerprinting is included in the ASM protection profiles. TPS-based mitigation can also be configured using the ASM protection profiles: if requests from a source IP exceed the defined request threshold, the configured action is applied, for example blocking or challenging with CAPTCHA. Using an ASM Security Policy, attacks such as Credential Stuffing can be mitigated with the Brute Force Protection configuration. Bots can also be categorized and then allowed, challenged, or blocked using a Bot Defense Profile and Bot Signatures.

BIG-IP L2 Virtual Wire LACP Passthrough Deployment with IXIA Bypass Switch and Network Packet Broker (Single Service Chain - Active / Standby)