L7 DoS Protection with NGINX App Protect DoS

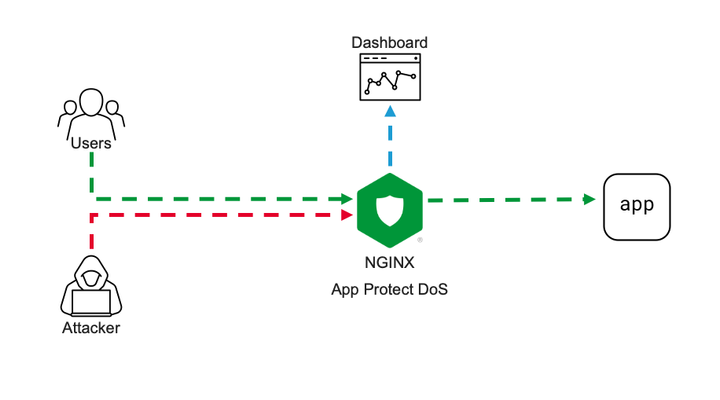

Intro

The NGINX security module ecosystem is becoming more and more solid. The existing App Protect WAF offering is now extended by the App Protect DoS protection module. App Protect DoS inherits and extends the state-of-the-art behavioral L7 DoS protection that was initially implemented on BIG-IP and now protects thousands of workloads around the world. In this article, I'll give a brief explanation of the underlying ML-based DDoS prevention technology and demonstrate a few examples of how precisely it stops various L7 DoS attacks.

Technology

It is important to emphasize the difference between the general volumetric protection approach that most of the market uses and the ML-based technology that powers App Protect DoS.

Volumetric DDoS protection is an old and well-known mechanism. As the name says, it counts the number of requests sharing the same source or destination, then drops or rate-limits them once a threshold is crossed. For instance, requests from the same source IP are dropped above 100 RPS, requests to the same URL above 200 RPS, and rate-limiting kicks in above 500 RPS for the entire site. The major drawback of this approach is that the selection criterion is too coarse. It can cause erroneous drops of valid user requests and overall service degradation. When a security measure blocks good requests, the result is called a "false positive".

App Protect DoS implements much more intelligent techniques to detect and fight off DDoS attacks. At a high level, it monitors all ongoing traffic and builds a statistical model, in other words a baseline, in a process called "learning". The learning process almost never stops, so the baseline automatically adjusts to the current web application layout, usage pattern, and traffic intensity. This is important because it drastically reduces maintenance cost and improves the reaction speed of the solution.
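
To make the contrast concrete, the fixed-threshold volumetric approach described earlier in this section can be sketched in a few lines of Python. This is only an illustration, not how any product implements it: the class name, the one-second window, and the bucket layout are assumptions, and the limits are simply the 100/200/500 RPS figures from the example in the text.

```python
from collections import defaultdict

# Illustrative per-second limits, taken from the example figures in the text.
LIMITS = {"ip": 100, "url": 200, "site": 500}


class VolumetricLimiter:
    """Hypothetical fixed-threshold limiter: drop a request once any counter
    it belongs to (source IP, URL, whole site) exceeds its per-second limit."""

    def __init__(self, limits=None):
        self.limits = dict(limits or LIMITS)
        # (second, kind, key) -> requests seen; never pruned in this sketch.
        self.counts = defaultdict(int)

    def allow(self, now, src_ip, url):
        second = int(now)
        buckets = [("ip", src_ip), ("url", url), ("site", "*")]
        # Count the request against every bucket it belongs to.
        for kind, key in buckets:
            self.counts[(second, kind, key)] += 1
        # Allow only while every bucket is still within its limit.
        return all(
            self.counts[(second, kind, key)] <= self.limits[kind]
            for kind, key in buckets
        )


# 150 requests in one second from a single address, e.g. an office behind NAT.
limiter = VolumetricLimiter()
decisions = [limiter.allow(0.5, "203.0.113.7", "/") for _ in range(150)]
print(decisions.count(False), "requests dropped by source IP alone")
```

Every request above the per-IP limit is dropped regardless of whether it came from a real user or an attack tool, which is exactly the false-positive problem of a coarse selection criterion.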
There is no more need to manually customize the protection configuration for every application or traffic change.

Continuous learning raises a legitimate question: why can't the system learn attack traffic as a baseline, and how does it detect an attack then? To answer it, let us define what a DDoS attack is. A DDoS attack is a traffic stream that intends to deny or degrade access to a service. Note that this definition doesn't focus on the amount of traffic. 'Low and slow' DDoS attacks can hurt a service as severely as volumetric ones do. Traffic is only considered malicious when the service level degrades. This means that attack traffic can become part of a baseline, but that is not a big deal as long as the protected service doesn't suffer.

Now only the term "service degradation" separates us from the answer. How does App Protect DoS measure service degradation? As humans, we usually measure the quality of a web service in delays. The longer it takes to get a response, the more we swear. App Protect DoS mimics human behavior by measuring latency for every single transaction and calculating the level of stress for a service. If overall stress crosses a threshold, App Protect DoS declares an attack. Think of it: service degradation triggers an attack signal, not traffic volume. Volume is harmless if an application server manages to respond quickly.

Nice! The attack is detected for a solid reason. What happens next? First of all, the learning process stops and rolls back to a moment when the stress level was low. The statistical model of the traffic that was collected during peacetime becomes the baseline for anomaly detection. App Protect DoS keeps monitoring the traffic during an attack and uses machine learning to identify the exact request pattern that causes the service degradation.
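
The detection logic above (measure latency, derive stress, declare an attack, freeze and roll back learning) can be illustrated with a small Python sketch. It is a toy model under stated assumptions, not the product's algorithm: two exponential moving averages stand in for the statistical model, and the class name, the stress ratio, the threshold of 2.0, and the smoothing factors are all hypothetical.

```python
from collections import deque


class StressMonitor:
    """Toy latency-based attack detector (all parameters are assumptions)."""

    def __init__(self, threshold=2.0, fast=0.5, slow=0.01, history=50):
        self.threshold = threshold   # stress ratio that declares an attack
        self.fast = fast             # smoothing factor for recent latency
        self.slow = slow             # learning rate of the baseline
        self.baseline = None         # learned peacetime latency, in seconds
        self.recent = None           # smoothed current latency, in seconds
        self.snapshots = deque(maxlen=history)  # older baselines, oldest first
        self.under_attack = False

    def observe(self, latency):
        """Feed one transaction latency and return the current stress ratio."""
        if self.baseline is None:
            self.baseline = self.recent = latency
        self.recent += self.fast * (latency - self.recent)
        if not self.under_attack:
            # Peacetime: remember the old baseline, then keep learning.
            self.snapshots.append(self.baseline)
            self.baseline += self.slow * (latency - self.baseline)
        stress = self.recent / self.baseline
        if stress > self.threshold and not self.under_attack:
            # Attack declared: stop learning and roll back to a calmer baseline.
            self.under_attack = True
            self.baseline = self.snapshots[0]
            stress = self.recent / self.baseline
        elif stress <= self.threshold and self.under_attack:
            # Service has recovered: the attack is over, learning resumes.
            self.under_attack = False
        return stress


monitor = StressMonitor()
for latency in [0.05] * 100 + [0.5] * 10:
    monitor.observe(latency)
print("under attack:", monitor.under_attack)
```

Note that the trigger is the latency ratio, not the request count: the same volume served at peacetime latency would never raise the flag. In App Protect DoS the same moment also starts the search for the request pattern behind the degradation.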
Unlike old-school volumetric techniques, App Protect DoS doesn't rely on a single parameter such as source IP or URL. It builds as accurate a signature as possible of the entire request that causes harm. App Protect DoS extracts dozens of parameters from every request. A signature usually contains about a dozen of them, including source IP, method, path, headers, payload content structure, and others. That makes App Protect DoS far more accurate than volumetric vectors.

The last part is mitigation. App Protect DoS has a whole inventory of mitigation tools, including accurate signatures, bad actor detection, rate-limiting, and even slowing down traffic across the board, which it uses to restore service. The strategy for using them is intricate, but the main objective is to be as accurate as possible and do no harm to valid users. In most cases, App Protect DoS only mitigates requests that match specific signatures, and only when the stress threshold for a service is crossed. Therefore, the probability of false positives is very low.

An additional beauty of this technology is that it requires almost no configuration. Once enabled on a virtual server, it does the whole job "automagically" and reports back to your security operations center. The following sections present a couple of usage examples.

Demo

The demo topology is straightforward. On one end I have a couple of VMs. One of them continuously generates a steady traffic flow simulating legitimate users. The second one plays the attacker and generates various L7 DoS attacks. On the other end, one VM hosts a demo application and another one hosts NGINX with App Protect DoS as the protection tool. A VM on the side runs an ELK cluster to visualize App Protect DoS activity.

The demo workflow aims to showcase a basic deployment example and the overall App Protect DoS protection technology.
First, I'll configure NGINX to forward traffic to the demo application and App Protect DoS to apply DDoS protection. Then the VM that simulates good users will send a continuous traffic flow through App Protect DoS to let it learn a baseline. Once the baseline is established, the attacker VM will hit the demo app with various DoS attacks. While this battle is going on, our objective is to observe how App Protect DoS behaves and to confirm that the good users' experience remains unaffected.

Similar to App Protect WAF, App Protect DoS is implemented as a separate module for NGINX. It is installed as an apt/yum package and then hooks into the NGINX configuration via the standard "load_module" directive.

    load_module modules/ngx_http_app_protect_dos_module.so;

Once the module is loaded, protection is enabled in the http, server, or location context, depending on what you would like to protect.

    app_protect_dos_enable [on|off]

By default, App Protect DoS takes its protection configuration from the local policy file "/etc/nginx/BADOSDefaultPolicy.json":

    {
        "mitigation_mode" : "standard",
        "use_automation_tools_detection": "on",
        "signatures" : "on",
        "bad_actors" : "on"
    }

As I mentioned before, App Protect DoS doesn't require complex configuration and only takes four parameters. Moreover, the default policy covers most use cases, so a user only needs to enable App Protect DoS on a protected object.

The next step is to simulate good users' traffic to let App Protect DoS learn a good traffic pattern. I use a custom bash script that generates about 6-8 requests per second, similar to average browsing activity. While inspecting traffic and building a statistical model of good traffic, App Protect DoS sends logs and metrics to Elasticsearch so we can monitor all its activity.

The dashboard above represents traffic before/after App Protect DoS, the degree of application stress, and the mitigations in place. Note that the rate of client-side transactions matches the rate of server-side transactions.
This means that all requests pass through App Protect DoS and no mitigations are applied. The stress value remains steady since the backend easily handles the current rate and latency does not increase.

Now I am launching an HTTP flood attack. It generates several thousand requests per second, which can easily overwhelm an unprotected web server. My server has App Protect DoS in front of it, applying all its intelligence to fight off the DoS attack. After a few minutes of attack traffic, the dashboard shows the following situation.

The attack tool generated roughly 1000 RPS. The two charts on the left-hand side show that all transactions went through App Protect DoS and reached the demo app for a couple of minutes, causing service degradation. Right after service stress reached the threshold, an attack was declared (vertical red line on all charts).

As soon as the attack is declared, App Protect DoS starts to apply mitigations to bring the service back to life. As I mentioned before, App Protect DoS tries its best not to harm legitimate traffic. Therefore, it iterates from less invasive mitigations to more invasive ones.

During the first several seconds, when App Protect DoS has just detected an attack and a specific anomaly signature is not calculated yet, it applies an HTTP redirect to all requests across the board. This measure adds only a tiny bit of latency for a web browser, but it quickly filters out all the not-so-intelligent attack tools that can't follow redirects.

In less than a minute, a specific anomaly signature is generated. Note how detailed it is. The signature contains 11 attributes that cover all aspects: method, path, headers, and payload. Such a level of granularity and reaction time is feasible neither for volumetric vectors nor for a SOC operator armed with a regex engine.

Once a signature is generated, App Protect DoS reduces the scope of mitigation to only the requests that match the signature.
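
The scoping step can be pictured as an all-attributes match. The sketch below is a hypothetical simplification in Python: the attribute names and values are invented for illustration (a real signature is generated by App Protect DoS and its internal format is not shown in this article), and matching is reduced to exact equality on a flat dictionary.

```python
# Hypothetical signature: every attribute must match for a request to be hit.
SIGNATURE = {
    "method": "GET",
    "path": "/",
    "user-agent": "flood-tool/1.0",
    "accept": "*/*",
    "accept-encoding": None,       # header absent in attack requests
    "content-length": None,        # no payload
}


def matches(signature, request):
    """True only when the request agrees with every attribute in the signature."""
    return all(request.get(attr) == value for attr, value in signature.items())


def decide(signature, request, under_stress):
    """Mitigate only signature matches, and only while the service is stressed."""
    if under_stress and matches(signature, request):
        return "mitigate"
    return "forward"


attack_request = {"src_ip": "198.51.100.9", "method": "GET", "path": "/",
                  "user-agent": "flood-tool/1.0", "accept": "*/*"}
# A legitimate browser behind the same address differs in several attributes.
legit_request = {"src_ip": "198.51.100.9", "method": "GET", "path": "/",
                 "user-agent": "Mozilla/5.0", "accept": "text/html",
                 "accept-encoding": "gzip, deflate"}

print(decide(SIGNATURE, attack_request, under_stress=True))
print(decide(SIGNATURE, legit_request, under_stress=True))
```

Because a request has to agree on every attribute, a legitimate client that shares only the source IP or the URL with the attacker is still forwarded, so mitigation stays scoped to the requests that match the signature.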
Scoping mitigation to the signature all but eliminates the chance of affecting good traffic. Matching traffic receives a redirect and then a challenge, in case an attacker is smart enough to follow redirects.

After a few minutes of observation, App Protect DoS identifies bad actors, since most of the requests come from the same IP addresses (bottom-right chart). It then switches mitigation to a bad actor challenge. Although this measure hits the same traffic, it lets App Protect DoS protect itself: it takes far fewer CPU cycles to identify a target by IP address than to match requests against a signature with 11 attributes.

From now on, App Protect DoS continues with the most efficient protection until the attack traffic stops and server stress goes away.

The technology overview and the demo above expose only a tiny bit of App Protect DoS protection logic. Much more of it engages for more complicated attacks. However, the results already look impressive. Neither volumetric protection mechanisms nor a human SOC operator can provide such accurate mitigation within such a short reaction time. It is only possible when a machine fights a machine.

Securing APIs with BigIP

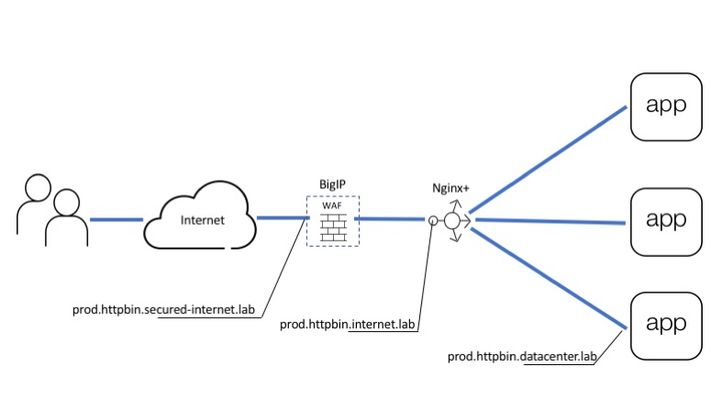

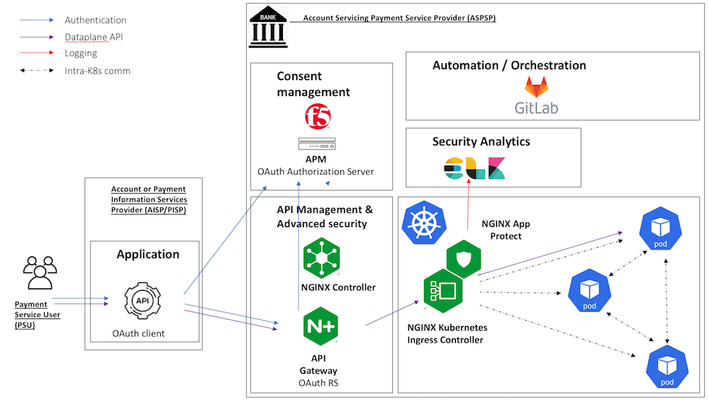

Introduction

API servers respond to requests using the HTTP protocol, much like web servers. Therefore, API servers are susceptible to HTTP attacks in ways similar to web servers. Previous articles covered how to publish an API using the NGINX platform as an API management gateway. These APIs are still exposed to web attacks, and defensive mechanisms are needed to defend the API against web attacks, denial of service, and bots. The diagram below shows all the layers needed to deliver and defend APIs. BIG-IP provides the protection and NGINX Plus provides API management.

Picture 1.

This article covers:

- Advanced Web Application Firewall (AWAF) to protect against HTTP vulnerabilities
- Unified Bot Defense to protect against bots
- Behavioral Anomaly DoS Defense to prevent DoS attacks

As shown in Picture 1 above, BIG-IP goes in front of the API management gateway as an additional security gateway. The beauty of this approach is that BIG-IP can initially be deployed on the side while the API is delivered to users through the NGINX Plus gateway directly. Once BIG-IP is configured to forward good requests to NGINX Plus and the security policies are in place, BIG-IP can be brought into the traffic flow by simply changing the DNS records for "prod.httpbin.internet.lab" to point to BIG-IP instead of NGINX Plus. From this point on, all calls automatically arrive at BIG-IP for inspection, and only those that pass all verifications are forwarded to the next layer.

Configuring Data Path

The data path configuration for this use case is pretty common for BIG-IP, which is historically a load balancer. It includes:

- Virtual Server (listens for API calls)
- SSL profile (defines SSL settings)
- SSL certificate and key (cryptographically identifies the virtual server)
- Pool (destination for passed calls)

The picture below shows how all of the configuration pieces work together.

Picture 3.
1. First, upload the server certificate and key.
2. Set up an SSL profile to use the certificate from the previous step.
3. Finally, create a virtual server and a pool to accept API calls and forward them to the backend.

From this point, BIG-IP accepts all requests that go to the "prod.httpbin.secured-internet.lab" hostname and forwards them to the API management gateway powered by NGINX Plus.

Setting up WAF policy

As you may already know, every API starts from the OpenAPI file, which describes all available endpoints, parameters, authentication methods, etc. This file contains all details related to the API definition and is widely used by most tools, including F5's WAF, for self-configuration. An imported OpenAPI file automatically configures the policy with all API-specific parameters, such as the list of allowed URLs, parameters, methods, and so on. Therefore, WAF configuration narrows down to importing the OpenAPI file and using the policy template for API security.

Create a policy

Specify the policy name, template, swagger file, virtual server, and logging profile.

The API security template pre-configures the WAF policy with all necessary violations and signatures to protect the API backend. The OpenAPI file adds the application-specific configuration to the policy as a list of allowed URLs, parameters, and methods.

That is it. The WAF policy is configured and assigned to the virtual server. Now we can test that only legitimate requests to allowed resources go through.
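
The idea of deriving an allow-list from the OpenAPI file can be sketched in Python. This is a simplified illustration, not what BIG-IP does internally: the function names are hypothetical, the sample specification is an invented two-endpoint fragment, and paths are compared by exact match only (no templated path parameters, no parameter validation).

```python
HTTP_METHODS = {"get", "put", "post", "delete", "options", "head", "patch"}

# Invented two-endpoint specification fragment, for illustration only.
SAMPLE_SPEC = {
    "swagger": "2.0",
    "paths": {
        "/get": {"get": {"responses": {"200": {"description": "OK"}}}},
        "/post": {"post": {"responses": {"200": {"description": "OK"}}}},
    },
}


def allowed_operations(spec):
    """Collect (METHOD, path) pairs declared in an OpenAPI/Swagger document."""
    operations = set()
    for path, item in spec.get("paths", {}).items():
        for key in item:
            # Path items may also hold non-method keys such as "parameters".
            if key.lower() in HTTP_METHODS:
                operations.add((key.upper(), path))
    return operations


def verdict(spec, method, path):
    """Return the HTTP status a strict allow-list would answer with."""
    return 200 if (method.upper(), path) in allowed_operations(spec) else 403


print(verdict(SAMPLE_SPEC, "GET", "/get"))
print(verdict(SAMPLE_SPEC, "GET", "/urldoesntexist"))
```

Anything the specification does not declare, whether an unknown URL or a known URL with an undeclared method, falls outside the allow-list.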
For example, a request to a URL that does not exist in the policy will be blocked:

    ubuntu@ip-10-1-1-7:~$ http -v https://prod.httpbin.secured-internet.lab/urldoesntexist
    GET /urldoesntexist HTTP/1.1
    Accept: */*
    Accept-Encoding: gzip, deflate
    Connection: keep-alive
    Host: prod.httpbin.secured-internet.lab
    User-Agent: HTTPie/0.9.2

    HTTP/1.1 403 Forbidden
    Cache-Control: no-cache
    Connection: close
    Content-Length: 38
    Content-Type: application/json; charset=utf-8
    Pragma: no-cache

    {
        "supportID": "1656927099224588298"
    }

A request with SQL injection is also blocked:

Configuring Bot Defense

Starting from BIG-IP release 14.1, the proactive bot defense, web scraping, and bot detection features are combined under the Bot Defense profile. The current bot defense therefore forms a unified tool to prevent all types of bots from accessing your web asset.

Bot detection and mitigation mechanisms rely heavily on signatures and JavaScript (JS) based challenges. JS challenges run in the client browser and help to identify the client type and malicious activity, or apply mitigation by injecting a CAPTCHA or by slowing the client down with a heavy calculation in the browser.

Since this article is focused on protecting APIs, it is important to note that JS challenges need to be used with caution in this case. Keep in mind that robots might be legitimate users of an API. However, robots, like bots, cannot execute JavaScript. So when a robot receives JS, it treats the JS as the API response. Such a response does not match what the robot expects, and the application may break. If you know an API is serving automated processes, avoid JS-based challenges or test every JS-based feature in a staging environment first.
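
The failure mode is easy to reproduce on the client side. The Python sketch below shows a hypothetical API consumer that expects JSON and receives something else; the function name, the exception class, and the HTML body used as a stand-in for a JS challenge page are all assumptions made for illustration.

```python
import json


class UnexpectedResponse(Exception):
    """Raised when the body is not the JSON document the client expects."""


def parse_api_response(content_type, body):
    """Decode a JSON API response, failing loudly on anything else."""
    if "json" not in content_type.lower():
        raise UnexpectedResponse(
            f"expected JSON but got {content_type!r}; "
            "this may be a JS challenge page rather than an API answer"
        )
    try:
        return json.loads(body)
    except json.JSONDecodeError as exc:
        raise UnexpectedResponse(f"body is not valid JSON: {exc}") from exc


# A normal API answer decodes fine.
print(parse_api_response("application/json", '{"origin": "10.1.1.7"}'))

# An HTML page with a script (stand-in for a challenge) cannot be consumed.
try:
    parse_api_response("text/html", "<html><script>/* challenge */</script></html>")
except UnexpectedResponse as error:
    print("robot client failed:", error)
```

A client written this way at least fails with a clear message, which makes a misapplied challenge easier to spot during staging tests.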
Configuration to detect and handle many different types of bots can be simplified by using any of the three pre-configured security modes:

- Relaxed (challenge free, mitigates only 100% bad bots based on signatures)
- Balanced (lets suspicious clients prove good behavior by executing JS challenges or solving a CAPTCHA)
- Strict (blocks all kinds of bots, verifies browsers, and collects a device ID from all clients)

It is best to start with the relaxed template and tighten the configuration as you become familiar with the traffic that the API endpoints see. Once the profile is created, assign it to the same virtual server on the "Local Traffic ›› Virtual Servers : Virtual Server List ›› prod.httpbin.secured-internet.lab ›› Security" page.

Such a configuration performs bot detection based on data that is available in requests, such as URL, user-agent, or header order. This mode is safe for all kinds of API users (browser-based or code-based robots), and you can see the transaction outcome on the "Security ›› Event Logs : Bot Defense : Bot Requests" page. If there are false positives, you can adjust the bot status or create a new trusted bot for your robots through "Security ›› Bot Defense : Bot Signatures : Bot Signatures List".

Setting Up DDoS Defense

WAF and bot defenses can detect requests with attack signatures or requests that are generated by malicious clients. However, attackers can send attacks composed of legitimate requests at a scale high enough to bring down an API endpoint. The following BIG-IP features can be used to defeat denial-of-service attacks against API endpoints:

- Transaction per second (TPS) Based DoS Defense
- Stress Based DoS Defense
- Behavioral Anomaly Based DoS Defense
- Eviction Policies

TPS-based DoS defense is the most straightforward protection mechanism. In this mode, BIG-IP measures the request rate for parameters such as Source IP, URL, Site, etc.
If the per-minute rate becomes higher than the configured threshold, an attack is declared and the selected mitigation modes are applied to 'all' requests identified by the parameter.

The stress-based mode works similarly to TPS, but instead of applying mitigation right after the threshold is crossed, it only mitigates when the protected asset is under stress. This approach significantly reduces false positives.

Behavioral anomaly detection (BADoS) mode offers the most advanced security and accuracy. This mode does not require the administrator to perform any configuration other than turning the feature on. One machine learning algorithm detects whether the protected asset is under attack. Another machine learning algorithm baselines the traffic in peacetime. When the 'attack detector algorithm' identifies that the protected asset is under stress and non-responsive, the second algorithm stops learning and looks for anomalies. Signatures matching these anomalies are created automatically. Anomalies discovered during attack time are likely nefarious and are eliminated from the traffic by applying the dynamically discovered signatures.

BADoS will automatically build a good traffic baseline, detect anomalies, and stop them if the API endpoint is under stress.

Conclusion

F5 offers a multi-layered solution for protecting APIs that is easy to configure. Please connect with me via comments and keep an eye out for more articles in this series. Good luck!

A basic OWASP 2017 Top 10-compliant declarative WAF policy

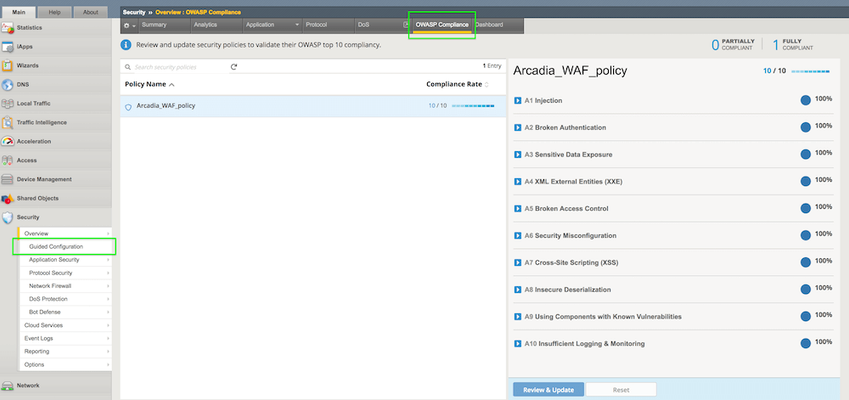

As OWASP Application Security Risks Top 10 is the most recognized report outlining the top security concerns for web application security, it is important to see how to configure F5's declarative Advanced WAF policy to protect against those threats. This article describes an example of a basic declarative WAF policy that is OWASP 2017 Top 10-compliant. Note that some policy entities are customized for the application being protected, in this case a demo application named Arcadia Finance, so they will need to be adapted for each particular application to be protected. The policy was configured following the pattern described in the K52596282: Securing against the OWASP Top 10 guide, and its conformance with OWASP Top 10 is verified by consulting the OWASP Compliance Dashboard bundled with F5's Advanced WAF.

Introduction

As described in the above K52596282: Securing against the OWASP Top 10, the current OWASP Top 10 vulnerabilities are:

- Injection attacks (A1)
- Broken authentication attacks (A2)
- Sensitive data exposure attacks (A3)
- XML external entity attacks (A4)
- Broken access control attacks (A5)
- Security misconfiguration attacks (A6)
- Cross-site scripting attacks (A7)
- Insecure deserialization attacks (A8)
- Components with known vulnerabilities attacks (A9)
- Insufficient logging and monitoring attacks (A10)

Most of these vulnerabilities can be mitigated with a properly configured WAF policy. For the few that depend on security measures implemented in the application itself, there are recommended guidelines on application security that will prevent the exploitation of OWASP Top 10 vulnerabilities.

Declarative Advanced WAF policies are security policies defined using a declarative JSON format, which facilitates integration with source control systems and CI/CD pipelines. The documentation of the declarative WAF policy (v16.0) can be found here while its schema can be consulted here.
One of the most basic declarative Advanced WAF policies that can be applied is as follows:

    {
        "policy": {
            "name": "Complete_OWASP_Top_Ten",
            "description": "A basic, OWASP Top 10 protection items v1.0",
            "template": {
                "name": "POLICY_TEMPLATE_RAPID_DEPLOYMENT"
            }
        }
    }

As you can see from the OWASP Compliance Dashboard screenshot, this policy is far from being OWASP-compliant, but we will use it as a starting point to build a fully compliant configuration. This article will go through each vulnerability class and show an example of declarative WAF policy configuration that would mitigate that respective vulnerability.

Injection attacks (A1)

As K51836239: Securing against the OWASP Top 10 | Chapter 2: Injection attacks (A1) states: "An injection attack occurs when: An attacker injects a command, query, or code into a vulnerable element of the application. The web application server executes the injection. The attacker gains unauthorized access, causes a denial of service, steals sensitive data, or causes a similarly nefarious outcome."

Securing against injection attacks entails configuring attack signatures and evasion techniques as well as restricting meta characters and parameters.
The following configuration, added to the above basic declarative policy, will meet those requirements:

    "signature-settings": {
        "signatureStaging": false,
        "minimumAccuracyForAutoAddedSignatures": "high"
    },
    "blocking-settings": {
        "evasions": [
            { "description": "Bad unescape", "enabled": true, "learn": true },
            { "description": "Apache whitespace", "enabled": true, "learn": true },
            { "description": "Bare byte decoding", "enabled": true, "learn": true },
            { "description": "IIS Unicode codepoints", "enabled": true, "learn": true },
            { "description": "IIS backslashes", "enabled": true, "learn": true },
            { "description": "%u decoding", "enabled": true, "learn": true },
            { "description": "Multiple decoding", "enabled": true, "learn": true, "maxDecodingPasses": 3 },
            { "description": "Directory traversals", "enabled": true, "learn": true }
        ]
    }

Broken authentication attacks (A2)

According to K35371357: Securing against the OWASP Top 10 | Chapter 3: Broken authentication (A2): "The Open Web Application Security Project (OWASP) defines broken authentication as incorrect implementation of application functions related to authentication and session management. Using broken authentication, attackers can compromise passwords, keys, or session tokens, or exploit other implementation flaws to assume other users' identities, either temporarily or permanently."

Mitigations include session hijacking protection and tracking user sessions, brute force protection, credential stuffing protection, and CSRF protection.
Here is an example of a configuration that enables those features:

    "blocking-settings": {
        "violations": [
            {
                "alarm": true,
                "block": true,
                "description": "ASM Cookie Hijacking",
                "learn": false,
                "name": "VIOL_ASM_COOKIE_HIJACKING"
            },
            {
                "alarm": true,
                "block": true,
                "description": "Access from disallowed User/Session/IP/Device ID",
                "name": "VIOL_SESSION_AWARENESS"
            },
            {
                "alarm": true,
                "block": true,
                "description": "Modified ASM cookie",
                "learn": true,
                "name": "VIOL_ASM_COOKIE_MODIFIED"
            },
            {
                "name": "VIOL_LOGIN_URL_BYPASSED",
                "alarm": true,
                "block": true,
                "learn": false
            }
        ],
        "evasions": [ <...> ]
    },
    "session-tracking": {
        "sessionTrackingConfiguration": {
            "enableTrackingSessionHijackingByDeviceId": true
        }
    },
    "urls": [
        {
            "name": "/trading/auth.php",
            "method": "POST",
            "protocol": "https",
            "type": "explicit"
        }
    ],
    "login-pages": [
        {
            "accessValidation": {
                "headerContains": "302 Found"
            },
            "authenticationType": "form",
            "passwordParameterName": "password",
            "usernameParameterName": "username",
            "url": {
                "name": "/trading/auth.php",
                "method": "POST",
                "protocol": "https",
                "type": "explicit"
            }
        }
    ],
    "login-enforcement": {
        "authenticatedUrls": [ "/trading/index.php" ]
    },
    "brute-force-attack-preventions": [
        {
            "bruteForceProtectionForAllLoginPages": true,
            "leakedCredentialsCriteria": {
                "action": "alarm-and-blocking-page",
                "enabled": true
            }
        }
    ],
    "csrf-protection": {
        "enabled": true
    },
    "csrf-urls": [
        {
            "enforcementAction": "verify-csrf-token",
            "method": "GET",
            "url": "/trading/index.php"
        }
    ]

Note: this is a configuration example customized for the Arcadia Finance demo application. The URLs specified in your configuration might be different.

Sensitive data exposure (A3)

According to K05354050: Securing against the OWASP Top 10 | Chapter 4: Sensitive data exposure (A3): "A sensitive data exposure flaw can occur when you: Store or transit data in clear text (most common). Protect data with an old or weak encryption. Do not properly filter or mask data in transit."
BIG-IP Advanced WAF can protect sensitive data from being transmitted using Data Guard response scrubbing and from being logged with request log masking.

Note: masking sensitive data in the request log is enabled by default.

You can enable Data Guard by adding the following configuration to the policy:

    "data-guard": {
        "enabled": true
    }

XML external entity attacks (A4)

As per K50262217: Securing against the OWASP Top 10 | Chapter 5: XML external entity attacks (A4): "According to the Open Web Application Security Project (OWASP), an XXE attack is an attack against an application that parses XML input. The attack occurs when a weakly configured XML parser processes XML input containing a reference to an external entity. XXE attacks exploit Document Type Definitions (DTDs), which are considered obsolete; however, they are still enabled in many XML parsers."

Securing against XXE can be done by disallowing DTDs and enabling XXE attack signatures. The following configuration achieves that:

    "blocking-settings": {
        "violations": [
            <...>
            {
                "alarm": true,
                "block": true,
                "description": "XML data does not comply with format settings",
                "learn": true,
                "name": "VIOL_XML_FORMAT"
            }
        ],
        "evasions": [ <...> ]
    },
    "xml-profiles": [
        {
            "name": "Default",
            "defenseAttributes": {
                "allowDTDs": false,
                "allowExternalReferences": false
            }
        }
    ]

Note: XXE attack signatures are enabled by default.

Broken access control (A5)

According to K36157617: Securing against the OWASP Top 10 | Chapter 6: Broken access control (A5): "Access control, also called authorization, allows or denies access to your application's features and resources. Misuse of access control enables: Unauthorized access to sensitive information. Inappropriate creation or deletion of resources. User impersonation. Privilege escalation."

Preventing attacks that exploit broken access control can be done by login enforcement, by enabling path traversal or authentication/authorization attack signatures, and by disallowing file types and URLs.
For example, the following configuration will enable the disallowed file type and URL violations and add a disallowed URL:

    "blocking-settings": {
        <...>
            { "name": "VIOL_FILETYPE", "alarm": true, "block": true, "learn": true },
            { "name": "VIOL_URL", "alarm": true, "block": true, "learn": true }
        ],
        "evasions": [ <...> ]
    },
    "urls": [
        <...>
        {
            "name": "/internal/test.php",
            "method": "GET",
            "protocol": "https",
            "type": "explicit",
            "isAllowed": false
        }
    ]

Note: the policy already has a list of disallowed file types configured by default:

Security misconfiguration attacks (A6)

As per K10622005: Securing against the OWASP Top 10 | Chapter 7: Security misconfiguration attacks (A6): "Misconfiguration vulnerabilities are configuration weaknesses that may exist in software components or subsystems. For example, web server software may ship with default user accounts that an attacker can use to access the system, or the software may contain sample files, such as configuration files, and scripts that an attacker can exploit. In addition, software may have unneeded services enabled, such as remote administration functionality."

Since these vulnerabilities depend on the way the application has been configured, F5 has a number of recommendations for system administrators describing techniques used to mitigate this class of attacks; please see the referenced article. You can then check the relevant items under the OWASP Compliance Dashboard:

Click "Review & Update" to apply the changes.

Cross-site scripting (A7)

According to K22729008: Securing against the OWASP Top 10 | Chapter 8: Cross-site scripting (A7): "Cross-site scripting (XSS or CSS) occurs when a web application receives untrusted data and then sends that data, without validation, to an end user's web browser.
This transaction enables an attacker to send executable code to the victim's browser and, ultimately, manipulate the end user's session by doing one of the following:

- Session hijacking
- Defacing the website
- Redirecting the user to a site with malicious intent (most common)"

Securing against XSS attacks can be done by enabling the relevant attack signatures and disallowing meta-characters in parameters. The following demonstrates this configuration:

    "blocking-settings": {
        "violations": [
            <...>
            { "name": "VIOL_URL_METACHAR", "alarm": true, "block": true, "learn": true },
            { "name": "VIOL_PARAMETER_VALUE_METACHAR", "alarm": true, "block": true, "learn": true },
            { "name": "VIOL_PARAMETER_NAME_METACHAR", "alarm": true, "block": true, "learn": true }
        ]
    }

Insecure deserialization (A8)

As per K01034237: Securing against the OWASP Top 10 | Chapter 9: Insecure deserialization (A8): "Serialization occurs when an application converts data structures and objects into a different format, such as binary or structured text like XML and JSON, so that it is suitable for other purposes, including the following:

- Data storage
- Messaging
- Remote procedure call (RPC)/inter-process communication (IPC)
- Caching
- HTTP cookies
- HTML form parameters
- API authentication tokens

Deserialization is when an application reverts the serialized output into its original form. To exploit insecure deserialization, attackers typically tamper with the data in data structures or objects that modify an object's content or change an application's logic."

Protecting against deserialization attacks can be done by performing HTTP header, XML, or JSON validation and by enabling the insecure deserialization attack signatures. Note that these signatures are enabled by default.
An example of JSON validation configuration can be seen below:

    "urls": [
        <...>
        {
            "name": "/trading/rest/portfolio.php",
            "method": "GET",
            "protocol": "https",
            "type": "explicit",
            "urlContentProfiles": [
                {
                    "headerName": "Content-Type",
                    "headerValue": "text/html",
                    "type": "json",
                    "contentProfile": { "name": "portfolio" }
                },
                {
                    "headerName": "*",
                    "headerValue": "*",
                    "type": "do-nothing"
                }
            ]
        }
    ],
    "json-profiles": [
        { "name": "portfolio" }
    ]

Using components with known vulnerabilities (A9)

According to K90977804: Securing against the OWASP Top 10 | Chapter 10: Using components with known vulnerabilities (A9): "Component-based vulnerabilities occur when a web application component is unsupported, out of date, or vulnerable to a known exploit. The BIG-IP ASM system provides security features, such as attack signatures, that protect your web application against component-based vulnerability attacks."

To customize the WAF policy for the server technologies relevant to your back-end, consider adding them to the policy by enabling, for example, server technologies detection:

    "policy-builder-server-technologies": {
        "enableServerTechnologiesDetection": true
    }

For a list of recommended actions to mitigate this vulnerability class, please consult the referenced article. Once the mitigation measures are in place, check the relevant entries in the OWASP Compliance Dashboard.

Insufficient logging and monitoring (A10)

K97756490: Securing against the OWASP Top 10 | Chapter 11: Insufficient logging and monitoring (A10) describes the recommended configuration to enable comprehensive logging on BIG-IP Advanced WAF. This configuration is not part of the declarative WAF policy, so it will not be described here; please follow the instructions in the referenced article. Once logging has been configured, check the relevant items in the OWASP Compliance Dashboard.
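
The fragments shown in the sections above are meant to be combined into one policy body, with the `<...>` placeholders standing for entries contributed by other sections. One way to picture that assembly is the Python sketch below. It is an assumption about workflow rather than an F5 tool: the helper name and merge rules (dictionaries merge recursively, lists are concatenated, scalars are overwritten) are hypothetical, and the fragments are shortened copies of the ones in this article.

```python
import copy
import json


def merge_fragment(policy, fragment):
    """Merge one fragment into a policy body, in place.

    Dictionaries are merged key by key, lists are concatenated, and any
    other value overwrites what was there. Duplicates are not removed.
    """
    for key, value in fragment.items():
        if isinstance(value, dict) and isinstance(policy.get(key), dict):
            merge_fragment(policy[key], value)
        elif isinstance(value, list) and isinstance(policy.get(key), list):
            policy[key].extend(copy.deepcopy(value))
        else:
            policy[key] = copy.deepcopy(value)
    return policy


base = {
    "policy": {
        "name": "Complete_OWASP_Top_Ten",
        "template": {"name": "POLICY_TEMPLATE_RAPID_DEPLOYMENT"},
    }
}

fragments = [
    {"data-guard": {"enabled": True}},
    {"blocking-settings": {"violations": [
        {"name": "VIOL_FILETYPE", "alarm": True, "block": True, "learn": True}]}},
    {"blocking-settings": {"violations": [
        {"name": "VIOL_URL_METACHAR", "alarm": True, "block": True, "learn": True}]}},
]

for fragment in fragments:
    merge_fragment(base["policy"], fragment)

print(json.dumps(base, indent=4))
```

Keeping each vulnerability class in its own fragment and merging them in a pipeline step is one possible layout for source control; the resulting JSON still has to be validated against the policy schema before it is imported.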
After updating the policy, the OWASP Compliance Dashboard should show full compliance.

Other recommended configurations

If client connections pass through a trusted reverse proxy that replaces the source IP address and places the client's IP address in the X-Forwarded-For HTTP header, it is recommended to enable the "Trust X-Forwarded-For" option in the WAF policy: "general": { "trustXff": true }

The enforcement mode can also be controlled through the policy file. The recommendation is to set it to "transparent" while running the CI/CD pipeline in Dev or QA environments (or, if the policy is built outside a CI/CD pipeline, in a similar Pre-Prod environment) and to configure it as "blocking" when running in a Production environment: "enforcementMode":"transparent"

Conclusion

This article has shown a very basic OWASP Top 10-compliant declarative WAF policy. It is worth noting that, although this WAF policy is fully compliant with the OWASP Top 10 recommendations, it contains elements that need to be customized for each individual application. It should only be treated as a starting point for a production-ready WAF policy, which would most likely need additional elements. The full configuration of the declarative policy used in this example can be found on CodeShare: Example OWASP Top 10-compliant declarative WAF policy

API Discovery and Auto Generation of Swagger Schema

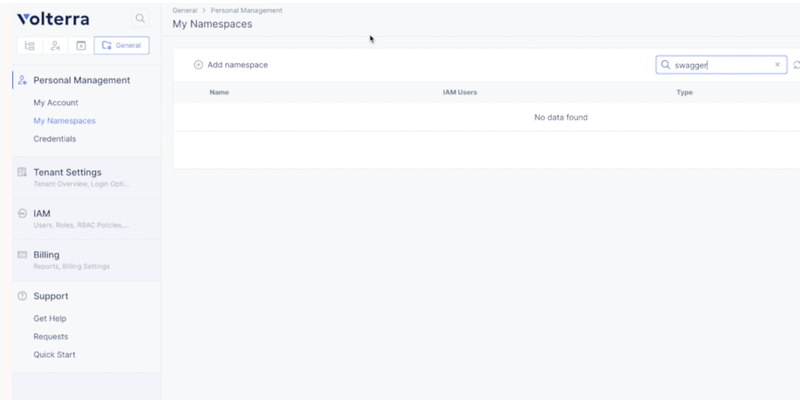

API Discovery

API discovery helps in learning, searching, and identifying loopholes when apps communicate via APIs, especially in a large microservices ecosystem. After discovery, the system analyzes the APIs and then knows how to secure them from malicious requests.

Customer Challenges

With large ecosystems, integrations, and distributed microservices, there is a huge increase in the number of API attacks. Most of these API attacks come from authenticated users and are very hard to detect. Also, security controls for web apps are totally different from security controls for APIs. Customers' increased adoption of microservice architecture has increased the complexity of managing, debugging, and securing applications. With microservices, all the traffic between apps uses REST/gRPC APIs that are multiplexed on the same network port (e.g. HTTP 443) and, as a result, network-level security and connectivity are not very useful anymore. With customers adopting a multi-cloud strategy, services might reside in different clouds/VPCs, so customers need a uniform way to apply app-level policies that enforce zero-trust API security across service-to-service communication. Also, the SecOps team has the administrative overhead of manually tracking changes to APIs in a multi-cloud environment, and the Dev/DevOps team needs an easier tool to debug the apps.

Solution Description

Volterra’s AI/ML learning engine uniquely discovers API endpoints used during service-to-service communication. This feature provides users with debugging capabilities for distributed apps that are deployed on a single cloud or across multiple clouds. The discovered API endpoints can be used to create an API-level security policy that enforces tighter service-to-service communication. Also, the swagger schema helps in analyzing and debugging the request logs. SecOps can download it and use it for audit purposes. It also serves as an input to third-party tools.
Step by step process to enable API discovery and auto-generation of swagger schema

There are three main steps in enabling API discovery and downloading the swagger schema:

Step 1. Enabling API discovery, which means creating an app-type config.
Step 2. Deploying an application on Kubernetes and creating the HTTP load balancer.
Step 3. Observing the app through the service mesh graph and the HTTP load balancer dashboard, which includes downloading the swagger schema.

Let us see how to configure this on VoltConsole:

Step 1 Create an application namespace under the general namespace tab: General > My Namespaces > Add name space.

Step 2 Next, we need to create an app type, which is under the shared tab: Shared > AI&ML > Add app type. Here, while creating a new app type, we need to enable the API discovery feature and the markup setting to learn from direct traffic. Save and exit.

Step 3 Next, let's go to the app tab to deploy the app: App > Applications > Virtual K8s > Add virtual K8s. While creating the new virtual K8s, give it a name and select ves-io-all-res as the virtual site. Save and exit. We have to wait until the cluster status is ready. Next, we have to download the kubeconfig file for the virtual K8s cluster. Now, we use kubectl to deploy the application with the command below:

kubectl --kubeconfig $YOURKUBECONFIGFILE apply -f kubenetes-manifest.yml --namespace $YOURNAMESPACE

Step 4 Now, we can go to VoltConsole and check the status of this app. We wait for the pods to become ready and, once all the pods are ready, we can create the load balancer (LB) for the frontend service. Next, we give the LB a name and label the HTTP LB with the key ves-io/app_type and the value default (which is the name of the app type). Provide the domain name under basic config. This guide provides instructions on how to delegate your DNS domain to Volterra using VoltConsole. Delegating the domain to Volterra enables Volterra to manage the domain and be the authoritative domain name server for the domain.
Volterra checks the DNS domain configured in the virtual host and verifies that the tenant owns that domain.

Step 5 Now select the origin pool, and create a new pool with the origin server type and name as below. Also, select the network on the site as vK8s network on site. Select the correct port number for the service, then save and exit the configuration.

Step 6 Now, let's navigate to HTTP LB dashboard > security monitoring > API endpoints. Here you will see all the API endpoints that are discovered on the HTTP LB, and you can download the swagger right from the HTTP LB dashboard page.

Step 7 Now, let's navigate to the service mesh graph, where we can view all of the application's microservices, how they are connected, and how the east-west and north-south traffic is flowing: App Namespace -> Mesh -> Service Mesh -> API endpoints tab

Step 8 Now, let's click on the API endpoints tab, and we will see all the API endpoints discovered for the default app type. You can click on the Schema, go to the PII & learned API data, and click the download button to download the swagger schema for each specific API endpoint. If we want to download the swagger schema for the entire application, we go back to the API endpoints tab and download the swagger from there.

Conclusion

With the increased attack surface of distributed microservices and other large ecosystems, a feature such as API discovery that can auto-generate the swagger file really helps in mitigating malicious API attacks. This feature provides users with debugging capabilities for distributed apps that are deployed on a single cloud or across multiple clouds. In addition, the discovered API endpoints can be used to create an API-level security policy that enforces tighter service-to-service communication. The request logs are continuously analyzed to learn the schema for every learned API endpoint.
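Once a swagger file has been downloaded, it can be inspected with a few lines of script, for example to list the discovered endpoints for an audit or to compare two downloads. The sketch below assumes only that the file is an OpenAPI/Swagger JSON document with a top-level "paths" object. The two sample schemas (`V1`, `V2`), their paths, and the helper names are hypothetical and are not taken from Volterra output.

```python
import json

# HTTP verbs that may appear as operation keys under a path item.
HTTP_METHODS = {"get", "put", "post", "delete", "patch", "head", "options", "trace"}


def list_endpoints(schema):
    """Return sorted (METHOD, path) pairs found under the schema's "paths" key."""
    endpoints = []
    for path, operations in schema.get("paths", {}).items():
        for key in operations:
            if key.lower() in HTTP_METHODS:  # skip non-operation keys such as "parameters"
                endpoints.append((key.upper(), path))
    return sorted(endpoints)


def diff_endpoints(old_schema, new_schema):
    """Report endpoints that appear in only one of the two schemas."""
    old = set(list_endpoints(old_schema))
    new = set(list_endpoints(new_schema))
    return {"added": sorted(new - old), "removed": sorted(old - new)}


# Hypothetical stand-ins for two downloaded swagger files.
V1 = json.loads('{"paths": {"/cart": {"get": {}, "post": {}}, "/product/{id}": {"get": {}}}}')
V2 = json.loads('{"paths": {"/cart": {"get": {}}, "/checkout": {"post": {}}, "/product/{id}": {"get": {}}}}')

if __name__ == "__main__":
    for method, path in list_endpoints(V2):
        print(method, path)
    print(diff_endpoints(V1, V2))
```

In practice the two dictionaries would come from `json.load()` on the files downloaded from the API endpoints tab.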
The generated swagger schema can be downloaded through the UI or the Volterra API. SecOps can download it and use it for audit purposes, and the Dev/DevOps team can use it to see the delta in variables/paths between different versions. It can be used as input to other third-party tools, and it reduces the administrative overhead of managing the documents.

Securing GraphQL with Advanced WAF declarative policies

While REST has become the industry standard for designing Web APIs, GraphQL is rising in popularity as a more flexible and efficient alternative.

Problem statement

Similarly to REST, GraphQL is usually served over HTTP and is prone to the typical Web API security vulnerabilities, such as injection attacks, Denial of Service (DoS) attacks and abuse of flawed authorization. However, the mitigation strategies required to prevent a security breach of the GraphQL server must be specifically tailored to GraphQL. Unlike REST, where Web resources are identified by multiple URLs, a GraphQL server operates on a single URL. Therefore, Web Application Firewalls (WAFs) configured to filter traffic based on URLs and query strings would not effectively protect a GraphQL app. Instead, WAF policies for GraphQL must analyze and operate on the query level. In addition, GraphQL allows batching multiple queries in a single network call, which makes possible a batching attack specific to GraphQL.

Proposed solution

BIG-IP Advanced WAF has a number of features specifically designed for securing GraphQL APIs: a GraphQL Security Policy Template that enables quick deployment of GraphQL WAF policies; a GraphQL Content Profile that groups all the configurations relevant to GraphQL; support for the most common GraphQL use cases, where the JSON payload is sent over POST (body) or GET (URL parameter) requests; native parsing of GraphQL, which enables the application of attack signatures against each JSON field with a very low rate of false positives; protection against complexity-based Denial of Service attacks by allowing the configuration of a maximum query depth; support for enforcing the best practice of deploying GraphQL APIs with introspection disabled, since introspection is the primary way for attackers to understand the API specification and tailor their attacks accordingly; an option to control the number of allowed batched requests; GraphQL-specific security violations allowing the fine tuning of the WAF
policy.

Example configuration

GraphQL configuration of the WAF policy can be done through the GUI or programmatically, through the declarative policy model, allowing easy integration in automated environments that leverage, for example, CI/CD tools. As an example, below is a basic GraphQL declarative policy, demonstrating some of the features listed above:

{ "policy" : { "applicationLanguage" : "utf-8", "caseInsensitive" : false, "description" : "WAF Policy with GraphQL Profile", "enablePassiveMode" : false, "enforcementMode" : "blocking", "signature-settings": { "signatureStaging": false }, "filetypes" : [ { "allowed" : true, "checkPostDataLength" : true, "checkQueryStringLength" : true, "checkRequestLength" : true, "checkUrlLength" : true, "name" : "php", "performStaging" : true, "postDataLength" : 1000, "queryStringLength" : 1000, "requestLength" : 5000, "responseCheck" : false, "type" : "explicit", "urlLength" : 100 } ], "fullPath" : "/Common/waf_policy_withgraphql", "graphql-profiles" : [ { "attackSignaturesCheck" : true, "defenseAttributes" : { "allowIntrospectionQueries" : true, "maximumBatchedQueries" : 10, "maximumStructureDepth" : 10, "maximumTotalLength" : 100000, "maximumValueLength" : 10000, "tolerateParsingWarnings" : true }, "description" : "", "metacharElementCheck" : false, "name" : "graphql_profile" } ], "name" : "waf_policy_withgraphql", "protocolIndependent" : false, "softwareVersion" : "16.1.0", "template" : { "name" : "POLICY_TEMPLATE_GRAPHQL" }, "type" : "security", "urls" : [ { "attackSignaturesCheck" : true, "clickjackingProtection" : false, "description" : "", "disallowFileUploadOfExecutables" : false, "html5CrossOriginRequestsEnforcement" : { "enforcementMode" : "disabled" }, "isAllowed" : true, "mandatoryBody" : false, "method" : "*", "methodsOverrideOnUrlCheck" : false, "name" : "/graphql", "performStaging" : false, "protocol" : "https", "type" : "explicit", "urlContentProfiles" : [ { "contentProfile" : { "name" : "graphql_profile" }, "headerName" : "*", "headerOrder" : "default", "headerValue" : "*", "type" : "graphql" } ] } ] } }

Conclusion

As the adoption of GraphQL increases, so does the likelihood that security threats tailored for this (comparatively) new Web API technology will emerge. The features present in Advanced WAF enable a solid response against GraphQL-specific attacks, while allowing for integration in the most advanced CI/CD-driven environments.

Other resources

UDF lab: AWAF advanced security for GraphQL in CI/CD pipeline

Many thanks to Serge Levin for his contribution to this article.

Use of NGINX Controller to Authenticate API Calls

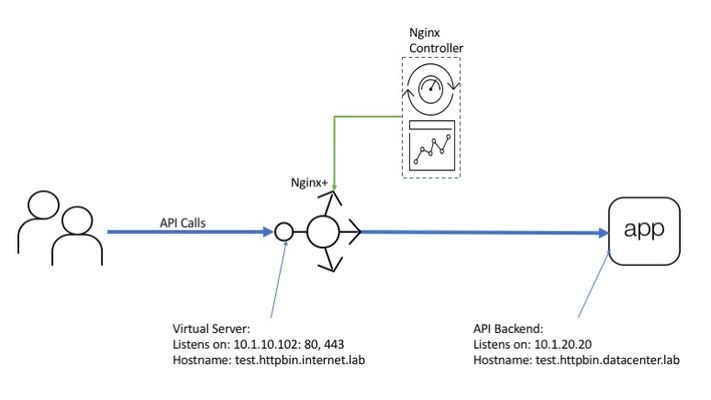

API call authentication is essential for API security and billing. Authentication helps to reduce load by dropping anonymous calls, and it provides a clear view of per-user/group usage, since every call bears an identity marker. NGINX Controller provides an easy method for API owners to set up authentication for calls that traverse NGINX Plus instances acting as API gateways.

What is API authentication and how is it different from authorization?

API authentication is the action in which an API gateway verifies the identity of a call by checking an identity marker (token, credentials, ...) carried in the call. Authorization, in turn, is usually based on authentication. Authorization mechanisms extract an identity marker from a call and check whether this identity is allowed to make the call.

There are many approaches to authenticating an API call. HTTP Basic: the API call carries credentials in the HTTP Authorization header; they are Base64-encoded but otherwise clear text, e.g. "Authorization: Basic dXNlcjpwYXNzd29yZA==". API key: the API call carries an API key in the request (multiple injection points are possible), e.g. "GET /endpoint?token=dXNlcjpwYXNzd29yZA". OAuth: a complex open standard for access delegation. When OAuth is in use, the API consumer obtains a cryptographically signed JWT (JSON Web Token) from an external identity provider and places it in the call. The server, in turn, uses a JWK (JSON Web Key) obtained from the same identity provider to verify the token signature and make sure the data in the JWT is authentic.

As you may already know from previous articles, NGINX Controller doesn't process traffic on its own; it configures NGINX Plus instances, which run as API gateways, to apply all necessary actions and policies to the traffic. The picture below shows how all these pieces work together. Picture 1. Controller can set up two approaches for authenticating API calls: API key based and OAuth (JWT) based. This article covers the procedures needed to configure both supported API call authentication methods.
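To make the HTTP Basic example above concrete, the sketch below builds and decodes the same header value using only the Python standard library. It illustrates that the Base64 string is an encoding, not encryption: anyone who sees the header can recover the credentials. The function names are hypothetical, and the code is not part of NGINX Controller.

```python
import base64


def basic_auth_header(username, password):
    """Build the value of an HTTP Basic Authorization header."""
    raw = "{}:{}".format(username, password).encode("utf-8")
    return "Basic " + base64.b64encode(raw).decode("ascii")


def decode_basic_auth(header_value):
    """Recover (username, password) from a Basic Authorization header value."""
    scheme, _, encoded = header_value.partition(" ")
    if scheme.lower() != "basic":
        raise ValueError("not a Basic Authorization header")
    decoded = base64.b64decode(encoded).decode("utf-8")
    # Only the first colon separates the two parts; a password may itself contain colons.
    username, _, password = decoded.partition(":")
    return username, password


if __name__ == "__main__":
    header = basic_auth_header("user", "password")
    print(header)                     # Basic dXNlcjpwYXNzd29yZA==
    print(decode_basic_auth(header))  # ('user', 'password')
```

This is why Basic credentials should only ever travel over TLS, and why the token-based methods described next are usually preferred for APIs.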
As a prerequisite, I assume you already have NGINX Plus and Controller set up, along with at least one published API (if not, please take a look at the previous article for details).

Assume an API owner has developed an API and wants to make it available to authenticated users only. The owner knows that customers have different use cases, and that a different authentication method fits each use case better. So users must be authenticated either by API key or by JWT.

As discussed in the previous article, NGINX Controller abstracts API gateway configuration with higher-level concepts for ease of configuration. The abstractions are shown in the picture below. Picture 2. The API definition, gateway, and workload group together form a data path: how calls get accepted and where they get forwarded if all policies are passed. Policies contain the verifications and actions that apply to every API call traversing the data path. Picture 3. Among others, there is an authentication policy, which authenticates API calls. As shown in picture 2, the policy applies to a published API instance, which in turn represents the data path for the traffic. Therefore the policy affects every call that passes through.

Usually each authentication method fits one use case better than the others. For example, robots/bots are better served by an API key, because obtaining a JWT from an authorization server is complex and requires tooling that a bot may not have. For humans the situation is the opposite: it is much more natural for a user to type a username/password and get a token in exchange under the covers than to copy and paste a long API key into every call. Therefore OAuth fits better here.

The steps below cover configuration of both supported authentication methods: API key and OAuth 2.0. Assume Company_1 has bought access to an API. As a customer, Company_1 wants to consume the API automatically with robots and allow its employees to make requests manually as well.
In order to authenticate employees using OAuth and robots with an API key, two different identity providers need to be configured on Controller. First we create a provider for employees. For NGINX Plus to verify the JWT in a call, a JWK is required. There are two ways to supply the JWK to the provider: upload a file or reference it by web URL. When it is referenced by URL, NGINX Plus automatically refreshes the key periodically. These two approaches are shown in the two pictures below.

A second provider is used to authenticate Customer_1 robots with a simple API key. There are two options for creating API keys in a provider. The first is to create them manually. The second is to upload a CSV file containing user account credentials.

user@linux$ cat api-clients.csv
CUSTOMER_1_ROBOT_1000,2b31388ccbcb4605cb2b77447120c27ecd7f98a47af9f17107f8f12d31597aa2
CUSTOMER_1_ROBOT_1001,71d8c4961e228bfc25cb720e0aa474413ba46b49f586e1fc29e65c0853c8531a
CUSTOMER_1_ROBOT_1002,fc979b897e05369ebfd6b4d66b22c90ef3704ef81e4e88fc9907471b0d58d9fa
CUSTOMER_1_ROBOT_1003,e18f4cacd6fc4341f576b3236e6eb3b5decf324552dfdd698e5ae336f181652a
CUSTOMER_1_ROBOT_1004,3351ac9615248518348fbddf11d9c597967b1e526bd0c0c20b2fdf8bfb7ae30a

The next step is to assign an authentication policy to a published API. Each authentication policy may include only one client group, therefore we need two of them: one to authenticate employees with JWT and one to authenticate robots with an API key. The policy for robots specifies the provider and the location where the API key is placed. Besides the query string used in our example, header, cookie, and bearer token locations are also supported. The policy for employees specifies the provider and the JWT location.

NOTE: (Limitation) Policies in an environment are combined with a logical AND. This means an environment can effectively have only one authentication policy; otherwise the identity requirements of all of them must be satisfied for a call to pass.
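A file like the api-clients.csv shown above can be produced with a short script instead of by hand. The sketch below assumes, based only on the sample in this article, that each row is `name,key` with no header line and that a key is 64 hexadecimal characters. The helper names are hypothetical, and the exact format Controller accepts should be confirmed against its documentation.

```python
import csv
import io
import secrets


def generate_api_clients(prefix, start, count):
    """Return (client_name, api_key) rows, one random key per client."""
    # secrets.token_hex(32) yields 32 random bytes rendered as 64 hex characters,
    # matching the key length seen in the article's sample file.
    return [("{}_{}".format(prefix, start + i), secrets.token_hex(32)) for i in range(count)]


def to_csv(rows):
    """Serialize rows as headerless CSV text, one client per line."""
    buffer = io.StringIO()
    csv.writer(buffer, lineterminator="\n").writerows(rows)
    return buffer.getvalue()


if __name__ == "__main__":
    clients = generate_api_clients("CUSTOMER_1_ROBOT", 1000, 5)
    print(to_csv(clients), end="")
```

Using the `secrets` module rather than `random` matters here, because API keys are credentials and should come from a cryptographically secure source.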
Once the policy for employees is set up and the config has been pushed to the NGINX Plus instance, call authentication is in place and we can test it. First, let us make sure unauthenticated calls are rejected. I use Postman as the API client. As you can see, a request without a JWT is rejected with a 401 "Unauthorized" response code. Now I obtain a valid token from the identity provider and insert it in the same call. A call with a valid JWT successfully passes authentication and brings a response back!

Now we can replace the authentication policy with the policy for robots and conduct the same tests. I am emulating a robot with a console tool that cannot act as an OAuth client to retrieve a JWT. So robots simply append the API key to the query string.

An API call without any token is blocked.

ubuntu@ip-10-1-1-7:~$ http https://prod.httpbin.internet.lab/uuid
HTTP/1.1 401 Unauthorized
Connection: keep-alive
Content-Length: 40
Content-Type: application/json
Date: Wed, 18 Dec 2019 00:12:26 GMT
Server: nginx/1.17.6

{ "message": "Unauthorized", "status": 401 }

An API call with a valid API key in the query string is allowed.

ubuntu@ip-10-1-1-7:~$ http https://prod.httpbin.internet.lab/uuid?token=2b31388ccbcb4605cb2b77447120c27ecd7f98a47af9f17107f8f12d31597aa2
HTTP/1.1 200 OK
Access-Control-Allow-Credentials: true
Access-Control-Allow-Origin: *
Connection: keep-alive
Content-Length: 53
Content-Type: application/json
Date: Wed, 18 Dec 2019 00:12:57 GMT
Server: nginx/1.17.6

{ "uuid": "b57f6b72-7730-4d0e-bbb7-533af8e2a4c0" }

Therefore, even a feature as complex as API call authentication becomes much easier, yet remains flexible, when managed by NGINX Controller. Hope this overview was useful. Good luck!

Advanced API security for Kubernetes containers running in AWS - NGINX App Protect per-service deployment through a CI/CD pipeline

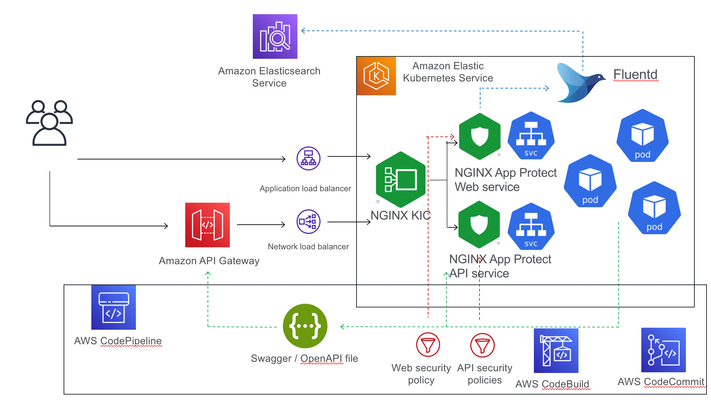

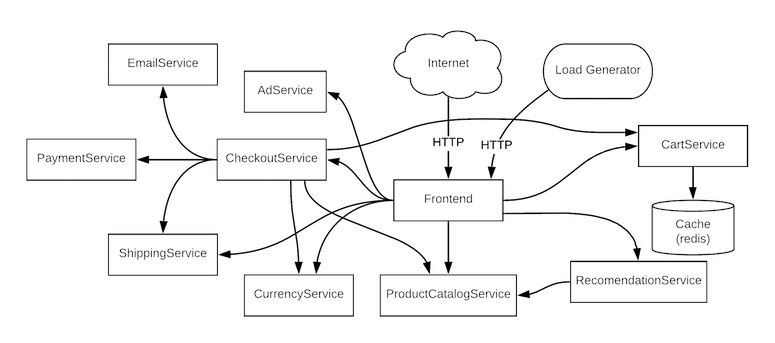

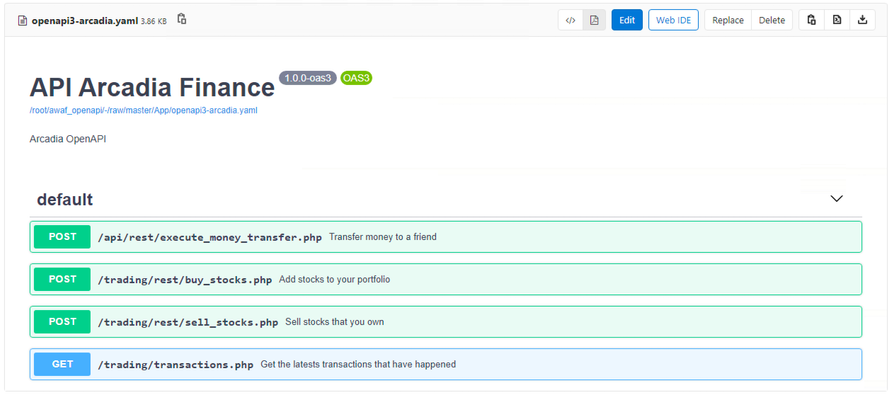

Introduction

When the design objective for Kubernetes security is the separate management of WAF security policies, the solution is NGINX App Protect deployed per-service. This article describes such a deployment, with NGINX App Protect augmenting AWS API Gateway to provide advanced security for API workloads, deployed through a CI/CD pipeline. The advantage of deploying NGINX App Protect per-service in a Kubernetes environment is the separation of security policies between different services, allowing for better customisation of the policies and easier portability to different environments.

In this particular instance, I used a demo application, Arcadia Finance, that has a Web interface and also exposes an API allowing users to make financial transactions. The API is described in an OpenAPI 3.0 file, allowing for the automated building of the positive security policy elements (allow list elements), whereas the web security policy is configured manually. The difference in configuration methods between the API and Web policies, together with the additional requirement for policy separation and independent portability between environments, prompted the use of two separate NGINX App Protect instances, each securing its respective service (Web and API).

The deployment used AWS EKS as the Kubernetes environment where, besides the Arcadia Finance application components and NGINX App Protect instances, there is also a Fluentd deployment configured as a Syslog server for the security logs sent by the NGINX App Protect instances. The logs are then sent to AWS Elasticsearch and displayed via Kibana NGINX App Protect dashboards. Access to the EKS cluster is provided by an NGINX Ingress Controller instance. The Web interface is exposed externally through an AWS Application Load Balancer, which handles the SSL offloading, while the API is exposed through a Network Load Balancer.
The API is published externally through AWS API Gateway, which provides basic security and uses a VPC link to connect to the Network Load Balancer.

The configuration

The configuration (Arcadia Finance deployment, NGINX KIC, NGINX App Protect configuration, OpenAPI file) is stored in AWS CodeCommit and deployed through AWS CodePipeline and AWS CodeBuild. The API Gateway configuration is described in an OpenAPI file annotated with AWS API Gateway-specific elements:

{ "openapi" : "3.0.1", "info" : { "title" : "API Arcadia Finance", "description" : "Arcadia OpenAPI", "version" : "1.0.0-oas3" }, "servers" : [ { "url" : "https://api.cloud-app.uk" } ], "paths" : { "/api/rest/execute_money_transfer.php" : { "post" : { "requestBody" : { "content" : { "application/json" : { "schema" : { "$ref" : "#/components/schemas/MODEL9e8bc4" } } }, "required" : true }, "responses" : { "200" : { "description" : "200 response", "content" : { } } }, "x-amazon-apigateway-request-validator": "Validate body, query string parameters, and headers", "x-amazon-apigateway-gateway-responses": { "BAD_REQUEST_BODY": { "responseTemplates": { "application/json": "{\"message\": \"Bla Bla\"}" } } }, "x-amazon-apigateway-integration" : { "type" : "http_proxy", "uri" : "http://api.cloud-app.uk/api/rest/execute_money_transfer.php", "responses" : { "default" : { "statusCode" : "200" } }, "passthroughBehavior" : "when_no_match", "connectionType" : "VPC_LINK", "connectionId" : "emda4d", "httpMethod" : "POST" } } }, "/trading/transactions.php" : { "get" : { "responses" : { "200" : { "description" : "200 response", "content" : { } } }, "x-amazon-apigateway-request-validator": "Validate body, query string parameters, and headers", "x-amazon-apigateway-gateway-responses": { "BAD_REQUEST_BODY": { "responseTemplates": { "application/json": "{\"message\": \"$context.error.validationErrorString\"}" } } }, "x-amazon-apigateway-integration" : { "type" : "http_proxy", "uri" : 
"http://www.cloud-app.uk/trading/transactions.php", "responses" : { "default" : { "statusCode" : "200" } }, "passthroughBehavior" : "when_no_match", "connectionType" : "VPC_LINK", "connectionId" : "emda4d", "httpMethod" : "GET" } } }, "/trading/rest/sell_stocks.php" : { "post" : { "requestBody" : { "content" : { "application/json" : { "schema" : { "$ref" : "#/components/schemas/MODEL1ed7ad" } } }, "required" : true }, "responses" : { "200" : { "description" : "200 response", "content" : { } } }, "x-amazon-apigateway-request-validator": "Validate body, query string parameters, and headers", "x-amazon-apigateway-gateway-responses": { "BAD_REQUEST_BODY": { "responseTemplates": { "application/json": "{\"message\": \"$context.error.validationErrorString\"}" } } }, "x-amazon-apigateway-integration" : { "type" : "http_proxy", "uri" : "http://api.cloud-app.uk/trading/rest/sell_stocks.php", "responses" : { "default" : { "statusCode" : "200" } }, "passthroughBehavior" : "when_no_match", "connectionType" : "VPC_LINK", "connectionId" : "emda4d", "httpMethod" : "POST" } } }, "/trading/rest/buy_stocks.php" : { "post" : { "requestBody" : { "content" : { "application/json" : { "schema" : { "$ref" : "#/components/schemas/MODEL94f81c" } } }, "required" : true }, "responses" : { "200" : { "description" : "200 response", "content" : { } } }, "x-amazon-apigateway-request-validator": "Validate body, query string parameters, and headers", "x-amazon-apigateway-gateway-responses": { "BAD_REQUEST_BODY": { "responseTemplates": { "application/json": "{\"message\": \"$context.error.validationErrorString\"}" } } }, "x-amazon-apigateway-integration" : { "type" : "http_proxy", "uri" : "http://api.cloud-app.uk/trading/rest/buy_stocks.php", "responses" : { "default" : { "statusCode" : "200" } }, "passthroughBehavior" : "when_no_match", "connectionType" : "VPC_LINK", "connectionId" : "emda4d", "httpMethod" : "POST" } } } }, "components" : { "schemas" : { "MODEL94f81c" : { "required" : [ "action", 
"company", "qty", "stock_price", "trans_value" ], "type" : "object", "properties" : { "trans_value" : { "minimum" : 0, "type" : "number" }, "qty" : { "minimum" : 0, "type" : "integer", "format" : "int32" }, "company" : { "type" : "string" }, "action" : { "type" : "string", "enum" : [ "buy" ] }, "stock_price" : { "minimum" : 0, "type" : "number" } }, "additionalProperties" : false }, "MODEL1ed7ad" : { "required" : [ "action", "company", "qty", "stock_price", "trans_value" ], "type" : "object", "properties" : { "trans_value" : { "minimum" : 0, "type" : "number" }, "qty" : { "minimum" : 0, "type" : "integer", "format" : "int32" }, "company" : { "type" : "string" }, "action" : { "type" : "string", "enum" : [ "sell" ] }, "stock_price" : { "minimum" : 0, "type" : "number" } }, "additionalProperties" : false }, "MODEL9e8bc4" : { "required" : [ "account", "amount", "currency", "friend" ], "type" : "object", "properties" : { "amount" : { "minimum" : 0, "type" : "number" }, "account" : { "type" : "number" }, "currency" : { "type" : "string" }, "friend" : { "type" : "string" } }, "additionalProperties" : false } } }, "x-amazon-apigateway-policy" : { "Version" : "2012-10-17", "Statement" : [ { "Effect" : "Allow", "Principal" : "*", "Action" : "execute-api:Invoke", "Resource" : "arn:aws:execute-api:us-west-2:856265587682:7g8sbh9zs6/*" } ] }, "x-amazon-apigateway-request-validators": { "Validate body, query string parameters, and headers": { "validateRequestParameters": true, "validateRequestBody": true } } } The Ingress objects controlled by NGINX KIC are responsible for steering the traffic to either the Web or API instances of NGINX App Protect: apiVersion: extensions/v1beta1 kind: Ingress metadata: annotations: kubernetes.io/ingress.class: nginx name: arcadia-nginx-kic namespace: default spec: rules: - host: "*.cloud-app.uk" http: paths: - backend: serviceName: api-nap servicePort: 80 path: /api/rest/execute_money_transfer.php - backend: serviceName: api-nap servicePort: 80 
path: /trading/rest/buy_stocks.php - backend: serviceName: api-nap servicePort: 80 path: /trading/rest/sell_stocks.php - backend: serviceName: web-nap servicePort: 80 path: /trading/transactions.php - backend: serviceName: web-nap servicePort: 80 path: / - backend: serviceName: web-nap servicePort: 80 path: /files - backend: serviceName: web-nap servicePort: 80 path: /api - backend: serviceName: web-nap servicePort: 80 path: /app3 The NGINX App Protect configuration is also controlled by AWS CodePipeline, deployed as a ConfigMap and mounted as a volume on the NGINX instance: apiVersion: v1 kind: ConfigMap metadata: name: nginx-conf-map-api namespace: default data: nginx.conf: |+ user nginx; worker_processes auto; load_module modules/ngx_http_app_protect_module.so; error_log /var/log/nginx/error.log debug; events { worker_connections 10240; } http { include /etc/nginx/mime.types; default_type application/octet-stream; sendfile on; keepalive_timeout 65; upstream main_DNS_name { server main; } upstream app2_DNS_name { server app2; } server { listen 80; server_name *.cloud-app.uk; proxy_http_version 1.1; app_protect_enable on; app_protect_policy_file "/etc/nginx/NAP_API_Policy.json"; app_protect_security_log_enable on; app_protect_security_log "/etc/nginx/custom_log_format.json" syslog:server=fluentd.logging.svc.cluster.local:24224; location /trading { client_max_body_size 0; default_type text/html; # set your backend here proxy_pass http://main_DNS_name; proxy_set_header Host $host; } location /api { client_max_body_size 0; default_type text/html; # set your backend here proxy_pass http://app2_DNS_name; proxy_set_header Host $host; } } } apiVersion: apps/v1 kind: Deployment metadata: name: api-nap labels: app: api-nap spec: selector: matchLabels: app: api-nap replicas: 1 template: metadata: labels: app: api-nap spec: containers: - name: api-nap image: 856265587682.dkr.ecr.us-west-2.amazonaws.com/per-service-nginx-app-protect:latest imagePullPolicy: IfNotPresent ports: 
- containerPort: 80 volumeMounts: - name: nginx-conf-map-api-volume mountPath: "/etc/nginx/nginx.conf" subPath: "nginx.conf" readOnly: true - name: nap-api-policy-volume mountPath: "/etc/nginx/NAP_API_Policy.json" subPath: "NAP_API_Policy.json" readOnly: true volumes: - name: nginx-conf-map-api-volume configMap: name: nginx-conf-map-api - name: nap-api-policy-volume configMap: name: nap-api-policy In case of API NGINX App Protect configuration, there is a reference to the OpenAPI file placed by the CI/CD pipeline in an S3 bucket: apiVersion: v1 kind: ConfigMap metadata: name: nap-api-policy namespace: default data: NAP_API_Policy.json: |+ { "policy": { "name": "policy_name", "template": { "name": "POLICY_TEMPLATE_NGINX_BASE" }, "applicationLanguage": "utf-8", "enforcementMode": "blocking", "signature-sets": [ { "name": "High Accuracy Signatures", "block": true, "alarm": true } ], "bot-defense": { "settings": { "isEnabled": true }, "mitigations": { "classes": [ { "name": "trusted-bot", "action": "alarm" }, { "name": "untrusted-bot", "action": "block" }, { "name": "malicious-bot", "action": "block" } ] } }, "open-api-files": [ { "link": "https://per-service-nginx-app-protect.s3.us-west-2.amazonaws.com/arcadia-openapi3-aws.json" } ], "blocking-settings": { "violations": [ { "name": "VIOL_JSON_FORMAT", "alarm": true, "block": true }, { "name": "VIOL_PARAMETER_VALUE_METACHAR", "alarm": false, "block": false }, { "name": "VIOL_HTTP_PROTOCOL", "alarm": true, "block": true }, { "name": "VIOL_EVASION", "alarm": true, "block": true }, { "name": "VIOL_FILETYPE", "alarm": true, "block": true }, { "name": "VIOL_METHOD", "alarm": true, "block": true }, { "block": true, "description": "Disallowed file upload content detected in body", "name": "VIOL_FILE_UPLOAD_IN_BODY" }, { "block": true, "description": "Mandatory request body is missing", "name": "VIOL_MANDATORY_REQUEST_BODY" }, { "block": true, "description": "Illegal parameter location", "name": "VIOL_PARAMETER_LOCATION" }, { 
"block": true, "description": "Mandatory parameter is missing", "name": "VIOL_MANDATORY_PARAMETER" }, { "block": true, "description": "JSON data does not comply with JSON schema", "name": "VIOL_JSON_SCHEMA" }, { "block": true, "description": "Illegal parameter array value", "name": "VIOL_PARAMETER_ARRAY_VALUE" }, { "block": true, "description": "Illegal Base64 value", "name": "VIOL_PARAMETER_VALUE_BASE64" }, { "block": true, "description": "Disallowed file upload content detected", "name": "VIOL_FILE_UPLOAD" }, { "block": true, "description": "Illegal request content type", "name": "VIOL_URL_CONTENT_TYPE" }, { "block": true, "description": "Illegal static parameter value", "name": "VIOL_PARAMETER_STATIC_VALUE" }, { "block": true, "description": "Illegal parameter value length", "name": "VIOL_PARAMETER_VALUE_LENGTH" }, { "block": true, "description": "Illegal parameter data type", "name": "VIOL_PARAMETER_DATA_TYPE" }, { "block": true, "description": "Illegal parameter numeric value", "name": "VIOL_PARAMETER_NUMERIC_VALUE" }, { "block": true, "description": "Parameter value does not comply with regular expression", "name": "VIOL_PARAMETER_VALUE_REGEXP" }, { "block": true, "description": "Illegal URL", "name": "VIOL_URL" }, { "block": true, "description": "Illegal parameter", "name": "VIOL_PARAMETER" }, { "block": true, "description": "Illegal empty parameter value", "name": "VIOL_PARAMETER_EMPTY_VALUE" }, { "block": true, "description": "Illegal repeated parameter name", "name": "VIOL_PARAMETER_REPEATED" } ], "http-protocols": [ { "description": "Header name with no header value", "enabled": true }, { "description": "Chunked request with Content-Length header", "enabled": true }, { "description": "Check maximum number of parameters", "enabled": true, "maxParams": 5 }, { "description": "Check maximum number of headers", "enabled": true, "maxHeaders": 30 }, { "description": "Body in GET or HEAD requests", "enabled": true }, { "description": "Bad multipart/form-data 
request parsing", "enabled": true }, { "description": "Bad multipart parameters parsing", "enabled": true }, { "description": "Unescaped space in URL", "enabled": true } ], "evasions": [ { "description": "Bad unescape", "enabled": true }, { "description": "Directory traversals", "enabled": true }, { "description": "Bare byte decoding", "enabled": true }, { "description": "Apache whitespace", "enabled": true }, { "description": "Multiple decoding", "enabled": true, "maxDecodingPasses": 2 }, { "description": "IIS Unicode codepoints", "enabled": true }, { "description": "%u decoding", "enabled": true } ] }, "methodReference": { "link": "https://per-service-nginx-app-protect.s3.us-west-2.amazonaws.com/methods.txt" }, "filetypeReference": { "link": "https://per-service-nginx-app-protect.s3.us-west-2.amazonaws.com/filetypes.txt" } } }

In this case, the same OpenAPI file is pushed to both AWS API Gateway and, through the S3 bucket, to the NGINX App Protect API instance.

NGINX App Protect augmenting the security provided by AWS API Gateway

The NGINX App Protect API instance enhances the security posture of API Gateway by providing negative security (attack signature matching) and advanced security features such as bot detection. To demonstrate this functionality, a SQLi attack is simulated against the API.

This valid API call, sent from Postman, completes successfully:

A similar call that contains an attack pattern (SQL injection) is blocked by the NGINX App Protect instance:

To demonstrate the bot detection capability, the same valid call is now sent from curl instead of Postman. It matches an untrusted bot signature and is blocked:

curl -vvvk https://api.cloud-app.uk/api/rest/execute_money_transfer.php -d '{"account": 2075894, "amount": 1, "currency": "GBP", "friend": "Vincent"}' -H "Content-Type: application/json" -X POST Note: Unnecessary use of -X or --request, POST is already inferred. * Trying 143.204.170.103... 
* TCP_NODELAY set * Connected to api.cloud-app.uk (143.204.170.103) port 443 (#0) * WARNING: disabling hostname validation also disables SNI. * TLS 1.2 connection using TLS_ECDHE_RSA_WITH_AES_128_GCM_SHA256 * Server certificate: *.cloudfront.net * Server certificate: DigiCert Global CA G2 * Server certificate: DigiCert Global Root G2 > POST /api/rest/execute_money_transfer.php HTTP/1.1 > Host: api.cloud-app.uk > User-Agent: curl/7.54.0 > Accept: */* > Content-Type: application/json > Content-Length: 73 > * upload completely sent off: 73 out of 73 bytes < HTTP/1.1 403 Forbidden < Content-Type: application/json; charset=utf-8 < Content-Length: 37 < Connection: keep-alive < Date: Sat, 28 Nov 2020 20:59:01 GMT < x-amzn-RequestId: 213ca523-173b-42a9-ab41-e3420602bef9 < x-amzn-Remapped-Content-Length: 37 < x-amzn-Remapped-Connection: keep-alive < x-amz-apigw-id: WvIDaFu0PHcFZOg= < Cache-Control: no-cache < x-amzn-Remapped-Server: nginx/1.19.3 < Pragma: no-cache < x-amzn-Remapped-Date: Sat, 28 Nov 2020 20:59:01 GMT < X-Cache: Error from cloudfront < Via: 1.1 9a0d5427f47351631cdee4d5e38248d8.cloudfront.net (CloudFront) < X-Amz-Cf-Pop: LHR50-C1 < X-Amz-Cf-Id: _VYxM43hCyrzj5hJNitbxLkzjww7iygpoWXT7sege-5eySEaKmcRxQ== < {"supportID": "4099392564272510395"} * Connection #0 to host api.cloud-app.uk left intact

The blocked request can be checked in the NGINX App Protect security logs, displayed in the Kibana NGINX App Protect dashboards running on AWS ElasticSearch:

The bot defense behavior can be controlled from the policy file under the CodeCommit repository.
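
An edit of this kind to the policy file, for example switching one bot class from "block" to "alarm", can be sketched in Python. This is only an illustration under stated assumptions: the helper name `set_bot_action` and the trimmed `SAMPLE_POLICY` are hypothetical and not part of the original pipeline, and the sketch assumes the policy keeps the `bot-defense` / `mitigations` / `classes` layout shown in the policy above. In the actual repository the JSON is embedded in the ConfigMap under the `NAP_API_Policy.json` key, so it would need to be extracted first.

```python
import copy
import json

# Trimmed-down policy for illustration only; just the bot-defense section
# from the policy shown in this article is kept.
SAMPLE_POLICY = json.loads("""
{
  "policy": {
    "name": "policy_name",
    "bot-defense": {
      "settings": {"isEnabled": true},
      "mitigations": {
        "classes": [
          {"name": "trusted-bot", "action": "alarm"},
          {"name": "untrusted-bot", "action": "block"},
          {"name": "malicious-bot", "action": "block"}
        ]
      }
    }
  }
}
""")


def set_bot_action(policy_doc, bot_class, action):
    """Return a copy of policy_doc with bot_class mapped to action.

    Hypothetical helper: the input document is left untouched, and a
    KeyError is raised if the bot class is not present in the policy.
    """
    updated = copy.deepcopy(policy_doc)
    classes = updated["policy"]["bot-defense"]["mitigations"]["classes"]
    for entry in classes:
        if entry["name"] == bot_class:
            entry["action"] = action
            return updated
    raise KeyError(f"bot class {bot_class!r} not found in policy")


if __name__ == "__main__":
    new_policy = set_bot_action(SAMPLE_POLICY, "untrusted-bot", "alarm")
    mitigations = new_policy["policy"]["bot-defense"]["mitigations"]
    print(json.dumps(mitigations, indent=2))
```

Returning a modified copy rather than editing in place keeps the original policy available for comparison before the change is committed.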
Changing the action from "block" to "alarm" for "untrusted-bot" and committing the change triggers the pipeline to redeploy the NGINX App Protect policy:

apiVersion: v1 kind: ConfigMap metadata: name: nap-api-policy namespace: default data: NAP_API_Policy.json: |+ { "policy": { "name": "policy_name", "template": { "name": "POLICY_TEMPLATE_NGINX_BASE" }, "applicationLanguage": "utf-8", "enforcementMode": "blocking", "signature-sets": [ { "name": "High Accuracy Signatures", "block": true, "alarm": true } ], "bot-defense": { "settings": { "isEnabled": true }, "mitigations": { "classes": [ { "name": "trusted-bot", "action": "alarm" }, { "name": "untrusted-bot", "action": "alarm" }, { "name": "malicious-bot", "action": "block" } ] } }, ...............................................................................................................

The same curl call is now allowed:

curl -vvvk https://api.cloud-app.uk/api/rest/execute_money_transfer.php -d '{"account": 2075894, "amount": 1, "currency": "GBP", "friend": "Vincent"}' -H "Content-Type: application/json" -X POST Note: Unnecessary use of -X or --request, POST is already inferred. * Trying 143.204.192.29... 
* TCP_NODELAY set * Connected to api.cloud-app.uk (143.204.192.29) port 443 (#0) * ALPN, offering h2 * ALPN, offering http/1.1 * successfully set certificate verify locations: * CAfile: /etc/ssl/cert.pem CApath: none * TLSv1.2 (OUT), TLS handshake, Client hello (1): * TLSv1.2 (IN), TLS handshake, Server hello (2): * TLSv1.2 (IN), TLS handshake, Certificate (11): * TLSv1.2 (IN), TLS handshake, Server key exchange (12): * TLSv1.2 (IN), TLS handshake, Server finished (14): * TLSv1.2 (OUT), TLS handshake, Client key exchange (16): * TLSv1.2 (OUT), TLS change cipher, Change cipher spec (1): * TLSv1.2 (OUT), TLS handshake, Finished (20): * TLSv1.2 (IN), TLS change cipher, Change cipher spec (1): * TLSv1.2 (IN), TLS handshake, Finished (20): * SSL connection using TLSv1.2 / ECDHE-RSA-AES128-GCM-SHA256 * ALPN, server accepted to use h2 * Server certificate: * subject: CN=*.cloud-app.uk * start date: Nov 18 00:00:00 2020 GMT * expire date: Dec 17 23:59:59 2021 GMT * issuer: C=US; O=Amazon; OU=Server CA 1B; CN=Amazon * SSL certificate verify ok. * Using HTTP2, server supports multi-use * Connection state changed (HTTP/2 confirmed) * Copying HTTP/2 data in stream buffer to connection buffer after upgrade: len=0 * Using Stream ID: 1 (easy handle 0x7fc877008200) > POST /api/rest/execute_money_transfer.php HTTP/2 > Host: api.cloud-app.uk > User-Agent: curl/7.64.1 > Accept: */* > Content-Type: application/json > Content-Length: 75 > * Connection state changed (MAX_CONCURRENT_STREAMS == 128)! 
* We are completely uploaded and fine < HTTP/2 200 < content-type: text/html; charset=UTF-8 < content-length: 155 < date: Mon, 30 Nov 2020 11:02:19 GMT < x-amzn-requestid: 9bdb2379-06c4-4970-a528-cd8de9cb75b3 < x-amzn-remapped-content-length: 155 < x-amzn-remapped-connection: keep-alive < x-amz-apigw-id: W0WhMH_TvHcFndQ= < x-amzn-remapped-server: nginx/1.19.3 < vary: Accept-Encoding < x-amzn-remapped-date: Mon, 30 Nov 2020 11:02:19 GMT < x-cache: Miss from cloudfront < via: 1.1 bb501579906725a97059c817430425cf.cloudfront.net (CloudFront) < x-amz-cf-pop: LHR3-C1 < x-amz-cf-id: xo4zfKVqUeejkHzLPArADi1rxRxTJkg61YgfhruAR4KjdpMSnYymkQ== < * Connection #0 to host api.cloud-app.uk left intact {"name":"Vincent", "status":"success","amount":"1", "currency":"GBP", "transid":"753910682", "msg":"The money transfer has been successfully completed "}* Closing connection 0

The new bot defense behavior can be checked in the NGINX App Protect Kibana dashboard:

Conclusion

To recap, this article has demonstrated the per-service deployment model for NGINX App Protect in a Kubernetes environment. The main advantages of this deployment model are the independent management and the portability of each security policy. NGINX App Protect raises the security level provided by the API Gateway by adding negative security and advanced security features such as bot detection. The configuration is controlled by a CI/CD pipeline, in this case AWS CodePipeline, and the same OpenAPI file used to configure the AWS API Gateway is also ingested by the NGINX App Protect API instance. Lastly, the security logs sent by NGINX App Protect through Fluentd to AWS ElasticSearch are displayed in Kibana dashboards.

Protecting gRPC based APIs with NGINX App Protect