SANS 20 Critical Security Controls

A couple of days ago, the SANS Institute announced the release of a major update (Version 3.0) to the 20 Critical Controls, a prioritized baseline of information security measures designed to provide continuous monitoring to better protect government and commercial computers and networks from cyber attacks. The information security threat landscape is always changing, especially this year with the well-publicized breaches. The controls have been tested and provide an effective approach to defending against cyber attacks. The focus is on critical technical areas that can help an organization prioritize efforts to protect against the most common and dangerous attacks. Automating security controls is another key area, to help gauge and improve the security posture of an organization.

The update takes into account the information gleaned from law enforcement agencies, forensics experts and penetration testers who have analyzed the various methods of attack. SANS outlines the controls that would have prevented those attacks from being successful. Version 3.0 was developed to take the control framework to the next level. SANS has realigned the 20 controls and the associated sub-controls based on the current technology and threat environment, including the new threat vectors. Sub-controls have been added to assist with rapid detection and prevention of attacks. The 20 Controls have been aligned to the NSA’s Associated Manageable Network Plan Revision 2.0 Milestones. SANS has added definitions, guidelines and proposed scoring criteria to evaluate tools for their ability to satisfy the requirements of each of the 20 Controls. Lastly, it has mapped the findings of the Australian Government Department of Defence, which produced the Top 35 Key Mitigation Strategies, to the 20 Controls, providing measures to help reduce the impact of attacks.
The 20 Critical Security Controls are:

1. Inventory of Authorized and Unauthorized Devices
2. Inventory of Authorized and Unauthorized Software
3. Secure Configurations for Hardware and Software on Laptops, Workstations, and Servers
4. Secure Configurations for Network Devices such as Firewalls, Routers, and Switches
5. Boundary Defense
6. Maintenance, Monitoring, and Analysis of Security Audit Logs
7. Application Software Security
8. Controlled Use of Administrative Privileges
9. Controlled Access Based on the Need to Know
10. Continuous Vulnerability Assessment and Remediation
11. Account Monitoring and Control
12. Malware Defenses
13. Limitation and Control of Network Ports, Protocols, and Services
14. Wireless Device Control
15. Data Loss Prevention
16. Secure Network Engineering
17. Penetration Tests and Red Team Exercises
18. Incident Response Capability
19. Data Recovery Capability
20. Security Skills Assessment and Appropriate Training to Fill Gaps

And of course, F5 has solutions that can help with most, if not all, of the 20 Critical Controls.

ps

Resources:

• SANS 20 Critical Controls
• Top 35 Mitigation Strategies: DSD Defence Signals Directorate
• NSA Manageable Network Plan (pdf)
• Internet Storm Center
• Google Report: How Web Attackers Evade Malware Detection
• F5 Security Solutions

What is a Strategic Point of Control Anyway?

From mammoth hunting to military maneuvers to the datacenter, the key to success is control.

Recalling your elementary school lessons, you’ll probably remember that mammoths were large and dangerous creatures and, like most animals, they were quite deadly to primitive man. Yet man found a way to hunt them effectively and, we assume, with more than a small degree of success, as we are still here and, well, the mammoths aren’t.

Marx Cavemen. Photo and artwork: Fred R Hinojosa.

The theory of how man successfully hunted ginormous creatures like the mammoth goes something like this: a group of hunters would single out a mammoth and herd it toward a point at which the hunters would have an advantage, such as a narrow mountain pass or a clearing enclosed by large rocks. The qualifying criterion for the place in which the hunters would finally confront their next meal was that it afforded the hunters a strategic point of control over the mammoth’s movement. The mammoth could not move away without either (a) climbing sheer rock walls or (b) being attacked by the hunters. By forcing mammoths into a confined space, the hunters controlled the environment and the mammoth’s ability to flee, thus a successful hunt was had by all. At least by all the hunters; the mammoths probably didn’t find it successful at all.

Whether you consider mammoth hunting or military maneuvers or strategy-based games (chess, checkers), one thing remains the same: a winning strategy almost always involves forcing the opposition into a situation over which you have control. That might be a mountain pass, or a densely wooded forest, or a bridge. The key is to force the entire complement of the opposition through an easily and tightly controlled path. Once they’re on that path, and can’t turn back, you can execute your plan of attack.
These easily and highly constrained paths are “strategic points of control.” They are strategic because they are the points at which you are empowered to perform some action with a high degree of assurance of success. In data center architecture there are several “strategic points of control” at which security, optimization, and acceleration policies can be applied to inbound and outbound data. These strategic points of control are important to recognize as they are the most efficient, and effective, points at which control can be exerted over the use of data center resources.

DATA CENTER STRATEGIC POINTS of CONTROL

In every data center architecture there are aggregation points. These are points (one or more components) through which all traffic is forced to flow, for one reason or another. For example, the most obvious strategic point of control within a data center is at its perimeter: the router and firewalls that control inbound access to resources and in some cases control outbound access as well. All data flows through this strategic point of control, and because it’s at the perimeter of the data center it makes sense to implement broad resource access policies at this point. Similarly, strategic points of control occur internal to the data center at several “tiers” within the architecture. Several of these tiers are:

Storage virtualization provides a unified view of storage resources by virtualizing storage solutions (NAS, SAN, etc.). Because the storage virtualization tier manages all access to the resources it is managing, it is a strategic point of control at which optimization and security policies can be easily applied.

Application delivery / load balancing virtualizes application instances and ensures availability and scalability of an application. Because it is virtualizing the application, it becomes a point of aggregation through which all requests and responses for an application must flow. It is a strategic point of control for application security, optimization, and acceleration.

Network virtualization is emerging internal to the data center architecture as a means to provide inter-virtual machine connectivity more efficiently than perhaps can be achieved through traditional network connectivity. Virtual switches often reside on a server on which multiple applications have been deployed within virtual machines. Traditionally it might be necessary for communication between those applications to physically exit and re-enter the server’s network card. But by virtualizing the network at this tier the physical traversal path is eliminated (and the associated latency, by the way) and more efficient inter-VM communication can be achieved. This is a strategic point of control at which access to applications at the network layer should be applied, especially in a public cloud environment where inter-organizational residency on the same physical machine is highly likely.

OLD SKOOL VIRTUALIZATION EVOLVES

You might have begun noticing a central theme to these strategic points of control: they are all points at which some kind of virtualization, and thus aggregation, occurs naturally in a data center architecture. This is the original (first) kind of virtualization: the presentation of many resources as a single resource, a la load balancing and other proxy-based solutions. When a one-to-many (1:M) virtualization solution is employed, it naturally becomes a strategic point of control by virtue of the fact that all “X” traffic must flow through that solution, and thus policies regarding access, security, logging, etc. can be applied in a single, centrally managed location. The key here is “strategic” and “control”. The former relates to the ability to apply the latter over data at a single point in the data path. This kind of 1:M virtualization has been a part of datacenter architectures since the mid 1990s.
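The 1:M idea above can be sketched in a few lines. This is a minimal illustration, not an F5 or BIG-IP API: the `VirtualServer` class, the `block_admin_from_outside` policy, the backend names, and the request dictionary shape are all assumptions made up for the example. It shows one client-facing entry point fronting several backends, with access policy and logging applied once at that single point.

```python
from itertools import cycle
from typing import Callable, List

# Hypothetical names throughout; this is not an F5 or BIG-IP API.
Policy = Callable[[dict], bool]  # returns True if the request may proceed


class VirtualServer:
    """One client-facing entry point that fronts many backends (1:M)."""

    def __init__(self, backends: List[str], policies: List[Policy]) -> None:
        self.policies = policies
        self.log: List[str] = []
        # Round-robin is simply the easiest distribution choice for a sketch.
        self._next_backend = cycle(backends)

    def handle(self, request: dict) -> str:
        # Every request crosses this method, so each policy is applied once,
        # here, rather than separately on every backend.
        for policy in self.policies:
            if not policy(request):
                self.log.append(f"denied {request['path']}")
                return "403 denied"
        backend = next(self._next_backend)
        self.log.append(f"{request['path']} -> {backend}")
        return f"200 from {backend}"


def block_admin_from_outside(request: dict) -> bool:
    """Example access policy: /admin is reachable only by internal clients."""
    return request["internal"] or not request["path"].startswith("/admin")


vs = VirtualServer(["app-1", "app-2", "app-3"], [block_admin_from_outside])
print(vs.handle({"path": "/index", "internal": False}))  # served by a backend
print(vs.handle({"path": "/admin", "internal": False}))  # refused at the proxy
print(vs.log)
```

Because the backends never see a request that failed a policy, adding or changing a rule means touching one place, which is the operational point the text is making.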
1:M virtualization has since evolved to provide ever broader and deeper control over the data that must traverse these points of control by nature of network design. These points have become, over time, strategic in terms of the ability to consistently apply policies to data in as operationally efficient a manner as possible. Thus have these virtualization layers become “strategic points of control”.

And you thought the term was just another square on the buzz-word bingo card, didn’t you?

F5 BIG-IP Platform Security

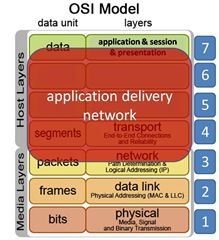

When creating any security-enabled network device, development teams must fully investigate the security of the device itself to ensure it cannot be compromised. A gate provides no security to a house if the gap between the bars is large enough to drive a truck through. Many highly effective exploits have breached the very software and hardware that are designed to protect against them. If an attacker can breach the guards, then they don’t need to worry about being stealthy, meaning if one can compromise the box, then they probably can compromise the code.

F5 BIG-IP Application Delivery Controllers are positioned at strategic points of control to manage an organization’s critical information flow. In the BIG-IP product family and the TMOS operating system, F5 has built and maintained a secure and robust application delivery platform, and has implemented many different checks and counter-checks to ensure a totally secure network environment. Application delivery security includes providing protection to the customer’s Application Delivery Network (ADN), and mandatory and routine checks against the stack source code to provide internal security. It starts with a secure Application Delivery Controller.

The BIG-IP system and TMOS are designed so that the hardware and software work together to provide the highest level of security. While there are many factors in a truly secure system, two of the most important are design and coding. Sound security starts early in the product development process. Before writing a single line of code, F5 Product Development goes through a process called threat modeling. Engineers evaluate each new feature to determine what vulnerabilities it might create or introduce to the system. F5’s rule of thumb is that a vulnerability that takes one hour to fix at the design phase will take ten hours to fix in the coding phase and one thousand hours to fix after the product is shipped, so it’s critical to catch vulnerabilities during the design phase.
The sum of all these vulnerabilities is called the threat surface, which F5 strives to minimize. F5, like many companies that develop software, has invested heavily in training internal development staff on writing secure code. Security testing is time-consuming and a huge undertaking, but it’s a critical part of meeting F5’s stringent standards and its commitment to customers.

This is by no means an exhaustive list, but the BIG-IP system has a number of features that provide heightened and hardened security: Appliance mode, iApp Templates, FIPS and Secure Vault.

Appliance Mode

Beginning with version 10.2.1-HF3, the BIG-IP system can run in Appliance mode. Appliance mode is designed to meet the needs of customers in industries with especially sensitive data, such as healthcare and financial services, by limiting BIG-IP system administrative access to match that of a typical network appliance rather than a multi-user UNIX device. The optional Appliance mode “hardens” BIG-IP devices by removing advanced shell (Bash) and root-level access. Administrative access is available through the TMSH (TMOS Shell) command-line interface and GUI. When Appliance mode is licensed, any user that previously had access to the Bash shell will now only have access to the TMSH. The root account home directory (/root) file permissions have been tightened for numerous files and directories. By default, new files are now only user readable and writeable and all directories are better secured.

iApp Templates

Introduced in BIG-IP v11, F5 iApps is a powerful new set of features in the BIG-IP system. It provides a new way to architect application delivery in the data center, and it includes a holistic, application-centric view of how applications are managed and delivered inside, outside, and beyond the data center.
iApps provide a framework that application, security, network, systems, and operations personnel can use to unify, simplify, and control the entire ADN with a contextual view and advanced statistics about the application services that support business. iApps are designed to abstract the many individual components required to deliver an application by grouping these resources together in templates associated with applications; this alleviates the need for administrators to manage discrete components on the network. F5’s new NIST 800-53 iApp Template helps organizations become NIST-compliant. F5 has distilled the 240-plus pages of guidance from NIST into a template with the relevant BIG-IP configuration settings, saving organizations hours of management time and resources.

Federal Information Processing Standards (FIPS)

Developed by the National Institute of Standards and Technology (NIST), Federal Information Processing Standards are used by United States government agencies and government contractors in non-military computer systems. The FIPS 140 series are U.S. government computer security standards that define requirements for cryptography modules, including both hardware and software components, for use by departments and agencies of the United States federal government. The requirements cover not only the cryptographic modules themselves but also their documentation. As of December 2006, the current version of the standard is FIPS 140-2.

A hardware security module (HSM) is a secure physical device designed to generate, store, and protect digital, high-value cryptographic keys. It is a secure crypto-processor that often comes in the form of a plug-in card (or other hardware) with tamper protection built in. HSMs also provide the infrastructure for finance, government, healthcare, and others to conform to industry-specific regulatory standards.
FIPS 140 enforces stronger cryptographic algorithms, provides good physical security, and requires power-on self tests to ensure a device is still in compliance before operating. FIPS 140-2 evaluation is required to sell products implementing cryptography to the federal government, and the financial industry is increasingly specifying FIPS 140-2 as a procurement requirement.

The BIG-IP system includes a FIPS cryptographic/SSL accelerator, an HSM option specifically designed for processing SSL traffic in environments that require FIPS 140-1 Level 2–compliant solutions. Many BIG-IP devices are FIPS 140-2 Level 2–compliant. This security rating indicates that once sensitive data is imported into the HSM, it incorporates cryptographic techniques to ensure the data is not extractable in a plain-text format. It provides tamper-evident coatings or seals to deter physical tampering. The BIG-IP system includes the option to install a FIPS HSM (BIG-IP 6900, 8900, 11000, and 11050 devices). BIG-IP devices can be customized to include an integrated FIPS 140-2 Level 2–certified SSL accelerator. Other solutions require a separate system or a FIPS-certified card for each web server, but the BIG-IP system’s unique key management framework enables a highly scalable secure infrastructure that can handle higher traffic levels and to which organizations can easily add new services. Additionally, the FIPS cryptographic/SSL accelerator uses smart cards to authenticate administrators, grant access rights, and share administrative responsibilities to provide a flexible and secure means for enforcing key management security.

Secure Vault

It is generally a good idea to protect SSL private keys with passphrases. With a passphrase, private key files are stored encrypted on non-volatile storage. If an attacker obtains an encrypted private key file, it will be useless without the passphrase.
In PKI (public key infrastructure), the public key enables a client to validate the integrity of something signed with the private key, and the hashing enables the client to validate that the content was not tampered with. Since the private key of the public/private key pair could be used to impersonate a valid signer, it is critical to keep those keys secure. Secure Vault, a super-secure SSL-encrypted storage system introduced in BIG-IP version 9.4.5, allows passphrases to be stored in an encrypted form on the file system. In BIG-IP version 11, companies now have the option of securing their cryptographic keys in hardware, such as a FIPS card, rather than encrypted on the BIG-IP hard drive. Secure Vault can also encrypt certificate passwords for enhanced certificate and key protection in environments where FIPS 140-2 hardware support is not required, but additional physical and role-based protection is preferred. In the absence of hardware support like FIPS/SEEPROM (Serial (PC) Electrically Erasable Programmable Read-Only Memory), Secure Vault will be implemented in software. Even if an attacker removed the hard disk from the system and painstakingly searched it, it would be nearly impossible to recover the contents due to Secure Vault AES encryption. Each BIG-IP device comes with a unit key and a master key. Upon first boot, the BIG-IP system automatically creates a master key for the purpose of encrypting, and therefore protecting, key passphrases. The master key encrypts SSL private keys, decrypts SSL key files, and synchronizes certificates between BIG-IP devices. Further increasing security, the master key is also encrypted by the unit key, which is an AES 256 symmetric key. When stored on the system, the master key is always encrypted with a hardware key, and never in the form of plain text. Master keys follow the configuration in an HA (high-availability) configuration so all units would share the same master key but still have their own unit key. 
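The layering described above (a unit key protecting a master key, which in turn protects key passphrases) can be illustrated with a small sketch. Everything here is an assumption for illustration and says nothing about how Secure Vault is actually implemented: the `wrap` and `unwrap` helpers are hypothetical, and the cipher is a toy SHA-256 keystream used only so the example runs with the Python standard library. It is not AES and must not be used to protect real data.

```python
import hashlib
import hmac
import secrets


def _keystream(key: bytes, nonce: bytes, length: int) -> bytes:
    """Toy keystream from SHA-256 in counter mode. A stand-in for AES only."""
    out = b""
    counter = 0
    while len(out) < length:
        out += hashlib.sha256(key + nonce + counter.to_bytes(4, "big")).digest()
        counter += 1
    return out[:length]


def wrap(key: bytes, plaintext: bytes) -> bytes:
    """Encrypt and tag plaintext under key. Layout: nonce | ciphertext | tag."""
    nonce = secrets.token_bytes(16)
    stream = _keystream(key, nonce, len(plaintext))
    ciphertext = bytes(p ^ s for p, s in zip(plaintext, stream))
    tag = hmac.new(key, nonce + ciphertext, hashlib.sha256).digest()
    return nonce + ciphertext + tag


def unwrap(key: bytes, blob: bytes) -> bytes:
    """Verify the tag, then decrypt. Raises ValueError for a wrong key."""
    nonce, ciphertext, tag = blob[:16], blob[16:-32], blob[-32:]
    expected = hmac.new(key, nonce + ciphertext, hashlib.sha256).digest()
    if not hmac.compare_digest(tag, expected):
        raise ValueError("wrong key or tampered data")
    stream = _keystream(key, nonce, len(ciphertext))
    return bytes(c ^ s for c, s in zip(ciphertext, stream))


# Hypothetical hierarchy mirroring the text: unit key -> master key -> passphrase.
unit_key = secrets.token_bytes(32)    # per-device key, 256 bits as in the text
master_key = secrets.token_bytes(32)  # shared across an HA group in the text

stored_master = wrap(unit_key, master_key)  # what would sit on disk
stored_passphrase = wrap(master_key, b"s3cret-passphrase")

# Recovering the passphrase needs both layers, starting from the unit key.
recovered_master = unwrap(unit_key, stored_master)
print(unwrap(recovered_master, stored_passphrase))
```

The design point is that nothing on disk is usable on its own: the stored passphrase needs the master key, and the stored master key needs the unit key, which the text places in hardware where available.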
The master key gets synchronized using the secure channel established by the CMI Infrastructure as of BIG-IP v11. The master key encrypted passphrases cannot be used on systems other than the units for which the master key was generated.

Secure Vault support has also been extended for vCMP guests. vCMP (Virtual Clustered Multiprocessing) enables multiple instances of BIG-IP software to run on one device. Each guest gets its own unit key and master key. The guest unit key is generated and stored at the host, thus enforcing the hardware support, and it’s protected by the host master key, which is in turn protected by the host unit key in hardware.

Finally, F5 provides Application Delivery Network security to protect the most valuable application assets. To provide organizations with reliable and secure access to corporate applications, F5 must carry the secure application paradigm all the way down to the core elements of the BIG-IP system. It’s not enough to provide security to application transport; the transporting appliance must also provide a secure environment. F5 ensures BIG-IP device security through various features and a rigorous development process. It is a comprehensive process designed to keep customers’ applications and data secure. The BIG-IP system can be run in Appliance mode to lock down configuration within the code itself, limiting access to certain shell functions; Secure Vault secures precious keys from tampering; and optional FIPS cards ensure organizations can meet or exceed particular security requirements. An ADN is only as secure as its weakest link. F5 ensures that BIG-IP Application Delivery Controllers use an extremely secure link in the ADN chain.
ps

Resources:

• F5 Security Solutions
• Security is our Job (Video)
• F5 BIG-IP Platform Security (Whitepaper)
• Security, not HSMs, in Droves
• Sometimes It Is About the Hardware
• Investing in security versus facing the consequences | Bloor Research White Paper
• Securing Your Enterprise Applications with the BIG-IP (Whitepaper)
• TMOS Secure Development and Implementation (Whitepaper)
• BIG-IP Hardware Updates – SlideShare Presentation
• Audio White Paper - Application Delivery Hardware A Critical Component
• F5 Introduces High-Performance Platforms to Help Organizations Optimize Application Delivery and Reduce Costs

Self Serve Security

Education of users has become a hot topic of late. The final keynote at the recent RSA Conference was all about using education to combat cybercrime. This article has statistics showing that, when Small and Mid-Market companies were asked ‘what would help improve the level of security at their companies,’ 75% (48% for employees & another 25% for senior management) said Security Awareness. And the recent issue of SC Magazine featured an article in which Dan Beard, the Chief Administrative Officer for the House of Representatives, says that organizations must educate end users and that end user education is the weakest link in cyber security. In that same article, Stephen Scharf, CISO at Experian, explains: “The human element is the largest security risk in any organization,”…“Most security incidents are the result of human errors and human ignorance and not malicious intent. Therefore, it is critical that significant effort is focused on education and awareness to reduce these occurrences.”

The human element has always played a role in security, cyber or otherwise. Growing up in Rhode Island, we used to always leave the keys in the ignition of the vehicles parked in our driveway. We felt safe where we lived, and granted, we lived in a rural area so the main crimes committed were things like stealing eggs from Carpenter’s Farm. Certainly, there are still plenty of areas and towns that have that type of cocoon. As I went off to college in Milwaukee, I had to remind myself early on, ‘you’re not in Wakefield anymore,’ since I’d instinctively leave my wallet crammed in the sun visor of my Rabbit Diesel. I had to change my behavior when I moved from a small rural area to a larger city. Internet users must do the same, but we are creatures of habit. Similar to leaving a wallet in the car, since that’s what I did for most of my driving life up to that point, many internet users still behave as if it’s 1995 and they are still on Prodigy.
The threats are different and more severe but behavior is the same. Times change but sometimes people don’t, won’t or can’t. As all those articles point out, End User Education is vitally important to any organization and should be a key part of the overall IT security strategy. Users knowing what and what not to do when something seems fishy is an important part of your defense – especially when it’s something your firewalls, WAFs, IDS/IPS and other perimeter mechanisms might have missed. Education needs to be ongoing however and not a one shot deal since, according to Dr. Maxwell Maltz, it takes 21 days to make or break a habit. This has since been deemed a myth and everyone is different but it does bring up a good point. Security education, training and knowledge is not an overnight cram session – any security professional will attest to that. A single afternoon meeting going over ‘corporate policies for end users’ regarding information security will not help those who already have bad habits. It needs to be ongoing, consistent and relevant to their daily lives, including the serious consequences of poor behavior. Help users understand the risks/threats, break the bad habits that might lead to exposure and secure your infrastructure in a way that no piece of hardware/software can. Help users help themselves. While not directly security related, F5 recently started offering Free Web Based Training for our end users. IT admins are end users too, ya know. F5 Networks Web-Based Training (WBT) courses introduce you to basic technology concepts related to F5 technology, recent changes to F5 products and basic configurations for BIG-IP Local Traffic Manager (LTM). These are self-paced and you can access them at any time and as many times as you like. The cool thing is if you complete all of the lectures and labs for the LTM Essentials WBT, you have met the prerequisite requirements for the Advanced Topics, Troubleshooting, and iRules classes. 
ps

Related Items:

• F5 Networks Web-Based Training
• It all comes down to YOU - The User
• Weakest link: End-user education
• Information security policies upended by untrained end users
• Update your security lessons for end-users
• The Hugh Thompson Show (RSA)
• FREE TRAINING!!! …in case you didn’t know

BYOD Policies – More than an IT Issue Part 5: Trust Model

#BYOD or Bring Your Own Device has moved from trend to a permanent fixture in today's corporate IT infrastructure. It is not strictly an IT issue, however. Many groups within an organization need to be involved as they grapple with the risk of mixing personal devices with sensitive information. In my opinion, BYOD follows the classic Freedom vs. Control dilemma: the freedom for users to choose and use their device of choice versus an organization's responsibility to protect and control access to sensitive resources. While not having all the answers, this mini-series tries to ask many of the questions that any organization needs to answer before embarking on a BYOD journey.

Enterprises should plan for rather than inherit BYOD. BYOD policies must span the entire organization but serve two purposes: IT and the employees. The policy must serve IT to secure the corporate data and minimize the cost of implementation and enforcement. At the same time, the policy must serve the employees to preserve the native user experience, keep pace with innovation and respect the user's privacy. A sustainable policy should communicate a clear BYOD plan to employees, including standards on the acceptable device types and mobile operating systems, along with a support policy showing how the device is managed and operated.

Some key policy issue areas include: Liability, Device Choice, Economics, User Experience & Privacy and a Trust Model. Today we look at Trust Model.

Trust Model

Organizations will either have a BYOD policy or forbid the use of personal devices altogether. If they do neither, one of two things can happen: personal devices are being blocked and organizations are losing productivity, OR the personal devices are accessing the network (with or without an organization's consent) and nothing is being done pertaining to security or compliance. Ensure employees understand what can and cannot be accessed with personal devices, along with the risks (to both users and IT) associated with such access.
While having a written policy is great, it still must be enforced. Define what is ‘Acceptable use.’ According to a recent Ponemon Institute and Websense survey, while 45% do have a corporate use policy, less than half of those actually enforce it. And according to a recent SANS Mobility BYOD Security Survey, fewer than 20% are using end point security tools, and out of those, more are using agent-based tools rather than agent-less. According to the survey, 17% say they have stand-alone BYOD security and usage policies; 24% say they have BYOD policies added to their existing policies; 26% say they "sort of" have policies; 3% don't know; and 31% say they do not have any BYOD policies. Over 50% say employee education is one way they secure the devices, and 73% include user education with other security policies.

Organizations should ensure procedures are in place (and understood) for cases such as an employee leaving the company; what happens when a device is lost or stolen (ramifications of remote wiping a personal device); what types/strength of passwords are required; record retention and destruction; the allowed types of devices; and what types of encryption are used. Organizations need to balance the acceptance of consumer-focused smartphones/tablets with control of those devices to protect their networks. Organizations need to have a complete inventory of employees' personal devices, at least the ones requesting access. Organizations need the ability to enforce mobile policies and secure the devices. Organizations need to balance the company's security with the employee's privacy, such as off-hours browsing activity on a personal device.

Whether an organization is prepared or not, BYOD is here. It can potentially be a significant cost savings and productivity boost for organizations, but it is not without risk. To reduce the business risk, enterprises need to have a solid BYOD policy that encompasses the entire organization. And it must be enforced.
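The procedures listed above (allowed device types, password strength, encryption, lost or stolen devices) lend themselves to an automated check against the device inventory. The following is a minimal sketch under stated assumptions: the `Device` fields, the allowed operating systems, the minimum passcode length, and the action names are all hypothetical values chosen for illustration, not taken from any survey or product.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Device:
    """One inventoried personal device requesting access (hypothetical fields)."""
    owner: str
    os_name: str
    encrypted: bool
    passcode_length: int
    reported_lost: bool = False


# Hypothetical policy values; a real policy would come from the organization.
ALLOWED_OS = {"iOS", "Android"}
MIN_PASSCODE_LENGTH = 6


def violations(device: Device) -> List[str]:
    """Return every written-policy rule the device currently breaks."""
    found = []
    if device.os_name not in ALLOWED_OS:
        found.append("device type not allowed")
    if not device.encrypted:
        found.append("storage not encrypted")
    if device.passcode_length < MIN_PASSCODE_LENGTH:
        found.append("passcode too short")
    return found


def decide(device: Device) -> str:
    """Map the device state to an enforcement action."""
    if device.reported_lost:
        # Lost or stolen: remove corporate data rather than just block access.
        return "wipe_corporate_data"
    return "quarantine" if violations(device) else "allow"


inventory = [
    Device("ann", "iOS", encrypted=True, passcode_length=8),
    Device("bob", "Symbian", encrypted=False, passcode_length=4),
    Device("cy", "Android", encrypted=True, passcode_length=8, reported_lost=True),
]
for device in inventory:
    print(device.owner, decide(device), violations(device))
```

Running the check on every access request, rather than once at enrollment, is what turns a written policy into an enforced one.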
Companies need to understand:

• The trust level of a mobile device is dynamic
• Identify and assess the risk of personal devices
• Assess the value of apps and data
• Define remediation options
  • Notifications
  • Access control
  • Quarantine
  • Selective wipe
• Set a tiered policy

Part of me feels we’ve been through all this before with personal computer access to the corporate network during the early days of SSL-VPN, and many of the same concepts/controls/methods are still in place today supporting all types of personal devices. Obviously, there are a bunch of new risks, threats and challenges with mobile devices, but some of the same concepts apply: enforce policy and manage/mitigate risk. As organizations move to BYOD, F5 has the Unified Secure Access Solutions to help.

ps

Related:

• BYOD Policies – More than an IT Issue Part 1: Liability
• BYOD Policies – More than an IT Issue Part 2: Device Choice
• BYOD Policies – More than an IT Issue Part 3: Economics
• BYOD Policies – More than an IT Issue Part 4: User Experience and Privacy
• BYOD–The Hottest Trend or Just the Hottest Term
• FBI warns users of mobile malware
• Will BYOL Cripple BYOD?
• Freedom vs. Control
• What’s in Your Smartphone?
• Worldwide smartphone user base hits 1 billion
• SmartTV, Smartphones and Fill-in-the-Blank Employees
• Evolving (or not) with Our Devices
• The New Wallet: Is it Dumb to Carry a Smartphone?
• Bait Phone
• BIG-IP Edge Client 2.0.2 for Android
• BIG-IP Edge Client v1.0.4 for iOS
• New Security Threat at Work: Bring-Your-Own-Network
• Legal and Technical BYOD Pitfalls Highlighted at RSA

Do you control your application network stack? You should.

Owning the stack is important to security, but it’s also integral to a lot of other application delivery functions. And in some cases, it’s downright necessary.

Hoff rants with his usual finesse in a recent posting with which I could not agree more. Not only does he point out the wrongness of equating SaaS with “The Cloud”, but he also points out the importance of “owning the stack” to security.

Those that have control/ownership over the entire stack naturally have the opportunity for much tighter control over the "security" of their offerings. Why? because they run their business and the datacenters and applications housed in them with the same level of diligence that an enterprise would. They have context. They have visibility. They have control. They have ownership of the entire stack.

Owning the stack has broader implications than just security. The control, visibility, and context-awareness implicit in owning the stack provide much more flexibility in all aspects of the delivery of applications. Whether we’re talking about emerging or traditional data center architectures, the importance of owning the application networking stack should not be underestimated.

The arguments over whether virtualized application delivery makes more sense in a cloud computing-based architecture fail to recognize that a virtualized application delivery network forfeits that control over the stack. While it certainly maintains some control at higher levels, it relies upon other software (the virtual machine, hypervisor, and operating system) which shares control of that stack and, in fact, processes all requests before they reach the virtual application delivery controller. This is quite different from a hardened application delivery controller that maintains control over the stack and provides the means by which security, network, and application experts can tweak, tune, and exert that control in myriad ways to better protect their unique environment.
If you don’t completely control layer 4, for example, how can you accurately detect and thus prevent layer 4 focused attacks, such as denial of service and manipulation of the TCP stack? You can’t. If you don’t have control over the stack at the point of entry into the application environment, you are risking a successful attack. As the entry point into the application, whether it’s in “the” cloud, “a” cloud, or a traditional data center architecture, a properly implemented application delivery network can offer the control necessary to detect and prevent myriad attacks at every layer of the stack, without concern that an OS or hypervisor-targeted attack will manage to penetrate before the application delivery network can stop it.

The visibility, control, and contextual awareness afforded by application delivery solutions also provide the means to exercise finer-grained control over protocols, users, and applications in order to improve performance at the network and application layers. As full proxy implementations, these solutions are capable of enforcing compliance with RFCs for protocols up and down the stack, implementing additional technological solutions that improve the efficiency of TCP-based applications, and offering customized solutions through network-side scripting that can be used to immediately address security risks and architectural design decisions.

The importance of owning the stack, particularly at the perimeter of the data center, cannot and should not be underestimated. The loss of control, the addition of processing points at which the stack may be exploited, and the inability to change the very behavior of the stack at the point of entry all come from putting into place solutions incapable of controlling the stack. If you don’t own the stack you don’t have control. And if you don’t have control, who does?

From Car Jacking to Car Hacking

With the promise of self-driving cars just around the corner of the next decade and with researchers already able to remotely apply the brakes and listen to conversations, a new security threat vector is emerging. Computers in cars have been around for a while, and today, with as many as 50 microprocessors per vehicle, they control engine emissions, fuel injectors, spark plugs, anti-lock brakes, cruise control, idle speed, air bags and, more recently, navigation systems, satellite radio, climate control, keyless entry, and much more.

In 2010, a former employee of Texas Auto Center hacked into the dealer’s computer system and remotely activated the vehicle-immobilization system, which engaged the horn and disabled the ignition system of around 100 cars. In many cases, the only way to stop the horns (going off in the middle of the night) was to disconnect the battery. Initially, the organization dismissed it as a mechanical failure, but when they started getting calls from customers, they knew something was wrong. This particular web-based system was meant to get the attention of those who were late on payments, but obviously it was used for something completely different. After a quick investigation, police were able to arrest the man and charge him with unauthorized use of a computer system.

In 2011, University of California - San Diego researchers published a report (pdf) identifying numerous attack vectors, such as CD radios, Bluetooth (we already knew that) and cellular radio, as potential targets. In addition, there are concerns that, in theory, a malicious individual could disable the vehicle or re-route GPS signals, putting transportation (fleet, delivery, rental, law enforcement) employees and customers at risk. Many of these electronic control units (ECUs) can connect to each other and to the internet, so they are vulnerable to the same internet dangers, such as malware, trojans and even DoS attacks.
Those with physical access to your vehicle, like mechanics, valets or others, can access the On-Board Diagnostic System (OBD-II) port, usually located right under the dash. Plug in, and upload your favorite car virus. Tests have shown that if you can infect the diagnostics tools at a dealership, cars connected to the system become infected too. Once infected, the car would contact the researchers’ servers asking for more instructions. At that point, they could activate the brakes, disable the car and even listen to conversations in the car. Imagine driving down a highway, hearing a voice over the speakers and then someone remotely explodes your airbags. They’ve also been able to insert a CD with a malicious file to compromise a radio vulnerability.

Most experts agree that right now it is not something to overly worry about, since many of the previously compromised systems are after-market equipment, the attacks take a lot of time and money, and car manufacturers are already looking into protection mechanisms. But as I’m thinking about current trends like BYOD, it is not far-fetched to imagine a time when your car is VPN’d to the corporate network and you are able to access sensitive info right from the navigation/entertainment/climate control/etc. screen. Many new cars today have USB ports that recognize your mobile device as an AUX input and allow you to talk, play music and carry out other mobile activities right through the car’s system. I’m sure within the next 5 years (or sooner), someone will distribute a malicious mobile app that will infect the vehicle as soon as you connect the USB. Suddenly, buying that ‘84 rust bucket of a Corvette that my neighbor is selling doesn’t seem like that bad of an idea, even with all the C4 issues.

ps

F5 Long Distance VMotion Solution Demo

Watch how F5's WAN Optimization enables long distance VMotion migration between data centers over the WAN. This solution can be automated and orchestrated, and it preserves user sessions and active user connections, allowing seamless migration. Erick Hammersmark, Product Management Engineer, hosts this cool demonstration.

ps

Today’s Target: Corporate Secrets

Intellectual Property is one of a company’s most precious assets and includes things like patents, inventions, designs, source code, trademarks, trade secrets and more. These formulas, processes, practices and other inside information can differentiate your brand and give a competitive edge in the marketplace. Often-cited examples are Coca-Cola’s formula and KFC’s 11 herbs and spices. For technology companies it can be their software, hardware design, development process, roadmaps, patents and other information pertinent to the company. In F5’s case, we own the patent for Cookie Persistence technology and have had to lawfully protect that valuable intellectual property.

A new study from Forrester in conjunction with RSA and Microsoft, entitled The Value of Corporate Secrets (pdf), concludes that while companies do focus on and invest in compliance-driven data security programs like PCI-DSS, they miss the mark on protecting corporate secrets and valuable intellectual property. "Nearly 90% of enterprises we surveyed agreed that compliance with PCI-DSS, data privacy laws, data breach regulations, and existing data security policies is the primary driver of their data security programs. Significant percentages of enterprise budgets (39%) are devoted to compliance-related data security programs," according to Forrester Consulting's study. "But secrets comprise 62% of the overall information portfolio's total value while compliance-related custodial data comprises just 38%, a much smaller proportion. This strongly suggests that investments are overweighed toward compliance." (from the RSA press release)

Companies spend enormous amounts of time and money protecting custodial data (things like medical and card payment information, along with sensitive customer data), as they should and are required to do, yet losing intellectual property or trade secrets can have long-lasting ramifications.
The study indicated that loss of sensitive information from employee theft is 10 times more costly to a company than a single accidental loss – ‘hundreds of thousands versus tens of thousands’, the study says. Also, the more valuable a company’s information, the more frequently it is targeted and attacked.

From the study, the key findings are:
• Secrets comprise two-thirds of the value of firms’ information portfolios.
• Compliance, not security, drives security budgets.
• Firms focus on preventing accidents, but theft is where the money is.
• The more valuable a firm’s information, the more incidents it will have.
• CISOs do not know how effective their security controls actually are.

The study’s key recommendations:
• Identify the most valuable information assets in your portfolio.
• Create a “risk register” of data security risks.
• Assess your program’s balance between compliance and protecting secrets.
• Reprioritize enterprise security investments.
• Increase vigilance of external and third-party business relationships.
• Measure effectiveness of your data security program.

ps